Synthetic Companions

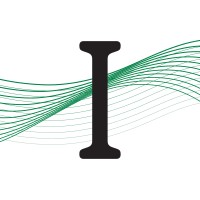

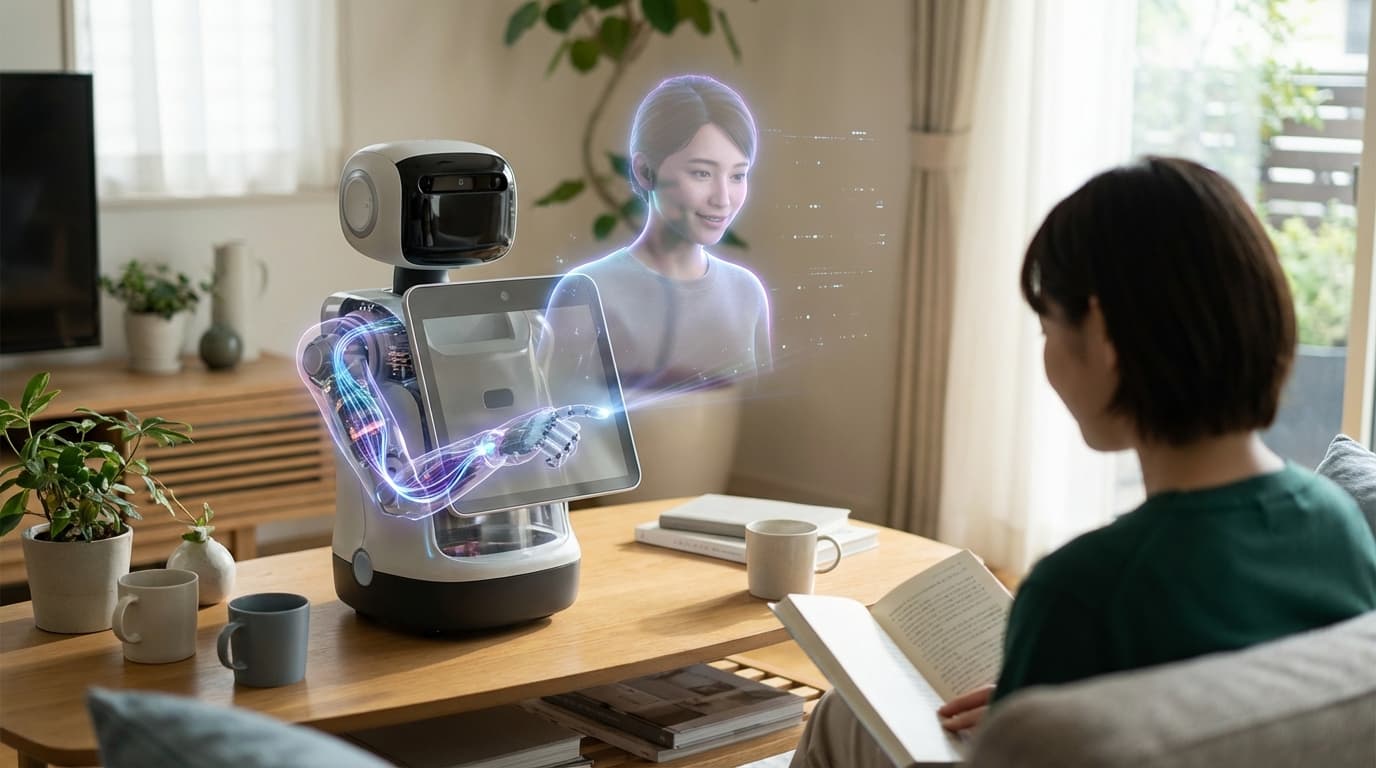

Synthetic companions are AI systems designed for long-term social and emotional relationships with humans, featuring persistent memory of past interactions, stable personality traits, and adaptive communication styles that evolve based on the relationship. These systems can provide emotional support, coaching, conversation, and social engagement, creating the potential for meaningful human-AI relationships that develop over time.
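The three properties named above (persistent memory, stable personality traits, and an adaptive communication style) can be illustrated with a minimal sketch. Everything in it is a hypothetical assumption for illustration: the `Personality` and `Companion` classes, the keyword-based `recall`, and the three-message threshold for switching style do not describe how any real companion product works.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass(frozen=True)
class Personality:
    """Stable traits: frozen, so they cannot change after creation."""
    name: str
    warmth: float  # hypothetical scale, 0.0 (reserved) to 1.0 (very warm)
    humor: float   # hypothetical scale, 0.0 to 1.0


@dataclass
class Companion:
    personality: Personality
    # Persistent memory: a plain record of past user messages.
    memory: List[str] = field(default_factory=list)

    def remember(self, user_message: str) -> None:
        """Store a message so later sessions can refer back to it."""
        self.memory.append(user_message)

    def recall(self, keyword: str) -> List[str]:
        """Return remembered messages containing the keyword (case-insensitive)."""
        needle = keyword.lower()
        return [m for m in self.memory if needle in m.lower()]

    @property
    def style(self) -> str:
        """Adaptive style: formal at first, casual after three remembered messages."""
        return "formal" if len(self.memory) < 3 else "casual"

    def greeting(self) -> str:
        if self.style == "formal":
            return f"Hello, this is {self.personality.name}."
        return f"Hey, it's {self.personality.name}!"

    def to_json(self) -> str:
        """Serialize traits and memory so they survive between sessions."""
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, raw: str) -> "Companion":
        data = json.loads(raw)
        return cls(Personality(**data["personality"]), data["memory"])


companion = Companion(Personality(name="Ava", warmth=0.9, humor=0.4))
print(companion.greeting())  # formal: no shared history yet

for message in ["My dog is called Biscuit",
                "Work was stressful today",
                "I walked the dog this morning"]:
    companion.remember(message)

# Simulate a new session by saving and reloading the companion's state.
restored = Companion.from_json(companion.to_json())
print(restored.greeting())      # casual: the relationship has history
print(restored.recall("dog"))   # the two messages that mention the dog
```

The design choice worth noting is the split between what stays fixed (the frozen `Personality`) and what accumulates (the `memory` list, from which the style is derived), so the companion's character stays consistent while its manner of speaking tracks the relationship.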

Synthetic companions respond to growing concern about loneliness and the unmet need for social connection, while raising profound questions about the nature of relationships, emotional attachment, and human well-being. As these systems become more sophisticated and emotionally engaging, they could provide companionship for isolated individuals, support for mental health, or simply enjoyable social interaction. They also raise concerns about dependency, the authenticity of AI relationships, and potential psychological impacts.

The technology is particularly significant now that loneliness is a growing public health concern and AI is becoming more capable of meaningful social interaction. As synthetic companions get better at understanding and responding to human emotions, they could become important tools for mental health support and social connection. Responsible deployment will depend on designing these systems ethically, so that they do not exploit vulnerable users and genuinely support human well-being rather than fostering unhealthy dependencies.

Related Organizations

United States · Startup

Creator of Replika, the most well-known AI companion app designed for emotional support.

AI companion platform focused on long-term memory and emotional consistency.

Developing an Empathic Voice Interface (EVI) that detects and responds to human emotion.

AI companion app combining visual avatar generation with persistent chat memory.

United States · Startup

Virtual AI friend app focused on roleplay and relationship building.

Creators of Pi, an AI designed to be a supportive and empathetic personal intelligence.

Creates autonomously animated 'Digital People' with simulated nervous systems.

United States · Startup

Developers of Paradot, an AI companion set in a parallel universe context.

A platform for creating AI characters with distinct personalities, memories, and contextual awareness for games and virtual worlds.