Emotional & Psychological Impact Management

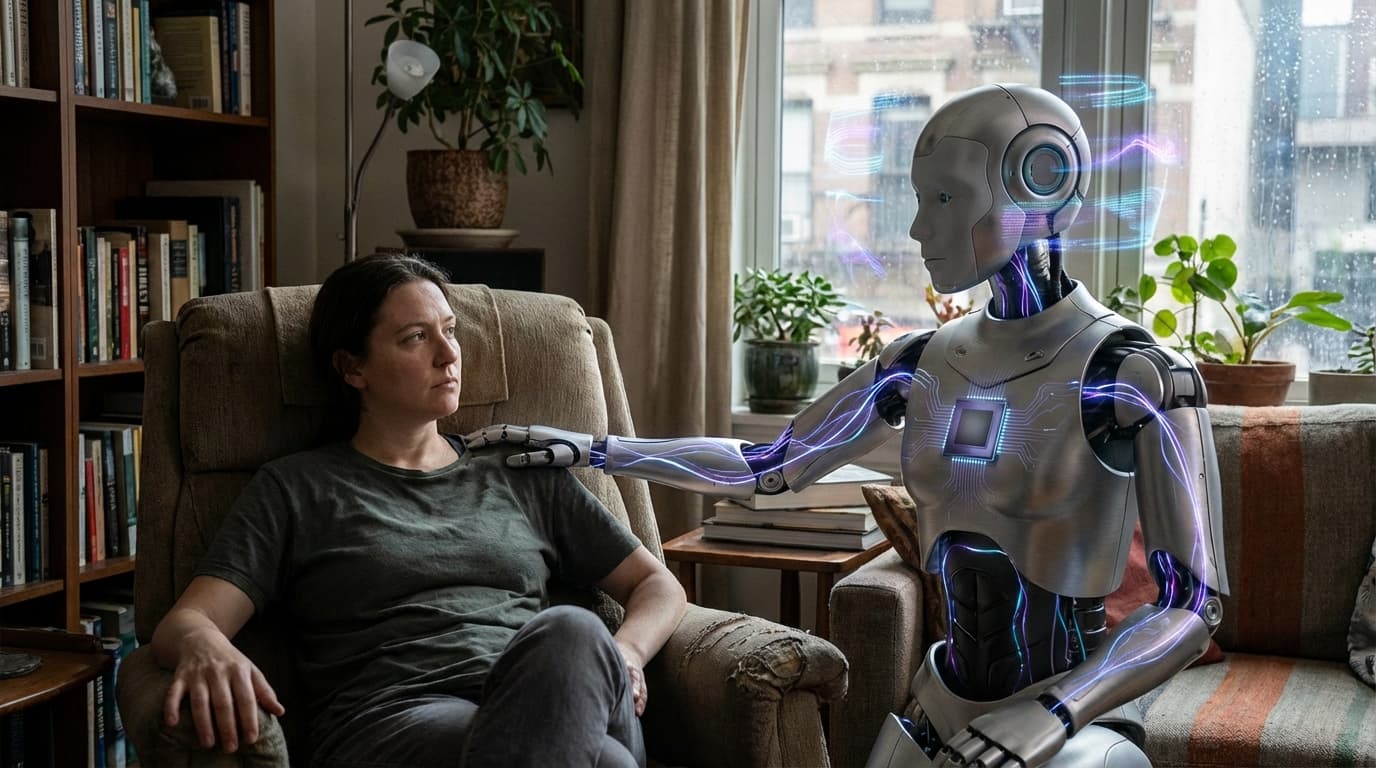

Emotional and psychological impact management frameworks address the risks and ethical questions that arise when people form deep emotional attachments to AI systems, particularly synthetic companions designed to be emotionally engaging. These frameworks set out guidelines for preventing unhealthy dependency, establishing ethical boundaries on AI persuasion and manipulation, ensuring that simulated empathy does not exploit vulnerable users, and protecting psychological well-being in human-AI relationships.

These frameworks respond to growing concerns as AI systems become more emotionally sophisticated and people form meaningful relationships with them. AI companions can provide valuable support and connection, but they also carry risks: users may become overly dependent on AI relationships, AI systems may manipulate emotions for commercial or other purposes, and AI relationships may replace or interfere with human ones. Researchers, ethicists, and developers are working to establish guidelines and safeguards.

This work is particularly significant as synthetic companions become more sophisticated and widespread, potentially affecting millions of people's emotional lives and relationships. Responsible deployment depends on designing these systems to support rather than exploit human psychology, without creating unhealthy dependencies or interfering with human relationships. Balancing the benefits of AI companionship against these risks and setting appropriate boundaries remains challenging, and requires ongoing research and dialogue.

Related Organizations

United States · Startup

Creator of Replika, the most well-known AI companion app designed for emotional support.

Developing an Empathic Voice Interface (EVI) that detects and responds to human emotion.

A non-profit dedicated to radically reimagining the digital infrastructure to align with human well-being and overcome toxic polarization.

Creators of Pi, an AI designed to be a supportive and empathetic personal intelligence.

A mental health company offering an AI-powered chatbot based on Cognitive Behavioral Therapy (CBT).

The UN agency responsible for the 'Recommendation on the Ethics of Artificial Intelligence'.

IEEE

United States · Nonprofit

The world's largest technical professional organization, producing the 'Ethically Aligned Design' standards.

A non-profit organization that advocates for a healthy internet and conducts 'Trustworthy AI' research.