Neural Radiance Fields (NeRFs)

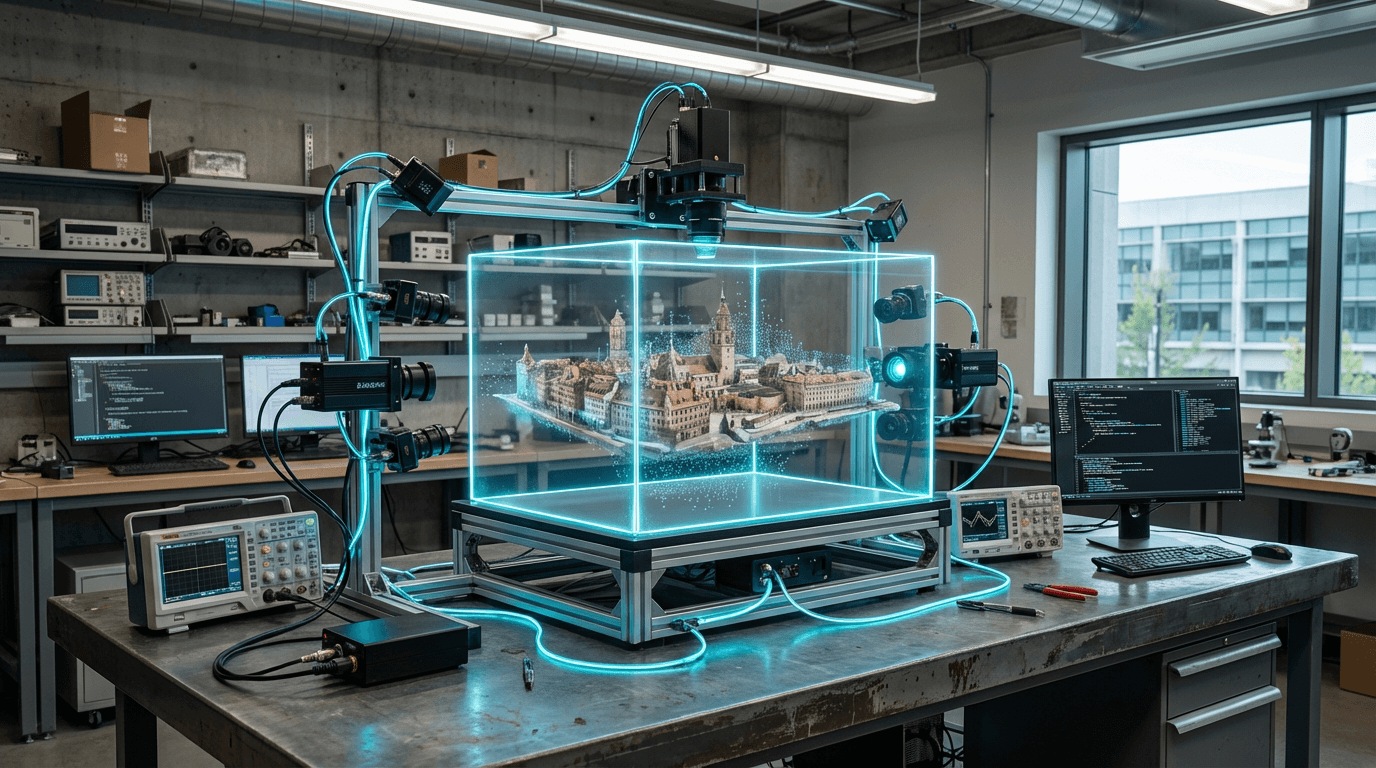

Neural Radiance Fields represent a breakthrough in computer graphics that fundamentally reimagines how three-dimensional scenes can be captured, represented, and rendered. Unlike traditional 3D modeling approaches that rely on explicit geometric representations like meshes or point clouds, NeRFs employ neural networks to encode the volumetric properties of a scene: how much light each point in space emits toward each viewing direction, and how dense the scene is at that point. The technology works by training a neural network on a collection of 2D photographs taken from different viewpoints, so that it learns to predict the emitted color and volume density at any position in the scene, with the color also depending on the direction from which that position is viewed. This implicit representation allows the system to interpolate smoothly between known viewpoints, generating photorealistic novel perspectives that were never directly photographed. The neural network essentially learns a continuous function that maps a 5D coordinate (a 3D spatial location plus a 2D viewing direction) to a color and a density value. An image is produced by accumulating these values along the camera ray behind each pixel, which enables the reconstruction of complex lighting effects, reflections, and fine details that would be extraordinarily difficult to model using conventional techniques.
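To make the mapping from coordinates to pixels more concrete, the following is a minimal sketch in Python with NumPy, not a production implementation. It assumes a hypothetical `toy_radiance_field` function standing in for the trained network (a solid red sphere rather than a learned scene), passes the viewing direction as a 3D unit vector instead of two angles, and uses evenly spaced samples along each ray. The helper names, the sample count, and the near and far bounds are all illustrative choices.

```python
import numpy as np

def toy_radiance_field(points, view_dir):
    """Hypothetical stand-in for a trained NeRF network.

    Takes 3D sample positions and a viewing direction, returns (rgb, sigma).
    This toy version is a uniform red sphere of radius 1 at the origin and
    ignores view_dir; a real model would use it for view-dependent effects.
    """
    dist = np.linalg.norm(points, axis=-1)
    sigma = np.where(dist < 1.0, 5.0, 0.0)            # volume density per sample
    rgb = np.tile([1.0, 0.0, 0.0], (len(points), 1))  # constant red color
    return rgb, sigma

def render_ray(origin, direction, field, near=0.0, far=6.0, num_samples=64):
    """Accumulate color along one camera ray by numerical volume rendering."""
    direction = direction / np.linalg.norm(direction)
    t = np.linspace(near, far, num_samples)            # distances along the ray
    delta = np.full(num_samples, (far - near) / (num_samples - 1))
    points = origin + t[:, None] * direction           # (num_samples, 3)

    rgb, sigma = field(points, direction)

    # Probability that the ray is absorbed within each segment.
    alpha = 1.0 - np.exp(-sigma * delta)
    # Fraction of light that survives to reach each sample.
    transmittance = np.cumprod(np.concatenate([[1.0], 1.0 - alpha[:-1]]))
    weights = transmittance * alpha

    color = (weights[:, None] * rgb).sum(axis=0)       # final pixel color
    opacity = weights.sum()                            # accumulated opacity
    return color, opacity

# One ray through the sphere, one ray that misses it entirely.
forward = np.array([0.0, 0.0, 1.0])
hit_color, hit_opacity = render_ray(np.array([0.0, 0.0, -3.0]), forward, toy_radiance_field)
miss_color, miss_opacity = render_ray(np.array([3.0, 3.0, -3.0]), forward, toy_radiance_field)
```

In this sketch the ray through the sphere comes out almost fully opaque and red, while the ray that misses accumulates no color or opacity. In an actual NeRF, the toy function would be replaced by the neural network, and training would adjust its weights until rays rendered this way match the pixels of the input photographs.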

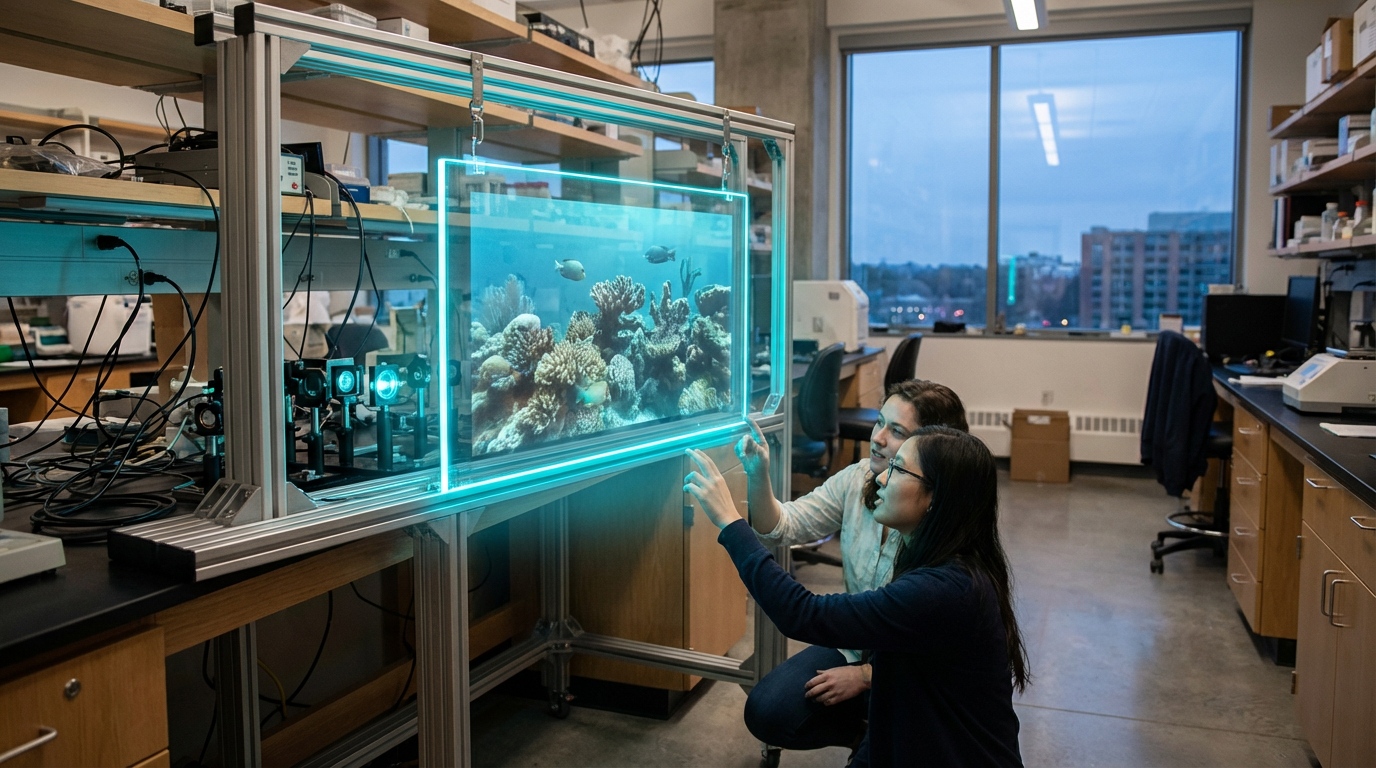

For the entertainment and streaming industries, NeRFs address several critical production challenges that have long constrained creative possibilities and inflated costs. Traditional methods of creating realistic 3D environments for film, television, and gaming require extensive manual modeling, texturing, and lighting work by skilled artists—a process that can take weeks or months for complex scenes. NeRFs dramatically accelerate this pipeline by allowing production teams to capture real-world locations or physical sets with standard cameras and automatically generate fully navigable 3D representations. This capability is particularly transformative for virtual production workflows, where filmmakers need to create convincing digital backgrounds and environments that can be viewed from multiple angles. The technology also enables new forms of volumetric video capture, where performances or real-world scenes can be recorded and replayed from any viewpoint, opening possibilities for immersive storytelling formats that go beyond traditional fixed-camera perspectives.

Early adoption of NeRF technology is already visible across major entertainment studios and streaming platforms exploring next-generation content formats. Production companies are experimenting with NeRFs for creating digital doubles of actors, reconstructing historical locations for period pieces, and generating virtual sets that can be explored interactively in post-production. Gaming studios are investigating how NeRFs can bridge the gap between photorealistic asset creation and real-time rendering, potentially allowing games to feature environments with unprecedented visual fidelity derived from real-world captures. Research groups and technology companies continue to refine the approach, developing faster training methods and real-time rendering techniques that could eventually enable live volumetric broadcasts or interactive streaming experiences where viewers control their perspective within a scene. As computational capabilities advance and the technology matures, NeRFs are positioned to become a foundational tool in the evolution toward more immersive, photorealistic, and spatially flexible entertainment experiences—aligning with broader industry movements toward virtual production, volumetric content, and the convergence of traditional media with interactive formats.

Related Organizations

Co-authors of the original NeRF paper and developers of MultiNeRF and immersive view technologies for Maps.

Creators of Dream Machine, a high-quality video generation model, and 3D capture technology.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

Home to the BAIR lab and researchers like Angjoo Kanazawa who pioneered NeRF technologies.

Specializes in NeRF workflows for Virtual Production, allowing editing of volumetric backgrounds.

Developers of KIRI Engine, a cloud-based photogrammetry and Neural Surface Reconstruction tool.

Social media and camera company developing AR spectacles.

Creators of Skybox AI, which generates 360-degree environments that can be used as inputs for NeRF-based scene reconstruction.