Neural Radiance Fields (NeRF) Streaming

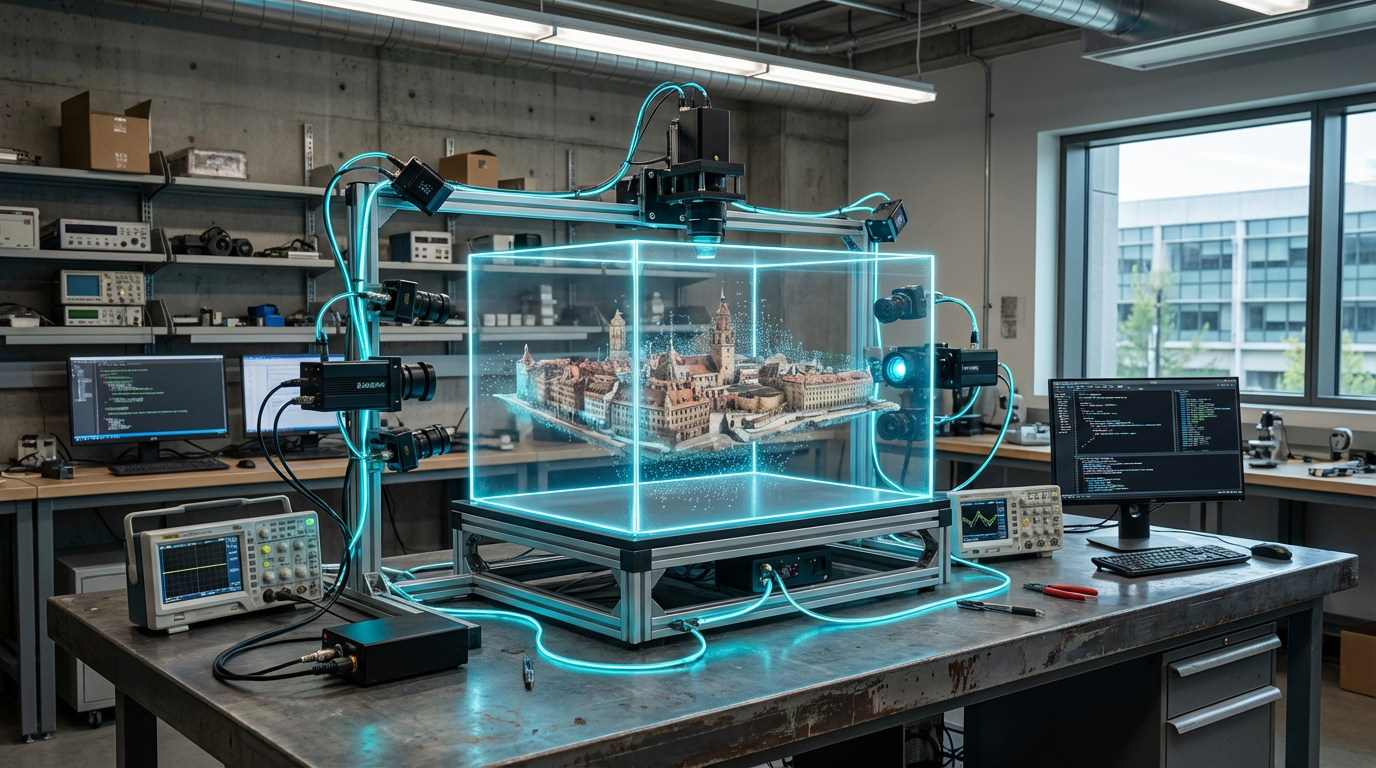

Neural radiance field (NeRF) streaming pipelines reconstruct photoreal environments from sparse photos or LiDAR, then stream the learned volumetric model to clients who render novel viewpoints on-device. Instead of transmitting heavy polygon meshes, servers send compact neural weights and camera poses; client GPUs evaluate the NeRF on demand, aided by tensor cores and real-time denoisers. Hybrid systems pre-bake parts of the field into Gaussian splats or voxels so performance stays consistent on consoles and mobile.
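To make "evaluate the NeRF on demand" concrete, the following is a minimal sketch of the standard volume-rendering step a client performs for each camera ray: density and color samples along the ray are alpha-composited into one pixel color. The function name `composite_ray` and the plain-list inputs are illustrative assumptions. In a real client the densities and colors would come from the streamed network weights, and the loop would run on the GPU.

```python
import math

def composite_ray(densities, colors, deltas):
    """Alpha-composite samples along one ray (standard NeRF quadrature).

    densities: volume density (sigma) at each sample, front to back
    colors:    (r, g, b) at each sample
    deltas:    distance between consecutive samples
    Hypothetical helper for illustration, not taken from any SDK.
    """
    transmittance = 1.0          # fraction of light not yet absorbed
    rgb = [0.0, 0.0, 0.0]
    for sigma, color, delta in zip(densities, colors, deltas):
        # Opacity contributed by this segment of the ray.
        alpha = 1.0 - math.exp(-sigma * delta)
        weight = transmittance * alpha
        for c in range(3):
            rgb[c] += weight * color[c]
        # Light that survives this segment continues to the next sample.
        transmittance *= 1.0 - alpha
    return rgb, transmittance

# Usage: two samples along a ray, the nearer one mostly red.
pixel, remaining = composite_ray(
    densities=[2.0, 5.0],
    colors=[(1.0, 0.0, 0.0), (0.0, 0.0, 1.0)],
    deltas=[0.5, 0.5],
)
```

The returned transmittance indicates how much background would still show through. A renderer can stop sampling early once it approaches zero, which is one of the ways dense fields are kept affordable on weaker devices.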

Game studios use NeRF streaming to drop players into exact replicas of real cities, esports arenas, or branded pop-ups captured hours earlier. UGC creators scan favorite hangouts and host sessions without mastering photogrammetry, and digital tourism platforms let users move seamlessly from one scanned site to another. In competitive play, NeRFs power mixed-reality replays in which analysts freely orbit live-action events.

At TRL 4, the technology still faces runtime costs and tooling gaps: evaluating dense NeRFs stresses GPUs, and authoring workflows must blend neural fields with traditional assets. Standards groups (MPEG-I, Metaverse Standards Forum) are drafting containers and level-of-detail schemes, while companies such as Luma Labs and NVIDIA (with Instant NeRF) release SDKs for games. As accelerators improve and engines offer native NeRF components, streamed neural scenes are likely to become a staple alongside polygons and voxels.

Related Organizations

Originators of the NeRF paper and developers of MultiNeRF and immersive view technologies for Maps.

Creators of Dream Machine, a high-quality video generation model, and 3D capture technology.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

The French National Institute for Research in Digital Science and Technology, heavily involved in AI research and in the development of scikit-learn.

AR platform company that develops the Lightship ARDK and owns Scaniverse, a 3D scanning app leveraging LiDAR.

Specializes in NeRF workflows for Virtual Production, allowing editing of volumetric backgrounds.

Common Sense Machines

United States · Startup

AI company focused on translating the physical world into 3D simulation-ready assets.

Germany · Company

Developers of 'Postshot', a specialized tool for training and rendering Radiance Fields and Gaussian Splats.

Developers of KIRI Engine, a cloud-based photogrammetry and Neural Surface Reconstruction tool.