Real-Time NeRF Engines

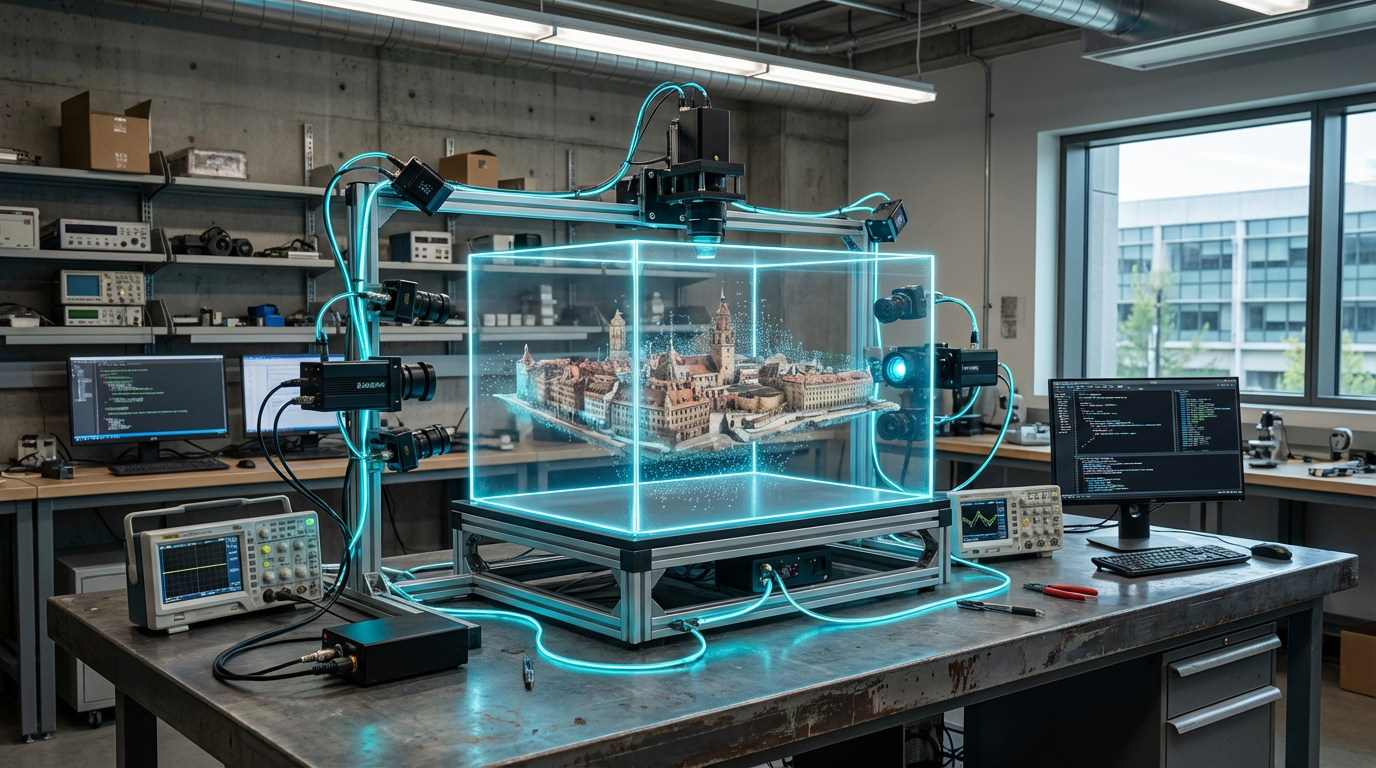

Real-time neural radiance field (NeRF) engines ingest synchronized camera feeds, run differentiable rendering pipelines, and update the radiance field on the fly, so a scene can be re-rendered from a new viewpoint milliseconds after capture. They rely on CUDA kernels, tensor cores, and neural compression to sustain 90+ FPS, and increasingly run on edge appliances installed on the stage itself, which avoids backhauling dozens of camera feeds to the cloud. Post-production pipelines can tap the NeRF through USD or OpenXR endpoints instead of waiting for dense meshes.
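To make the rendering step concrete, here is a minimal sketch of the standard NeRF volume-rendering sum for a single ray, written in plain Python rather than CUDA. The function name `composite_ray` and the sample values are illustrative assumptions, not part of any engine described here; a production engine would run this loop for millions of rays per frame inside fused GPU kernels.

```python
import math

def composite_ray(densities, colors, deltas):
    """Alpha-composite samples along one ray, front to back.

    densities: volume density (sigma) at each sample
    colors:    (r, g, b) emitted at each sample
    deltas:    distance between consecutive samples
    """
    transmittance = 1.0          # fraction of light not yet absorbed
    pixel = [0.0, 0.0, 0.0]
    for sigma, rgb, delta in zip(densities, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)   # opacity of this segment
        weight = transmittance * alpha           # contribution to the pixel
        for ch in range(3):
            pixel[ch] += weight * rgb[ch]
        transmittance *= 1.0 - alpha             # light left for later samples
    return pixel, transmittance

# Hypothetical ray: empty space first, then a nearly opaque red surface.
pixel, remaining = composite_ray(
    densities=[0.0, 1000.0],
    colors=[(0.0, 0.0, 1.0), (1.0, 0.0, 0.0)],
    deltas=[0.1, 0.1],
)
```

The empty first sample contributes nothing, so the pixel comes out red and almost no light remains behind the surface. At 90 FPS the whole frame, including every such ray, has to fit in roughly 1000 / 90 ≈ 11.1 ms, which is why the paragraph above stresses hand-tuned kernels and compression.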

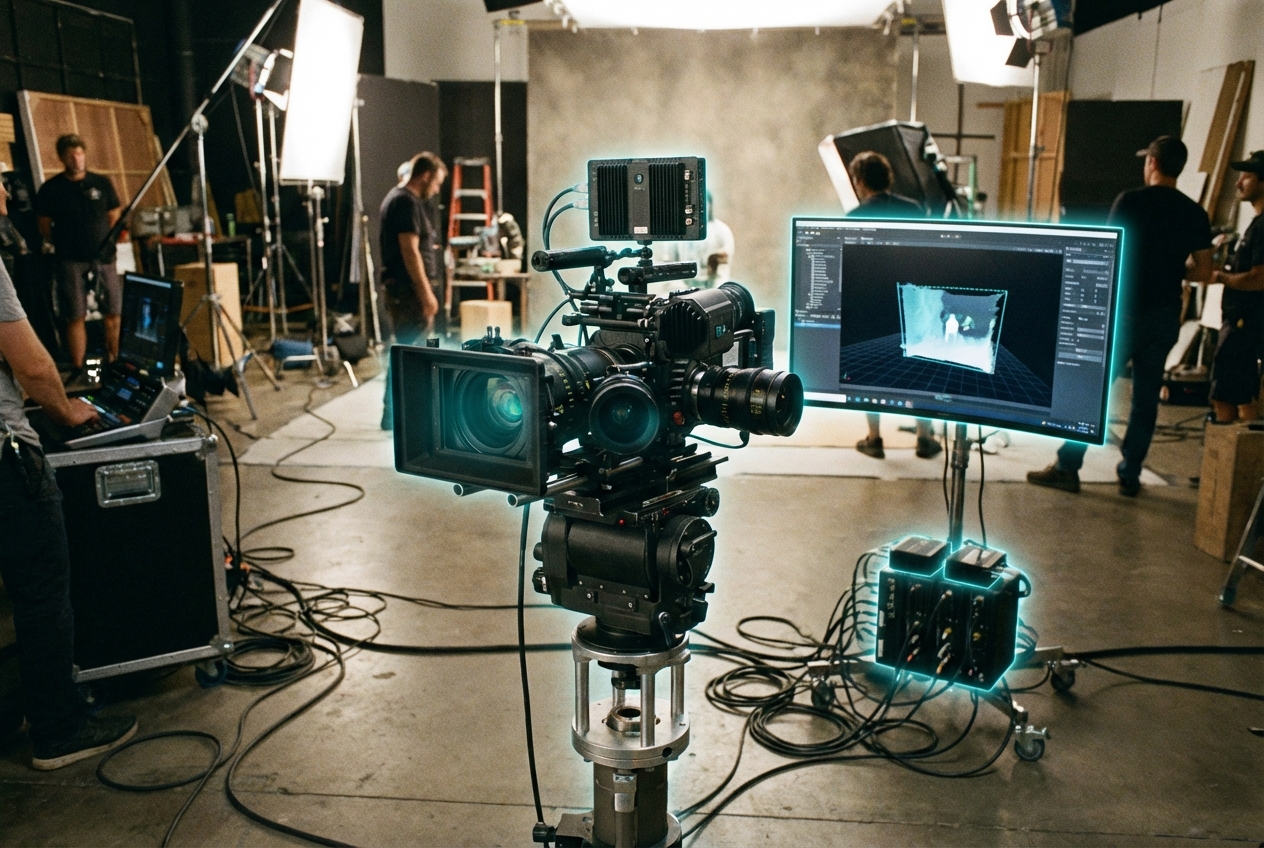

Studios use the technology for live volumetric replays, telepresence, and real-time set extensions. Examples include sports broadcasts where viewers swing behind an athlete mid-play, and newsroom interviews captured volumetrically for later repackaging in XR. Virtual production teams scan practical sets between takes to match CG extensions, and remote collaborators explore scenes in headsets moments after they are shot. Because NeRF rendering is differentiable, VFX teams can in principle adjust lighting and materials by optimizing the neural representation directly rather than re-authoring geometry.
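As a toy illustration of what differentiability buys, the sketch below fits the emitted brightness of a single sample so that the rendered pixel matches a target value, using the analytic gradient of the rendering formula. Everything here (the one-sample ray, `render_pixel`, `fit_emitted`, the density, step size, and target) is a hypothetical simplification; real lighting or material edits operate on far richer representations.

```python
import math

def render_pixel(emitted, sigma=2.0, delta=0.5):
    """Brightness of a one-sample ray: segment opacity times emitted radiance."""
    alpha = 1.0 - math.exp(-sigma * delta)
    return alpha * emitted, alpha

def fit_emitted(target, steps=200, lr=0.5):
    """Gradient descent on the squared error between rendered and target pixel."""
    emitted = 0.0
    for _ in range(steps):
        rendered, alpha = render_pixel(emitted)
        # d(loss)/d(emitted) for loss = (rendered - target)^2, via the chain rule
        grad = 2.0 * (rendered - target) * alpha
        emitted -= lr * grad
    return emitted

fitted = fit_emitted(target=0.5)
```

With these assumed values the sample's opacity is about 0.63, so the fit settles at an emitted brightness of roughly 0.79. The same idea, gradients flowing from pixels back to scene parameters, is what lets an optimizer adjust a neural scene to match a desired look.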

The workflow sits at Technology Readiness Level (TRL) 6: mature enough for pilot episodes but still dependent on specialized talent. Standards groups such as the Metaverse Standards Forum are discussing NeRF interchange formats, and vendors such as NVIDIA and Arcturus, along with startups such as Luma AI, ship turnkey appliances. As GPU prices fall and creative tools add NeRF-native editing, expect live neural reconstruction to become a default option alongside traditional photogrammetry.

Related Organizations

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

Authors of the original NeRF paper and developers of MultiNeRF and immersive view technologies for Maps.

Creators of Dream Machine, a high-quality video generation model, and 3D capture technology.

Specializes in NeRF workflows for Virtual Production, allowing editing of volumetric backgrounds.

Develops technology to capture and stream 3D volumetric video of live events into virtual worlds in real-time.

The French National Institute for Research in Digital Science and Technology, heavily involved in AI research and in the development of scikit-learn.

AR platform company that develops the Lightship ARDK and owns Scaniverse, a 3D scanning app leveraging LiDAR.

Developers of KIRI Engine, a cloud-based photogrammetry and Neural Surface Reconstruction tool.

Developers of Unreal Engine 5, which features Lumen, a fully dynamic global illumination and reflection system designed for next-gen consoles and PC.