Volumetric Capture Rigs

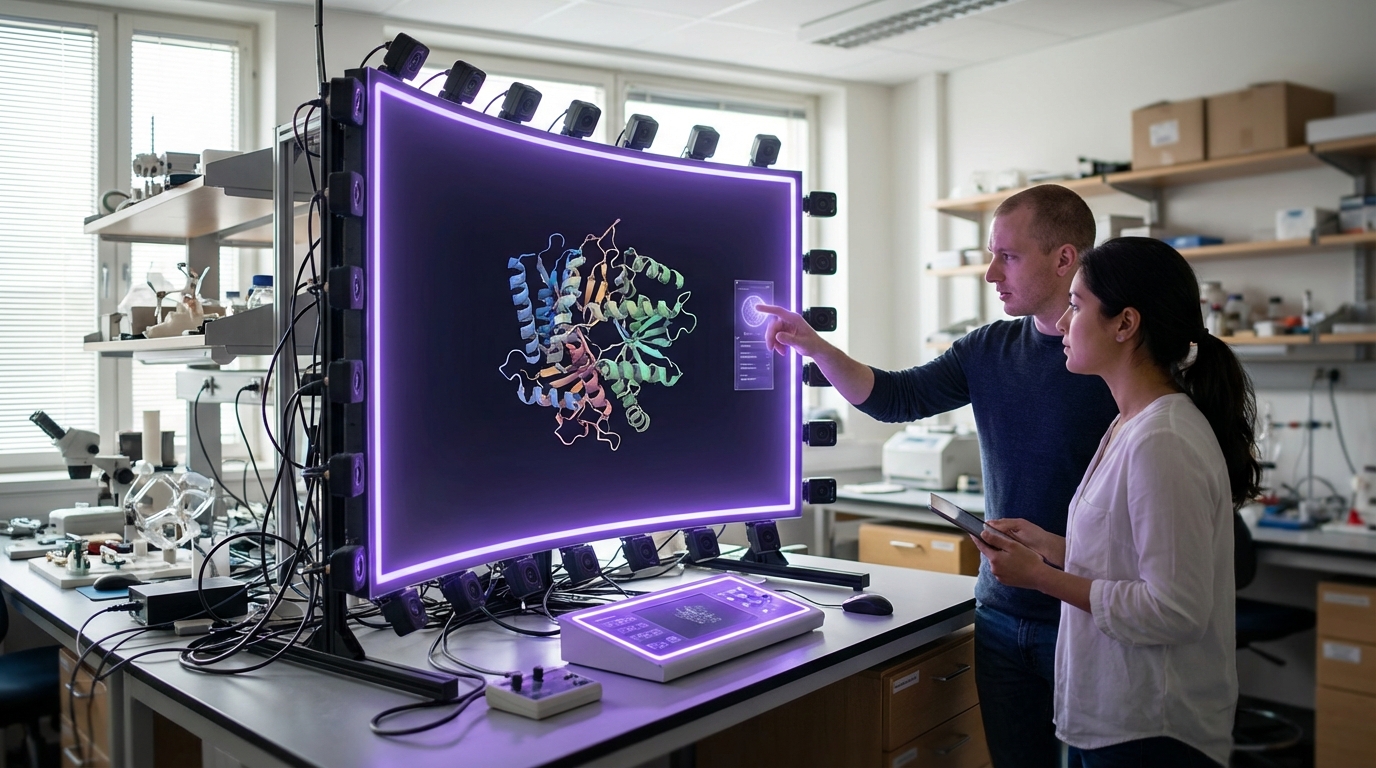

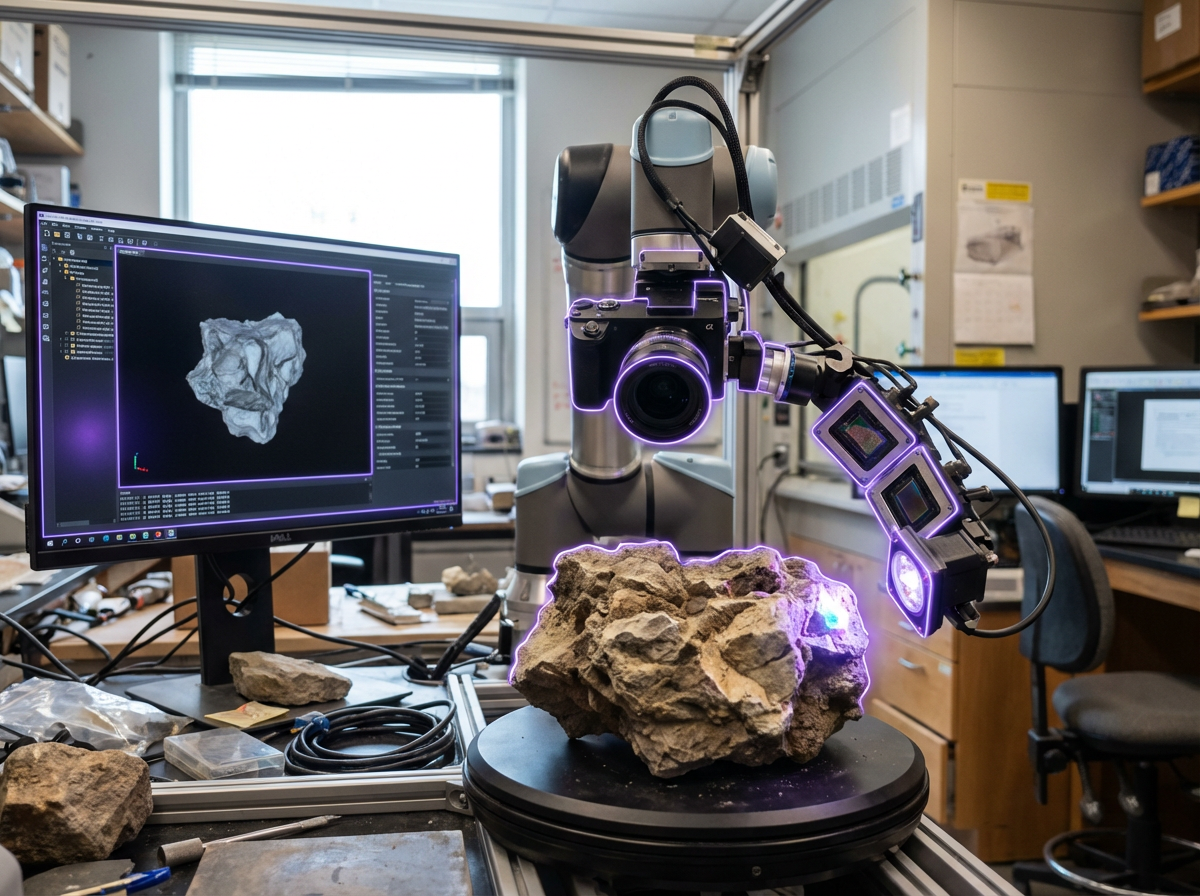

Volumetric capture rigs combine several sensing technologies to record three-dimensional reality as dynamic digital assets. Unlike traditional video, which captures flat images from a single viewpoint, these systems use arrays of synchronized RGB cameras, depth sensors, and lidar units positioned around a subject or environment. The cameras capture color and texture from multiple angles simultaneously, while the depth sensors and lidar measure the spatial coordinates of surfaces and objects in real time. Processing algorithms then fuse these data streams into a unified volumetric representation, essentially a moving point cloud or mesh that preserves the full three-dimensional geometry and appearance of the captured subject. The resulting datasets are time-varying 3D models that viewers can observe from any angle, a form of visual media that sits between traditional video and computer-generated imagery. Some systems operate in fixed studio environments with dozens of precisely calibrated cameras, while emerging portable rigs package this capability into mobile configurations suitable for location shooting.
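The fusion step can be illustrated with a minimal sketch. It assumes each sensor is an already calibrated pinhole RGB-D camera with a known camera-to-world pose, and that all views belong to the same synchronized instant. The `Camera` class and the `backproject`, `to_world`, and `fuse_frame` functions are hypothetical names chosen for illustration, not any vendor's API, and a production pipeline would add noise filtering, deduplication, and meshing on top.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]
Color = Tuple[int, int, int]


@dataclass
class Camera:
    """Hypothetical calibrated RGB-D camera (illustrative, not a real API)."""
    fx: float  # focal length in pixels (x)
    fy: float  # focal length in pixels (y)
    cx: float  # principal point (x)
    cy: float  # principal point (y)
    rotation: Sequence[Sequence[float]]  # 3x3 camera-to-world rotation
    translation: Sequence[float]         # camera position in the world, metres


def backproject(cam: Camera, u: int, v: int, depth: float) -> Point:
    """Turn pixel (u, v) with metric depth into a 3D point in the camera frame."""
    x = (u - cam.cx) * depth / cam.fx
    y = (v - cam.cy) * depth / cam.fy
    return (x, y, depth)


def to_world(cam: Camera, p: Point) -> Point:
    """Apply the camera-to-world pose: R * p + t."""
    r, t = cam.rotation, cam.translation
    return tuple(
        r[i][0] * p[0] + r[i][1] * p[1] + r[i][2] * p[2] + t[i] for i in range(3)
    )


def fuse_frame(views) -> List[Tuple[Point, Color]]:
    """Merge (camera, depth_image, color_image) views from one instant
    into a single colored point cloud in world coordinates."""
    cloud = []
    for cam, depth_image, color_image in views:
        for v, row in enumerate(depth_image):
            for u, d in enumerate(row):
                if d <= 0:  # assumption: zero or negative means "no reading"
                    continue
                point = to_world(cam, backproject(cam, u, v, d))
                cloud.append((point, color_image[v][u]))
    return cloud


# Two cameras facing each other, 2 m apart, both seeing a surface 1 m away.
front = Camera(2.0, 2.0, 0.0, 0.0, [[1, 0, 0], [0, 1, 0], [0, 0, 1]], [0, 0, 0])
back = Camera(2.0, 2.0, 0.0, 0.0, [[-1, 0, 0], [0, 1, 0], [0, 0, -1]], [0, 0, 2])
depth = [[1.0, 0.0]]  # the second pixel has no depth reading
cloud = fuse_frame([
    (front, depth, [[(255, 0, 0), (0, 0, 0)]]),
    (back, depth, [[(0, 0, 255), (0, 0, 0)]]),
])
```

In this toy setup both cameras contribute a point at the same world position, one colored from each side. That agreement between views is what lets a rig assemble a single consistent model from many sensors, and it depends on the calibration and synchronization described above.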

The entertainment and enterprise sectors face persistent challenges in creating realistic digital representations of human performance and physical spaces. Traditional motion capture requires actors to wear specialized suits covered in markers, which limits natural movement and leaves realistic appearance to be added in extensive post-production. Photogrammetry can produce high-quality static 3D models but struggles with moving subjects, because it typically reconstructs geometry from photographs taken over time, during which the subject must hold still. Volumetric capture addresses these limitations by recording geometry and appearance simultaneously, preserving subtle details such as fabric movement, facial expressions, and lighting interactions that are difficult to recreate artificially. For the film and gaming industries, this allows real human performances to be integrated into virtual environments with high fidelity. In enterprise contexts, volumetric capture supports immersive training scenarios in which learners observe complex procedures from optimal vantage points or review recorded events from multiple perspectives. The technology also enables new forms of remote collaboration, where participants appear as realistic three-dimensional presences rather than flat video feeds, preserving spatial relationships and non-verbal communication cues that are lost in conventional video conferencing.

Major production studios have deployed permanent volumetric capture stages for entertainment content, while research institutions and technology companies are exploring applications in medical training, sports analysis, and cultural preservation. Museums and heritage organizations are beginning to use portable volumetric systems to create interactive archives of performances, ceremonies, and historical sites, capturing not just static geometry but the movement and atmosphere of living cultural practices. In the telepresence domain, early deployments suggest that volumetric representations improve the sense of co-presence compared to traditional video, particularly in scenarios requiring spatial reasoning or collaborative physical tasks. As processing capabilities improve and capture systems become more compact and affordable, the technology is expanding beyond specialized studios into broader commercial and educational use. Combined with real-time rendering engines and spatial computing platforms, volumetric capture is positioned as a foundational element of immersive media, where the boundaries between physical and digital presence continue to blur.

Related Organizations

Manufactures the HOLOSYS volumetric capture system used by studios worldwide for high-fidelity 3D video.

A leading volumetric production studio that has produced high-profile volumetric experiences for fashion and music.

A premier volumetric capture stage in Los Angeles, utilizing Microsoft Mixed Reality Capture technology.

Through Copilot and the 'Recall' feature in Windows, Microsoft is integrating persistent memory and agentic capabilities directly into the operating system.

Creators of HoloSuite, a post-production and streaming platform for volumetric video, enabling adaptive streaming of 3D data.

Multinational corporation specializing in optical, imaging, and industrial products.

Provides a software platform for the capture, rendering, and streaming of volumetric video.

Creators of Depthkit, a software tool allowing volumetric capture using accessible depth sensors.

Manufactures compact, high-speed video cameras (Volucam) specifically designed for synchronized volumetric capture arrays.

AI-powered software that enables volumetric capture using standard smartphones rather than expensive studio rigs.