Inverse Rendering Engines

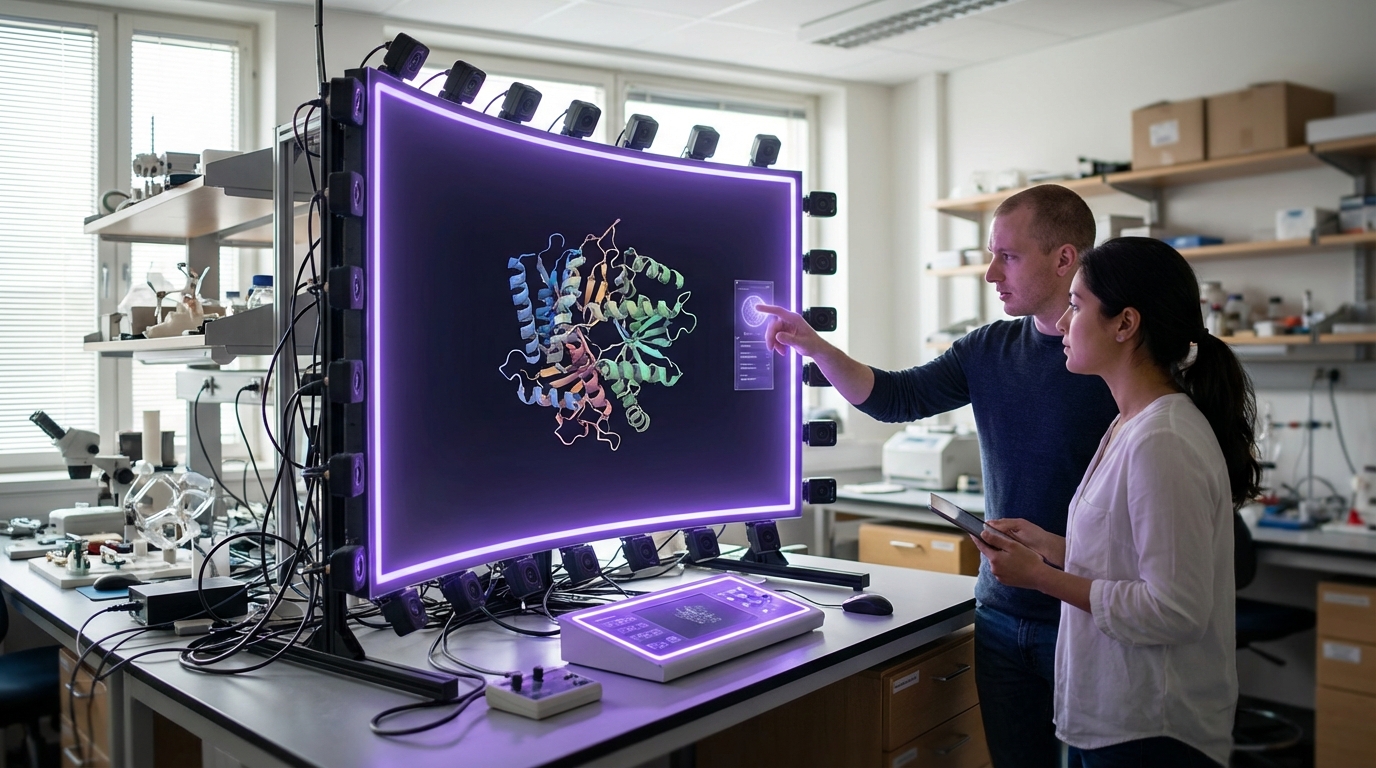

Inverse rendering engines represent a fundamental reversal of the traditional computer graphics pipeline, working backward from captured images to deduce the underlying physical properties that produced them. While conventional rendering synthesizes images from known 3D models, materials, and lighting, inverse rendering analyzes real-world photographs or video to extract these constituent elements—surface reflectance, geometry, illumination conditions, and material characteristics. This process relies on sophisticated machine learning models and physics-based optimization algorithms that iteratively refine estimates of scene properties until they can reproduce the observed images. The technology builds upon decades of computer vision research, combining neural networks trained on vast datasets of materials and lighting conditions with physically-based rendering equations that describe how light interacts with surfaces. By decomposing visual observations into their fundamental components, these engines can infer properties that would otherwise require specialized equipment or manual measurement, such as the roughness of a surface, the index of refraction of glass, or the distribution of light sources in a complex environment.
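To make the "iteratively refine until the renders match" loop concrete, here is a deliberately tiny sketch in Python with NumPy. It assumes a single Lambertian material, known surface normals, one distant light of unit intensity, and noise-free pixels. The function names (`render_lambertian`, `fit_albedo_and_light`, `random_normals`) are hypothetical illustrations, not any engine's API. Practical engines typically optimize much richer models (spatially varying materials, geometry, environment lighting) through a differentiable renderer. The core loop is the same: render, compare with the observed image, and step the parameters along the gradient of the error.

```python
import numpy as np


def render_lambertian(normals, g):
    """Render pixel intensities for a Lambertian surface.

    g = albedo * light_direction (unit light intensity assumed).
    The clamp at zero models surfaces facing away from the light.
    """
    return np.maximum(0.0, normals @ g)


def fit_albedo_and_light(normals, observed, steps=2000, lr=0.5):
    """Analysis-by-synthesis: refine g until the render matches the observed pixels."""
    g = np.array([0.0, 0.0, 0.5])  # initial guess: dim light from the camera direction
    n_pixels = len(observed)
    for _ in range(steps):
        residual = render_lambertian(normals, g) - observed
        lit = normals @ g > 0  # pixels clamped to zero contribute no gradient
        # Gradient of the mean squared error with respect to g.
        grad = 2.0 / n_pixels * (normals[lit].T @ residual[lit])
        g -= lr * grad
    albedo = np.linalg.norm(g)
    return albedo, g / albedo


def random_normals(n, max_angle=np.pi / 6, seed=0):
    """Hypothetical test geometry: unit normals within max_angle of the camera axis."""
    rng = np.random.default_rng(seed)
    theta = rng.uniform(0.0, max_angle, n)
    phi = rng.uniform(0.0, 2.0 * np.pi, n)
    return np.stack(
        [np.sin(theta) * np.cos(phi), np.sin(theta) * np.sin(phi), np.cos(theta)],
        axis=1,
    )


# Synthesize an "observed" image from known ground truth, then recover it.
true_albedo = 0.8
true_light = np.array([0.3, 0.2, 1.0])
true_light /= np.linalg.norm(true_light)
normals = random_normals(500)
observed = render_lambertian(normals, true_albedo * true_light)

albedo, light = fit_albedo_and_light(normals, observed)
print(f"albedo={albedo:.3f}, light={np.round(light, 3)}")
```

Only the product of albedo and light intensity appears in the pixels, so the sketch fixes the intensity at 1. This scale ambiguity is one reason practical systems lean on priors learned from data rather than on the image alone.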

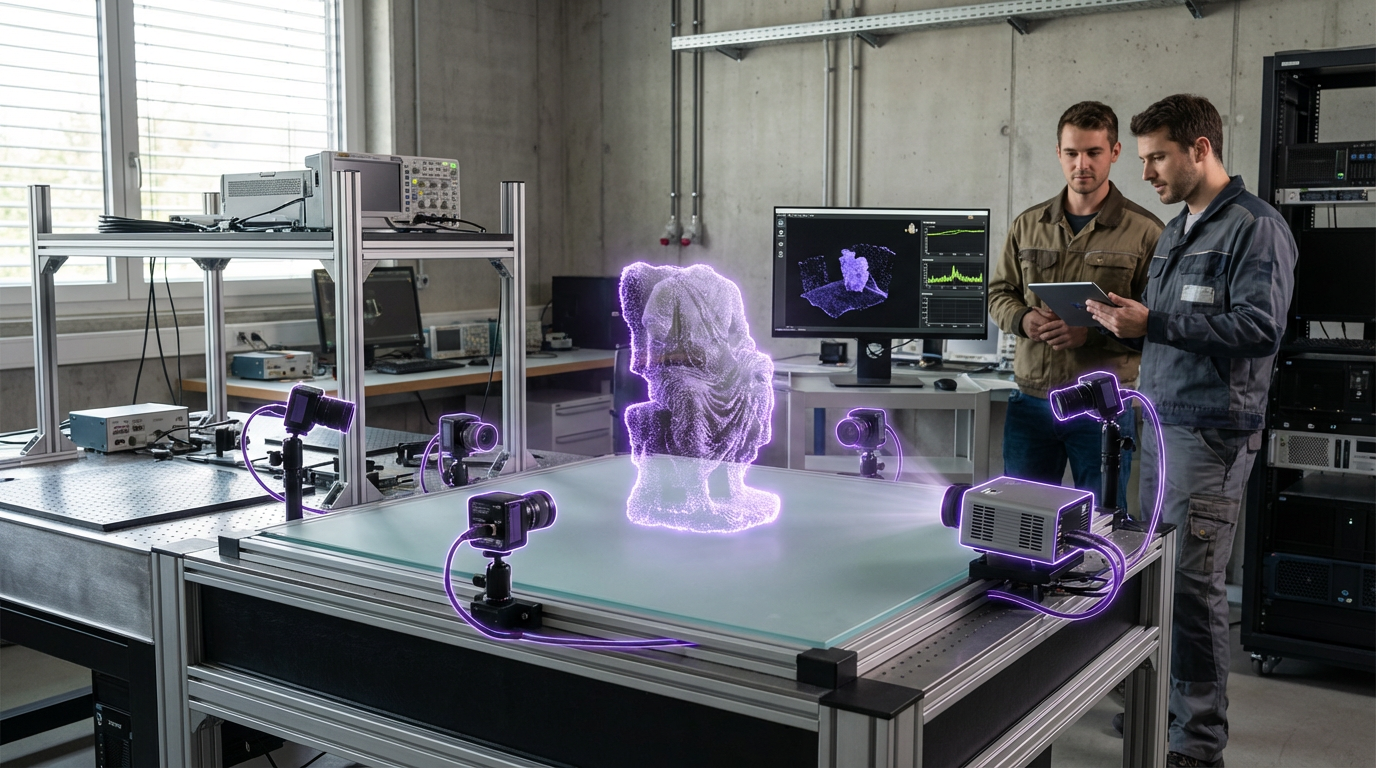

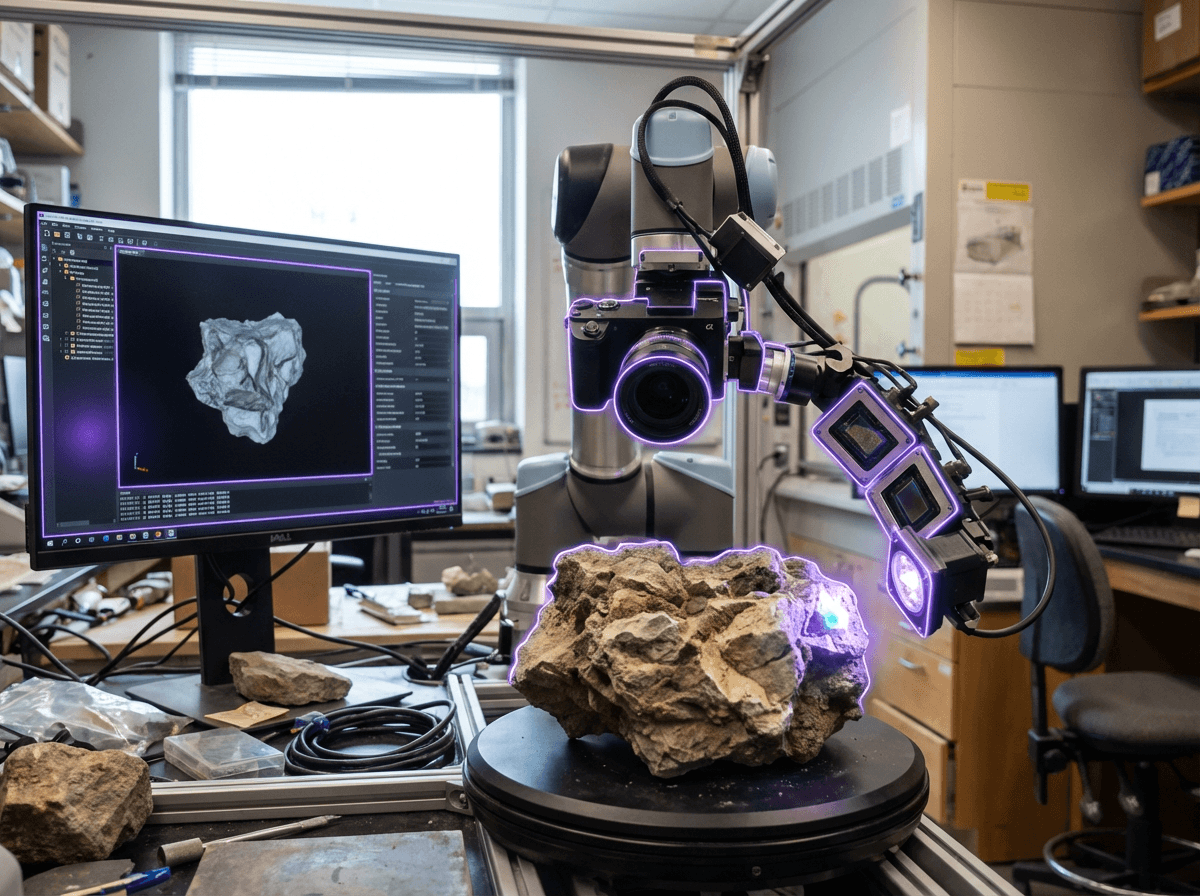

The primary challenge this technology addresses is the labor-intensive process of creating photorealistic digital content that accurately represents real-world environments. Traditional methods for building digital twins or augmented reality experiences require extensive manual work: artists must painstakingly recreate materials, measure lighting conditions with specialized equipment, and ensure that virtual objects match the physical properties of their surroundings. Inverse rendering automates much of this workflow, dramatically reducing the time and expertise needed to achieve convincing results. For industries working with spatial computing and mixed reality applications, this capability tackles the persistent problem of visual coherence between real and virtual elements. When virtual objects are inserted into real scenes without accurate material and lighting information, they appear disconnected and artificial, breaking the sense of immersion. By automatically extracting these properties from camera feeds, inverse rendering enables virtual content to cast realistic shadows, reflect surrounding environments correctly, and respond to lighting changes in real time, a level of integration that was previously achievable only through extensive manual effort.
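The lighting side of that workflow can be sketched as a least-squares fit. The Python/NumPy example below assumes a real surface whose normals (for instance from depth sensing) and albedo are already known. It fits a smooth four-coefficient lighting model (the constant and linear terms of a spherical-harmonic expansion, with normalization constants dropped) to the observed pixels, then reuses that model to shade a virtual surface so it picks up the same light. All function names, coefficients, and data here are hypothetical. Production systems typically use richer models such as higher-order spherical harmonics or HDR environment maps.

```python
import numpy as np


def sh_basis(normals):
    """Constant plus linear terms per normal: [1, n_x, n_y, n_z]."""
    return np.hstack([np.ones((len(normals), 1)), normals])


def estimate_lighting(normals, observed, albedo):
    """Fit lighting coefficients to observed pixels by linear least squares.

    Assumes a Lambertian surface with known albedo, so observed / albedo
    is the irradiance reaching each pixel.
    """
    irradiance = observed / albedo
    coeffs, *_ = np.linalg.lstsq(sh_basis(normals), irradiance, rcond=None)
    return coeffs


def shade_virtual(normals, albedo, coeffs):
    """Shade a virtual surface with the estimated lighting (clamped at zero)."""
    return albedo * np.maximum(0.0, sh_basis(normals) @ coeffs)


# Hypothetical camera observation: a real surface with normals facing the camera.
rng = np.random.default_rng(1)
normals = rng.normal(size=(2000, 3))
normals /= np.linalg.norm(normals, axis=1, keepdims=True)
normals[:, 2] = np.abs(normals[:, 2])  # keep normals in the camera-facing hemisphere

true_coeffs = np.array([0.6, 0.2, 0.1, 0.3])  # ambient term plus a directional tilt
real_albedo = 0.7
observed = real_albedo * (sh_basis(normals) @ true_coeffs)
observed += rng.normal(0.0, 0.01, len(observed))  # simulated sensor noise

coeffs = estimate_lighting(normals, observed, real_albedo)
# Shade an upward-facing virtual surface with albedo 0.5 under the estimated light.
virtual = shade_virtual(np.array([[0.0, 0.0, 1.0]]), 0.5, coeffs)
print(np.round(coeffs, 3), np.round(virtual, 3))
```

Because the model is linear in its coefficients, the fit is a single closed-form solve rather than an iterative optimization, which is part of why low-order lighting models suit per-frame use.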

Research institutions and technology companies have begun deploying inverse rendering in applications ranging from architectural visualization to film production and industrial design. Early implementations focus on controlled environments where the technology can reliably extract material properties for quality inspection, virtual prototyping, and remote collaboration scenarios. The approach shows particular promise in augmented reality systems, where maintaining visual consistency between physical and digital elements is critical for user acceptance. As computational capabilities increase and training datasets expand, inverse rendering is becoming integral to the broader vision of persistent spatial computing environments—spaces where digital information seamlessly coexists with physical reality. This trajectory aligns with growing industry emphasis on reducing the friction between capturing real-world environments and creating interactive digital experiences, positioning inverse rendering as a foundational technology for next-generation mixed reality platforms and automated content creation pipelines.

Related Organizations

Co-originators of the NeRF paper and developers of MultiNeRF and the Immersive View technology for Maps.

Creators of Dream Machine, a video generation model, and of 3D capture technology.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

Developers of Unreal Engine 5, which features Lumen, a fully dynamic global illumination and reflection system designed for next-gen consoles and PC.

Home to the BAIR lab and to researchers such as Angjoo Kanazawa, whose work has advanced NeRF technologies.

Software giant and founder of the Content Authenticity Initiative (CAI).

CSM.ai

United States · Startup

Common Sense Machines builds AI that translates 2D images into 3D assets.

AR platform company that develops the Lightship ARDK and owns Scaniverse, a 3D scanning app leveraging LiDAR.

Developer of Redshift and Cinema 4D, utilizing AI for denoising and material handling to speed up the 3D motion graphics pipeline.