Photonic Accelerators

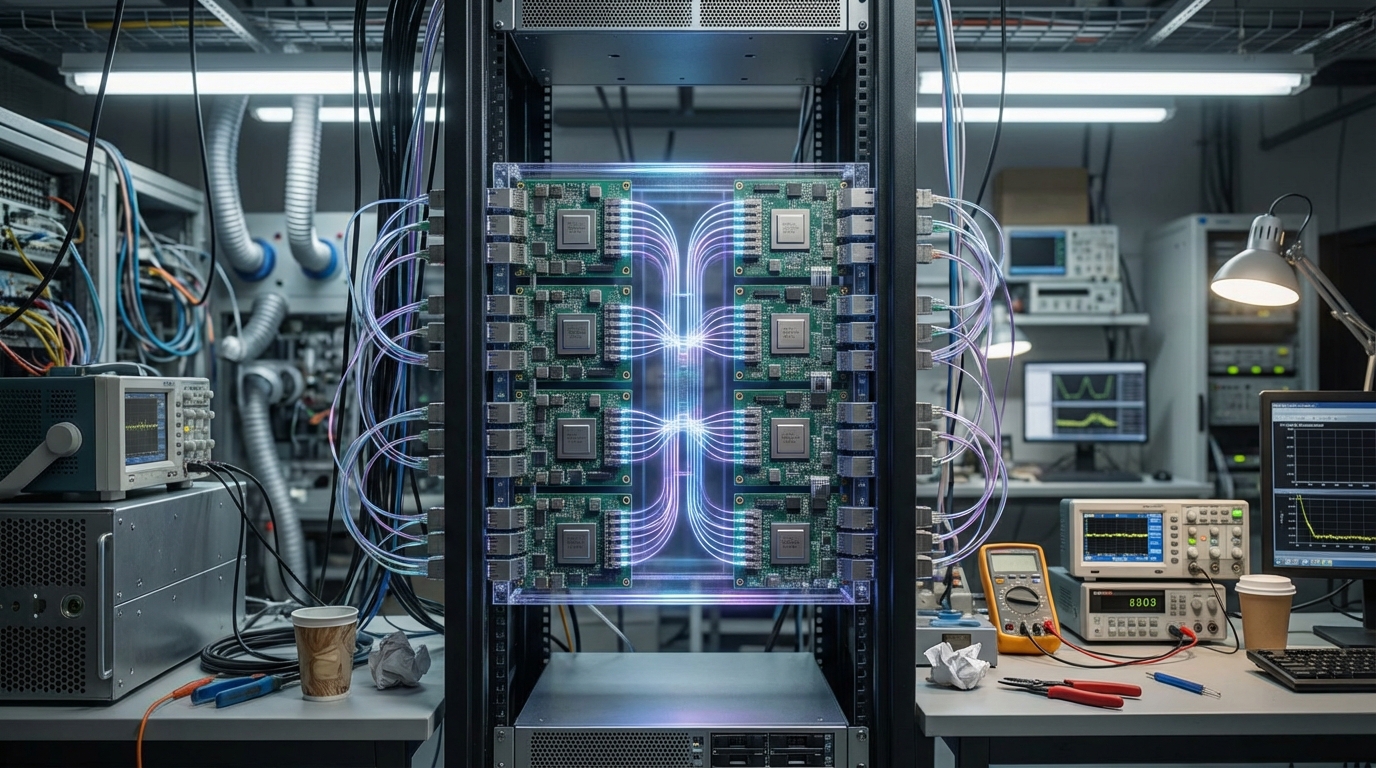

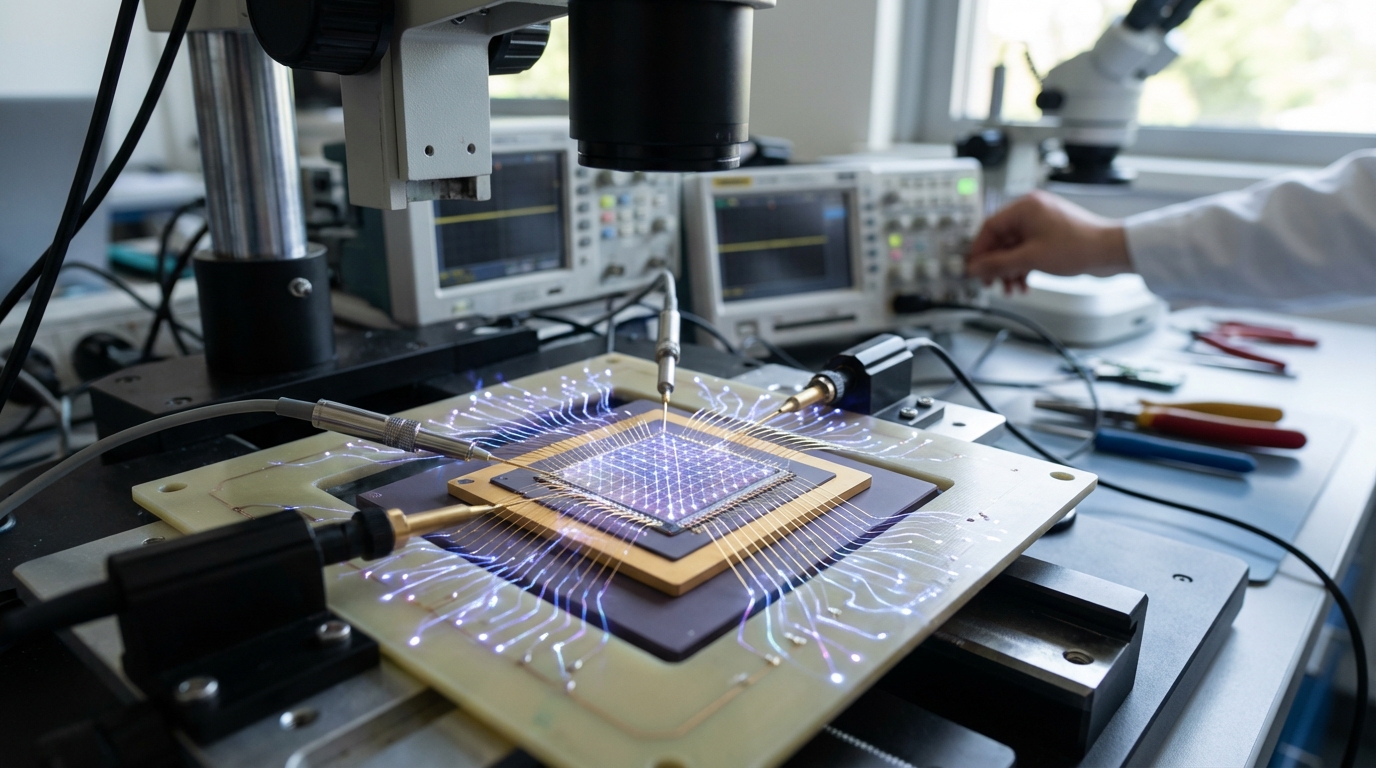

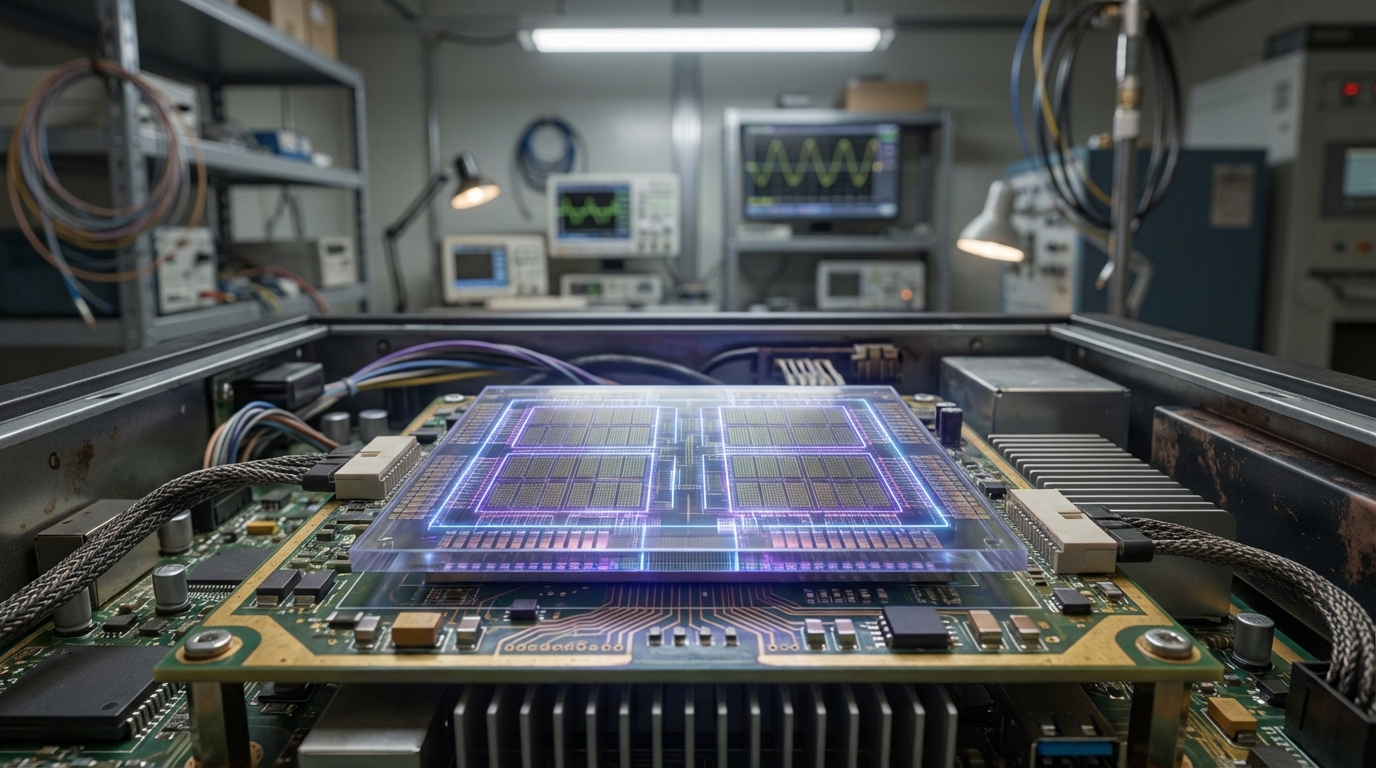

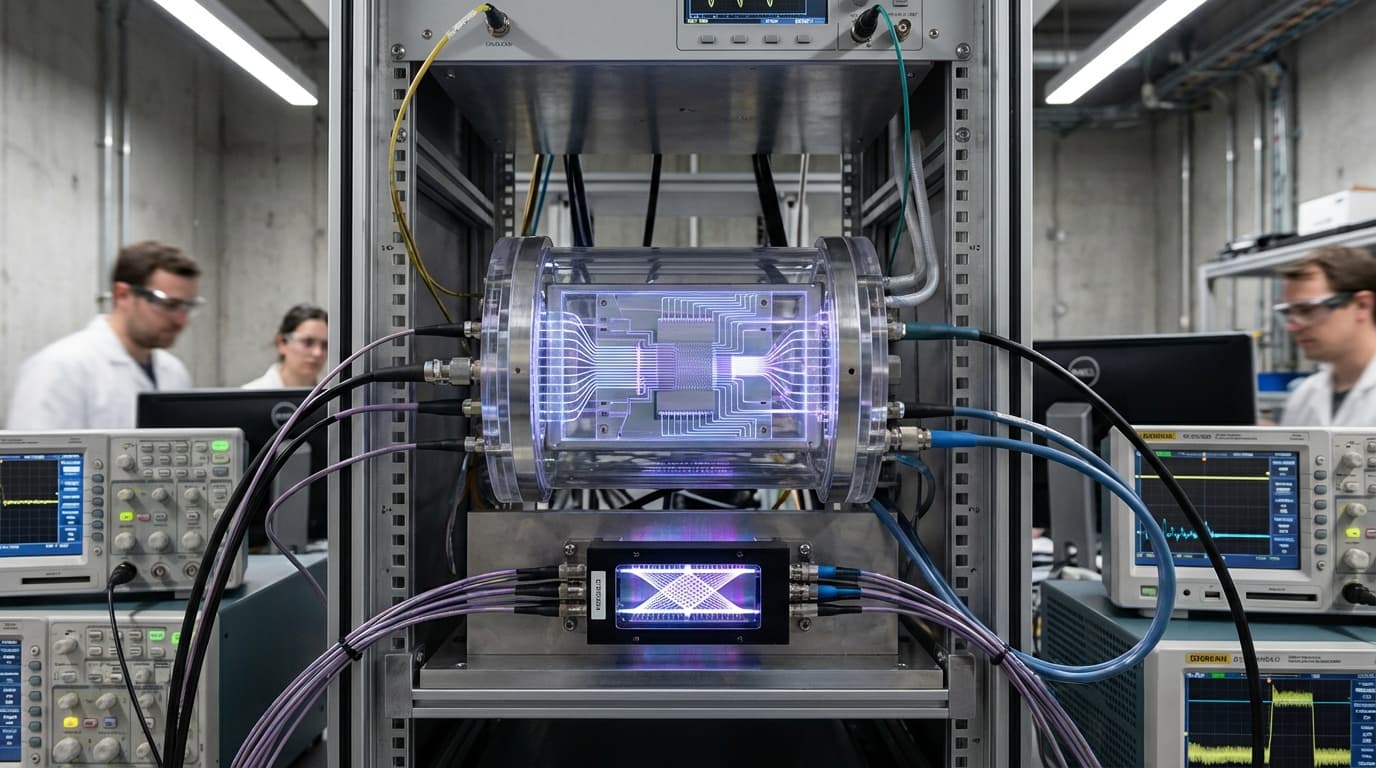

Photonic accelerators use light instead of electrical current to perform matrix multiplications, the core computation in neural networks. Data is encoded in light signals, and optical components such as modulators, waveguides, and detectors carry out the computation as the light propagates through the circuit. Because the arithmetic completes in roughly the time light takes to cross the chip, these systems can in principle run orders of magnitude faster than electronic processors for this class of operation, while avoiding much of the resistive heating and the clock-speed limits of conventional electronic processors.
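As a rough illustration of how modulators, waveguides, and detectors can combine into a matrix-vector product, the following sketch simulates an intensity-encoded scheme in plain Python. It is a simplified model under stated assumptions, not a description of any vendor's hardware: inputs are treated as non-negative optical powers, weights as passive transmissions in [0, 1], and signed weights are handled with a pair of detectors whose photocurrents are subtracted (balanced detection). The function name `optical_matvec` is hypothetical.

```python
def optical_matvec(weights, x):
    """Simulate an intensity-encoded optical matrix-vector product.

    weights: list of rows, each a list of signed floats in [-1, 1]
    x:       list of non-negative floats (optical power set by modulators)

    Assumption: an idealized, noise-free model. Real devices add loss,
    crosstalk, and detector noise that this sketch ignores.
    """
    if any(value < 0 for value in x):
        raise ValueError("optical power cannot be negative")

    outputs = []
    for row in weights:
        if len(row) != len(x):
            raise ValueError("row length must match input length")
        if any(abs(w) > 1 for w in row):
            raise ValueError("passive transmission must stay within [-1, 1]")

        # Split each signed weight into two non-negative transmissions,
        # one per detector arm.
        positive_arm = [max(w, 0.0) for w in row]
        negative_arm = [max(-w, 0.0) for w in row]

        # Each detector sums the attenuated power arriving from all inputs.
        current_pos = sum(t * p for t, p in zip(positive_arm, x))
        current_neg = sum(t * p for t, p in zip(negative_arm, x))

        # Balanced detection: subtract the two photocurrents.
        outputs.append(current_pos - current_neg)
    return outputs


result = optical_matvec([[0.5, -0.25], [1.0, 0.0]], [2.0, 4.0])
print(result)
```

The multiplication here corresponds to attenuation and the addition to power summing at a detector, which is why the whole product can be formed in a single pass of light rather than in a sequence of clocked multiply-accumulate steps.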

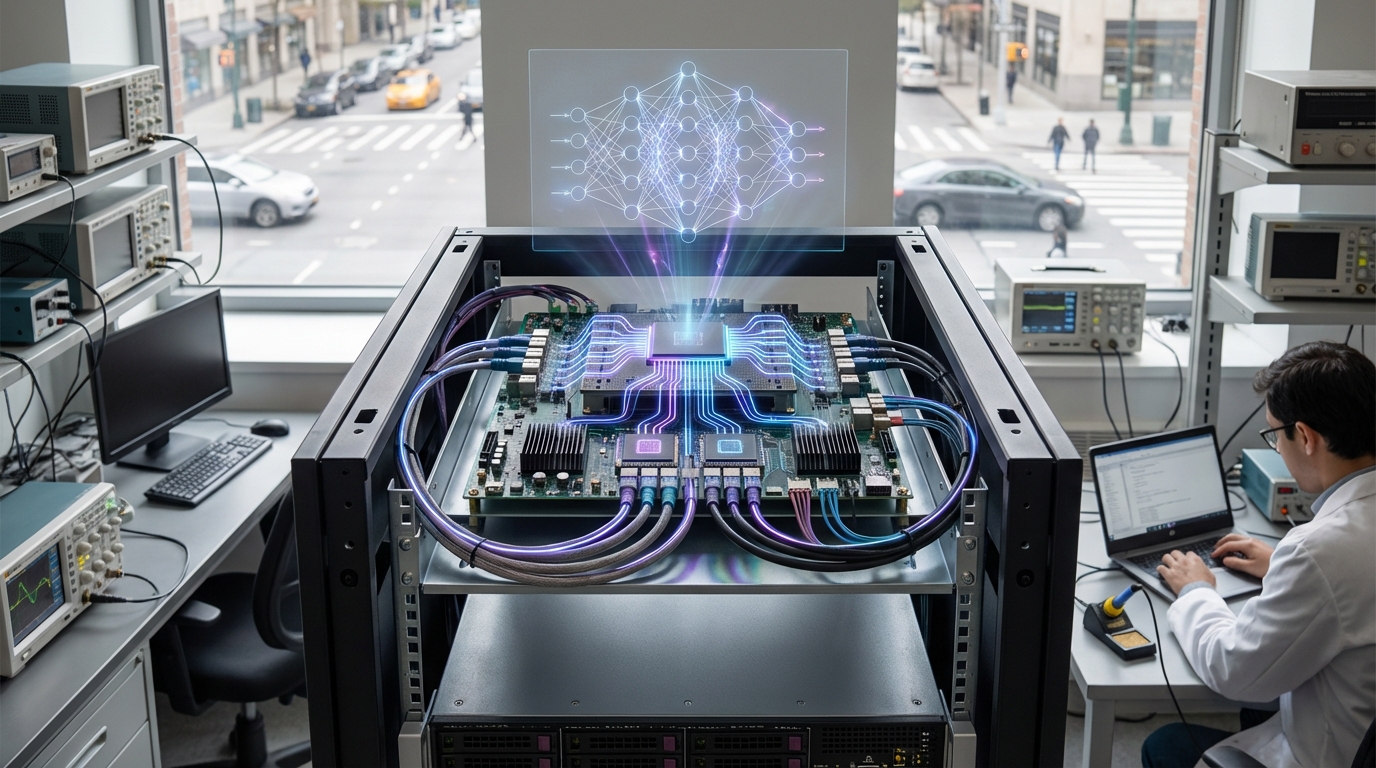

This approach targets a key bottleneck in AI acceleration: the need for ultra-low-latency processing in real-time applications such as autonomous vehicles, robotics, and interactive AI systems. Because a matrix operation completes in roughly the time light takes to traverse the optical circuit, rather than over a clocked sequence of multiply-accumulate steps, photonic accelerators can perform complex matrix operations in nanoseconds or less, which supports real-time decision-making in safety-critical applications. Companies such as Lightmatter and Lightelligence, along with various research institutions, are developing these technologies, and some systems have already demonstrated significant speedups for specific workloads.
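To put the "nanoseconds or faster" figure in context, the optical transit time through a waveguide can be estimated from its length and group index. The sketch below is a back-of-the-envelope calculation only; the 1 cm path length and the group index of 4.0 are illustrative assumptions, and the function name is hypothetical.

```python
C_VACUUM_M_PER_S = 299_792_458.0  # speed of light in vacuum


def optical_transit_time_s(path_length_m, group_index):
    """Return the time a light pulse needs to traverse a waveguide.

    Light in a waveguide travels at c divided by the group index, so the
    transit time is length * group_index / c.
    """
    if path_length_m < 0 or group_index < 1:
        raise ValueError("need non-negative length and group index >= 1")
    return path_length_m * group_index / C_VACUUM_M_PER_S


# Assumed values: a 1 cm optical path and a group index of 4.0.
transit = optical_transit_time_s(0.01, 4.0)
print(f"{transit * 1e12:.0f} ps")
```

Under these assumptions the transit time is on the order of a hundred picoseconds. End-to-end latency in a real system would also include modulation, detection, and any digital-to-analog and analog-to-digital conversion, which this estimate leaves out.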

The technology matters most for applications that require near-instant responses, where even microsecond delays can be critical. As AI systems are deployed in more real-world settings that demand real-time processing, photonic accelerators offer a path to the low-latency performance that autonomous systems need. The technology still faces challenges, however, including integration complexity, cost, and the need for hybrid electronic-photonic systems to handle operations that do not map well to optical computing.
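The hybrid split can be sketched as a single neural network layer in which the linear part is delegated to the photonic stage and the rest stays electronic. This is an illustrative sketch only: it assumes the nonlinear activation is one of the operations kept in electronics, and `photonic_linear` is a hypothetical stand-in that computes an ordinary dot product in software rather than driving real hardware.

```python
def photonic_linear(weights, x):
    """Stand-in for the optical stage: a plain matrix-vector product.

    Assumption: in a real system this call would hand the vector to the
    photonic chip; here it is computed in software for illustration.
    """
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def electronic_relu(values):
    """Nonlinear activation, assumed here to run in the electronic domain."""
    return [max(v, 0.0) for v in values]


def hybrid_layer(weights, x):
    """One layer: optical linear transform followed by electronic ReLU."""
    return electronic_relu(photonic_linear(weights, x))


print(hybrid_layer([[1.0, -1.0], [0.5, 0.5]], [1.0, 3.0]))
```

Each hand-off between the two domains implies an optical-to-electrical conversion, which is one reason the integration complexity mentioned above matters for overall latency.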

Related Organizations

Company developing optical computing hardware for AI workloads.

Creates photonic computing chips that use light for analog matrix multiplication.

Developing the Photonic Fabric technology platform for optical interconnects and compute.

Building hybrid photonic-electronic chips for AI acceleration.

German startup developing graphene-based photonic interconnects.

Developer of the Loihi neuromorphic research chip and Foveros 3D packaging technology.

The research arm of HPE, credited with the physical realization of the memristor and developing the Dot Product Engine.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.