Optical Interconnect Backplanes

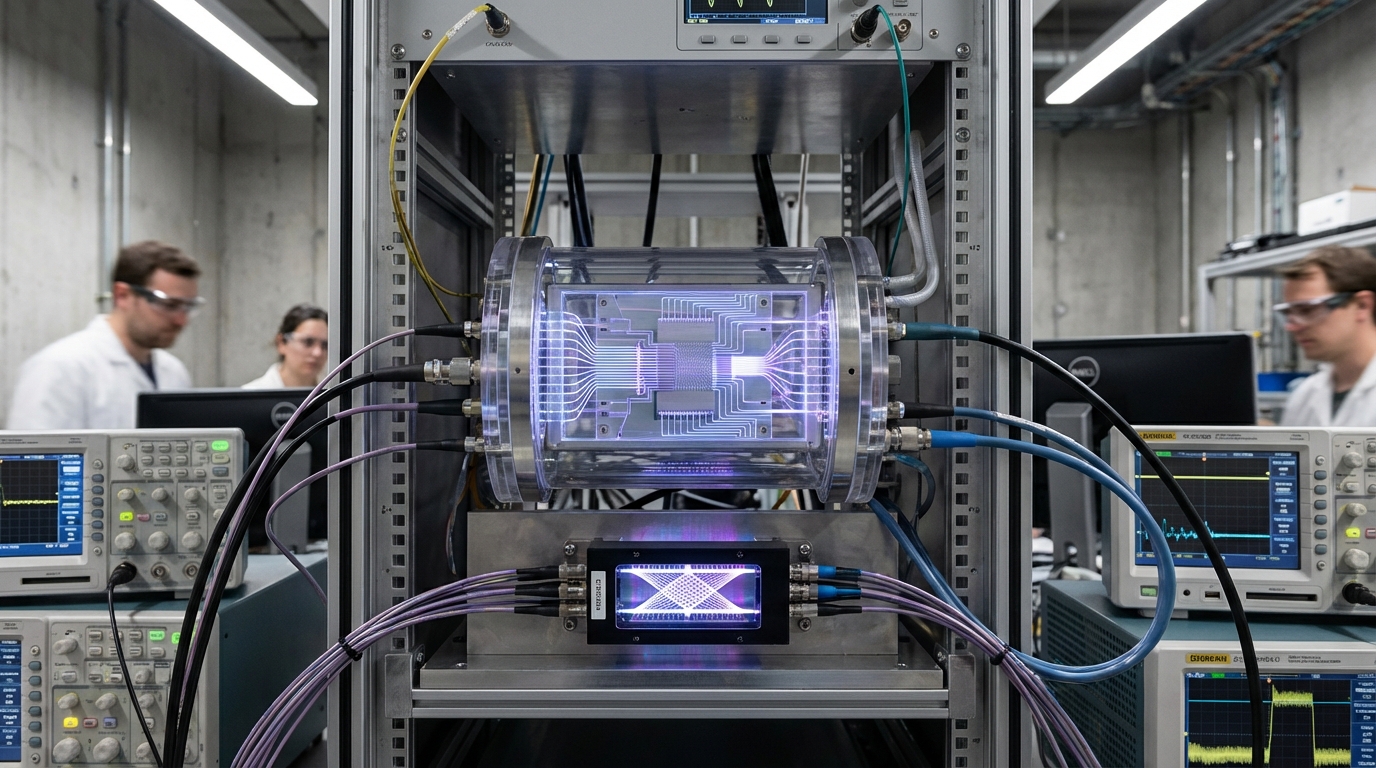

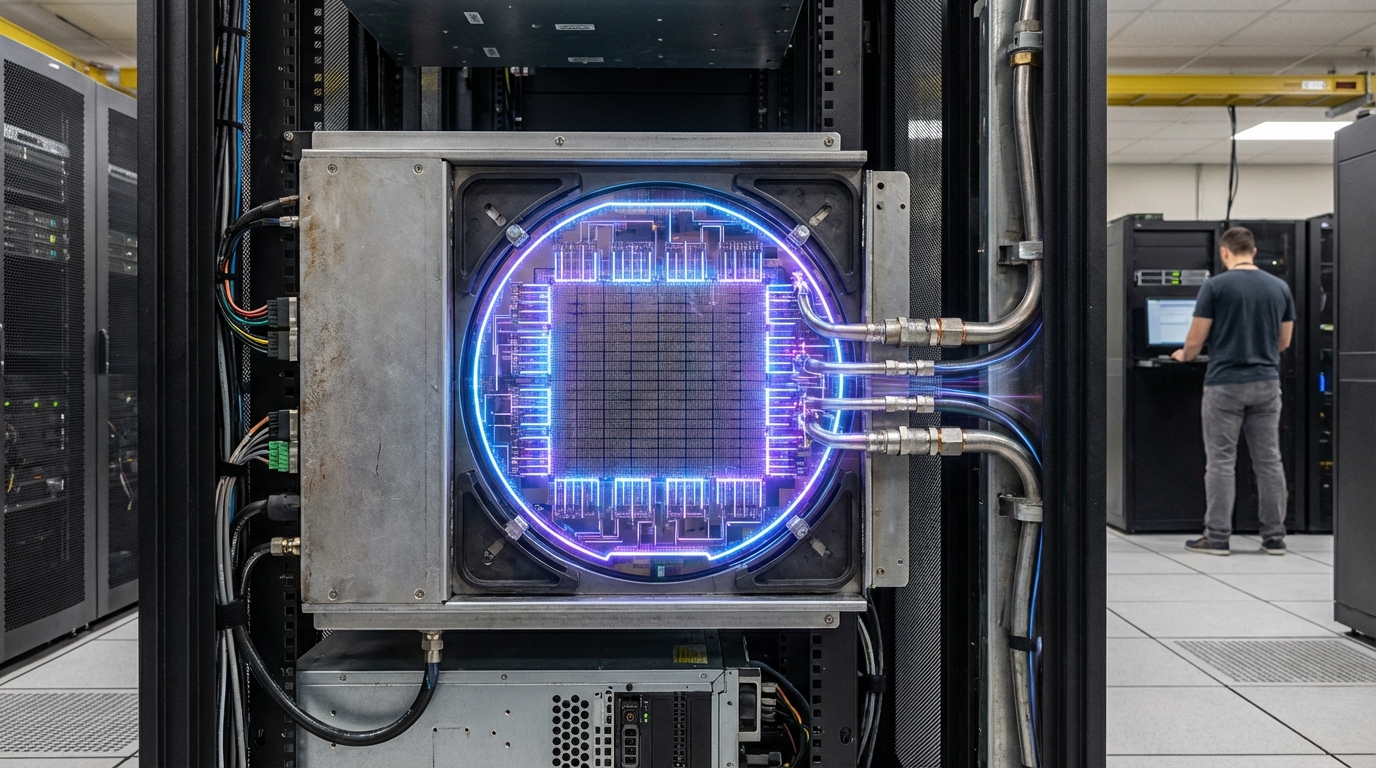

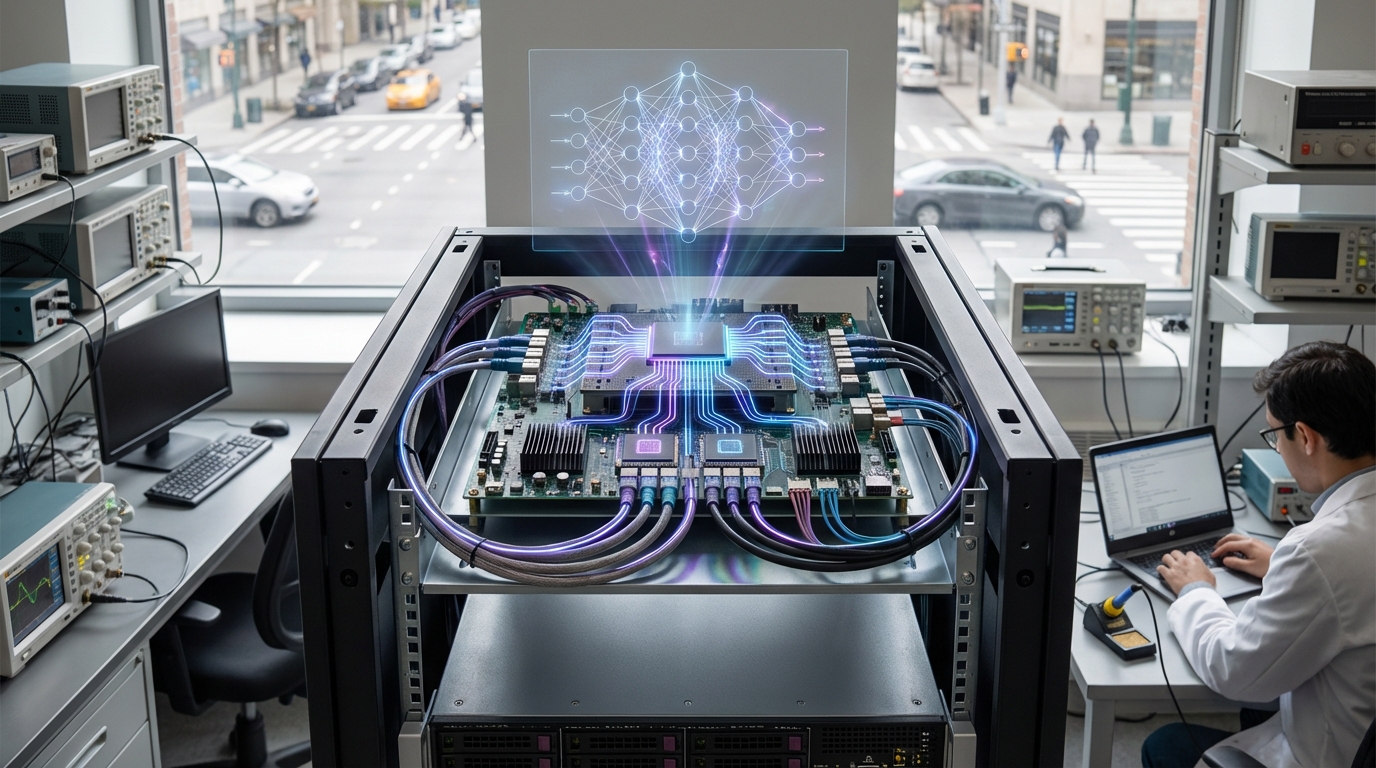

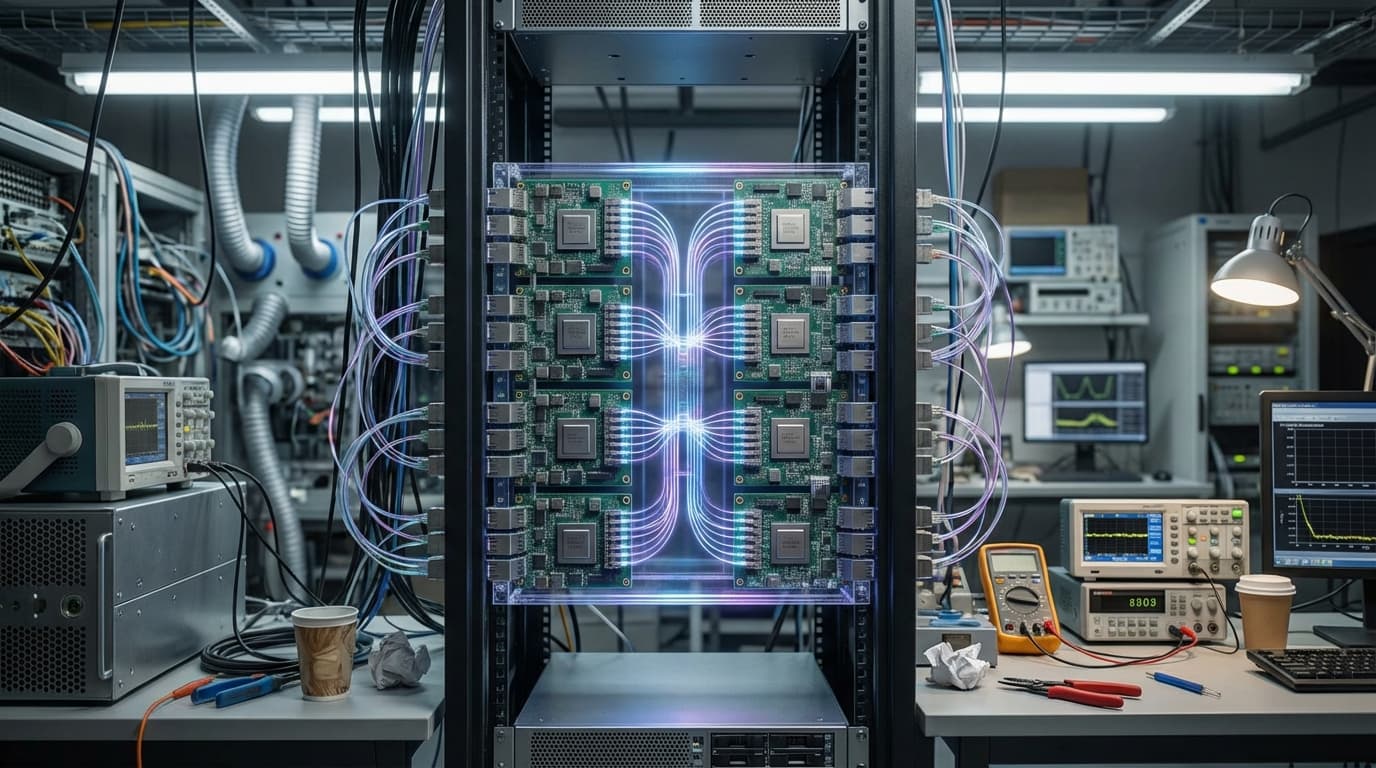

Optical interconnect backplanes use integrated photonic waveguides and optical fibers to transmit data between AI chips at terabit-per-second speeds, replacing the copper interconnects that become bottlenecks at scale. These systems encode data as light signals that travel through optical pathways, which allows higher bandwidth, lower latency, and lower power consumption than electrical interconnects, and generates less heat.

Optical interconnects address the communication bottleneck in large-scale AI systems, where moving data between thousands of GPUs becomes a major constraint on training trillion-parameter models. As AI clusters scale to tens of thousands of chips, traditional electrical interconnects can no longer keep up, while optical links offer the bandwidth and efficiency needed to keep these systems synchronized. Hyperscale cloud providers and AI companies are deploying optical interconnects in their largest AI supercomputers.

The technology is essential for scaling AI training to ever-larger models, where communication between processors can dominate training time. As frontier AI models continue to grow, optical interconnects provide the high-bandwidth, low-latency communication fabric needed to coordinate massive parallel computation. However, the technology faces challenges including integration complexity, cost, and the need for hybrid optical-electrical systems, as not all operations can be efficiently handled optically.
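The claim that communication can dominate training time can be made concrete with a rough estimate. The sketch below is illustrative only: it assumes gradients are synchronized with a ring all-reduce, that communication does not overlap with compute, and that the payload size, cluster size, compute time, and link bandwidths are hypothetical placeholders rather than measurements of any real system. The function names `ring_allreduce_seconds` and `communication_fraction` are invented for this example.

```python
"""Back-of-the-envelope estimate of gradient synchronization time.

All numbers used here are hypothetical placeholders, not measurements.
"""


def ring_allreduce_seconds(payload_bytes, num_devices, link_bytes_per_s,
                           hop_latency_s=0.0):
    """Estimate the time for one ring all-reduce.

    A ring all-reduce takes 2 * (N - 1) steps; in each step every device
    sends payload / N bytes to its neighbor over one link.
    """
    if num_devices < 2:
        return 0.0  # a single device has nothing to synchronize
    steps = 2 * (num_devices - 1)
    bytes_per_step = payload_bytes / num_devices
    return steps * (bytes_per_step / link_bytes_per_s + hop_latency_s)


def communication_fraction(compute_s, comm_s):
    """Share of a training step spent communicating, assuming no overlap."""
    return comm_s / (compute_s + comm_s)


if __name__ == "__main__":
    payload = 20e9        # hypothetical: 20 GB of gradients per step
    devices = 1024        # hypothetical cluster size
    compute = 0.5         # hypothetical: 0.5 s of compute per step
    # Hypothetical per-link bandwidths in bytes/s, for comparison only.
    for label, bandwidth in [("slower link", 50e9), ("faster link", 400e9)]:
        comm = ring_allreduce_seconds(payload, devices, bandwidth)
        share = communication_fraction(compute, comm)
        print(f"{label}: comm {comm:.3f} s, {share:.0%} of step time")
```

Under these assumptions, the per-step communication time scales inversely with link bandwidth, so a higher-bandwidth fabric directly shrinks the share of each training step spent waiting on data movement. Real systems overlap communication with compute and use hierarchical topologies, so this model gives only a rough upper bound on the effect.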

Related Organizations

Developing the Photonic Fabric technology platform for optical interconnects and compute.

Creates photonic computing chips that use light for analog matrix multiplication.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

Taiwan Semiconductor Manufacturing Company (TSMC)

Taiwan · Company

Global semiconductor foundry leader providing the advanced manufacturing and packaging processes required for wafer-scale integration.

United States · Startup

Integrating multi-wavelength lasers directly onto silicon photonic chips.

Canada · Company

Provides Odin optical engines for co-packaged optics applications.

German startup developing graphene-based photonic interconnects.

Major supplier of Co-Packaged Optics (CPO) switches and optical interconnect components.