Neuro-Rights Frameworks

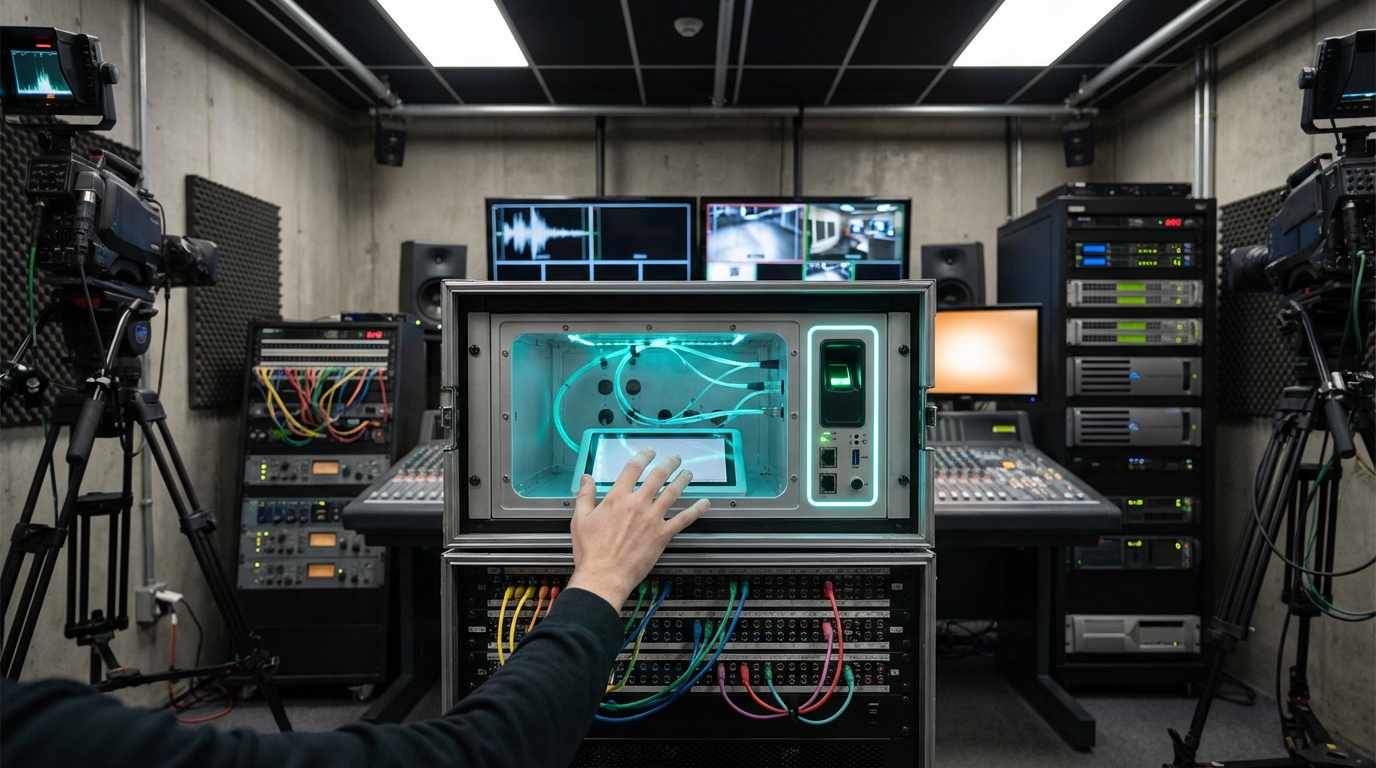

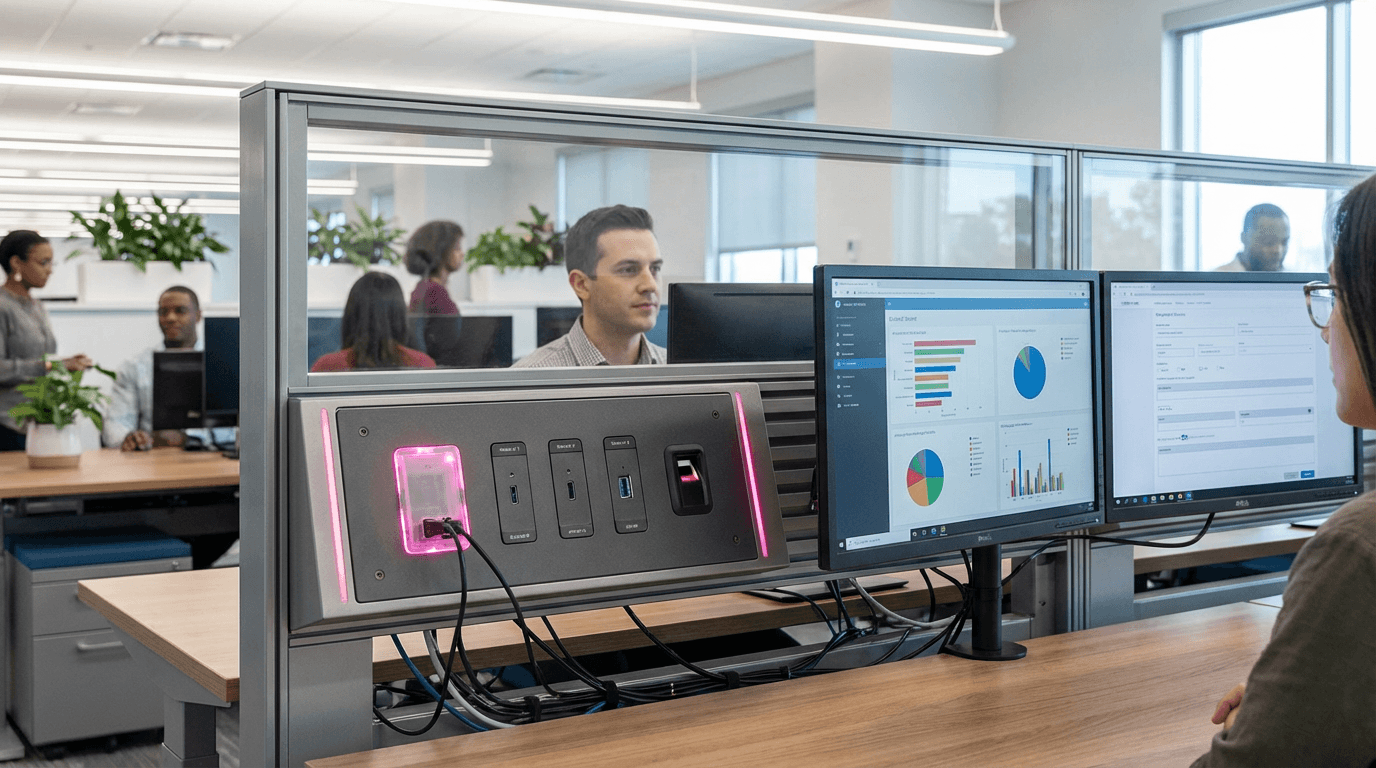

Rapid advances in neurotechnology have made it possible to monitor and interpret human brain activity in ways that were previously impractical, with direct implications for workplace privacy and autonomy. Brain-computer interfaces, EEG headsets, and attention-tracking systems are increasingly deployed in professional settings to measure productivity, detect fatigue, or support training programs. These technologies also generate intimate neural data that may reveal cognitive states, emotional responses, and even unconscious biases. Neuro-rights frameworks are governance structures that set technical and legal boundaries on the collection, analysis, and use of this neural information. They typically combine protective technologies (such as encryption protocols for neural data, anonymization algorithms, and consent management systems) with legal standards that define what counts as permissible versus invasive cognitive monitoring. At their core, they seek to preserve what researchers term "cognitive liberty": the fundamental right to mental self-determination and freedom from unauthorized access to one's thoughts and mental processes.
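As a rough illustration of the protective technologies mentioned above, the following Python sketch replaces a worker identifier with a keyed hash and stores only a coarse session summary instead of the raw signal. The function names, the record fields, and the sample values are hypothetical and chosen for illustration; they do not come from any particular neuro-rights standard or product. Keyed hashing is strictly pseudonymization, not full anonymization, because whoever holds the key can re-link the records.

```python
import hashlib
import hmac
import statistics


def pseudonymize(employee_id: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256).

    Whoever holds secret_key can recompute the mapping, so this is
    pseudonymization rather than irreversible anonymization.
    """
    return hmac.new(secret_key, employee_id.encode("utf-8"), hashlib.sha256).hexdigest()


def summarize_session(employee_id: str, samples: list, secret_key: bytes) -> dict:
    """Keep a coarse summary of a recording session, not the raw signal."""
    return {
        "subject": pseudonymize(employee_id, secret_key),
        "n_samples": len(samples),
        # Hypothetical unit: mean amplitude in microvolts, rounded to 0.1.
        "mean_amplitude_uv": round(statistics.fmean(samples), 1),
    }


# Illustrative values only; a real key would be generated and stored securely.
key = b"demo-key-rotate-in-practice"
record = summarize_session("emp-1042", [10.0, 12.0, 14.0], key)
print(record["n_samples"], record["mean_amplitude_uv"])
```

The stored record contains neither the original identifier nor the individual samples, which is one simple way to reduce what downstream systems can infer about a specific person.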

The workplace presents particularly complex challenges for neural privacy, because the power imbalance between employers and employees can pressure workers to accept monitoring technologies they would otherwise consider intrusive. Organizations seeking a competitive advantage through productivity optimization may be tempted to deploy neurotechnology without adequate safeguards, potentially accessing information about workers' stress levels, attention patterns, or even subconscious reactions to workplace stimuli. Neuro-rights frameworks address these concerns by establishing clear protocols for informed consent, limiting the scope of permissible data collection, and ensuring that employees retain ownership and control over their neural information. These standards also tackle the problem of "function creep," where technologies initially deployed for benign purposes gradually expand into more invasive applications. By creating technical barriers, such as data minimization requirements and purpose limitation protocols, these frameworks aim to prevent neural monitoring systems from evolving into a comprehensive surveillance apparatus that could undermine worker autonomy and dignity.
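One way to picture purpose limitation and data minimization as technical barriers is a release gate that checks every data request against the worker's recorded consent. The sketch below is a minimal Python example under stated assumptions: the `Consent` class, the `release` function, the purpose labels, and the field names are all hypothetical, not drawn from an existing framework or library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Consent:
    """Hypothetical consent record for one worker."""
    subject: str
    purposes: frozenset   # purposes the worker agreed to
    fields: frozenset     # data fields the worker agreed to share
    withdrawn: bool = False


class PurposeError(PermissionError):
    """Raised when a request falls outside the recorded consent."""


def release(record: dict, consent: Consent, purpose: str) -> dict:
    """Return only consented fields, and only for a consented purpose."""
    if consent.withdrawn:
        raise PurposeError("consent withdrawn")
    if purpose not in consent.purposes:
        # Purpose limitation: block uses the worker never agreed to.
        raise PurposeError(f"purpose not consented: {purpose}")
    # Data minimization: drop every field outside the consented set.
    return {k: v for k, v in record.items() if k in consent.fields}


consent = Consent(
    subject="subject-a",
    purposes=frozenset({"fatigue_alert"}),
    fields=frozenset({"fatigue_score"}),
)
record = {"fatigue_score": 0.7, "stress_index": 0.4, "attention_trace": [0.2, 0.9]}

allowed = release(record, consent, "fatigue_alert")
print(allowed)
```

In this sketch, a later request for the same data under a different label such as `"performance_review"` raises `PurposeError`, which is the kind of check that makes function creep an explicit, auditable decision instead of a silent expansion.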

Early implementations of neuro-rights protections are emerging across jurisdictions and industries. Some jurisdictions have begun to incorporate cognitive liberty into constitutional frameworks and labor regulations; Chile, for example, amended its constitution in 2021 to protect mental integrity and brain activity. Research institutions and technology companies developing workplace neurotechnology are increasingly adopting voluntary standards that include neural data encryption, transparent disclosure of monitoring capabilities, and employee opt-out provisions. Industry observers note growing recognition that sustainable adoption of workplace neurotechnology requires robust privacy protections that maintain trust between employers and workers. As these technologies become more sophisticated and accessible, the development of comprehensive neuro-rights frameworks will likely accelerate, driven by both regulatory pressure and ethical considerations. The trajectory points toward workplace neurotechnology coexisting with strong protections for mental privacy, so that productivity gains do not come at the cost of cognitive autonomy. Striking this balance will be crucial as organizations weigh the potential benefits of neurotechnology against the right to mental self-determination.

Related Organizations

Advocacy group led by Rafael Yuste promoting the five ethical neurorights in international law.

The legislative body that passed the world's first constitutional amendment protecting neurorights.

A research initiative dedicated to developing human rights frameworks for neurotechnology.

OECD

France · Intergovernmental Organization

Adopted the 'Recommendation on Responsible Innovation in Neurotechnology' to guide governments and companies.

The UN agency responsible for the 'Recommendation on the Ethics of Artificial Intelligence'.

Produces 'Ethically Aligned Design' standards, addressing the legal and ethical implications of autonomous systems.

Council of Europe

France · Intergovernmental Organization

Oversees the Oviedo Convention, a legally binding international treaty on human rights and biomedicine whose provisions include a prohibition on modifying the human germline.

The UK's independent regulator for data rights, providing specific guidance on AI and data protection.

A professional society promoting the development and responsible application of neuroscience.

A think tank dedicated to the ethical, legal, and social implications of neuroscience.