Physiological Computing Sensors

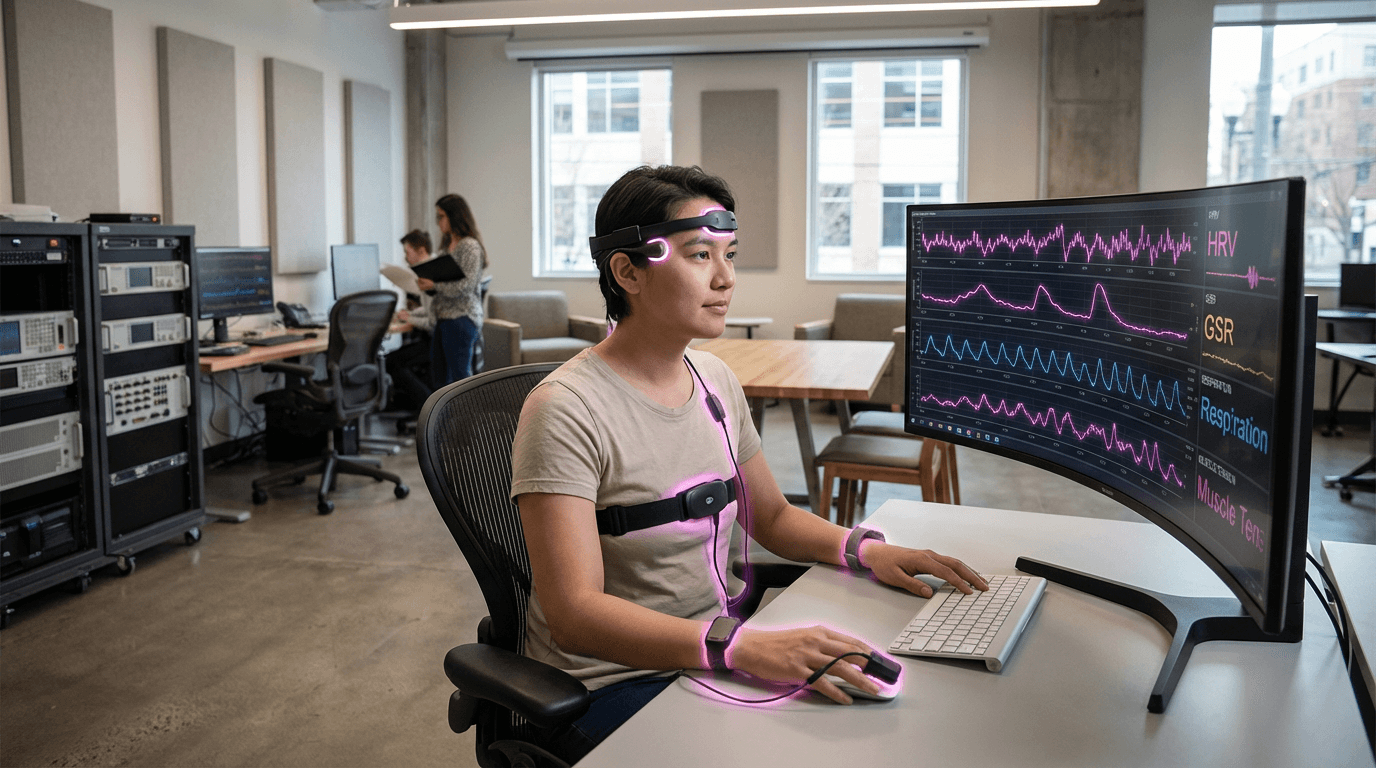

Physiological computing sensors represent a convergence of biomedical engineering and human-computer interaction, designed to capture and interpret the body's involuntary signals as a means of understanding internal states. These devices typically combine several sensing modalities: electrocardiography (ECG) electrodes that record cardiac activity, from which heart rate variability (HRV) is derived; galvanic skin response (GSR) sensors that measure minute changes in skin conductance; respiratory bands or thermal sensors that monitor breathing patterns; and electromyography (EMG) electrodes that track muscle tension. Unlike traditional biometric systems, which simply identify individuals, physiological computing sensors continuously analyse these biosignals to infer cognitive and emotional states in real time. The technology relies on established psychophysiological principles: increased skin conductance correlates with emotional arousal, HRV patterns reflect autonomic nervous system balance and stress responses, changes in respiratory rate can indicate cognitive load, and muscle tension often signals physical or psychological strain. By fusing data from these channels, the systems can distinguish between affective states more accurately than single-parameter approaches.
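As a rough illustration of the multi-channel fusion described above, the following Python sketch computes RMSSD (a common time-domain HRV measure) from beat-to-beat intervals and then averages direction-adjusted z-scores across four channels into a single arousal index. The feature names, the `DIRECTION` table, and the `fused_arousal` function are hypothetical and chosen only for this example; in particular, treating lower HRV as a sign of higher arousal is a simplifying assumption, and real systems generally use trained classifiers rather than a plain average.

```python
import math
from statistics import mean, stdev


def rmssd(rr_ms):
    """Root mean square of successive differences between RR intervals (ms)."""
    if len(rr_ms) < 2:
        raise ValueError("need at least two RR intervals")
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Assumed direction of each feature relative to arousal (illustrative only):
# +1 means "higher value suggests higher arousal", -1 means the opposite.
DIRECTION = {
    "scl_uS": +1,         # skin conductance level, microsiemens
    "rmssd_ms": -1,       # HRV; assumed to drop under stress
    "resp_rate_bpm": +1,  # breaths per minute
    "emg_rms_uV": +1,     # muscle activity, microvolts RMS
}


def fused_arousal(sample, baseline):
    """Mean of direction-adjusted z-scores relative to a resting baseline.

    sample   -- dict mapping feature name to its current value
    baseline -- dict mapping feature name to a list of resting values
    """
    scores = []
    for name, sign in DIRECTION.items():
        resting = baseline[name]
        z = (sample[name] - mean(resting)) / stdev(resting)
        scores.append(sign * z)
    return mean(scores)


if __name__ == "__main__":
    baseline = {
        "scl_uS": [2.0, 2.2, 1.8],
        "rmssd_ms": [40.0, 50.0, 60.0],
        "resp_rate_bpm": [12.0, 14.0, 16.0],
        "emg_rms_uV": [5.0, 6.0, 7.0],
    }
    sample = {
        "scl_uS": 2.4,
        "rmssd_ms": rmssd([800, 790, 805, 795]),
        "resp_rate_bpm": 18.0,
        "emg_rms_uV": 8.0,
    }
    print(f"arousal index: {fused_arousal(sample, baseline):.2f}")
```

Normalising each channel against a per-person resting baseline is one simple way to make signals with different units comparable before they are combined; it also reflects the fact that absolute values of skin conductance or HRV vary widely between individuals.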

The fundamental challenge these sensors address is the opacity of human internal experience in interactive systems. Traditional interfaces require explicit user input, creating friction and cognitive overhead while failing to capture the user's actual state of engagement, frustration, or cognitive overload. This limitation has profound implications across industries where human performance and wellbeing are critical. In high-stakes environments such as aviation, healthcare, and industrial operations, operators may experience dangerous levels of stress or fatigue without external indicators until performance degrades. Similarly, in educational technology and workplace productivity tools, systems cannot adapt to learners or workers who are struggling, disengaged, or overwhelmed. Physiological computing sensors enable what researchers call "affective computing" or "implicit interaction"—systems that respond to users' states without requiring conscious input. This capability transforms the human-machine relationship from one of command-and-control to one of mutual adaptation, where technology adjusts its behaviour based on physiological feedback.

Current implementations span from research prototypes to commercially available wearables, with adoption accelerating across multiple sectors. In automotive safety, some manufacturers are exploring driver monitoring systems that combine physiological sensors with computer vision to detect drowsiness or distraction. Educational platforms are piloting adaptive learning systems that adjust difficulty or provide breaks based on detected cognitive load. Mental health applications use continuous physiological monitoring to help users recognise stress patterns and trigger intervention strategies. The workplace wellness sector has seen growing interest in desk-embedded or wearable sensors that prompt posture changes, breathing exercises, or breaks when sustained tension is detected. As sensor miniaturisation continues and machine learning algorithms improve at pattern recognition across diverse populations, physiological computing is positioned to become a foundational element of responsive environments—spaces and systems that continuously sense and adapt to human needs. This trajectory aligns with broader movements toward personalised medicine, human-centred AI, and the quantified self, suggesting a future where our technologies understand not just what we do, but how we feel while doing it.
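The idea of prompting a break "when sustained tension is detected" can be sketched as a simple run-length check over a stream of normalised tension readings. This is a minimal sketch under stated assumptions: the class name `SustainedTensionDetector`, the threshold of 0.6, and the three-sample window are all hypothetical, and a deployed system would also need filtering, per-user calibration, and artefact rejection.

```python
class SustainedTensionDetector:
    """Flags the moment a reading has stayed above a threshold long enough.

    threshold   -- value above which a reading counts as "tense" (assumed scale)
    min_samples -- number of consecutive tense readings required to trigger
    """

    def __init__(self, threshold, min_samples):
        if min_samples < 1:
            raise ValueError("min_samples must be at least 1")
        self.threshold = threshold
        self.min_samples = min_samples
        self._run = 0  # length of the current run of tense readings

    def update(self, value):
        """Feed one reading; return True exactly once per sustained episode."""
        if value > self.threshold:
            self._run += 1
        else:
            self._run = 0
        # Trigger only at the sample where the run first reaches the
        # required length, so the user is not prompted repeatedly.
        return self._run == self.min_samples


if __name__ == "__main__":
    detector = SustainedTensionDetector(threshold=0.6, min_samples=3)
    readings = [0.7, 0.8, 0.5, 0.7, 0.9, 0.8, 0.9]
    for i, value in enumerate(readings):
        if detector.update(value):
            print(f"sample {i}: sustained tension, suggest a break")
```

Requiring several consecutive readings above the threshold, rather than reacting to a single spike, is a deliberate choice here: brief peaks in muscle activity are common during normal movement and would otherwise produce frequent false prompts.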

Related Organizations

Develops medical-grade wearables (Embrace) monitoring EDA and physiological signals.

Creates open-source brain-computer interface tools and the Galea headset (integrating with VR) for researching physiological responses.

Produces EEG headsets and the BCI-OS platform, allowing developers to build applications that respond to cognitive stress and facial expressions.

Developer of the Muse brain-sensing headband used in meditation and wellness retreats.

BIOPAC Systems

United States · Company

Provides data acquisition systems and software for life science research and education.

Develops high-performance BCI hardware, including the 'Unicorn Hybrid Black' interface for developers.

Develops BCI-enabled headphones that detect focus and intent to control digital experiences.

Develops semi-dry and dry EEG wearable devices for human behavior research and neurotechnology applications.

A leading provider of wearable wireless sensor products and solutions for research and clinical trials.

The global leader in eye-tracking technology, providing the sensor stack required for dynamic foveated rendering.