Expressive Androids

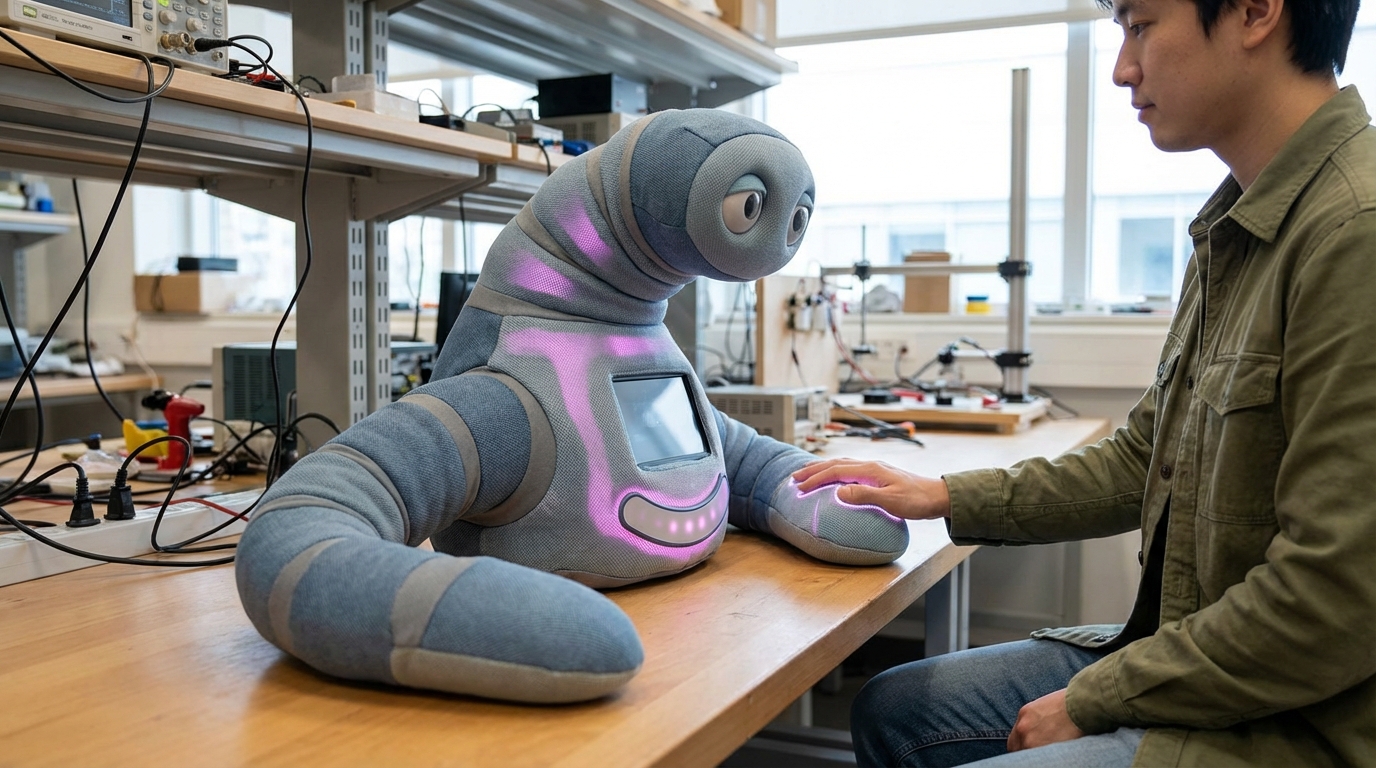

Expressive androids represent a convergence of robotics, materials science, and affective computing, designed to bridge the uncanny valley through biomimetic design. These humanoid robots use multi-layered silicone skin embedded with networks of micro-actuators that enable subtle facial movements, from a raised eyebrow to a genuine-seeming smile. The underlying architecture typically combines pneumatic or electric actuators with servo control of dozens of independent facial regions, allowing for expressions that approach the complexity of human emotional display. Beyond the face, these systems coordinate gestures, posture shifts, and gait patterns across the body so that movement aligns with the expressed emotional state. Machine learning algorithms process real-time social cues from human interaction partners and adjust expressive outputs to keep emotional tone and timing appropriate to the conversation.
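The control idea described above, where an estimated emotional state is turned into coordinated targets for many facial regions, can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the region names, the preset poses, the normalized 0 to 1 actuator range, and the functions `blend_targets` and `step_toward` are hypothetical and do not correspond to any particular android or vendor API. It shows one plausible approach (weighted blending of preset poses followed by rate-limited motion), not how any specific system is implemented.

```python
# Hypothetical facial regions; a real android would have dozens of these.
REGIONS = ("brow_left", "brow_right", "lip_corner_left", "lip_corner_right", "jaw")

# Hypothetical preset poses: normalized actuator positions in [0, 1].
PRESETS = {
    "neutral": {"brow_left": 0.5, "brow_right": 0.5,
                "lip_corner_left": 0.5, "lip_corner_right": 0.5, "jaw": 0.0},
    "smile": {"brow_left": 0.6, "brow_right": 0.6,
              "lip_corner_left": 0.9, "lip_corner_right": 0.9, "jaw": 0.2},
    "surprise": {"brow_left": 1.0, "brow_right": 1.0,
                 "lip_corner_left": 0.5, "lip_corner_right": 0.5, "jaw": 0.8},
}


def blend_targets(weights):
    """Blend preset poses by weight into one target position per region.

    `weights` maps preset names to non-negative weights, e.g. the output
    of an (assumed) emotion classifier. Falls back to neutral if empty.
    """
    total = sum(weights.values())
    if total <= 0:
        return dict(PRESETS["neutral"])
    return {
        region: sum(w * PRESETS[name][region] for name, w in weights.items()) / total
        for region in REGIONS
    }


def step_toward(current, target, max_delta):
    """Move each region toward its target by at most `max_delta` per tick.

    Rate limiting keeps transitions gradual rather than snapping
    between poses.
    """
    updated = {}
    for region in REGIONS:
        delta = target[region] - current[region]
        delta = max(-max_delta, min(max_delta, delta))
        updated[region] = current[region] + delta
    return updated


if __name__ == "__main__":
    pose = dict(PRESETS["neutral"])
    # Assumed classifier output: mostly "smile" with a hint of "surprise".
    target = blend_targets({"smile": 0.8, "surprise": 0.2})
    for tick in range(5):
        pose = step_toward(pose, target, max_delta=0.1)
        print(tick, {region: round(value, 2) for region, value in pose.items()})
```

Blending lets intermediate expressions emerge from a small set of presets, while the per-tick limit in `step_toward` stands in for the timing control that keeps expressions from changing abruptly. A real system would add actuator-specific limits and feedback from position sensors.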

The development of expressive androids addresses a fundamental challenge in human-robot interaction: the need for intuitive, emotionally legible communication in contexts where traditional interfaces fall short. In healthcare settings, particularly elder care and autism therapy, research suggests that robots capable of displaying empathy through facial expressions and body language can establish rapport more effectively than purely functional machines. Educational applications benefit from androids that can model emotional regulation and social skills for children, while the hospitality and customer service sectors are exploring their potential to provide culturally sensitive interactions that adapt to individual preferences. The technology also enables new forms of telepresence, in which remote operators project their emotional state through android avatars, creating a sense of co-presence that video conferencing struggles to match.

Early deployments of expressive androids have appeared in Japanese hotels, museums, and research facilities, where they serve as receptionists, guides, and research platforms for studying human social cognition. Companies developing these systems continue to refine the balance between realism and comfort, as overly lifelike appearances can trigger discomfort while insufficient expressiveness limits effectiveness. Industry analysts note growing interest from healthcare providers seeking non-pharmaceutical interventions for loneliness and cognitive decline in aging populations. As the technology matures, expressive androids are likely to become more prevalent in scenarios requiring sustained social engagement, particularly where human labor shortages intersect with populations needing consistent emotional support. The trajectory points toward increasingly sophisticated affective systems that can read and respond to human emotional states with nuance, potentially reshaping how we think about companionship, care, and the boundaries between human and machine social partners.

Related Organizations

A research group at Osaka University and ATR led by Hiroshi Ishiguro, famous for creating the Geminoid series of ultra-realistic androids.

Designers of the Ameca and RoboThespian robots, used primarily for entertainment and interaction in science centers and museums.

Creators of Sophia, focusing on high-fidelity facial expressions and AI for deep social engagement.

Creators of a social robotics platform featuring a back-projected face capable of advanced conversational AI and social cues.

Investigates soft robotics for safe human-robot interaction and expressive animatronics.

Manufacturer of autonomous service robots for business, capable of recognizing faces and answering questions in museums and centers.

Creators of the Harmony AI system and robotic heads with modular faces, focusing on companionship and adult applications.

Developing biomimetic androids with hydraulic artificial muscles that mimic human anatomy and strength.

Backed by OpenAI, developing the 'Eve' (wheeled) and 'Neo' (bipedal) androids for labor markets.

Developing general-purpose humanoid robots (Phoenix) powered by Carbon, their AI control system.