Spatial Foundation Models

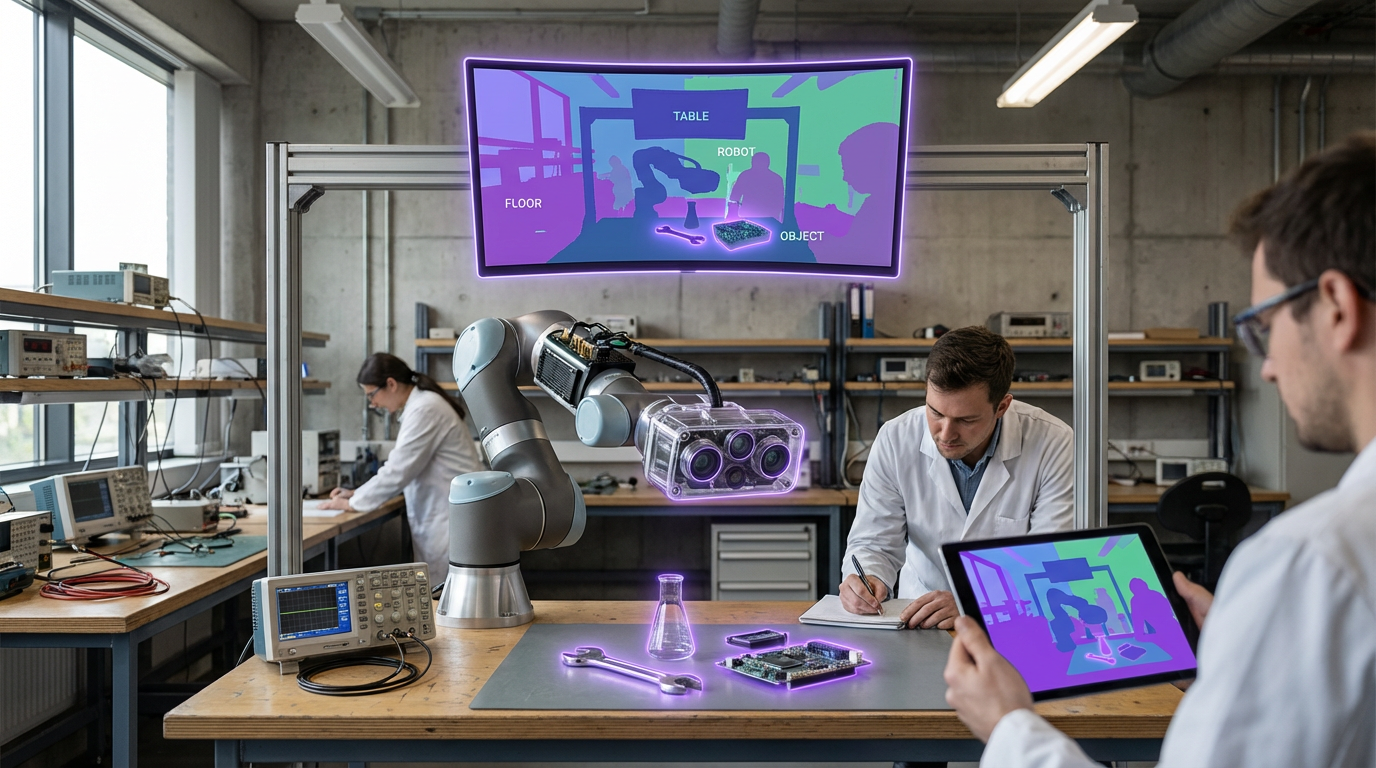

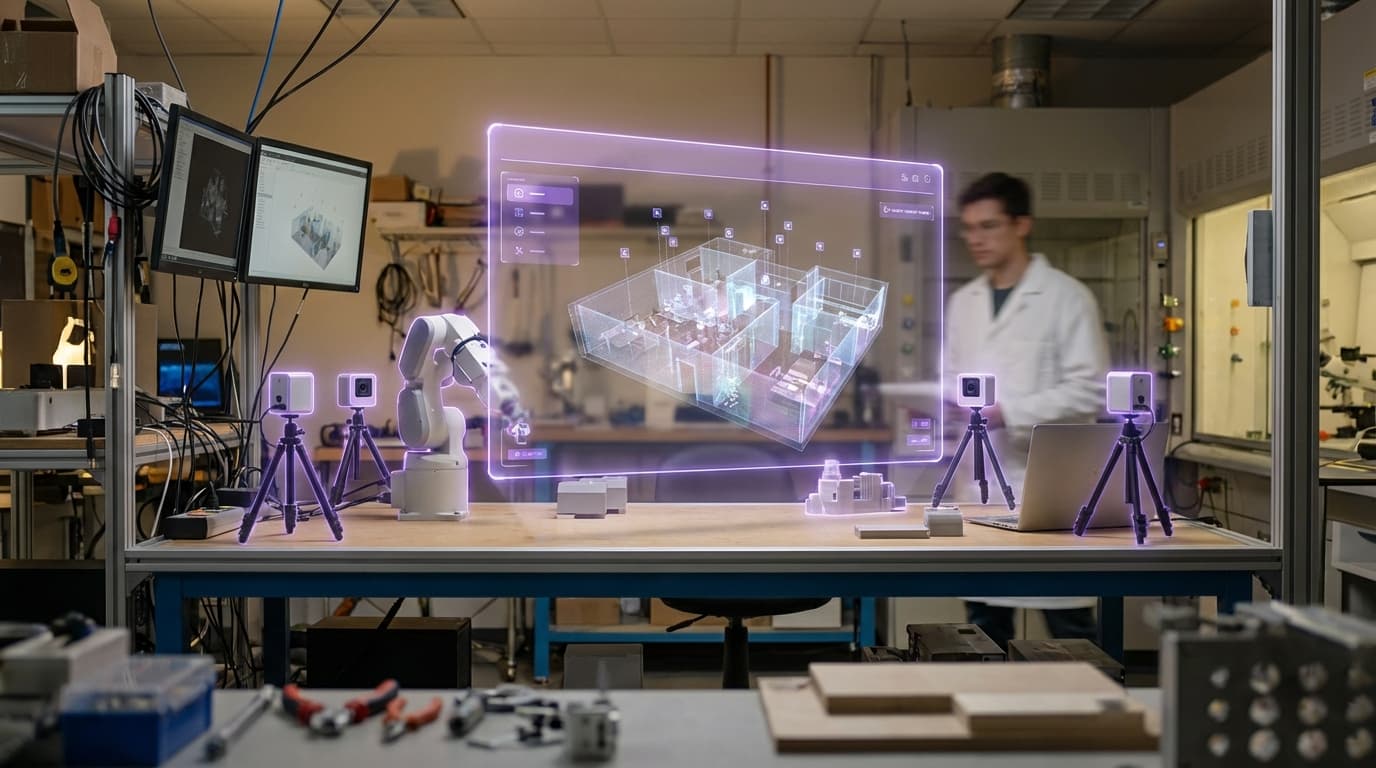

Spatial Foundation Models extend the capabilities of large language models (LLMs) beyond text and 2D imagery into three-dimensional understanding. Where conventional language models process information as flat token sequences, these systems are trained on multimodal datasets that include 3D spatial relationships, physical dynamics, depth information, and embodied interaction data captured from real-world environments. The architecture typically combines a transformer backbone with specialized encoders for point clouds, meshes, depth maps, and spatial scene graphs. By learning from large collections of 3D scans, robotic manipulation sequences, and navigation trajectories, the models build an internal representation of how objects relate to one another in physical space, how physical forces govern movement, and how people interact with their surroundings. This spatial reasoning lets them predict occluded geometry, understand affordances (the actions an object enables), and generate coherent instructions for tasks that require physical manipulation or navigation.
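
To make two of the input representations mentioned above concrete, the following minimal sketch back-projects a depth map into a point cloud using the standard pinhole camera model. The function name `depth_to_point_cloud`, the intrinsics values, and the tiny 2×2 depth map are assumptions chosen for illustration; this is not the preprocessing pipeline of any particular model.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def depth_to_point_cloud(
    depth: Sequence[Sequence[float]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point]:
    """Back-project a depth map (metres, indexed [row][column]) into
    camera-space 3D points with the pinhole model.

    fx, fy are focal lengths in pixels; cx, cy is the principal point.
    """
    points: List[Point] = []
    for v, row in enumerate(depth):        # v: pixel row
        for u, z in enumerate(row):        # u: pixel column
            if z <= 0.0:                   # assumption: non-positive depth means "no reading"
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


# Hypothetical 2x2 depth map with one missing reading.
depth = [
    [2.0, 2.0],
    [0.0, 4.0],
]
cloud = depth_to_point_cloud(depth, fx=1.0, fy=1.0, cx=0.5, cy=0.5)
print(cloud)
```

A point-cloud encoder would consume the resulting list of `(x, y, z)` tuples, while a depth-map encoder would operate on the original grid; both describe the same scene geometry.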

Spatial Foundation Models address a critical gap in extended reality (XR) systems, autonomous robotics, and spatial computing applications. Traditional AI systems struggle with tasks that depend on the three-dimensional nature of the physical world: determining whether a virtual object will fit in a real space, predicting how items should be arranged for accessibility, or generating natural movement paths through cluttered environments. These limitations have constrained the development of intelligent AR/VR experiences and embodied AI agents. Incorporating spatial reasoning at the foundational level enables capabilities such as automatic scene understanding for mixed reality applications, content placement that respects real-world constraints, and natural language interfaces that translate verbal instructions into precise spatial actions. It also supports more intuitive human-robot collaboration, in which a system can act on a command like "place this on the shelf" without explicit coordinate programming.
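
The "will it fit" and "place this on the shelf" problems described above reduce, in their simplest geometric form, to a bounding-box check followed by a choice of target coordinates. The sketch below shows that reduction under strong assumptions: objects and free space are axis-aligned boxes, z is the up axis, and only a 90-degree turn about the vertical axis is tried. The names `FreeSpace` and `place_in` are hypothetical, and a real spatial model would infer the free region from sensor data rather than receive it as input.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class FreeSpace:
    """Empty axis-aligned region above a support surface (z is up, metres)."""
    origin: Vec3  # minimum corner, resting on the surface
    size: Vec3    # extent along x, y, z


def place_in(object_size: Vec3, space: FreeSpace) -> Optional[Vec3]:
    """Return the minimum-corner position that centres the object on the
    region's footprint, or None if the object does not fit.

    Tries the object as given, then rotated 90 degrees about the vertical axis.
    """
    w, d, h = object_size
    for ow, od in ((w, d), (d, w)):
        if ow <= space.size[0] and od <= space.size[1] and h <= space.size[2]:
            x = space.origin[0] + (space.size[0] - ow) / 2
            y = space.origin[1] + (space.size[1] - od) / 2
            return (x, y, space.origin[2])
    return None


# Hypothetical gap on a shelf: 0.5 m wide, 0.25 m deep, 0.5 m of clearance, 1 m off the floor.
shelf_gap = FreeSpace(origin=(0.0, 0.0, 1.0), size=(0.5, 0.25, 0.5))

print(place_in((0.25, 0.5, 0.25), shelf_gap))   # fits only after rotation
print(place_in((0.75, 0.25, 0.25), shelf_gap))  # too long in either orientation
```

The returned coordinates are what "explicit coordinate programming" would otherwise have to supply by hand; the value of a spatial model lies in producing the free-space region and the object dimensions from perception and language.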

Early research implementations have shown promising results in applications ranging from AR content generation to robotic task planning. Pilot programs in industrial settings suggest these models can reduce the time required to configure spatial computing applications by automatically adapting virtual interfaces to physical workspace layouts. In the consumer space, spatial foundation models are beginning to enable more natural interactions with smart home devices, as well as AR navigation systems that understand contextual placement rather than just GPS coordinates. The technology aligns with broader industry trends toward embodied AI and the convergence of physical and digital experiences. As spatial computing platforms mature and 3D sensing becomes ubiquitous through devices like smartphones and AR glasses, the training data available for these models will expand substantially. Better models in turn make those devices more useful, which encourages further data collection. This virtuous cycle suggests that spatial foundation models will grow increasingly sophisticated, eventually enabling AI systems that reason about and interact with the physical world with human-like spatial intelligence and changing how immersive technologies are designed and experienced.

Related Organizations

Developers of the Gemini family of models, which are trained from the start to be multimodal across text, images, video, and audio.

OpenAI

United States · Company

Creator of GPT-4o, a natively multimodal model capable of reasoning across audio, vision, and text in real time.

Creator of Semantic Scholar and various open-source models for scientific text processing.

CSM.ai

United States · Startup

Common Sense Machines builds AI that translates 2D images into 3D assets.

Creators of Dream Machine, a high-quality video generation model, and 3D capture technology.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

Human-Centered AI Institute conducting research on the BEHAVIOR benchmark.

Applied AI research company shaping the next era of art, entertainment and human creativity.

Open-source generative AI company, creators of Stable Audio.