Spatial Operating Systems

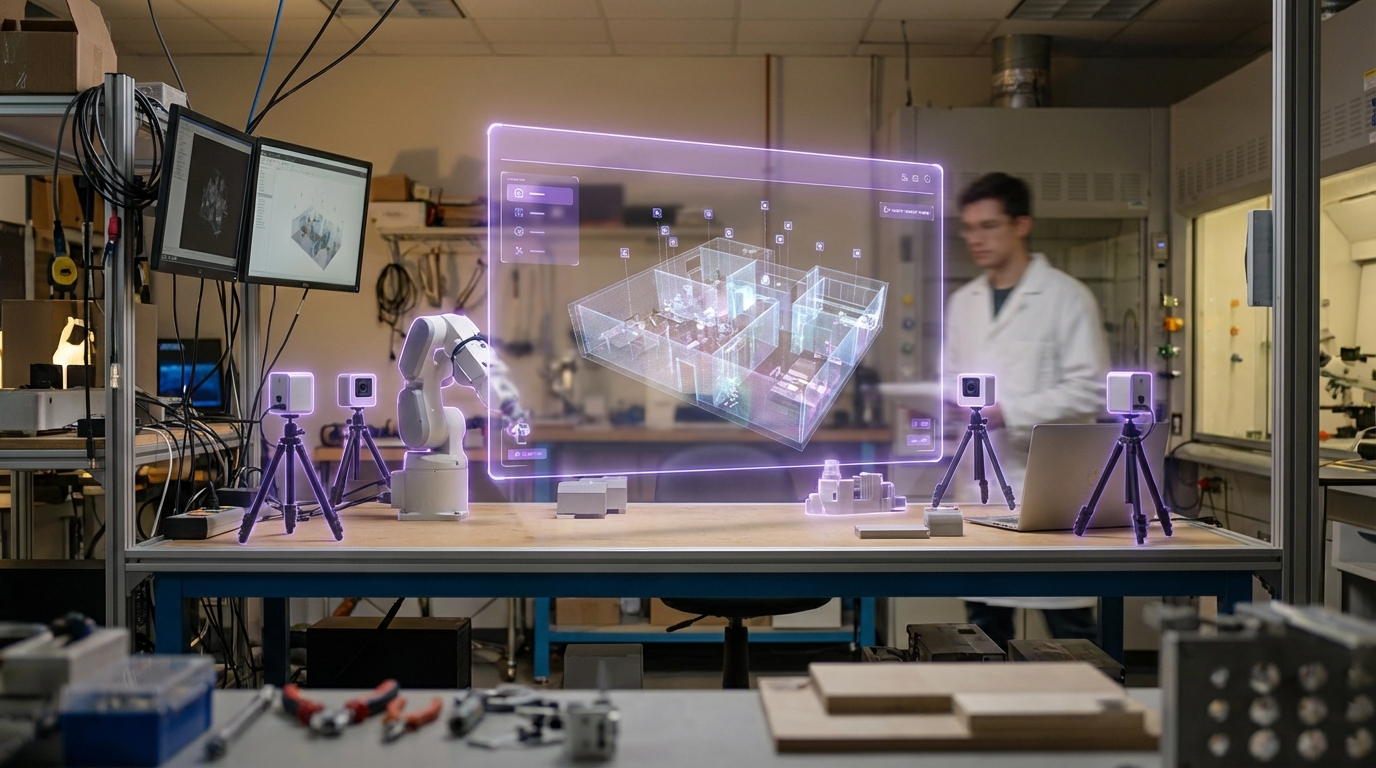

Spatial operating systems rethink how people interact with computing environments, moving beyond the flat, screen-bound paradigm that has dominated personal computing for decades. Where traditional operating systems organize information in rectangular windows on two-dimensional displays, these architectures treat three-dimensional space itself as the primary interface canvas. Their core technical innovation is the persistent spatial anchor: a digital object that stays fixed to a specific location in the physical world across sessions and devices. Supporting such anchors requires real-time environment mapping, precise localization, and distributed state management, so that virtual elements appear in the same position for every user. The system must continuously process input from multiple sensors, including depth cameras, inertial measurement units, and environmental scanners, while also handling newer input modalities such as eye tracking for selection, hand gestures for manipulation, and voice commands for system-level operations. The computational architecture therefore differs markedly from that of a conventional operating system: it prioritizes spatial indexing, occlusion rendering, and physics simulation over file hierarchies and application windows.
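The combination of persistent anchors and spatial indexing described above can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the names `Anchor` and `AnchorStore`, the JSON layout, and the choice of a uniform grid as the index are hypothetical and do not come from any particular spatial OS. It also assumes that every device has already been localized into one shared world frame measured in metres, which is the hard part in practice and is not modeled here.

```python
import json
import math
from collections import defaultdict
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Anchor:
    """Hypothetical persistent anchor: an id, a world position, and content."""
    anchor_id: str
    position: tuple  # (x, y, z) in metres, in an assumed shared world frame
    payload: str


class AnchorStore:
    """Illustrative anchor store indexed by a uniform 3D grid."""

    def __init__(self, cell_size=1.0):
        self.cell_size = cell_size
        self._anchors = {}              # anchor_id -> Anchor
        self._grid = defaultdict(set)   # grid cell -> set of anchor ids

    def _cell(self, position):
        # Map a continuous position to the integer grid cell containing it.
        return tuple(math.floor(c / self.cell_size) for c in position)

    def add(self, anchor):
        # Re-adding an existing id moves the anchor to its new cell.
        if anchor.anchor_id in self._anchors:
            self.remove(anchor.anchor_id)
        self._anchors[anchor.anchor_id] = anchor
        self._grid[self._cell(anchor.position)].add(anchor.anchor_id)

    def remove(self, anchor_id):
        anchor = self._anchors.pop(anchor_id)
        cell = self._cell(anchor.position)
        self._grid[cell].discard(anchor_id)
        if not self._grid[cell]:
            del self._grid[cell]

    def query_radius(self, center, radius):
        """Return anchors within `radius` of `center`, nearest first."""
        lo = self._cell(tuple(c - radius for c in center))
        hi = self._cell(tuple(c + radius for c in center))
        hits = []
        # Visit only the grid cells that overlap the query sphere's bounds.
        for ix in range(lo[0], hi[0] + 1):
            for iy in range(lo[1], hi[1] + 1):
                for iz in range(lo[2], hi[2] + 1):
                    for anchor_id in self._grid.get((ix, iy, iz), ()):
                        anchor = self._anchors[anchor_id]
                        if math.dist(anchor.position, center) <= radius:
                            hits.append(anchor)
        return sorted(hits, key=lambda a: math.dist(a.position, center))

    def to_json(self):
        # Serialize so anchors can outlive a session or move between devices.
        return json.dumps({
            "cell_size": self.cell_size,
            "anchors": [asdict(a) for a in self._anchors.values()],
        })

    @classmethod
    def from_json(cls, text):
        data = json.loads(text)
        store = cls(cell_size=data["cell_size"])
        for item in data["anchors"]:
            store.add(Anchor(item["anchor_id"], tuple(item["position"]), item["payload"]))
        return store


# Usage: save anchors in one "session", restore them in another, query nearby.
store = AnchorStore(cell_size=1.0)
store.add(Anchor("valve-note", (2.0, 1.2, -0.5), "Check seal before restart"))
store.add(Anchor("exit-sign", (10.0, 2.5, 4.0), "Emergency exit"))

restored = AnchorStore.from_json(store.to_json())
nearby = restored.query_radius((2.5, 1.0, 0.0), radius=1.0)
print([a.anchor_id for a in nearby])
```

A uniform grid is used here only because it is short to write. A production system would more plausibly tie anchors to mapped features of the environment and use a hierarchical index such as an octree or bounding volume hierarchy, with the serialized state synchronized across devices rather than passed around as a single JSON string.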

Spatial operating systems emerged to address limitations in how current computing paradigms handle increasingly complex mixed reality workflows. Traditional desktop and mobile interfaces force users to context-switch between the physical and digital realms, which adds cognitive overhead and makes interaction feel less natural. Industries ranging from manufacturing to healthcare struggle to overlay digital information onto physical workspaces in ways that feel intuitive and persistent. Spatial OS architectures address this through what is sometimes described as "reality-resident computing," in which digital tools and information exist as stable, manipulable objects within the user's physical environment rather than being confined to separate screens. This makes new collaborative workflows possible: multiple users can interact with the same spatial data simultaneously, seeing each other's gestures and modifications in real time. It also opens up new application models, such as spatial annotations that stay attached to physical objects over time, or computational agents that inhabit specific locations and respond to user presence.

Fully realized spatial operating systems remain largely in research and development, but early implementations are beginning to emerge from major technology platforms investing in mixed reality infrastructure. Prototype deployments in industrial settings include spatial maintenance guides that overlay repair instructions directly onto machinery, and collaborative design environments in which architects manipulate building models at full scale in physical space. The technology shows particular promise in training, where spatial persistence lets learners practice procedures with virtual equipment that stays consistently positioned in their workspace. As wearable hardware improves, particularly in field of view, resolution, and battery life, spatial operating systems are positioned to become the foundational layer for ambient computing environments. This trajectory aligns with a broader industry movement toward dissolving the boundary between physical and digital spaces. It suggests that spatial OS architectures may eventually complement or even supplant traditional windowing systems for certain categories of work, particularly those requiring spatial reasoning or physical-digital integration.

Related Organizations

Offers the AI Stack, which includes tools for hardware-aware model efficiency and architecture search.

A major contributor to Monado, the open-source XR runtime for Linux.

Produces VR headsets and actively partners with BCI companies (like OpenBCI and MyndPlay) to integrate brain-sensing into their hardware ecosystem.

Specializes in AR glasses and AI, producing the Rokid Max and Station.

Manufacturer of consumer AR glasses (Air, Light) that tether to phones or computing pucks.

Developing a standalone VR headset running a Linux-based VR window manager (SimulaOS).

VR productivity software company developing the 'Visor' hardware.

Creators of the Lynx R-1, a standalone Mixed Reality headset.

Develops desktop and large-format holographic displays that generate 45-100 views simultaneously for glasses-free 3D.

The world leader in mid-air haptics and hand tracking, formed from the merger of Ultrahaptics and Leap Motion.