Semantic Scene Understanding

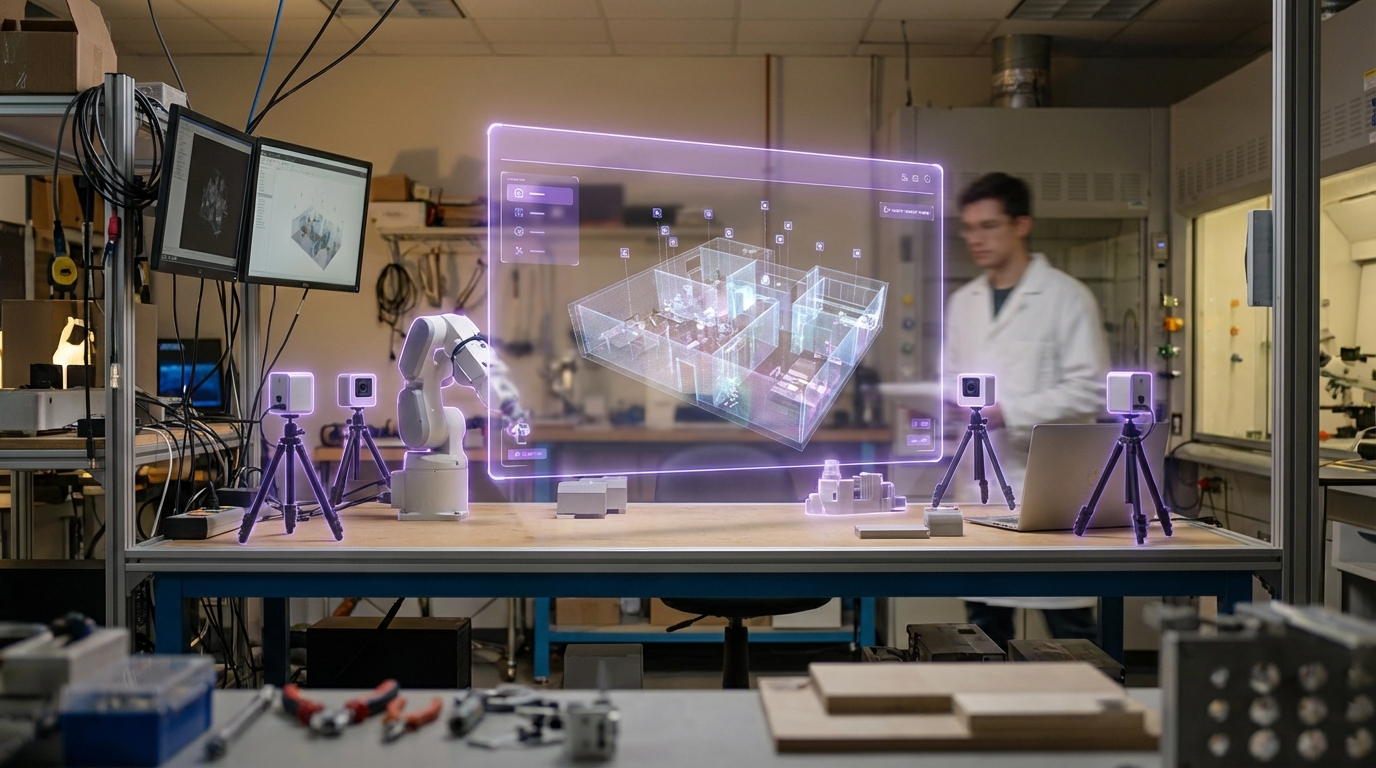

Semantic scene understanding represents a convergence of computer vision, machine learning, and spatial computing that enables systems to comprehend three-dimensional environments in real time. Unlike traditional object detection, which merely identifies isolated items in a frame, this technology constructs a holistic interpretation of a physical space by recognising rooms, surfaces, objects, and the functional relationships among them, including what researchers call "affordances": the actions an object makes possible. Such systems typically employ deep neural networks trained on large datasets of annotated scenes to perform localisation, segmentation, and classification simultaneously. Advanced implementations combine RGB cameras with depth sensors such as LiDAR to build persistent spatial maps that track not just which objects are present, but where they are, how they relate to one another, and what actions they support. For instance, the system does not simply detect a chair; it understands that the chair affords sitting, that it occupies a specific position relative to a table, and that it exists within a dining-room context.
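The chair example above can be made concrete with a small scene-graph sketch. This is an illustration under stated assumptions, not how any particular platform stores its maps: the `AFFORDANCES` table, the `SceneObject` and `SceneGraph` classes, the room labels, and the 1-metre "near" threshold are all hypothetical. In a real system the labels and positions would come from the segmentation network and the spatial map; here they are typed in by hand.

```python
from __future__ import annotations

import math
from dataclasses import dataclass, field

# Hypothetical label-to-affordance table; a deployed system would learn or
# curate this mapping rather than hard-code it.
AFFORDANCES: dict[str, set[str]] = {
    "chair": {"sit"},
    "table": {"place_object"},
    "door": {"open", "pass_through"},
}


@dataclass
class SceneObject:
    label: str                            # class predicted by the segmentation network
    position: tuple[float, float, float]  # centroid in the shared spatial map (metres)
    room: str                             # room type the object was assigned to

    @property
    def affordances(self) -> set[str]:
        # Look up the actions this object class supports (empty if unknown).
        return AFFORDANCES.get(self.label, set())


@dataclass
class SceneGraph:
    objects: list[SceneObject] = field(default_factory=list)

    def add(self, obj: SceneObject) -> None:
        self.objects.append(obj)

    def relations(self, near_threshold: float = 1.0) -> list[tuple[str, str, str]]:
        # Derive a simple pairwise "near" relation from Euclidean distance.
        rels = []
        for i, a in enumerate(self.objects):
            for b in self.objects[i + 1:]:
                if math.dist(a.position, b.position) <= near_threshold:
                    rels.append((a.label, "near", b.label))
        return rels

    def find(self, affordance: str, room: str | None = None) -> list[SceneObject]:
        # Return objects that support the requested action, optionally filtered by room.
        return [
            o for o in self.objects
            if affordance in o.affordances and (room is None or o.room == room)
        ]


graph = SceneGraph()
graph.add(SceneObject("chair", (1.0, 0.0, 0.5), "dining_room"))
graph.add(SceneObject("table", (1.6, 0.0, 0.5), "dining_room"))
graph.add(SceneObject("door", (4.0, 0.0, 0.0), "hallway"))

print([o.label for o in graph.find("sit", room="dining_room")])  # ['chair']
print(graph.relations())  # [('chair', 'near', 'table')]
```

Keeping affordances as a lookup keyed on the object class keeps the sketch short. A richer model might attach affordances per instance, since a stacked or broken chair may not afford sitting.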

The fundamental challenge this technology addresses is the brittleness of traditional augmented reality and spatial computing systems, which have historically struggled to respond intelligently to their surroundings. Without semantic understanding, AR applications can only anchor digital content to arbitrary points in space, which leads to inappropriate or unsafe placements: virtual objects floating through walls, instructional overlays appearing in contexts where they are irrelevant, or entertainment content activating in hazardous areas. By inferring the functional properties of spaces and objects, semantic scene understanding enables context-aware computing that adapts to its environment. This capability opens new possibilities for industrial training, where step-by-step instructions can automatically attach themselves to the specific machine a worker is operating, and for accessibility applications that identify navigable paths and potential obstacles for users with mobility constraints. The technology also supports dynamic safety systems that recognise hazardous zones (staircases, moving machinery, traffic areas) and automatically suppress distracting content or trigger warnings.
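The safety behaviour described above can be sketched as a simple rule layer sitting on top of the semantic labels. This is a minimal illustration, assuming that the scene model already reports labelled zones with a floor-plan centre and radius. The `HAZARD_LABELS` set, the `Zone` and `ContentItem` types, the content `kind` values, and the `filter_content` function are hypothetical names introduced only for this example. Real systems would use proper zone geometry and more nuanced policies.

```python
from __future__ import annotations

import math
from dataclasses import dataclass

# Hypothetical semantic labels this sketch treats as hazardous.
HAZARD_LABELS = {"staircase", "moving_machinery", "traffic_area"}


@dataclass(frozen=True)
class Zone:
    label: str                   # semantic label from the scene-understanding model
    center: tuple[float, float]  # floor-plan position in metres
    radius: float                # approximate extent of the zone in metres


@dataclass(frozen=True)
class ContentItem:
    name: str
    kind: str  # hypothetical categories: "entertainment", "instruction" or "warning"


def active_hazards(user_pos: tuple[float, float], zones: list[Zone]) -> list[Zone]:
    # Hazardous zones whose circular extent contains the user's current position.
    return [
        z for z in zones
        if z.label in HAZARD_LABELS and math.dist(user_pos, z.center) <= z.radius
    ]


def filter_content(
    user_pos: tuple[float, float], zones: list[Zone], content: list[ContentItem]
) -> tuple[list[ContentItem], list[str]]:
    hazards = active_hazards(user_pos, zones)
    if not hazards:
        # Outside every hazard: show all content, no warnings.
        return list(content), []
    # Inside a hazard: suppress entertainment, keep instructions, add warnings.
    allowed = [c for c in content if c.kind != "entertainment"]
    warnings = [f"Caution: {z.label}" for z in hazards]
    return allowed, warnings


zones = [Zone("staircase", (0.0, 0.0), 1.5), Zone("kitchen", (5.0, 0.0), 3.0)]
content = [ContentItem("game", "entertainment"), ContentItem("step_3", "instruction")]

allowed, warnings = filter_content((0.5, 0.5), zones, content)
print([c.name for c in allowed], warnings)  # ['step_3'] ['Caution: staircase']
```

The decision depends on what the space *is* (a staircase) rather than on raw coordinates alone, which is the context-awareness that anchoring to arbitrary points cannot provide. Standing in the kitchen instead, for example at `(5.0, 1.0)`, would return all content and no warnings.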

Early commercial deployments are emerging across manufacturing, logistics, and enterprise training environments, where the ability to automatically contextualise digital information within physical workflows offers measurable productivity gains. Research prototypes have demonstrated systems that can distinguish between dozens of room types and hundreds of object categories with high accuracy, even in previously unseen environments. As the technology matures, it is expected to become foundational infrastructure for the next generation of spatial computing platforms, enabling truly ambient interfaces that understand not just where users are, but what they are doing and what support they might need. This progression aligns with a broader industry movement toward pervasive computing environments that fade into the background, responding intelligently to human activity rather than demanding explicit interaction. The convergence of semantic scene understanding with other spatial technologies promises to transform how digital and physical realities interweave in everyday life.

Related Organizations

Spatial data company that integrated mobile LiDAR support into its capture app, democratizing the creation of real estate digital twins.

AR platform company that develops the Lightship ARDK and owns Scaniverse, a 3D scanning app leveraging LiDAR.

Developing 'Apple Intelligence', a personal intelligence system integrated into iOS/macOS that uses on-device context to mediate tasks and information.

Automotive and energy company developing custom AI silicon for autonomous driving.

AR headset manufacturer utilizing dynamic dimming and eye-tracking for optimized rendering.

US drone manufacturer specializing in autonomous flight and 3D scanning software.

Provider of synthetic training data for computer vision models that perform semantic segmentation.

Robotic arm manufacturer using vision and semantic understanding to manipulate objects without rigid programming.

Smart Data Capture platform that turns smart devices into ID scanners with local processing capabilities.