Embodied AI Agents

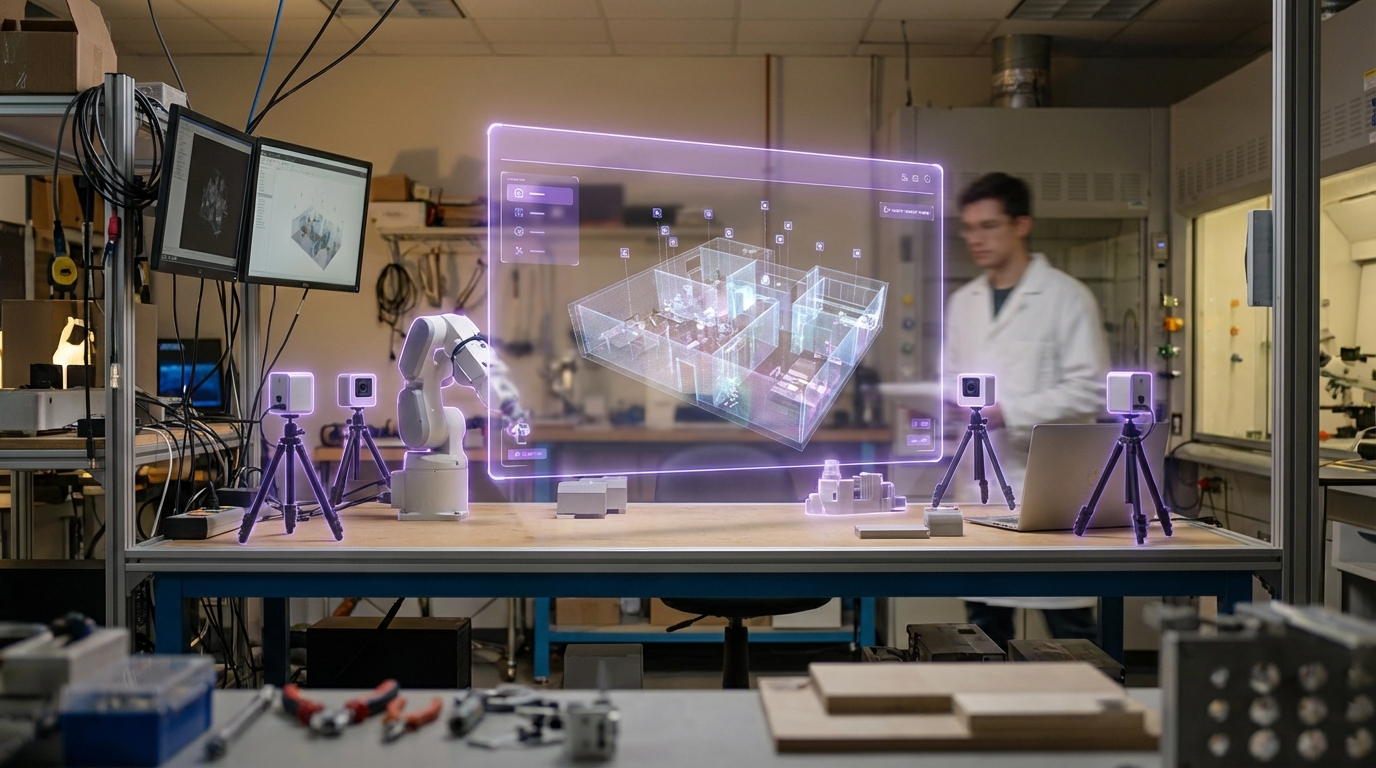

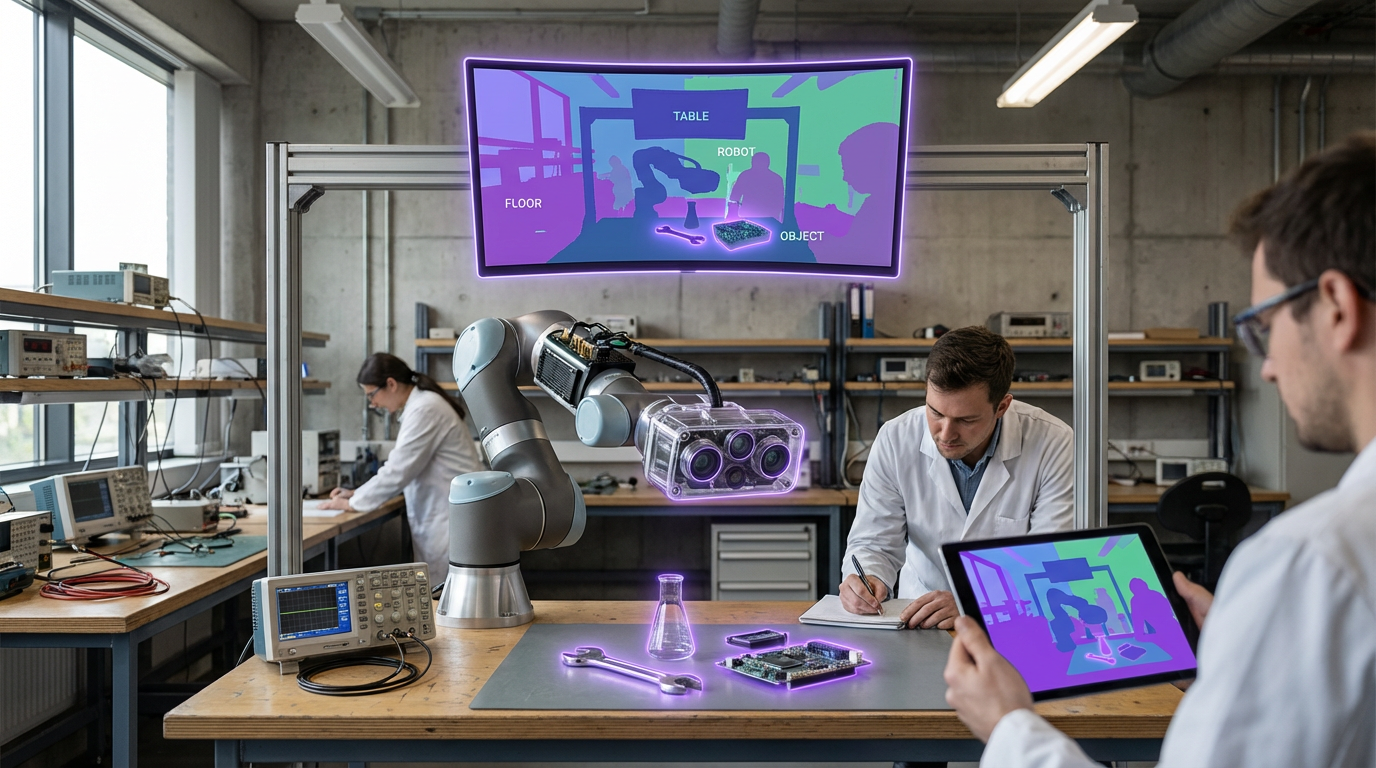

Embodied AI agents represent a significant evolution in artificial intelligence. They move beyond text-based chatbots and voice assistants toward autonomous virtual entities that can perceive, reason about, and act within three-dimensional environments. Traditional AI systems process information in abstract digital spaces. These agents instead have spatial awareness: they navigate physical or virtual worlds with an understanding of geometry, object relationships, and environmental constraints. The technology combines advances in computer vision, spatial mapping, natural language processing, and reinforcement learning. Together, these let agents perceive their surroundings through physical sensors or virtual cameras, build internal models of 3D spaces, and carry out physical or simulated actions. To do this, they rely on algorithms for path planning, obstacle avoidance, and task execution, and they keep a persistent memory of their environment and past interactions. Embodiment is the defining feature. These systems are designed to occupy and interact with space in ways that mirror physical presence, whether they appear as holographic figures in augmented reality, as avatars in virtual environments, or as digital representations overlaid on real-world spaces through mixed reality displays.
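
Path planning with obstacle avoidance is often framed as a search over a grid or graph derived from the agent's spatial map. The following is a minimal sketch, not any particular system's method. It assumes a hypothetical 2D occupancy grid, given as a list of strings where `#` marks an obstacle, and uses A* search with a Manhattan-distance heuristic over 4-connected moves. Real agents usually plan over richer 3D maps or navigation meshes, so the `astar` function and the grid format are illustrative assumptions only.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]  # (row, column)


def astar(grid: List[str], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Return a shortest 4-connected path from start to goal, or None.

    Hypothetical grid format: each string is a row; '#' is an obstacle,
    any other character is free space.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell: Cell) -> int:
        # Manhattan distance never overestimates for unit-cost 4-way moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    came_from: Dict[Cell, Cell] = {}
    g_cost: Dict[Cell, int] = {start: 0}

    while open_heap:
        _, g, cur = heapq.heappop(open_heap)
        if cur == goal:
            # Walk parent links back to the start to rebuild the path.
            path = [cur]
            while cur in came_from:
                cur = came_from[cur]
                path.append(cur)
            return path[::-1]
        if g > g_cost[cur]:
            continue  # stale entry: a cheaper route to cur was found later
        r, c = cur
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            # Skip cells outside the map or occupied by obstacles.
            if not (0 <= nr < rows and 0 <= nc < cols) or grid[nr][nc] == "#":
                continue
            new_g = g + 1
            if new_g < g_cost.get(nxt, float("inf")):
                g_cost[nxt] = new_g
                came_from[nxt] = cur
                heapq.heappush(open_heap, (new_g + h(nxt), new_g, nxt))
    return None  # goal unreachable


if __name__ == "__main__":
    room = ["....",
            ".##.",
            "...."]
    print(astar(room, (0, 0), (2, 3)))  # one of the 6-cell shortest paths
```

All moves cost 1 and the heuristic is admissible, so the first path that reaches the goal is a shortest one. When several shortest paths exist, heap tie-breaking decides which one is returned.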

The emergence of embodied AI agents addresses several critical challenges in human-computer interaction and spatial computing adoption. Traditional interfaces for navigating complex 3D environments or managing smart spaces often force users to master unintuitive controls or abstract menu systems. Embodied agents aim to remove this burden with natural, conversational interfaces. They can guide users through physical locations, demonstrate procedures through gesture and movement, and act as persistent assistants that understand spatial context. In enterprise settings, they enable more intuitive training simulations, where virtual instructors demonstrate equipment operation or safety procedures in realistic spatial contexts. In consumer applications, they change how people interact with smart homes and mixed reality entertainment, offering companions or guides that move through spaces, point out features, and respond to both spoken commands and physical gestures. The technology also enables new forms of remote collaboration, in which AI agents represent absent team members or facilitate virtual meetings while taking social dynamics and spatial arrangements into account.

Early deployments of embodied AI agents are emerging across multiple sectors. Examples range from retail environments, where virtual assistants help customers navigate stores and locate products, to healthcare settings, where AI companions guide patients through rehabilitation exercises. Research institutions are developing agents capable of increasingly complex spatial reasoning. Some systems can learn new environments through exploration and adapt their behaviour to user preferences and interaction patterns. The technology is particularly promising for accessibility, where embodied agents can act as persistent guides for people with visual or cognitive impairments, providing spatial orientation and navigation assistance in both physical and virtual spaces. As mixed reality headsets become more common and spatial computing platforms mature, industry observers expect agents that move smoothly between purely virtual existence and augmented reality manifestations, keeping their relationship with the user and their knowledge intact across different spatial contexts. This points toward a future where AI assistance becomes fundamentally spatial rather than screen-bound, with virtual entities that inhabit our environments as persistent, helpful presences rather than tools we must explicitly invoke.
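
One widely used technique for learning an unknown environment through exploration is frontier-based exploration. The agent repeatedly heads for the boundary between mapped free space and unexplored space. The sketch below is a minimal illustration of that general idea, not the method of any system mentioned here. It assumes a hypothetical integer occupancy grid (`-1` unknown, `0` free, `1` occupied) and uses breadth-first search to choose the frontier cell reachable in the fewest steps. The names `find_frontiers` and `nearest_frontier` are illustrative only.

```python
from collections import deque
from typing import List, Optional, Set, Tuple

Cell = Tuple[int, int]
UNKNOWN, FREE, OCCUPIED = -1, 0, 1  # hypothetical cell encoding


def neighbors(grid: List[List[int]], cell: Cell) -> List[Cell]:
    """In-bounds 4-connected neighbours of a cell."""
    r, c = cell
    candidates = [(r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)]
    return [(nr, nc) for nr, nc in candidates
            if 0 <= nr < len(grid) and 0 <= nc < len(grid[0])]


def find_frontiers(grid: List[List[int]]) -> Set[Cell]:
    """Frontier cells: known-free cells with at least one unknown neighbour."""
    return {(r, c)
            for r in range(len(grid)) for c in range(len(grid[0]))
            if grid[r][c] == FREE
            and any(grid[nr][nc] == UNKNOWN for nr, nc in neighbors(grid, (r, c)))}


def nearest_frontier(grid: List[List[int]], start: Cell) -> Optional[Cell]:
    """Breadth-first search through free cells to the closest frontier."""
    frontiers = find_frontiers(grid)
    queue = deque([start])
    seen = {start}
    while queue:
        cell = queue.popleft()
        if cell in frontiers:
            return cell
        for nxt in neighbors(grid, cell):
            if nxt not in seen and grid[nxt[0]][nxt[1]] == FREE:
                seen.add(nxt)
                queue.append(nxt)
    return None  # no reachable frontier: exploration is complete


if __name__ == "__main__":
    partial_map = [[0, 0, -1],
                   [0, 1, -1],
                   [0, 0, 0]]
    print(nearest_frontier(partial_map, (2, 0)))
```

In an exploration loop, the agent would move to the returned cell, update the grid with fresh sensor readings, and call `nearest_frontier` again, stopping once it returns `None`.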

Related Organizations

Creator of Semantic Scholar and various open-source models for scientific text processing.

Developers of the Gemini family of models, which are trained from the start to be multimodal across text, images, video, and audio.

A platform for creating AI characters with distinct personalities, memories, and contextual awareness for games and virtual worlds.

Human-Centered AI Institute, conducting research on the BEHAVIOR benchmark for embodied AI.

A research organization founded by Boston Dynamics, focusing on solving the most difficult problems in robotics and embodied AI.

Provides conversational AI for virtual worlds, enabling NPCs to have voice-based interactions with players.

AI robotics company building a universal AI brain for robots.

Developing general-purpose humanoid robots (Phoenix) powered by Carbon, their AI control system.

Creators of Moxie, a companion robot that uses machine learning to perceive, process, and respond to natural conversation and eye contact to help children with social development.