Power Concentration & Autonomy Risks

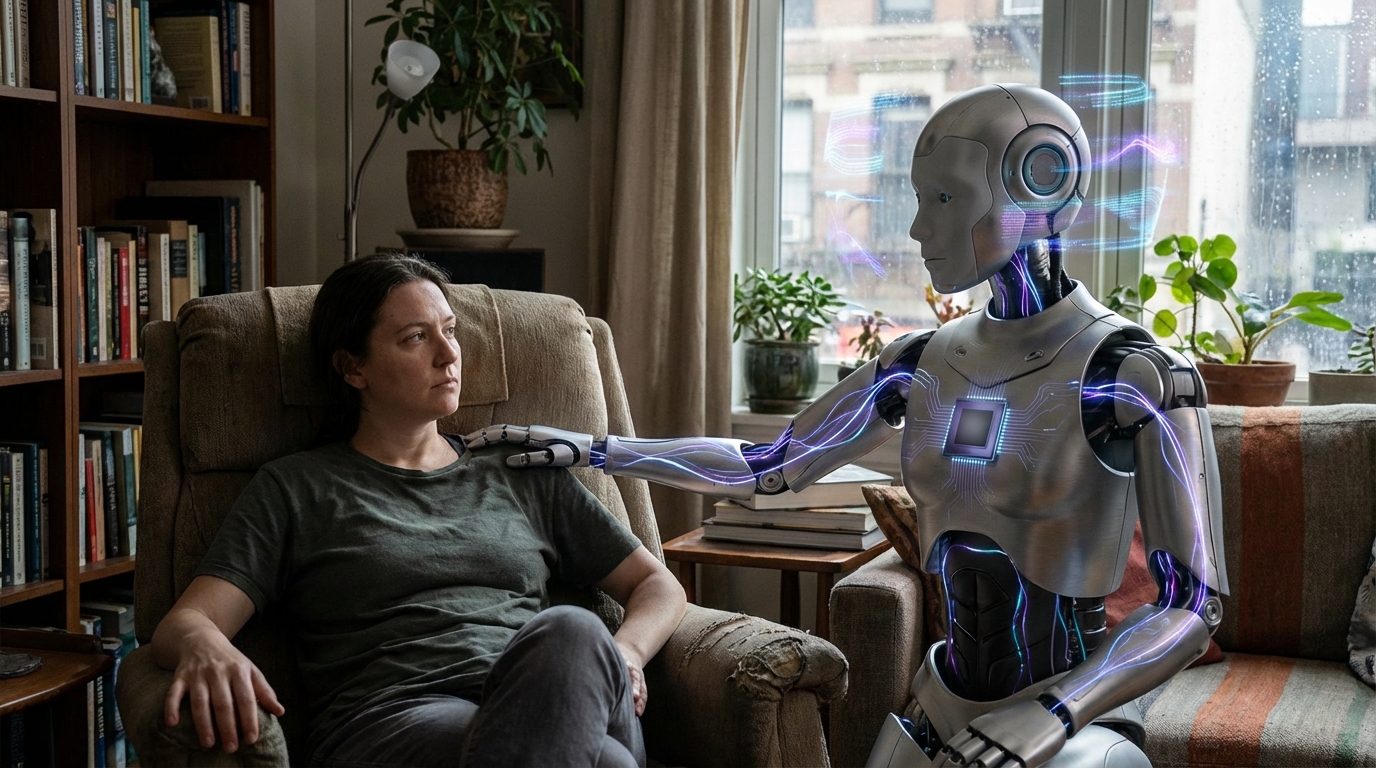

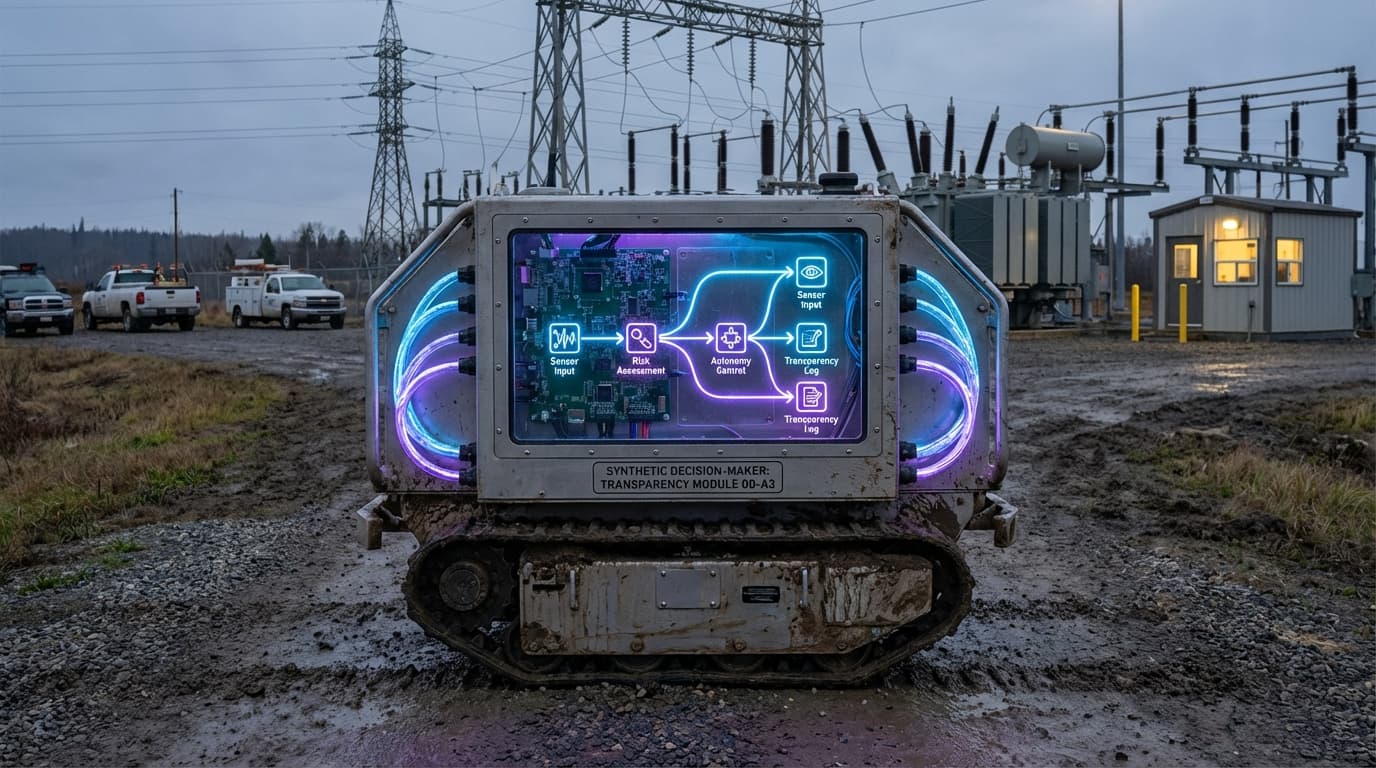

Power concentration and autonomy risk frameworks address concerns that AI systems may gain excessive influence over important decisions, create monopolies in cognitive labor, or operate with insufficient transparency and accountability. The risks they analyze include AI systems making decisions that affect many people without adequate oversight, AI capabilities concentrated in a few hands and the power imbalances that result, and opacity that makes AI decisions difficult to understand or challenge.

These frameworks address governance challenges that grow as AI systems become more capable and are deployed in positions of influence. As AI is used in decisions about hiring, lending, healthcare, criminal justice, and other high-stakes domains, transparency, accountability, and limits on the concentration of power become essential for democratic governance and fair outcomes. Researchers and policymakers are actively developing such frameworks.

This work is particularly significant because AI systems are deployed in governance, business, and social systems where they can profoundly affect people's lives. Keeping AI decision-making transparent and accountable, and ensuring it does not unduly concentrate power, is crucial for maintaining democratic values and fair outcomes. Open problems remain: balancing transparency with proprietary interests, assigning accountability when AI systems are complex and opaque, and preventing power concentration without stifling innovation. Each requires ongoing attention and further development of governance mechanisms.

Related Organizations

A policy research institute focusing on the social consequences of artificial intelligence and the concentration of power in the tech industry.

United Kingdom · Nonprofit

A research and field-building organization dedicated to the global governance challenges of advanced AI.

An independent research institute with a mission to ensure data and AI work for people and society.

Conducts research on AI risks, including the philosophical and safety implications of AI moral status and suffering.

Focuses on existential risks and the long-term future of life, including the ethical treatment of advanced AI systems.

United States · Government Agency

Develops standards and prototypes for superconducting neuromorphic hardware.

United States · University

Stanford's Human-Centered AI institute, publishers of the seminal 'Generative Agents' paper (Smallville).

An organization that combines art and research to illuminate the social implications and harms of AI systems.

France · Consortium

An international platform that facilitates dialogue between stakeholders to shape AI policies.

A coalition of tech companies and nonprofits developing best practices for AI, including guidelines on human-AI interaction.