Social Signal Processing

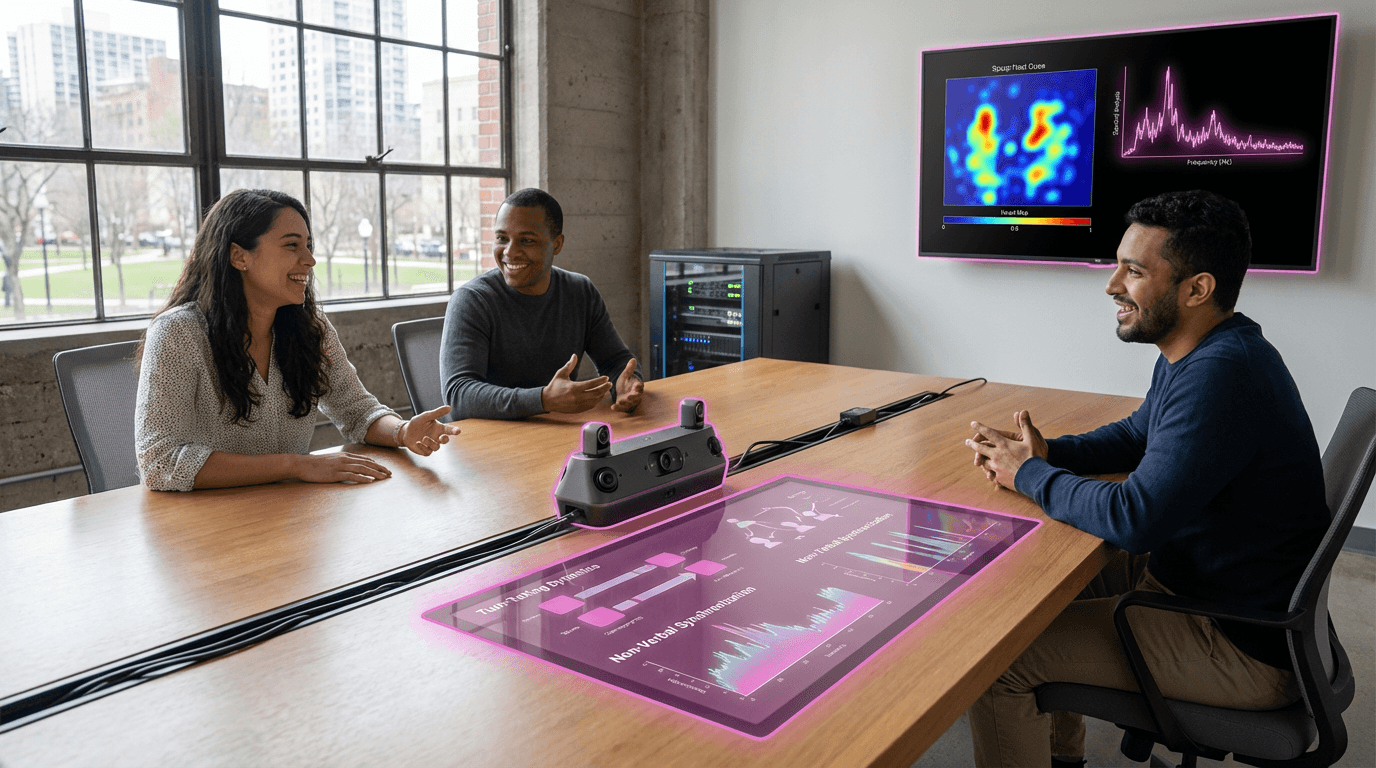

Social Signal Processing is a computational approach to understanding the subtle, often unconscious cues that shape human interaction. Unlike traditional communication analysis, which focuses primarily on verbal content, it examines the non-verbal behaviors that occur during social exchanges. Systems in this field use sensors such as microphones, cameras, wearable devices, and depth sensors to capture behavioral data: body orientation, speaking patterns, physical proximity, eye contact duration, vocal pitch and rhythm, and gestural synchronization. Machine learning algorithms then process these signals to identify patterns that reveal underlying social dynamics. For instance, turn-taking analysis can show who dominates a conversation, movement synchrony can serve as a measure of group cohesion, and patterns of attention and influence can point to emerging leaders. The computational framework draws on decades of social psychology research, translating findings from behavioral science into quantifiable metrics that can be analyzed at scale.
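As a rough illustration of the turn-taking analysis and movement synchrony mentioned above, the following minimal sketch computes each speaker's share of talk time, counts turns, and correlates two movement series. It assumes the audio has already been split into `(speaker, start, end)` segments and that movement has been reduced to equally sampled numeric series. The function names, the data layout, and the use of Pearson correlation as a synchrony measure are illustrative assumptions, not a description of any particular product or published method.

```python
from collections import defaultdict
from math import sqrt


def speaking_shares(segments):
    """Return each speaker's fraction of total talk time.

    `segments` is a list of (speaker, start_seconds, end_seconds) tuples,
    a hypothetical format standing in for speaker-diarization output.
    """
    totals = defaultdict(float)
    for speaker, start, end in segments:
        totals[speaker] += max(0.0, end - start)
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {speaker: t / grand_total for speaker, t in totals.items()}


def turn_counts(segments):
    """Count turns per speaker; a new turn starts when the speaker changes."""
    counts = defaultdict(int)
    previous = None
    for speaker, _, _ in sorted(segments, key=lambda seg: seg[1]):
        if speaker != previous:
            counts[speaker] += 1
        previous = speaker
    return dict(counts)


def movement_synchrony(series_a, series_b):
    """Pearson correlation of two equally sampled movement series.

    Used here as a simple stand-in for a synchrony measure (an assumption).
    Returns 0.0 when either series is constant.
    """
    if len(series_a) != len(series_b) or len(series_a) < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_a = sum(series_a) / len(series_a)
    mean_b = sum(series_b) / len(series_b)
    cov = sum((a - mean_a) * (b - mean_b) for a, b in zip(series_a, series_b))
    var_a = sum((a - mean_a) ** 2 for a in series_a)
    var_b = sum((b - mean_b) ** 2 for b in series_b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / sqrt(var_a * var_b)


# Hypothetical meeting data: who spoke, and when (in seconds).
meeting = [("ana", 0, 30), ("ben", 30, 40), ("ana", 40, 60), ("cy", 60, 70)]
shares = speaking_shares(meeting)
turns = turn_counts(meeting)
dominant_speaker = max(shares, key=shares.get)
```

A high share of talk time combined with many turns is only a coarse proxy for conversational dominance. A real system would also need to handle overlapping speech, interruptions, and time-lagged synchrony, which this sketch ignores.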

In organizational contexts, understanding group dynamics has traditionally relied on subjective observation, surveys, and self-reporting. These methods are time-consuming, prone to bias, and often fail to capture the unconscious behaviors that most strongly influence outcomes. Social Signal Processing addresses these limitations by providing more objective, real-time insights into team functioning. Research suggests the technology can predict meeting outcomes, identify communication breakdowns before they escalate, and reveal hidden power structures that may undermine collaboration. In hiring, early deployments indicate potential for reducing interviewer bias by focusing on behavioral compatibility rather than subjective impressions. The technology also enables new approaches to training and development, allowing organizations to give individuals feedback on communication patterns they may not recognize in themselves. Beyond corporate settings, applications extend to healthcare, where analyzing patient-provider interactions can improve care quality, and to education, where understanding classroom dynamics can enhance learning environments.

Current implementations of Social Signal Processing range from research prototypes in academic settings to pilot programs in organizations. Some companies are exploring its use in optimizing remote collaboration, where the absence of physical presence makes non-verbal cues harder to perceive naturally. The technology shows particular promise in hybrid work environments, where understanding engagement and connection across distributed teams has become critical. However, widespread adoption faces significant challenges around privacy, consent, and the ethical implications of quantifying human behavior. As workplace culture increasingly emphasizes psychological safety and inclusive practices, Social Signal Processing offers a data-driven complement to these efforts, potentially revealing unconscious biases and communication barriers that traditional methods miss. The trajectory of this technology points toward more nuanced, context-aware systems that can provide actionable insights while respecting individual privacy. That balance will likely define its role in shaping more effective, equitable human collaboration in the years ahead.

Related Organizations

Developing an Empathic Voice Interface (EVI) that detects and responds to human emotion.

The pioneer in Emotion AI, spun out of MIT Media Lab, now part of Smart Eye.

Home to the 'Bravemind' project, a clinical VR exposure therapy tool for treating PTSD in veterans.

Provides real-time emotional intelligence coaching for contact center agents.

Uses automated speech recognition and NLP to analyze tone of voice and match agents with customers based on behavioral profiles.

Develops FaceReader, a widely used software tool for automated analysis of facial expressions in scientific research.

A spin-off from TU Munich specializing in audio analysis and speech emotion recognition.

An enterprise AI company specializing in conversational service automation, using tonal analysis to detect customer sentiment and emotion.

Uses webcams to measure attention and emotion in response to video advertising.

Analytics for VR that tracks gaze and behavior to understand user engagement.