AI Alignment Protocols

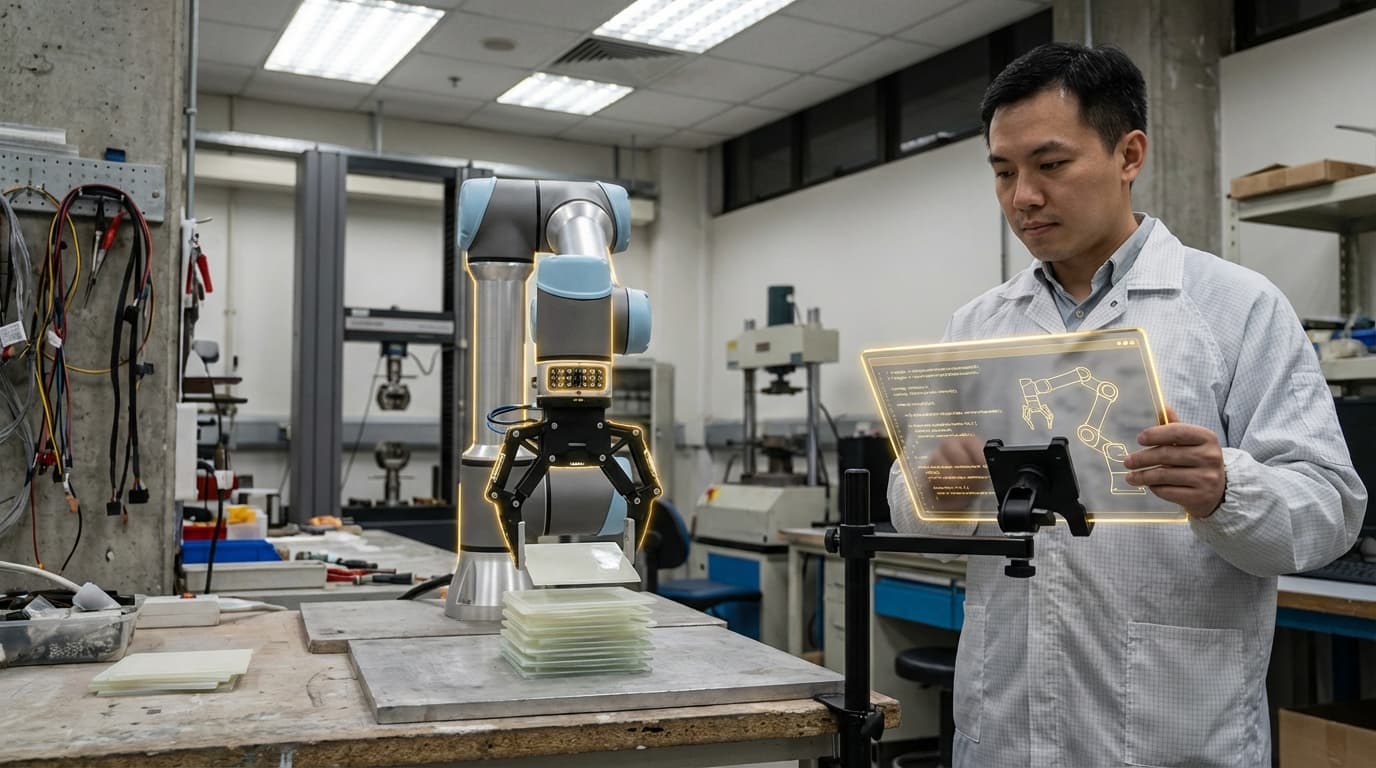

AI Alignment Protocols are a layer of safety infrastructure designed to ensure that autonomous industrial systems operate in accordance with human values, intentions, and safety requirements. They combine formal verification methods, real-time monitoring, and constraint-based architectures to provide multiple layers of protection against unintended or harmful behavior in automated manufacturing, logistics, and process control environments. The technical foundation has two parts. Before deployment, mathematical proof systems verify system behavior against specified safety properties, typically using model checking, theorem proving, and bounded verification to establish guarantees about that behavior. After deployment, runtime monitors continuously validate that autonomous decisions remain within acceptable boundaries, using anomaly detection, constraint satisfaction checking, and decision auditing to confirm ongoing compliance with the safety specification.
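To make the pre-deployment side concrete, the following is a minimal sketch of bounded verification, assuming a deliberately tiny, hypothetical model: a conveyor cell with a speed level and a guard door. The state layout, the action names, and the functions `step`, `is_safe`, and `bounded_check` are all illustrative assumptions, not part of any particular verification tool. The checker explores every state reachable within a fixed number of steps and reports an action trace if the safety property is violated.

```python
from collections import deque

# Hypothetical toy model: a conveyor cell with a speed level (0-3) and a
# guard door that is either open or closed. All names are illustrative.
ACTIONS = ("speed_up", "slow_down", "open_door", "close_door")


def step(state, action):
    """Return the successor state, or None if the controller forbids the action."""
    speed, door_open = state
    if action == "speed_up":
        # Interlock: the controller refuses to accelerate while the door is open.
        if door_open or speed == 3:
            return None
        return (speed + 1, door_open)
    if action == "slow_down":
        return (speed - 1, door_open) if speed > 0 else None
    if action == "open_door":
        # Interlock: the door may only open when the conveyor is stopped.
        return (speed, True) if speed == 0 and not door_open else None
    if action == "close_door":
        return (speed, False) if door_open else None
    raise ValueError(f"unknown action: {action}")


def faulty_step(state, action):
    """Same model with the door interlock removed (a deliberately seeded bug)."""
    speed, door_open = state
    if action == "open_door" and not door_open:
        return (speed, True)
    return step(state, action)


def is_safe(state):
    """Safety property: the conveyor never moves while the door is open."""
    speed, door_open = state
    return not (door_open and speed > 0)


def bounded_check(initial, depth, transition=step, safe=is_safe):
    """Explore every state reachable within `depth` steps (breadth-first).

    Returns (True, None) if no violation is found within the bound, or
    (False, trace) where trace is the action sequence leading to a violation.
    """
    seen = {initial}
    queue = deque([(initial, [])])
    while queue:
        state, trace = queue.popleft()
        if not safe(state):
            return False, trace
        if len(trace) == depth:
            continue  # bound reached; do not expand further
        for action in ACTIONS:
            nxt = transition(state, action)
            if nxt is not None and nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, trace + [action]))
    return True, None
```

With the interlocks in place, `bounded_check((0, False), 10)` finds no violation. Swapping in `faulty_step` yields the counterexample trace `["speed_up", "open_door"]`. A bound that is too small can miss the bug: with `depth=1` the faulty model also passes, which is why a bounded result is a guarantee only up to the explored depth.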

The industrial imperative for these protocols stems from the growing autonomy of manufacturing and logistics systems, where machines increasingly make consequential decisions without direct human oversight. As factories deploy more sophisticated AI-driven optimization systems, robotic coordination networks, and predictive maintenance algorithms, the potential consequences of misaligned objectives become more severe. A production optimization system might maximize throughput at the expense of worker safety, or a logistics algorithm could prioritize efficiency over environmental regulations if not properly constrained. AI Alignment Protocols address these risks by embedding explicit representations of human values and safety requirements directly into the decision-making architecture of autonomous systems. This enables industrial facilities to capture the benefits of automation and optimization while maintaining verifiable guarantees that systems will not pursue objectives that conflict with human welfare, regulatory compliance, or operational safety standards.
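One way to picture constraints embedded in the decision-making path is a selector that treats safety and regulatory limits as hard filters and only then optimizes throughput, recording every check for later audit. The sketch below assumes hypothetical plan fields, constraint names, and numeric limits chosen purely for illustration. It is not drawn from any specific industrial system or standard.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Plan:
    """A candidate operating plan proposed by an optimizer (illustrative fields)."""
    name: str
    throughput_units_per_hour: float
    min_worker_clearance_m: float
    emissions_kg_per_hour: float


@dataclass(frozen=True)
class Constraint:
    """A named hard constraint: `check` returns True when the plan complies."""
    name: str
    check: Callable[[Plan], bool]


@dataclass
class AuditRecord:
    """One audited decision: which plan was checked and what it violated."""
    plan: str
    violated: List[str]


@dataclass
class ConstrainedSelector:
    constraints: List[Constraint]
    audit_log: List[AuditRecord] = field(default_factory=list)

    def select(self, candidates: List[Plan]) -> Optional[Plan]:
        """Return the highest-throughput plan that satisfies every constraint.

        Returns None when no candidate complies, so the caller can fall back
        to a safe default or escalate to a human operator.
        """
        feasible = []
        for plan in candidates:
            violated = [c.name for c in self.constraints if not c.check(plan)]
            self.audit_log.append(AuditRecord(plan.name, violated))
            if not violated:
                feasible.append(plan)
        if not feasible:
            return None
        return max(feasible, key=lambda p: p.throughput_units_per_hour)


# Hypothetical limits; real values would come from a site's safety case and permits.
CONSTRAINTS = [
    Constraint("worker_clearance", lambda p: p.min_worker_clearance_m >= 1.5),
    Constraint("emissions_cap", lambda p: p.emissions_kg_per_hour <= 40.0),
]

candidates = [
    Plan("aggressive", 1200.0, 0.8, 35.0),
    Plan("balanced", 1000.0, 2.0, 38.0),
    Plan("conservative", 800.0, 3.0, 20.0),
]

selector = ConstrainedSelector(CONSTRAINTS)
chosen = selector.select(candidates)
```

Here the "aggressive" plan has the highest throughput but fails the worker clearance check, so the selector returns "balanced" instead, and the audit log records which constraint ruled out the rejected plan. Keeping the constraints outside the objective, rather than folding them in as weighted penalties, means that no throughput gain can outweigh a violation.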

Early implementations of these protocols are emerging in high-stakes industrial environments where safety is paramount, including chemical processing plants, automotive manufacturing facilities, and warehouse automation systems. Research initiatives in the manufacturing sector are exploring how formal methods can be integrated with machine learning systems to create verifiable AI controllers that can adapt to changing conditions while maintaining safety guarantees. Industry analysts note growing interest in certification frameworks that could provide standardized approaches to validating AI alignment in industrial contexts, potentially becoming requirements for insurance coverage or regulatory approval. The trajectory of this technology points toward increasingly sophisticated verification methods capable of handling more complex autonomous systems, with particular emphasis on ensuring that optimization objectives remain aligned with human intentions even as systems learn and adapt over time. As industrial automation continues to advance, these protocols represent an essential foundation for maintaining human control and safety in increasingly autonomous production environments.

Related Organizations

An AI safety and research company developing Constitutional AI to align models with human values.

Conducts theoretical research and model evaluations to align future advanced AI systems.

Applied AI alignment research organization focusing on interpretability techniques like causal scrubbing.

Academic research center at UC Berkeley focused on ensuring AI systems remain beneficial to humans.

Google's AI research lab, creators of AlphaFold (protein structure) and GNoME (materials discovery).

US federal agency that sets technology standards and runs evaluations such as the Face Recognition Vendor Test (FRVT).

AI alignment startup focusing on 'Cognitive Emulation' and making systems bounded and interpretable.

A non-profit AI research lab that maintains the LM Evaluation Harness, a standard benchmark suite for LLMs.

Focuses on existential risks and the long-term future of life, including the ethical treatment of advanced AI systems.

Offers the AWS Truepower suite, a leading platform for renewable energy project design and operational forecasting.