Explainable AI Tooling

Explainable AI tooling addresses a fundamental challenge in deploying artificial intelligence: understanding how complex machine learning models arrive at their decisions. These tools provide structured frameworks for interpreting model outputs through several analytical lenses, including feature importance analysis, inspection of attention mechanisms, and decision pathway visualisation. The core technical approach involves building intermediate representation layers that translate opaque neural network activations into human-interpretable concepts while remaining faithful to the underlying model's actual reasoning process. Advanced implementations incorporate uncertainty quantification methods that reveal not only what the AI decided but also how confident it is, and that identify edge cases where predictions may be unreliable. Counterfactual generation lets operators explore "what-if" scenarios and see how different input conditions would alter the AI's recommendations.
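Two of the techniques named above, feature importance analysis and counterfactual generation, can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: `toy_model`, its three sensor inputs, its weights, and its threshold are hypothetical stand-ins, and permutation importance is only one of several ways to estimate feature importance.

```python
import random


def toy_model(row):
    """Hypothetical stand-in model: flags a fault (1) when a weighted score exceeds 1.0."""
    temperature, vibration, humidity = row
    score = 0.02 * temperature + 0.5 * vibration + 0.0 * humidity  # humidity is ignored
    return 1 if score > 1.0 else 0


def accuracy(model, rows, labels):
    """Fraction of rows for which the model's prediction matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(model, rows, labels, n_features, seed=0):
    """Accuracy lost when one feature column is shuffled, computed per feature."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)
        shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(baseline - accuracy(model, shuffled, labels))
    return importances


def counterfactual(model, row, feature, step, max_steps=100):
    """Nudge a single feature until the prediction flips; return the new row or None."""
    original = model(row)
    candidate = list(row)
    for _ in range(max_steps):
        candidate[feature] += step
        if model(tuple(candidate)) != original:
            return tuple(candidate)
    return None


# Synthetic data (an assumption): labels are the model's own outputs, so baseline accuracy is 1.0.
rng = random.Random(42)
rows = [(rng.uniform(20, 80), rng.uniform(0, 2), rng.uniform(10, 90)) for _ in range(200)]
labels = [toy_model(r) for r in rows]

importances = permutation_importance(toy_model, rows, labels, n_features=3)
flipped = counterfactual(toy_model, (40.0, 1.5, 50.0), feature=1, step=-0.1)
print("importance per feature:", importances)
print("counterfactual input:", flipped)
```

Because the toy model ignores humidity, shuffling that column cannot change any prediction, so its importance is zero. The counterfactual search answers a "what-if" question of the form "how far would vibration need to fall before the fault flag clears?" while holding the other inputs fixed.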

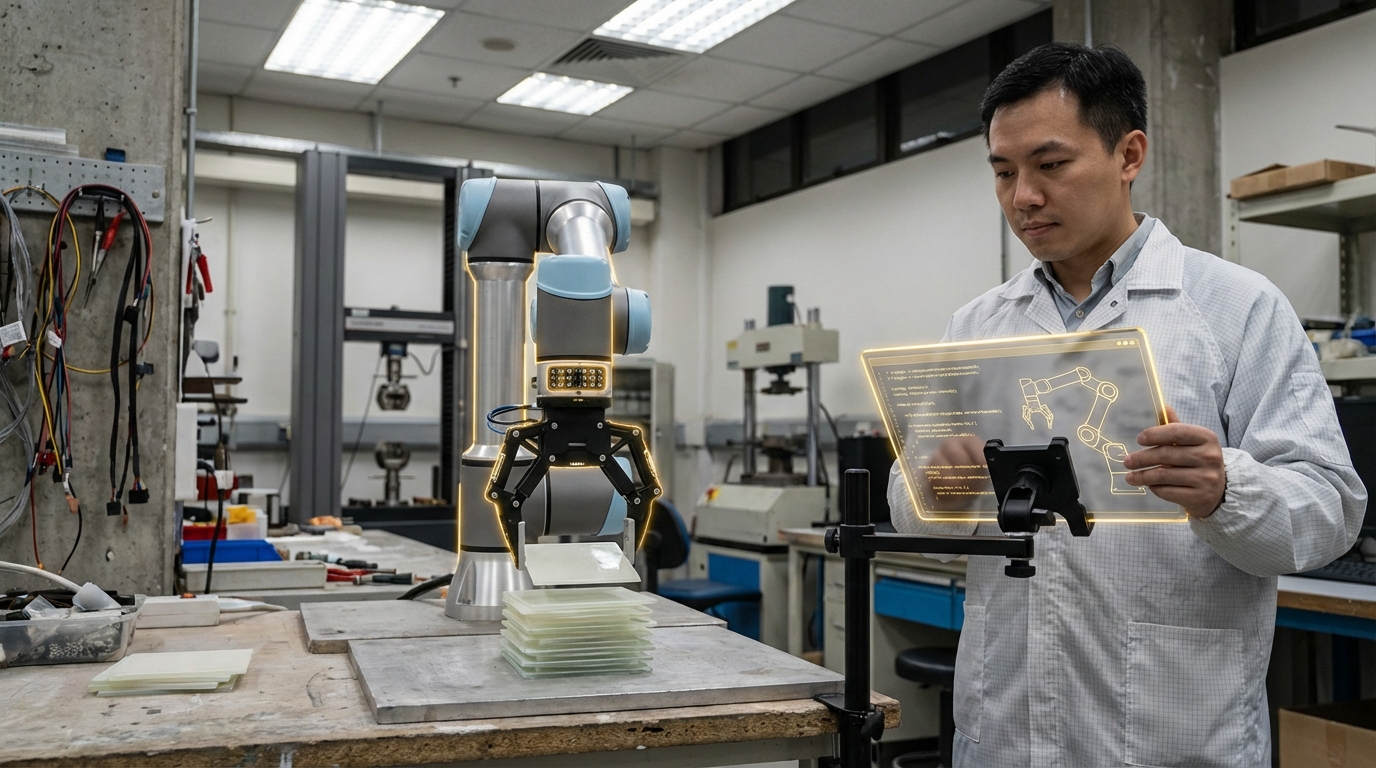

In industrial settings shaped by the Fourth Industrial Revolution, the opacity of AI decision-making has become a significant barrier to adoption in safety-critical applications. Manufacturing facilities, autonomous logistics systems, and predictive maintenance operations need not only accurate predictions but also clear justification for them, in order to satisfy regulatory requirements, maintain operator trust, and enable effective human-AI collaboration. Explainability tooling addresses this need by providing audit trails that document the reasoning behind automated decisions, which supports compliance with emerging AI governance frameworks and industry standards. These tools also aid debugging and model improvement: by revealing when a model relies on spurious correlations or exhibits unexpected biases, they let engineers refine training data and model architectures. They also allow domain experts without deep machine learning expertise to confirm that an AI system is basing its decisions on legitimate operational factors rather than dataset artefacts.
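One way to picture such an audit trail is as an append-only list of explanation records, each linked to the previous one by a hash so that later edits become detectable. The sketch below is only an illustration under stated assumptions: the `ExplanationRecord` fields, the helper names, and the hash-chaining design are hypothetical and are not drawn from any particular product or governance standard.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class ExplanationRecord:
    """Hypothetical audit entry: one automated decision plus its explanation."""
    model_version: str
    inputs: dict
    prediction: str
    top_features: list  # [feature name, attribution] pairs
    previous_hash: str  # digest of the preceding record, "" for the first one

    def digest(self) -> str:
        # Serialise with sorted keys so the same record always hashes the same way.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(trail, **fields):
    """Append a record that is chained to the current end of the trail."""
    previous_hash = trail[-1].digest() if trail else ""
    record = ExplanationRecord(previous_hash=previous_hash, **fields)
    trail.append(record)
    return record


def verify_trail(trail):
    """Return True if every record still points at the digest of its predecessor."""
    expected = ""
    for record in trail:
        if record.previous_hash != expected:
            return False
        expected = record.digest()
    return True


trail = []
append_record(
    trail,
    model_version="demo-0.1",
    inputs={"vibration": 1.5, "temperature": 40.0},
    prediction="inspect",
    top_features=[["vibration", 0.75], ["temperature", 0.8]],
)
append_record(
    trail,
    model_version="demo-0.1",
    inputs={"vibration": 0.2, "temperature": 35.0},
    prediction="ok",
    top_features=[["temperature", 0.7], ["vibration", 0.1]],
)
print("trail intact:", verify_trail(trail))

# Editing an earlier record breaks the chain for everything recorded after it.
tampered = [replace(trail[0], prediction="ok"), trail[1]]
print("tampered trail intact:", verify_trail(tampered))
```

The chaining is a design choice rather than a requirement: a plain log would document the same reasoning, but linking records gives an auditor a cheap check that the documented explanations have not been altered after the fact.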

Research institutions and industrial technology providers have developed various explainability frameworks, with early deployments appearing in sectors where regulatory scrutiny is highest, such as pharmaceutical manufacturing and aerospace quality control. These implementations typically integrate with existing industrial control systems, providing real-time explanations alongside AI recommendations through operator interfaces. Industry analysts note growing adoption in predictive maintenance applications, where explaining why a system flagged a particular component for inspection helps maintenance teams prioritise interventions and builds confidence in automated monitoring. The trajectory of this technology points toward increasingly sophisticated governance capabilities, including automated compliance checking and explanation quality metrics that verify whether generated rationales meet industry-specific interpretability standards. As cyber-physical systems become more autonomous, explainability tooling will likely evolve from an optional enhancement into a mandatory component of industrial AI deployments, ensuring that the benefits of automation can be realised without sacrificing transparency or accountability.
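An explanation quality metric of the kind mentioned above can be sketched as a deletion test: features are reset to a baseline value in the order the explanation ranks them, and a faithful ranking should move the model's score toward the baseline faster than a misleading one. The linear scoring function, the weights, the inputs, and the "deletion area" summary below are all illustrative assumptions, not an established industry standard.

```python
def linear_score(x, weights):
    """Hypothetical model: a plain weighted sum of the inputs."""
    return sum(w * v for w, v in zip(weights, x))


def linear_attributions(x, weights, baseline):
    """Exact per-feature contribution of a linear model relative to a baseline input."""
    return [w * (v - b) for w, v, b in zip(weights, x, baseline)]


def deletion_curve(x, weights, baseline, attribution):
    """Reset features to baseline in order of decreasing |attribution|, recording the score."""
    order = sorted(range(len(x)), key=lambda i: abs(attribution[i]), reverse=True)
    current = list(x)
    scores = [linear_score(current, weights)]
    for i in order:
        current[i] = baseline[i]
        scores.append(linear_score(current, weights))
    return scores


def deletion_area(scores):
    """Sum of remaining gaps to the final (all-baseline) score; smaller means more faithful."""
    final = scores[-1]
    return sum(abs(s - final) for s in scores)


weights = [0.5, 2.0, 0.25]
x = [4.0, 3.0, 4.0]
baseline = [0.0, 0.0, 0.0]

faithful = linear_attributions(x, weights, baseline)
misleading = [1.0 / abs(a) for a in faithful]  # deliberately inverts the true ranking

faithful_area = deletion_area(deletion_curve(x, weights, baseline, faithful))
misleading_area = deletion_area(deletion_curve(x, weights, baseline, misleading))
print("faithful:", faithful_area, "misleading:", misleading_area)
```

A compliance check built on this idea might compare the deletion area of a generated rationale against that of random rankings and flag explanations that do no better than chance; where to set such a threshold is a policy decision that this sketch does not address.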

Related Organizations

A model monitoring and observability platform that includes specific tools for evaluating LLM accuracy and hallucination.

A research and development agency of the United States Department of Defense.

Provides Model Performance Management (MPM) to monitor, explain, and analyze AI models in production.

An ML observability platform that helps teams detect issues, troubleshoot, and improve model performance in production.

Provides watsonx.governance for managing AI risk and compliance.

AI observability platform for monitoring data health and model performance.

Creators of CausalImpact, a package for causal inference using Bayesian structural time-series.

Enterprise AI platform offering automated machine learning including model selection and architecture optimization.

Provides Driverless AI, an AutoML platform that includes architecture search and hyperparameter tuning.