Semantic NeRF Editing

Semantic NeRF editing layers segmentation, CLIP-like embedding spaces, and diffusion priors onto neural radiance fields so creators can select and modify volumetric regions with text or brush inputs. The system maps objects within the NeRF to semantic labels, applies edits directly in the latent field, and re-optimizes only the affected rays, preserving lighting continuity. Users can swap materials, remove clutter, or animate props without exporting to mesh-based DCC tools.
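The selection step described above can be sketched in a few lines. This is a minimal illustration, not any particular tool's implementation: it assumes each sample along a ray already carries a semantic feature vector in the same embedding space as the text query, and that a ray counts as "affected" when any of its samples is similar enough to the query. The names `select_affected_rays`, `ray_features`, and `threshold` are hypothetical, and the 3-dimensional vectors stand in for real CLIP-like embeddings.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def select_affected_rays(ray_features, query_embedding, threshold=0.8):
    """Return indices of rays that have at least one sample matching the query.

    ray_features: list of rays, each a list of per-sample feature vectors
                  (hypothetical layout, chosen for illustration).
    """
    affected = []
    for ray_index, samples in enumerate(ray_features):
        if any(cosine(f, query_embedding) >= threshold for f in samples):
            affected.append(ray_index)
    return affected


# Toy scene: 3 rays, 2 samples each, 3-dimensional features.
ray_features = [
    [[1.0, 0.0, 0.0], [0.9, 0.1, 0.0]],  # ray 0: passes through the queried region
    [[0.0, 1.0, 0.0], [0.0, 0.9, 0.1]],  # ray 1: unrelated content
    [[0.0, 0.0, 1.0], [0.7, 0.7, 0.0]],  # ray 2: only a partial match
]
sky_query = [1.0, 0.0, 0.0]  # stand-in for the embedding of a text prompt

affected = select_affected_rays(ray_features, sky_query, threshold=0.8)
print(affected)
```

Only the rays returned here would be re-optimized after an edit; the rest of the field is left untouched, which is what keeps the cost of an edit proportional to the size of the selected region. Lowering `threshold` widens the selection, so in practice it would be a user-facing control.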

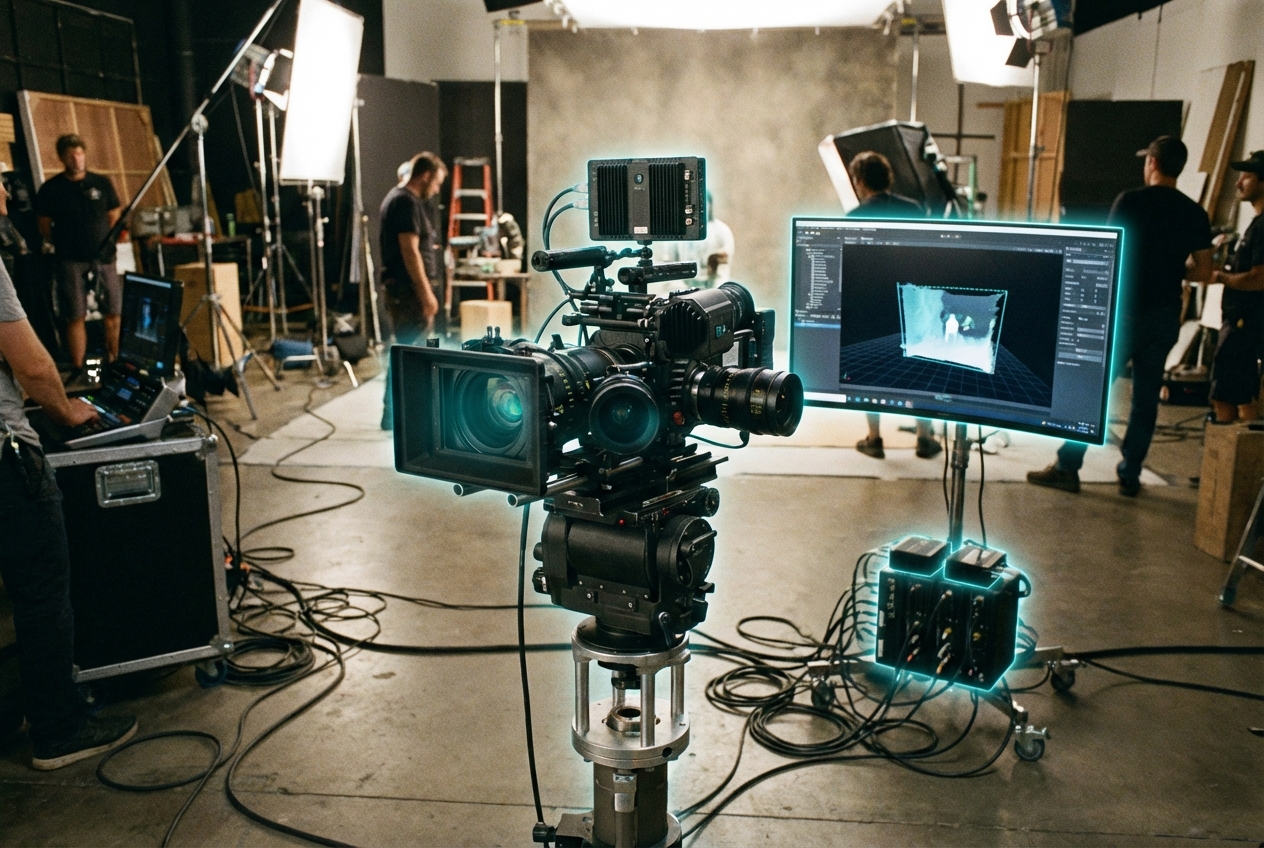

VFX houses and indie creators alike use these tools for rapid set dressing, continuity fixes, and client previews. A director can capture a location at midday, then prompt the NeRF to “turn sky overcast” or “age this storefront” and see the changes live inside Unreal Engine. Interactive experiences let audiences reshape volumetric worlds in real time, opening participatory storytelling formats.

Challenges include edit provenance (what changed, and when) and compatibility with existing pipelines. Vendors are adding version control, USD export, and safety locks to prevent accidental edits. As NeRF standards mature and creative suites integrate semantic brushes alongside traditional sculpting, prompt-driven volumetric editing is likely to become as common as color grading for spatial media.
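One way to picture edit provenance and safety locks together is an append-only log that records each edit and refuses edits to locked regions. The sketch below is an assumption for illustration only, not a description of any vendor's system: `EditRecord`, `EditLog`, and the region labels are hypothetical names, and a real pipeline would also persist the log and tie each record to a scene version.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class EditRecord:
    """One immutable provenance entry: what changed, by whom, and when."""
    region_label: str
    prompt: str
    author: str
    timestamp: str  # ISO 8601, UTC


@dataclass
class EditLog:
    """Append-only edit history with per-region safety locks (hypothetical design)."""
    locked_labels: set = field(default_factory=set)
    records: list = field(default_factory=list)

    def lock(self, label):
        self.locked_labels.add(label)

    def apply(self, region_label, prompt, author):
        # Refuse the edit before anything is recorded if the region is locked.
        if region_label in self.locked_labels:
            raise PermissionError(f"region '{region_label}' is locked")
        record = EditRecord(
            region_label, prompt, author,
            datetime.now(timezone.utc).isoformat(),
        )
        self.records.append(record)
        return record

    def history(self, region_label):
        """All recorded edits for one semantic region, oldest first."""
        return [r for r in self.records if r.region_label == region_label]


log = EditLog()
log.lock("hero_prop")
log.apply("sky", "turn sky overcast", author="director")

try:
    log.apply("hero_prop", "remove", author="intern")
    blocked = False
except PermissionError:
    blocked = True
```

Keeping records immutable and keyed by semantic label means the question "what changed, and when" can be answered per object rather than per file, which is the granularity a prompt-driven editor works at.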

Related Organizations

Authors of the original NeRF paper and developers of MultiNeRF and immersive view technologies for Maps.

Creators of Dream Machine, a high-quality video generation model, and 3D capture technology.

Home to the BAIR lab and researchers like Angjoo Kanazawa who pioneered NeRF technologies.

Specializes in NeRF workflows for Virtual Production, allowing editing of volumetric backgrounds.

The French National Institute for Research in Digital Science and Technology, heavily involved in AI research and the development of scikit-learn.

AR platform company that develops the Lightship ARDK and owns Scaniverse, a 3D scanning app leveraging LiDAR.

Applied AI research company shaping the next era of art, entertainment and human creativity.

Developers of Unreal Engine 5, which features Lumen, a fully dynamic global illumination and reflection system designed for next-gen consoles and PC.