Generative Physics Engines

Traditional physics engines in virtual environments rely on pre-programmed rules and rigid mathematical models to simulate how objects interact, move, and respond to forces. While effective for many applications, these systems require extensive manual configuration of material properties, collision behaviors, and environmental parameters. Generative physics engines shift this paradigm by employing machine learning models that infer, predict, and adapt physical behaviors in real time. Rather than relying solely on classical physics equations, these systems use neural networks trained on large datasets of real-world physical interactions to generate plausible (or deliberately implausible) simulations. The underlying architecture typically combines physics-informed neural networks with generative models that can interpolate between different physical regimes, allowing the system to simulate materials and dynamics it was never explicitly programmed to handle. The engine can therefore make educated predictions about how novel objects or unusual scenarios should behave, filling gaps in its knowledge through learned patterns rather than hard-coded rules.
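One simplified way to picture the combination of classical equations and learned behavior is a hybrid integrator: a conventional time step supplies the known physics, and a learned model adds a correction for effects nobody wrote down by hand. The sketch below is illustrative only. It is a residual-correction scheme rather than a full physics-informed neural network, and `toy_residual`, `DRAG`, and the other names are hypothetical stand-ins; a real system would replace `toy_residual` with a trained network.

```python
from typing import Callable, Tuple

# State of a point mass: (height in m, vertical velocity in m/s).
State = Tuple[float, float]

GRAVITY = -9.81  # m/s^2, the hard-coded classical part
DRAG = 0.5       # 1/s, hypothetical coefficient used by the stand-in model


def classical_step(state: State, dt: float) -> State:
    """Semi-implicit Euler step for free fall under gravity alone."""
    y, v = state
    v_next = v + GRAVITY * dt
    y_next = y + v_next * dt
    return (y_next, v_next)


def toy_residual(state: State) -> float:
    """Stand-in for a trained network (assumption, not a real model).

    Returns an acceleration correction in m/s^2. Here it is a hand-written
    linear drag term; a learned model would infer this from data.
    """
    _, v = state
    return -DRAG * v


def hybrid_step(
    state: State,
    dt: float,
    residual: Callable[[State], float] = toy_residual,
) -> State:
    """Classical acceleration plus a learned correction, then integrate."""
    y, v = state
    acceleration = GRAVITY + residual(state)
    v_next = v + acceleration * dt
    y_next = y + v_next * dt
    return (y_next, v_next)


def simulate(state: State, dt: float, steps: int) -> State:
    """Advance the hybrid model a fixed number of steps."""
    for _ in range(steps):
        state = hybrid_step(state, dt)
    return state
```

With the residual set to zero, `hybrid_step` reduces to `classical_step`. With the drag stand-in, a long run such as `simulate((100.0, 0.0), 0.01, 2000)` settles near the terminal velocity `GRAVITY / DRAG`, behavior the classical step alone never produces.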

For developers of immersive experiences, this technology addresses a longstanding challenge: the enormous effort required to create convincing virtual worlds with rich, responsive physics. Traditional engines demand that every material property be manually specified and every interaction carefully tuned, creating bottlenecks in content creation and limiting the spontaneity of virtual environments. Generative physics engines can reduce this burden by inferring appropriate behaviors from visual and contextual cues. More significantly, they enable new categories of experience that blend realism with creative expression. A virtual environment might shift seamlessly from obeying conventional physics to adopting dream-like or stylized dynamics based on narrative context, user emotion, or artistic intent. This capability is particularly valuable for therapeutic applications, artistic installations, and experimental storytelling, where the malleability of physical laws becomes a creative tool rather than a constraint.

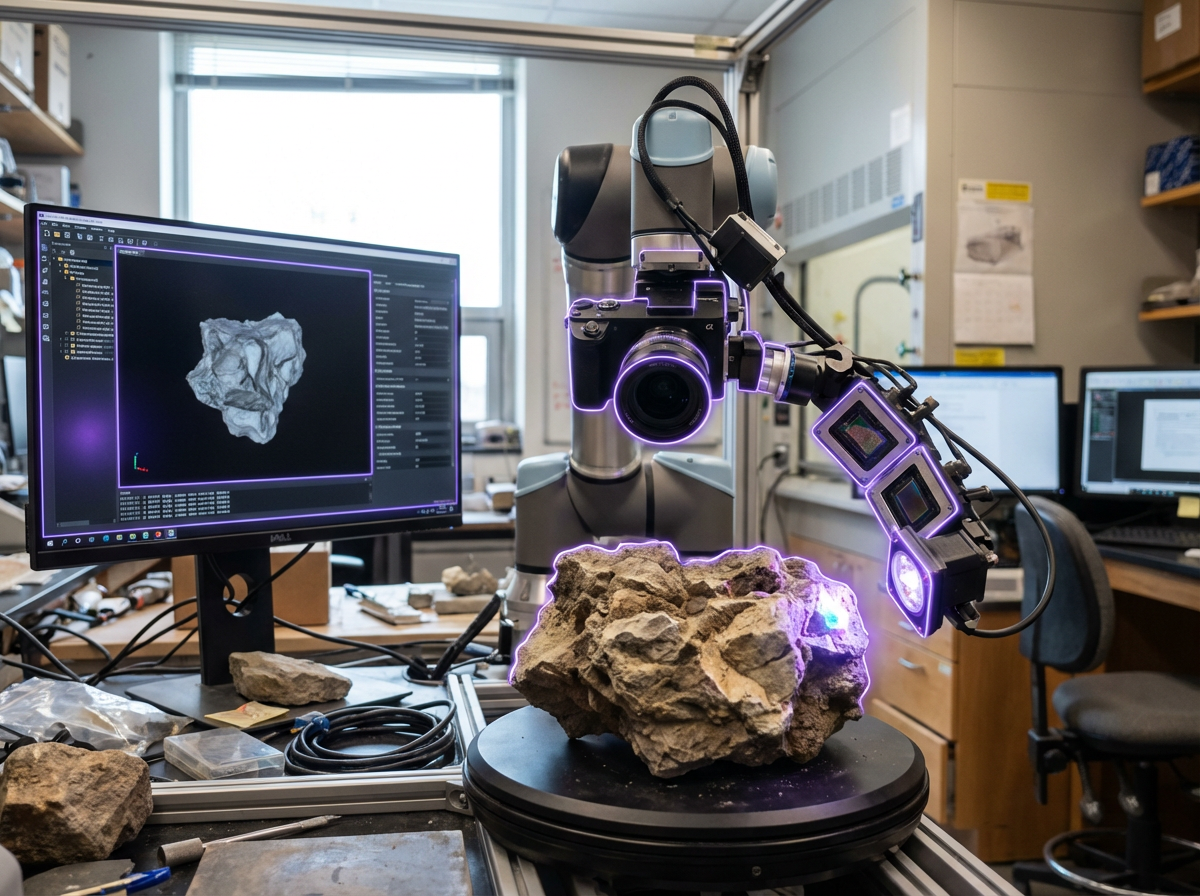

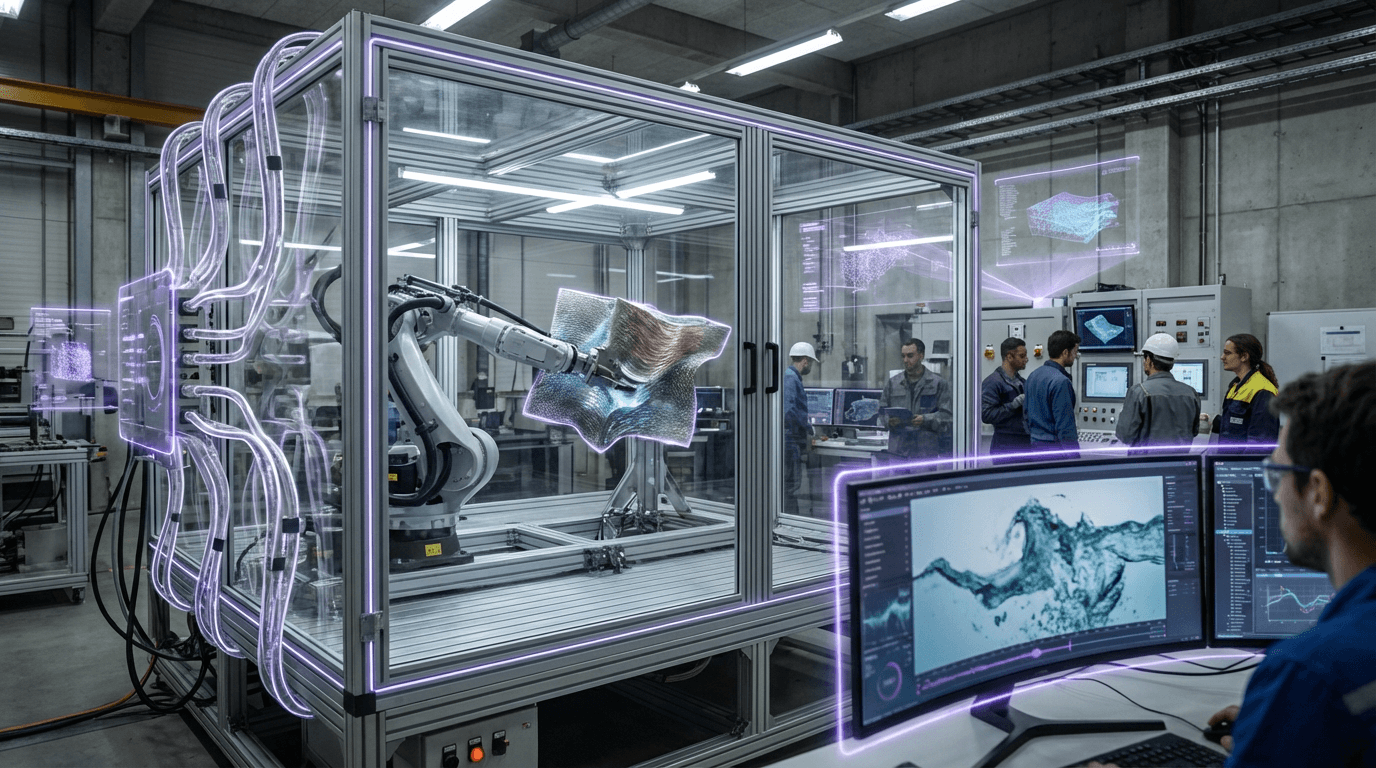

Early implementations of generative physics are emerging in research laboratories and specialized creative tools, and some game engines are beginning to incorporate machine-learning-assisted physics prediction for secondary effects such as cloth simulation and particle systems. The technology shows particular promise in virtual production environments, where directors can experiment with impossible physics for visual effects, and in training simulations, where adaptive difficulty might involve subtly altering physical responses. As spatial computing platforms mature and demand grows for more responsive, believable virtual worlds, generative physics engines are positioned to become foundational infrastructure. The trajectory points toward hybrid systems that can fluidly transition between strict physical accuracy, which is essential for engineering simulations and scientific visualization, and expressive, context-aware dynamics that serve narrative and emotional goals. This flexibility moves the construction of digital spaces beyond a binary choice between realistic simulation and artistic abstraction, toward a continuum in which physical behavior itself becomes a dynamic, generative element of the experience.
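The continuum between strict accuracy and expressive dynamics can be pictured as a single blend weight that mixes a physically accurate response with a stylized one. The following is a minimal sketch under that assumption; `blended_acceleration`, `smoothstep`, and the `style` parameter are hypothetical names chosen for illustration, not part of any particular engine.

```python
def smoothstep(t: float) -> float:
    """Ease a value in [0, 1] so transitions start and end gently."""
    t = min(1.0, max(0.0, t))  # clamp out-of-range inputs
    return t * t * (3.0 - 2.0 * t)


def blended_acceleration(physical: float, stylized: float, style: float) -> float:
    """Mix two accelerations (m/s^2) by a style weight.

    style = 0.0 keeps the physically accurate value (e.g. for engineering use);
    style = 1.0 uses the stylized value (e.g. a dream-like, low-gravity scene).
    The weight is eased with smoothstep so the change is not abrupt.
    """
    w = smoothstep(style)
    return (1.0 - w) * physical + w * stylized


# Example: drift from Earth gravity toward a floaty, stylized gravity
# as a hypothetical narrative parameter rises from 0 to 1.
ramp = [blended_acceleration(-9.81, -1.5, s / 4) for s in range(5)]
```

Driving `style` from narrative context or user state is one possible design; keeping it pinned at zero recovers the purely physical behavior, so accuracy-critical uses are unaffected by the stylized path.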

Related Organizations

Developers of the Gemini family of models, which are trained from the start to be multimodal across text, images, video, and audio.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

Research lab hosting Josh Tenenbaum's Computational Cognitive Science group, a leader in probabilistic programming and neuro-symbolic models.

OpenAI

United States · Company

Creator of GPT-4o, a natively multimodal model capable of reasoning across audio, vision, and text in real time.

Search for Extraordinary Experiences Division, researching deep learning for real-time physics and animation.

Researches differentiable physics and neural simulation for computer graphics.

Applied AI research company shaping the next era of art, entertainment and human creativity.

Ubisoft La Forge

Canada · Research Lab

The R&D branch of Ubisoft bridging academic research and game production.

Creators of the Unity Engine and the ML-Agents toolkit, which allows researchers to train intelligent agents within game environments.