Vision-Language-Action Robots

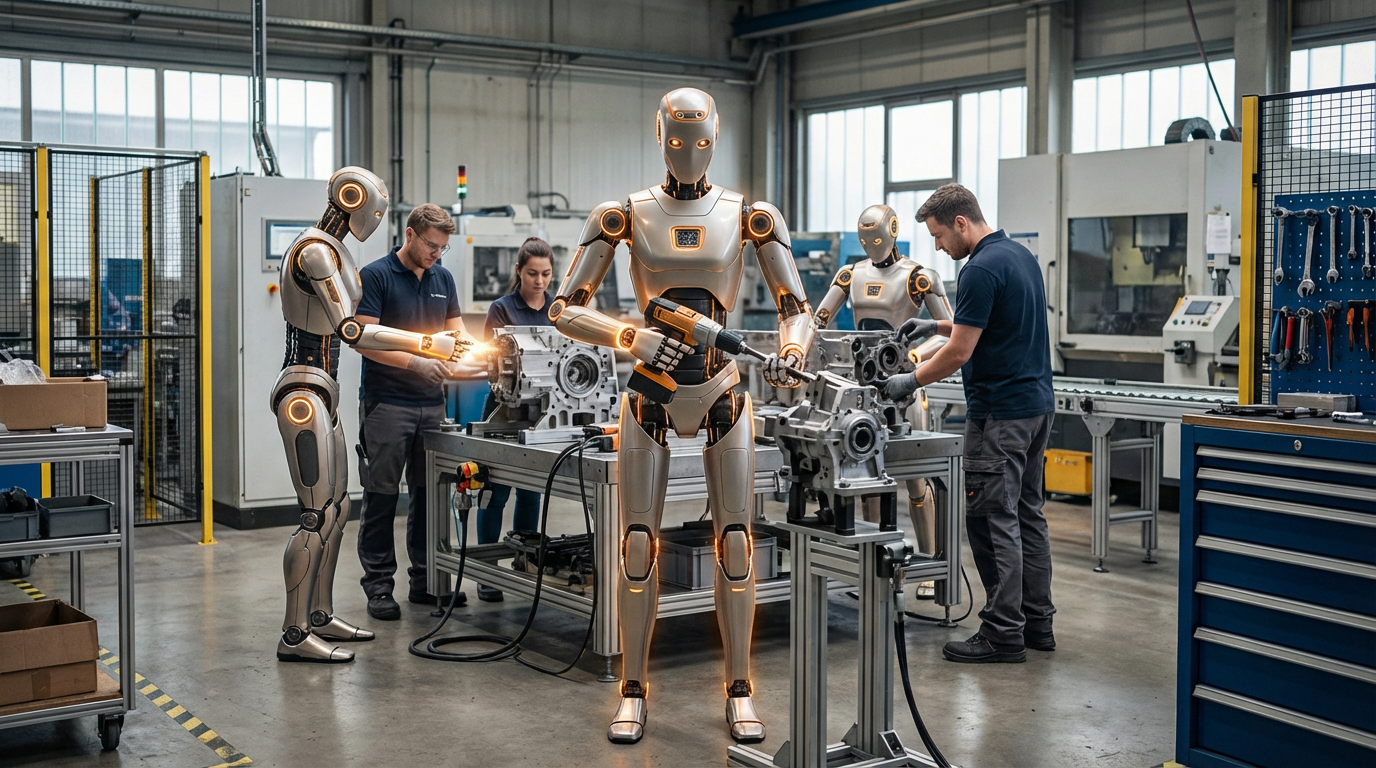

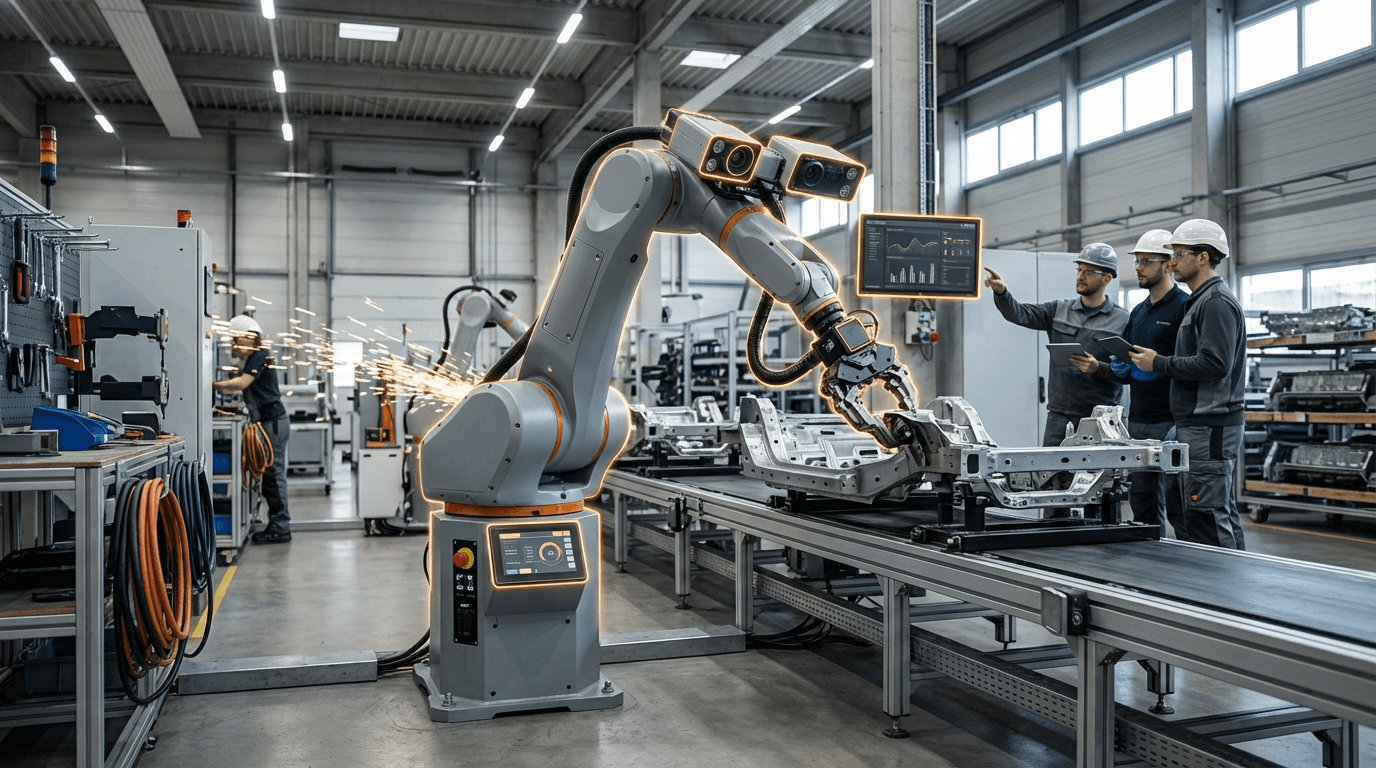

Vision-language-action robots represent a fundamental shift in industrial automation by integrating large-scale foundation models that process visual inputs, natural language commands, and physical actions within a unified computational framework. Unlike traditional industrial robots that rely on pre-programmed motion sequences and rigid task definitions, these systems leverage deep learning architectures trained on vast datasets of images, text, and robotic demonstrations to develop generalizable understanding across multiple modalities. The technical foundation rests on transformer-based models that encode visual scenes through computer vision networks, parse linguistic instructions through natural language processing, and map both to continuous action spaces that control robotic manipulators. This tri-modal integration allows a single model to reason about what it sees, understand what it's being asked to do, and determine how to physically accomplish the task—all without requiring explicit programming for each specific scenario.

The manufacturing sector has long struggled with the inflexibility of conventional automation systems, where even minor product variations or layout changes can necessitate weeks of reprogramming and system recalibration. Vision-language-action robots address this rigidity by enabling operators to communicate tasks in plain language rather than through complex programming interfaces. A factory worker can instruct a robot to "sort the defective components into the red bin" or "assemble the housing using the parts on the left workstation," and the system interprets both the semantic meaning and the visual context to execute the command. This capability dramatically reduces changeover times in mixed-model production lines and makes automation economically viable for small-batch manufacturing that previously relied on manual labor. The technology also enhances quality control processes by allowing robots to identify and respond to visual anomalies without pre-defined defect libraries, adapting to new product types and failure modes as they emerge.

Early industrial deployments indicate that vision-language-action systems are particularly valuable in electronics assembly, automotive component handling, and warehouse logistics where product diversity and task variability are high. Research laboratories and automation companies are actively developing these systems, with pilot programs demonstrating significant reductions in programming time and improved adaptability to production changes. The technology aligns with broader industry trends toward flexible manufacturing and mass customization, where production systems must accommodate frequent product updates and personalized variants. As foundation models continue to improve and training datasets expand to include more industrial scenarios, these robots are expected to become increasingly capable of handling complex assembly sequences, collaborative tasks alongside human workers, and autonomous problem-solving when encountering unexpected situations on the factory floor.

Related Organizations

Developers of the Gemini family of models, which are trained from the start to be multimodal across text, images, video, and audio.

A startup building a general-purpose brain for robots, backed by OpenAI and Thrive Capital.

AI robotics company building a universal AI brain for robots.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

Building a shared general-purpose brain for diverse robot embodiments, leveraging massive training data.

Home to the Conboy Lab (Irina and Michael Conboy).

R&D arm of Toyota Motor Corporation.

An Alphabet company building a software platform to make industrial robotics accessible and interoperable.

Through Copilot and the 'Recall' feature in Windows, Microsoft is integrating persistent memory and agentic capabilities directly into the operating system.

Developing practical collaborative robots (cobots) that leverage modern AI stacks for better interaction and task handling in logistics.