Robotic Electronic Skins (e-Skins)

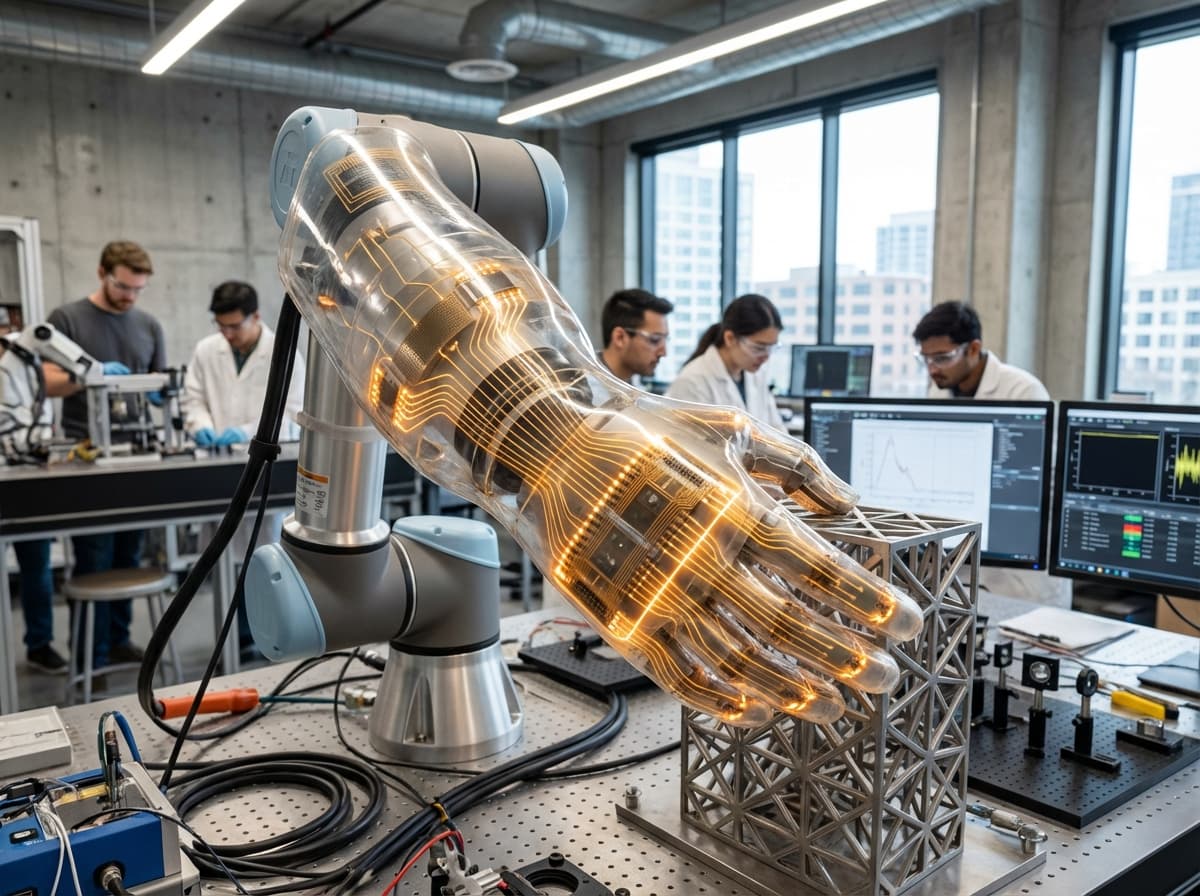

Robotic electronic skins represent a fundamental shift in how machines perceive and interact with their physical environment. Traditional industrial robots rely on discrete sensors positioned at specific points, typically force-torque sensors at joints or end-effectors, which provide only localised feedback and leave most of the robot's body effectively blind to touch. E-skins overcome this limitation by embedding dense arrays of flexible sensors across large surface areas of a robot's structure, creating a continuous sensory layer analogous to human skin. These sensor networks are fabricated using stretchable materials and conductive polymers that can conform to curved surfaces and withstand repeated deformation without losing functionality. The sensors themselves detect multiple modalities simultaneously: pressure distribution reveals contact forces and grip stability, temperature sensing helps prevent thermal damage to both robot and handled objects, and proximity detection enables pre-contact awareness of approaching obstacles or human workers. Advanced implementations incorporate capacitive, resistive, or piezoelectric sensing principles, often combining multiple technologies within a single skin to achieve comprehensive environmental awareness. The data from thousands of individual sensing elements are processed in real time to create a spatial map of tactile information, giving robots a form of whole-body tactile awareness previously impossible with conventional sensing architectures.
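To make the idea of a spatial tactile map concrete, the following is a minimal sketch of how one frame of readings from a grid of sensing elements (taxels) might be reduced to a contact summary. It does not reflect any particular product's processing pipeline. The function name `summarise_contact`, the `ContactSummary` type, the kilopascal units, and the 2.0 kPa noise threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Hypothetical noise floor; a real skin would be calibrated per taxel.
CONTACT_THRESHOLD_KPA = 2.0


@dataclass
class ContactSummary:
    active_taxels: int                        # taxels at or above the threshold
    peak_kpa: float                           # highest single-taxel pressure
    centroid: Optional[Tuple[float, float]]   # pressure-weighted (row, col), None if no contact


def summarise_contact(pressure_kpa: List[List[float]],
                      threshold: float = CONTACT_THRESHOLD_KPA) -> ContactSummary:
    """Reduce one frame of taxel pressures to a simple contact summary."""
    active = 0
    peak = 0.0
    total = row_moment = col_moment = 0.0
    for r, row in enumerate(pressure_kpa):
        for c, p in enumerate(row):
            if p < threshold:
                continue  # treat sub-threshold readings as noise
            active += 1
            peak = max(peak, p)
            total += p
            row_moment += r * p
            col_moment += c * p
    centroid = (row_moment / total, col_moment / total) if total > 0 else None
    return ContactSummary(active, peak, centroid)


# A 3x4 patch with a square contact region in the middle (made-up values).
frame = [
    [0.1, 0.0, 0.2, 0.0],
    [0.0, 4.0, 4.0, 0.1],
    [0.0, 4.0, 4.0, 0.0],
]
summary = summarise_contact(frame)
print(summary)
```

A real skin would wrap over a curved surface, so grid indices would additionally need to be mapped to positions on the robot's body. The sketch leaves that step out.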

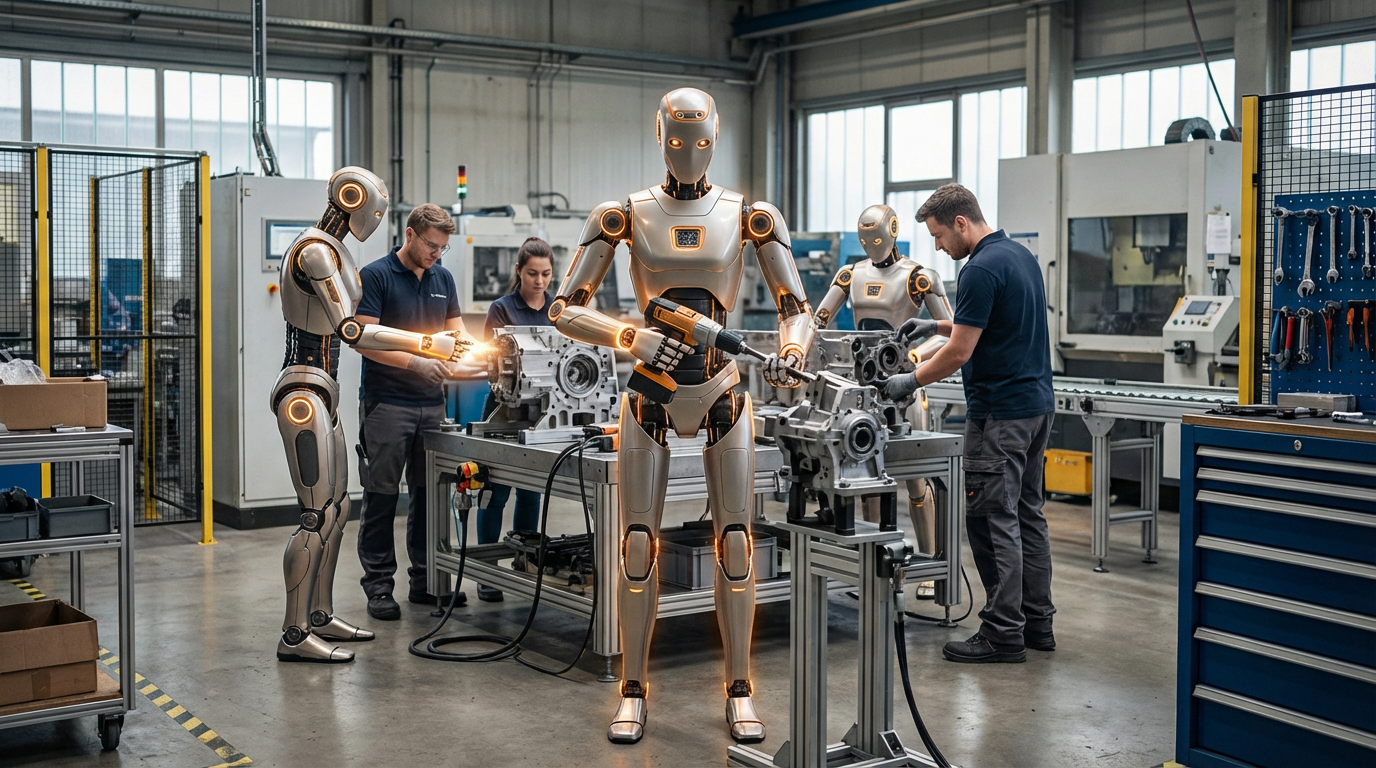

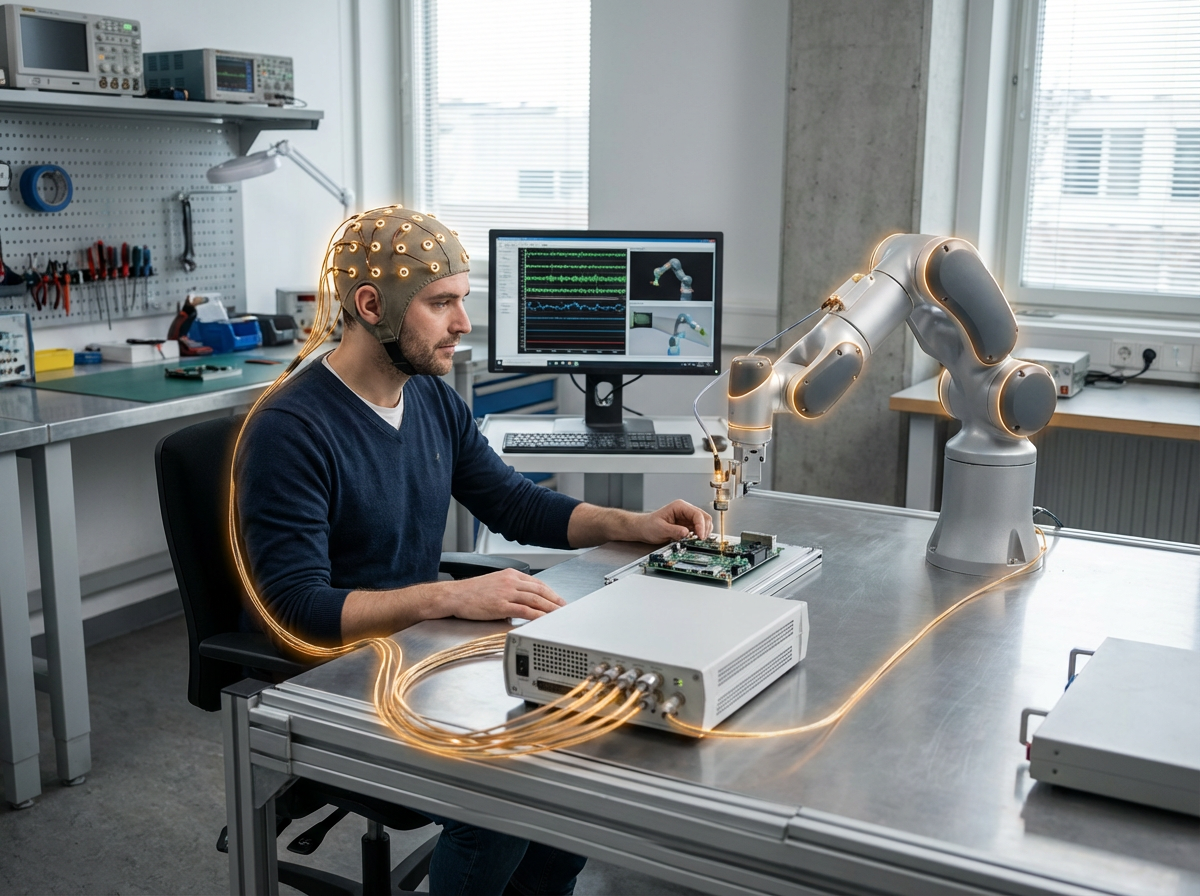

The manufacturing sector faces mounting pressure to create more flexible production environments where robots and human workers can collaborate safely without the physical barriers that have traditionally separated automated systems from people. Current safety regulations typically mandate protective caging around industrial robots, consuming valuable floor space and limiting operational flexibility. E-skins address this challenge by transforming robots into inherently safer machines capable of detecting and responding to unintended contact before harmful forces develop. When a robot covered in electronic skin makes unexpected contact with a person or object, the system can trigger an immediate stop or compliant withdrawal, reducing collision forces to safe levels within milliseconds. This capability is particularly valuable in assembly operations requiring frequent reconfiguration, where fixed safety barriers would be impractical. Beyond safety, e-skins enable entirely new categories of manipulation tasks that demand rich tactile feedback. Handling delicate or deformable objects, from agricultural produce to fabric materials, requires continuous monitoring of grip forces and contact distribution, information that whole-body sensing provides naturally. The technology also supports more sophisticated human-robot interaction paradigms, allowing workers to physically guide robots through new tasks or make real-time adjustments through intuitive touch-based commands rather than programming interfaces.
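The stop-or-withdraw behaviour described above can be sketched as a small decision rule evaluated once per control cycle. This is an illustrative sketch, not a certified safety function. The thresholds, the `Reaction` enum, and the `choose_reaction` function are hypothetical. In practice, the limits would come from a risk assessment and the applicable safety standard.

```python
from enum import Enum

# Hypothetical thresholds, for illustration only.
WITHDRAW_THRESHOLD_KPA = 5.0
STOP_THRESHOLD_KPA = 20.0


class Reaction(Enum):
    CONTINUE = "continue"
    WITHDRAW = "compliant_withdrawal"
    STOP = "stop"


def choose_reaction(peak_pressure_kpa: float, contact_expected: bool) -> Reaction:
    """Map the peak skin pressure in one control cycle to a reaction."""
    if peak_pressure_kpa >= STOP_THRESHOLD_KPA:
        return Reaction.STOP        # hard contact: halt even if contact was planned
    if contact_expected:
        return Reaction.CONTINUE    # e.g. a planned grasp or hand-guiding
    if peak_pressure_kpa >= WITHDRAW_THRESHOLD_KPA:
        return Reaction.WITHDRAW    # light unexpected contact: back away
    return Reaction.CONTINUE


# Three made-up peak readings with no contact planned.
readings = [0.5, 6.0, 25.0]
reactions = [choose_reaction(p, contact_expected=False) for p in readings]
print([r.value for r in reactions])
```

Checking the stop threshold before the `contact_expected` flag is a deliberate choice in this sketch: a planned grasp can suppress the withdrawal response but never the hard stop.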

Early commercial deployments of e-skin technology have focused on collaborative robot applications in electronics assembly and automotive manufacturing, where the combination of safety enhancement and improved manipulation capabilities justifies the additional system complexity and cost. Research prototypes have demonstrated e-skins covering entire robot arms, grippers, and even mobile platforms, though current commercial products typically protect high-risk contact zones rather than providing complete body coverage. The technology aligns with broader industry trends toward flexible automation and human-robot collaboration, particularly as labour shortages and demand for customised production drive interest in more adaptable manufacturing systems. Ongoing development efforts aim to reduce the cost and complexity of e-skin fabrication while improving durability and self-healing capabilities, addressing current limitations around sensor longevity in harsh industrial environments. As manufacturing continues its evolution toward smaller batch sizes and more frequent product changeovers, the ability to deploy robots that can work safely alongside human workers without extensive safety infrastructure becomes increasingly valuable. The maturation of e-skin technology promises to accelerate this transition, enabling a future where robots possess the sensory awareness necessary to navigate the unpredictable, contact-rich environments that have traditionally required human dexterity and adaptability.

Related Organizations

Produces uSkin, a high-density tactile sensor skin for robots that is soft, durable, and capable of 3-axis force sensing.

Develops tactile sensors that give robots the sense of touch and the ability to measure friction and slip.

The Vuckovic Group develops inverse-designed photonics for quantum frequency conversion.

Runs the KROOF (Kranzberg Forest Roof) experiment, which includes CO2 enrichment components.

Develops smart fabric sensors originally designed for musical instruments, now used in VR and safety.

Develops tactile intelligence technology using elastomeric sensors to give robots the sense of touch.

Develops Carbon NanoBud (CNB) films for flexible touch sensors and heaters.

Builders of the Shadow Dexterous Hand, a modular end-effector used for advanced manipulation research.