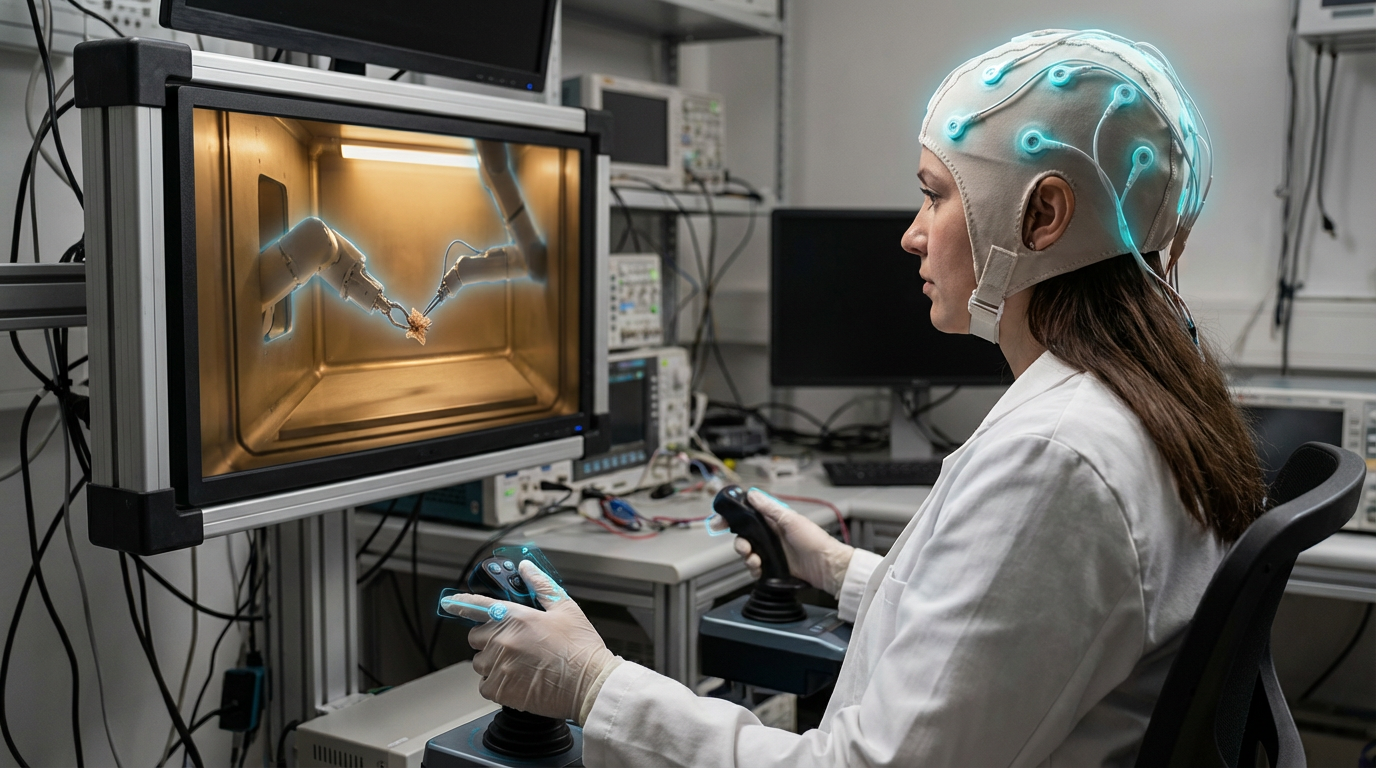

Immersive Human-Machine Co-Presence

Immersive human-machine co-presence systems are Extended Reality (XR) platforms, spanning virtual reality (VR), augmented reality (AR), and mixed reality (MR), that are driven by decoded neural intent rather than hand controllers, gestures, or voice commands. A brain-computer interface (BCI) reads signals associated with the user's intent and translates them into actions in the virtual or augmented environment. Users can then manipulate virtual objects, navigate spaces, and work with digital content hands-free. When several users are connected, the same approach supports shared mixed-reality workspaces in which people collaborate on the same content through neural control.
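The intent-to-action loop described above can be illustrated with a minimal sketch. It rests on loudly hypothetical assumptions. The BCI delivers one feature vector per time window (for example, a few band-power values). A pre-trained linear model (`LinearIntentDecoder`) scores a small, fixed intent set. A toy `Scene` maps each intent to an object-manipulation or navigation action. None of these names come from a real BCI or XR SDK. Practical decoders are usually more complex models calibrated per user.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional

# Hypothetical intent vocabulary; a real system would define its own.
INTENTS = ("rest", "grab", "release", "move_forward")


@dataclass
class LinearIntentDecoder:
    """Scores each intent as w . x + b and picks the highest (weights assumed pre-trained)."""
    weights: list[list[float]]  # one weight row per entry in INTENTS
    biases: list[float]

    def scores(self, features: list[float]) -> list[float]:
        return [sum(w * x for w, x in zip(row, features)) + b
                for row, b in zip(self.weights, self.biases)]

    def decode(self, features: list[float]) -> str:
        s = self.scores(features)
        best = max(range(len(s)), key=lambda i: s[i])
        return INTENTS[best]


@dataclass
class Scene:
    """Toy XR scene state updated by decoded intents."""
    focused_object: str = "cube"        # object under the user's gaze (assumed)
    held_object: Optional[str] = None
    position_m: float = 0.0             # distance travelled along one axis
    step_m: float = 0.25                # hypothetical movement per command

    def apply(self, intent: str) -> None:
        if intent == "grab" and self.held_object is None:
            self.held_object = self.focused_object
        elif intent == "release":
            self.held_object = None
        elif intent == "move_forward":
            self.position_m += self.step_m
        # "rest" intentionally changes nothing


# Usage: four feature windows (two values each) drive the scene.
decoder = LinearIntentDecoder(
    weights=[[0.0, 0.0], [1.0, 0.0], [-1.0, 0.0], [0.0, 1.0]],
    biases=[0.5, 0.0, 0.0, 0.0],
)
scene = Scene()
for window in ([2.0, 0.1], [0.1, 0.2], [0.0, 3.0], [-2.0, 0.0]):
    scene.apply(decoder.decode(window))
print(scene)
```

The explicit "rest" class is a deliberate design choice in this sketch. Without it, every window would be forced into some action, and the scene would react to the user merely thinking about something else.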

This approach targets a limitation of current XR interfaces: controllers and gestures can feel unnatural and break immersion. Thought-based control could make interaction in these environments feel more natural and immersive. Both research institutions and companies are developing these technologies.

The technology matters most for XR applications, where more natural interaction could substantially improve the user experience. As it matures, it could also enable new forms of collaboration and interaction. Significant challenges remain: keeping control responsive, managing system complexity, and making control reliable enough that it enhances the experience rather than detracting from it. Thought-driven control is a promising direction for XR interfaces, but it will need extensive development before it reaches the performance and reliability required for practical use.
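The tension between responsiveness and reliability can be made concrete with a small gating sketch. It assumes, hypothetically, that a decoder emits an `(intent, confidence)` pair once per analysis window. An action fires only after `dwell` consecutive windows agree with confidence at or above `threshold`. The class name, parameters, and default values (`IntentGate`, `threshold=0.8`, `dwell=3`, `hop_ms=50.0`) are illustrative assumptions rather than values from any real system. The sketch shows the trade-off directly: each extra dwell window suppresses more false activations but adds one more `hop_ms` of delay.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class IntentGate:
    """Fires an intent only after `dwell` consecutive confident, agreeing windows."""
    threshold: float = 0.8   # minimum decoder confidence, assumed to lie in [0, 1]
    dwell: int = 3           # consecutive agreeing windows required to fire
    hop_ms: float = 50.0     # hypothetical spacing between analysis windows
    _candidate: Optional[str] = field(default=None, init=False, repr=False)
    _count: int = field(default=0, init=False, repr=False)

    def added_latency_ms(self) -> float:
        # Extra wait after the first qualifying window before an action can fire.
        return (self.dwell - 1) * self.hop_ms

    def update(self, intent: str, confidence: float) -> Optional[str]:
        """Return the intent to execute now, or None if nothing should fire."""
        if intent == "rest" or confidence < self.threshold:
            # Idle or uncertain window: discard any partial run.
            self._candidate, self._count = None, 0
            return None
        if intent == self._candidate:
            self._count += 1
        else:
            # A different intent starts a new run.
            self._candidate, self._count = intent, 1
        if self._count >= self.dwell:
            # Fire once, then require a fresh run of `dwell` windows to fire again.
            self._candidate, self._count = None, 0
            return intent
        return None


# Usage: a brief "release" blip interrupts the first "grab" run.
gate = IntentGate()
stream = [("grab", 0.90), ("grab", 0.95), ("release", 0.85),
          ("grab", 0.90), ("grab", 0.92), ("grab", 0.91)]
fired = [gate.update(intent, conf) for intent, conf in stream]
print(fired, gate.added_latency_ms())
```

Here the single "release" window prevents a premature grab, at the cost of the user having to hold the intent for three windows (about 100 ms of extra delay under the assumed 50 ms hop).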

Related Organizations

Builds AI-powered BCI headsets with AR displays for accessibility and communication.

Creates open-source brain-computer interface tools and the Galea headset, which integrates with VR hardware, for research into physiological responses.

Develops the Quest Pro headset, whose eye tracking supports foveated rendering, and display research prototypes such as Butterscotch (high resolution) and Starburst (high dynamic range).

United States · Startup

Develops wrist-worn electroneurography (ENG) sensors for gesture control in XR environments without cameras.

Social media and camera company developing AR spectacles.

Produces EEG headsets and the BCI-OS platform, allowing developers to build applications that respond to cognitive stress and facial expressions.

Develops gamified neurorehabilitation platforms for stroke and brain injury recovery.

Produces VR headsets and actively partners with BCI companies (such as OpenBCI and MyndPlay) to integrate brain sensing into its hardware ecosystem.

Manufacturer of 'bionic display' headsets that use a high-density focus display inside a peripheral context display.