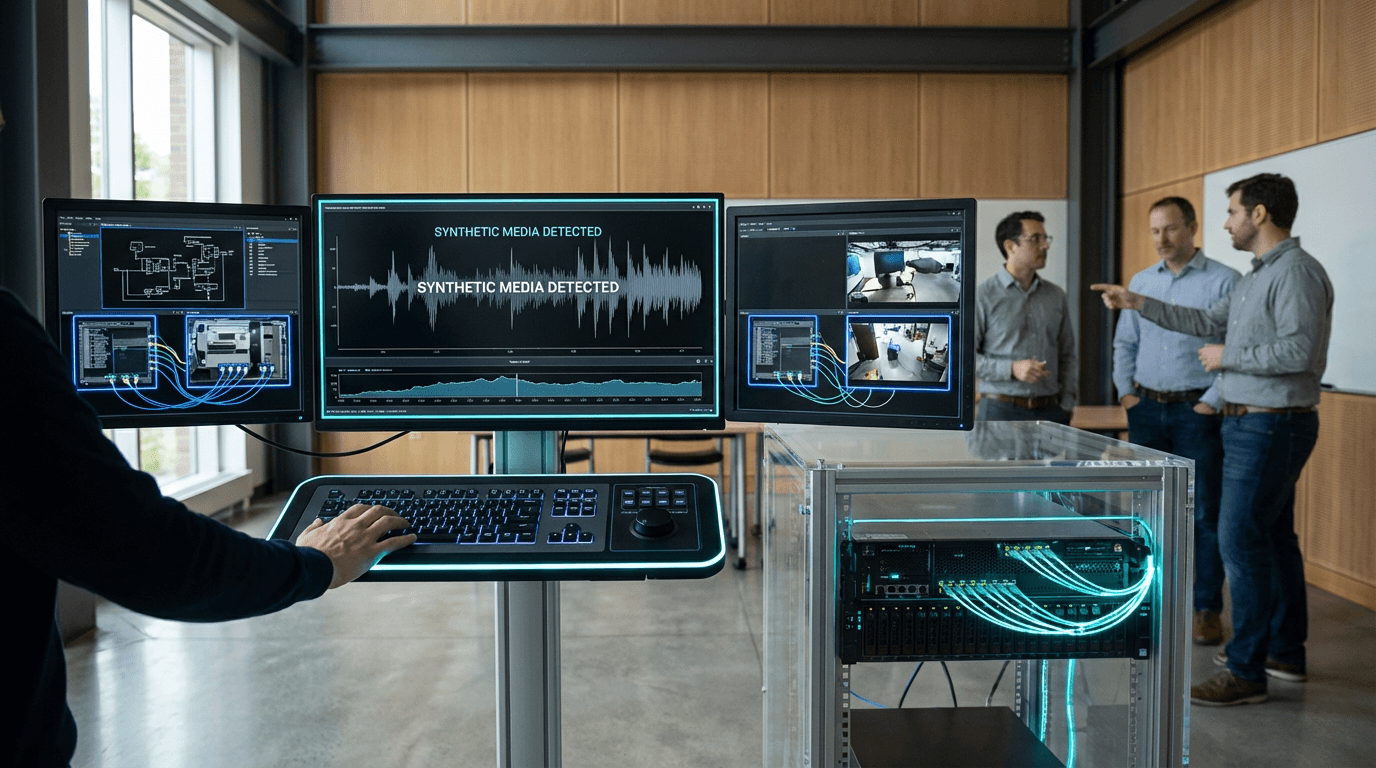

Synthetic Media Detection Systems

Synthetic media detection systems are a technological response to the spread of AI-generated and manipulated content across digital platforms. They use machine learning classifiers that analyze several dimensions of a media file, including visual artifacts, audio inconsistencies, temporal anomalies, and metadata patterns, to determine whether the content has been artificially generated or altered. Detection typically targets subtle indicators that human observers might miss: unnatural facial movements in video, inconsistent lighting, audio-visual synchronization errors, or telltale compression artifacts that emerge from generative AI processes. Advanced systems often combine multiple analytical approaches: convolutional neural networks trained on large datasets of both authentic and synthetic media, frequency-domain analysis to identify digital fingerprints, and temporal coherence checks that evaluate whether sequential frames exhibit natural continuity or show signs of frame-by-frame manipulation.
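To make the idea of a temporal coherence check concrete, here is a minimal sketch in Python. It is an illustration only, not how any production detector works: frames are assumed to be flat lists of grayscale pixel intensities, and the function names `frame_differences` and `flag_discontinuities`, along with the `ratio` and `floor` parameters, are hypothetical. It flags frames whose change from the previous frame is far larger than the typical change in the clip.

```python
from statistics import median


def frame_differences(frames):
    """Mean absolute pixel difference between each pair of consecutive frames.

    Assumption: each frame is a flat list of grayscale intensities of equal length.
    """
    diffs = []
    for prev, curr in zip(frames, frames[1:]):
        if len(prev) != len(curr):
            raise ValueError("all frames must have the same number of pixels")
        diffs.append(sum(abs(a - b) for a, b in zip(prev, curr)) / len(curr))
    return diffs


def flag_discontinuities(frames, ratio=5.0, floor=1e-6):
    """Return indices of frames that jump abruptly from their predecessor.

    A frame i is flagged when its difference from frame i-1 exceeds
    `ratio` times the median frame-to-frame difference of the clip.
    `ratio` and `floor` are illustrative values, not tuned thresholds.
    """
    diffs = frame_differences(frames)
    if not diffs:
        return []
    typical = max(median(diffs), floor)  # floor avoids a zero threshold on static clips
    return [i + 1 for i, d in enumerate(diffs) if d > ratio * typical]


if __name__ == "__main__":
    # Six tiny 4-pixel frames: smooth brightening, then an abrupt jump at frame 3.
    clip = [
        [10, 10, 10, 10],
        [11, 11, 11, 11],
        [12, 12, 12, 12],
        [90, 90, 90, 90],
        [91, 91, 91, 91],
        [92, 92, 92, 92],
    ]
    print(flag_discontinuities(clip))
```

A check this simple would also flag ordinary scene cuts, which is one reason real systems are described as combining it with learned classifiers and frequency-domain analysis rather than relying on any single signal.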

The entertainment and streaming industry faces mounting challenges as synthetic media becomes increasingly sophisticated and accessible. Deepfake technology can now convincingly replicate actors' performances, generate entirely fictional personas, or alter existing content in ways that are difficult to distinguish from authentic material. This creates significant risks around intellectual property protection, as performers' likenesses can be appropriated without consent, and threatens the fundamental trust relationship between content creators and audiences. Detection systems address these challenges by providing verification mechanisms that can be integrated into content distribution pipelines, helping platforms identify unauthorized synthetic reproductions of copyrighted performances, flag potentially misleading content, and maintain the integrity of their media libraries. For streaming services and production companies, these tools offer a defensive capability against reputation damage and legal liability while supporting compliance with emerging regulations around synthetic media disclosure.

Detection systems are being deployed across major streaming platforms and social media networks, though adoption details vary by organization. These systems typically generate a trust score indicating the likelihood that content is synthetic or manipulated, accompanied by a forensic report that highlights the specific artifacts or anomalies found during analysis. Industry analysts note that detection is locked in an ongoing arms race with generative AI: each improvement in synthesis techniques requires a corresponding advance in detection methods. Research suggests that multi-modal approaches combining visual, audio, and metadata analysis currently offer the most robust detection. Looking forward, the integration of these systems into content authentication frameworks, potentially including blockchain-based provenance tracking and cryptographic signing, reflects a broader industry trend toward verifiable chains of custody for digital media. As synthetic media becomes more prevalent in entertainment production and distribution, detection systems are evolving from defensive tools into essential infrastructure for maintaining content authenticity and audience trust.
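The trust score and forensic report described above can be sketched as a simple fusion of per-modality results. This is a hedged illustration under stated assumptions: the `ModalityResult` type, the `fuse` function, the weights, and the 0.5 threshold are all hypothetical, and a weighted average is just one plausible way to combine detectors, not a method attributed to any particular platform.

```python
from dataclasses import dataclass, field


@dataclass
class ModalityResult:
    name: str      # e.g. "visual", "audio", "metadata"
    score: float   # detector's estimated likelihood of manipulation, 0.0 to 1.0
    weight: float  # hypothetical relative weight given to this detector
    findings: list = field(default_factory=list)  # human-readable anomalies


def fuse(results, threshold=0.5):
    """Combine per-modality scores into one score plus a small report.

    Uses a weighted average; the threshold is an illustrative value.
    """
    for r in results:
        if not 0.0 <= r.score <= 1.0:
            raise ValueError(f"score for {r.name} must be within [0, 1]")
    total_weight = sum(r.weight for r in results)
    if total_weight <= 0:
        raise ValueError("at least one modality must have a positive weight")
    likelihood = sum(r.score * r.weight for r in results) / total_weight
    return {
        "synthetic_likelihood": likelihood,
        "flagged": likelihood >= threshold,
        # Only modalities that reported something appear in the report.
        "findings": {r.name: r.findings for r in results if r.findings},
    }


if __name__ == "__main__":
    report = fuse([
        ModalityResult("visual", 0.9, 2.0, ["inconsistent lighting on face region"]),
        ModalityResult("audio", 0.6, 1.0, ["lip-sync drift"]),
        ModalityResult("metadata", 0.0, 1.0),
    ])
    print(report)
```

Keeping the per-modality findings alongside the fused score mirrors the pairing of a single score with a forensic report, so a reviewer can see which signal drove the result.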

Related Organizations

An open technical standards body addressing the prevalence of misleading information online through content provenance.

Provides an enterprise platform for deepfake detection across audio, video, and image formats using multi-model analysis.

Specializes in visual threat intelligence and deepfake detection, monitoring the web for malicious synthetic media.

Develops both generative dubbing tools and deepfake detection algorithms for government use.

Focuses on image provenance and authentication, helping verify that media has not been altered (the inverse of detection).

Provides cloud-based AI models for content moderation, including detection of NSFW content, hate symbols, and AI-generated media.

Develops silicon spin qubits using advanced 300mm wafer manufacturing processes.

Provides liveness detection software to prevent identity theft via deepfakes or masks during biometric verification.