Synthetic Media Detection

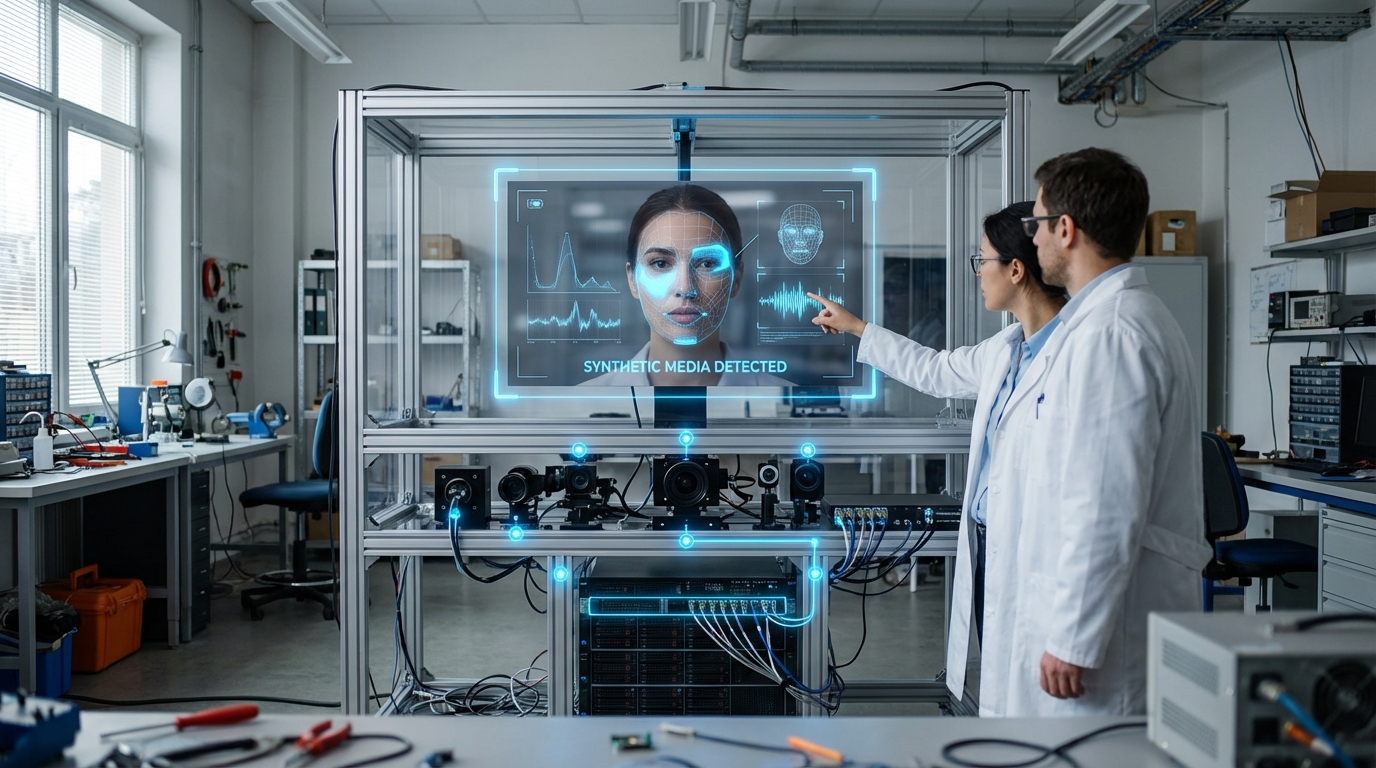

The proliferation of generative AI has created an unprecedented challenge for information integrity: distinguishing authentic media from synthetic forgeries. Synthetic media detection encompasses a suite of forensic technologies designed to identify AI-generated images, video, and audio. These systems rely on several technical approaches: deep learning classifiers trained to recognize the artifacts and inconsistencies characteristic of generative models; frequency-domain analysis that detects anomalies in the spectral structure of synthetic images or audio; and biological signal verification that examines subtle physiological markers, such as pulse-related color changes in facial video or natural breathing patterns in speech. More advanced implementations add cryptographic provenance, such as content credentials signed at the point of capture and digital watermarks embedded in the media, to create a tamper-evident chain of custody that records a piece of media's origin and any subsequent modifications. These mechanisms work in concert, because no single detection method is foolproof against the rapidly evolving capabilities of generative models.
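The chain-of-custody idea behind cryptographic provenance can be illustrated with a minimal sketch. It assumes a simplified record format and uses an HMAC with a shared key so that it runs on the Python standard library alone; real content-credential systems use public-key signatures, and every name here (`DEVICE_KEY`, `append_record`, `verify_chain`) is hypothetical rather than part of any standard.

```python
import hashlib
import hmac
import json

# Hypothetical shared key for illustration only. A real capture device would
# hold a private signing key and verifiers would use the public key.
DEVICE_KEY = b"demo-device-key"


def _sign(payload: dict, key: bytes) -> str:
    """Return an HMAC-SHA256 tag over a canonical JSON encoding of the payload."""
    message = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def append_record(chain: list, media: bytes, action: str, key: bytes = DEVICE_KEY) -> list:
    """Return a new chain with one record describing the media after `action`."""
    payload = {
        "action": action,
        "media_sha256": hashlib.sha256(media).hexdigest(),
        # Linking to the previous signature makes reordering or removal detectable.
        "prev_signature": chain[-1]["signature"] if chain else "",
    }
    return chain + [dict(payload, signature=_sign(payload, key))]


def verify_chain(chain: list, final_media: bytes, key: bytes = DEVICE_KEY) -> bool:
    """Check every link and confirm the last record matches the media in hand."""
    if not chain:
        return False
    prev_signature = ""
    for record in chain:
        payload = {k: record[k] for k in ("action", "media_sha256", "prev_signature")}
        if record["prev_signature"] != prev_signature:
            return False
        if not hmac.compare_digest(record["signature"], _sign(payload, key)):
            return False
        prev_signature = record["signature"]
    return chain[-1]["media_sha256"] == hashlib.sha256(final_media).hexdigest()
```

A capture followed by an edit would call `append_record` twice and then pass the final bytes to `verify_chain`. Changing the media or any earlier record causes verification to fail, which makes the chain tamper-evident rather than tamper-proof: a party holding the key could still forge records.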

The strategic imperative for synthetic media detection stems from the risks that convincing forgeries pose to institutional trust and geopolitical stability. Deepfake technology has lowered the barrier to creating fabricated statements from political leaders, falsified evidence of military actions, or manufactured diplomatic incidents that could trigger international crises. For intelligence agencies, military commands, and diplomatic corps, the inability to verify the authenticity of communications can stall decision-making in time-sensitive situations. Influence operations increasingly rely on synthetic personas (entirely fabricated individuals with AI-generated faces, voices, and social media histories) to spread disinformation at scale while evading traditional attribution methods. Financial markets, already vulnerable to rumor and speculation, face new manipulation vectors when synthetic media can convincingly depict corporate executives making false statements or portray geopolitical events that never happened. Detection systems address these challenges by providing verification layers that help institutions maintain confidence in their information streams and distinguish genuine communications from sophisticated forgeries before making consequential decisions.

Major technology platforms and research institutions have deployed a range of detection capabilities: social media companies screen uploads for synthetic content automatically, and news organizations have adopted verification workflows that incorporate forensic analysis. Members of the Content Authenticity Initiative, which is backed by major camera manufacturers and software companies, have begun shipping devices that cryptographically sign media at the point of capture, creating a technical foundation for provable authenticity. However, the detection landscape remains locked in an adversarial race, as each improvement in detection spurs corresponding advances in generation techniques designed to evade it. Early deployments indicate that hybrid approaches, which combine multiple detection methods with human expert review, provide the most reliable results, though even these struggle with state-of-the-art generative models. The trajectory points toward an ecosystem where content provenance becomes standard infrastructure, with authentication built into capture devices, transmission protocols, and display systems. As synthetic media capabilities continue to advance, the geopolitical significance of detection systems will only intensify, making them essential components of information security architecture for governments, media organizations, and critical infrastructure operators navigating an environment where seeing and hearing no longer guarantee believing.
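The hybrid approach described above can be sketched as a simple score-fusion step that escalates uncertain cases to a human reviewer. This is an illustration under stated assumptions, not a description of any deployed system: the detector names, weights, thresholds, and the `fuse_scores` function are all hypothetical, and each score is assumed to be a probability-like value in [0, 1] where higher means more likely synthetic.

```python
from dataclasses import dataclass


@dataclass
class Verdict:
    score: float  # weighted mean of detector scores, rounded to 3 places
    label: str    # "likely_synthetic", "likely_authentic", or "needs_human_review"


def fuse_scores(scores: dict, weights: dict, low: float = 0.3,
                high: float = 0.7, max_spread: float = 0.5) -> Verdict:
    """Combine per-detector scores and route uncertain cases to human review."""
    total_weight = sum(weights[name] for name in scores)
    if total_weight <= 0:
        raise ValueError("weights for the supplied detectors must sum to a positive value")
    fused = sum(scores[name] * weights[name] for name in scores) / total_weight

    # Strong disagreement between detectors is itself a reason to escalate,
    # even when the weighted mean falls on one side of a threshold.
    spread = max(scores.values()) - min(scores.values())
    if spread > max_spread or low < fused < high:
        label = "needs_human_review"
    elif fused >= high:
        label = "likely_synthetic"
    else:
        label = "likely_authentic"
    return Verdict(round(fused, 3), label)
```

In this sketch the thresholds set how much of the workload reaches human experts: widening the band between `low` and `high` sends more items to review in exchange for fewer automated mistakes.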

Related Organizations

An open technical standard body addressing the prevalence of misleading information online through content provenance.

Provides an enterprise platform for deepfake detection across audio, video, and image formats using multi-model analysis.

Software giant and founder of the Content Authenticity Initiative (CAI).

Specializes in visual threat intelligence and deepfake detection, monitoring the web for malicious synthetic media.

Focuses on image provenance and authentication, helping verify that media has not been altered (the inverse of detection).

Develops both generative dubbing tools and deepfake detection algorithms for government use.

Specializes in voice security and authentication, actively developing liveness detection to stop audio deepfakes.

Provides cloud-based AI models for content moderation, including detection of NSFW content, hate symbols, and AI-generated media.

Generative voice AI platform for cloning and localization.