LCARS

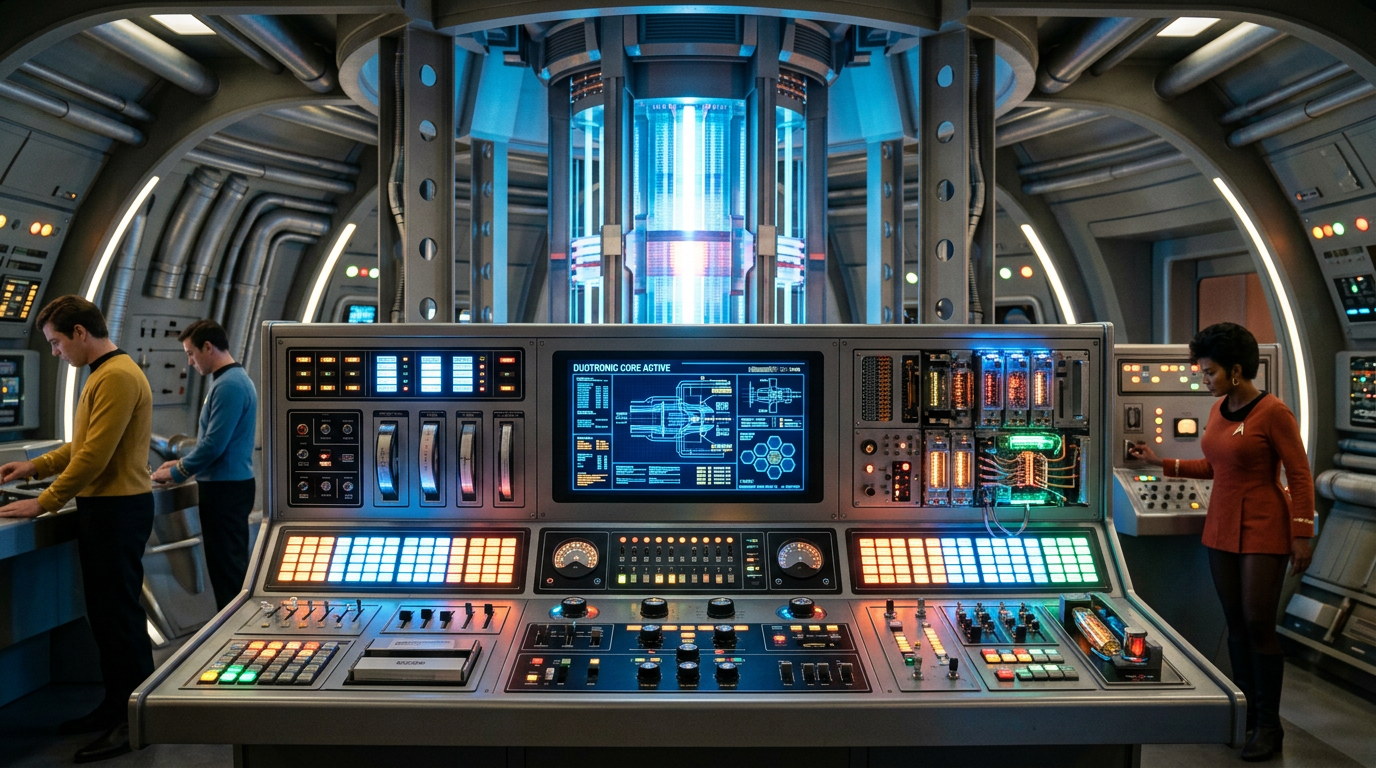

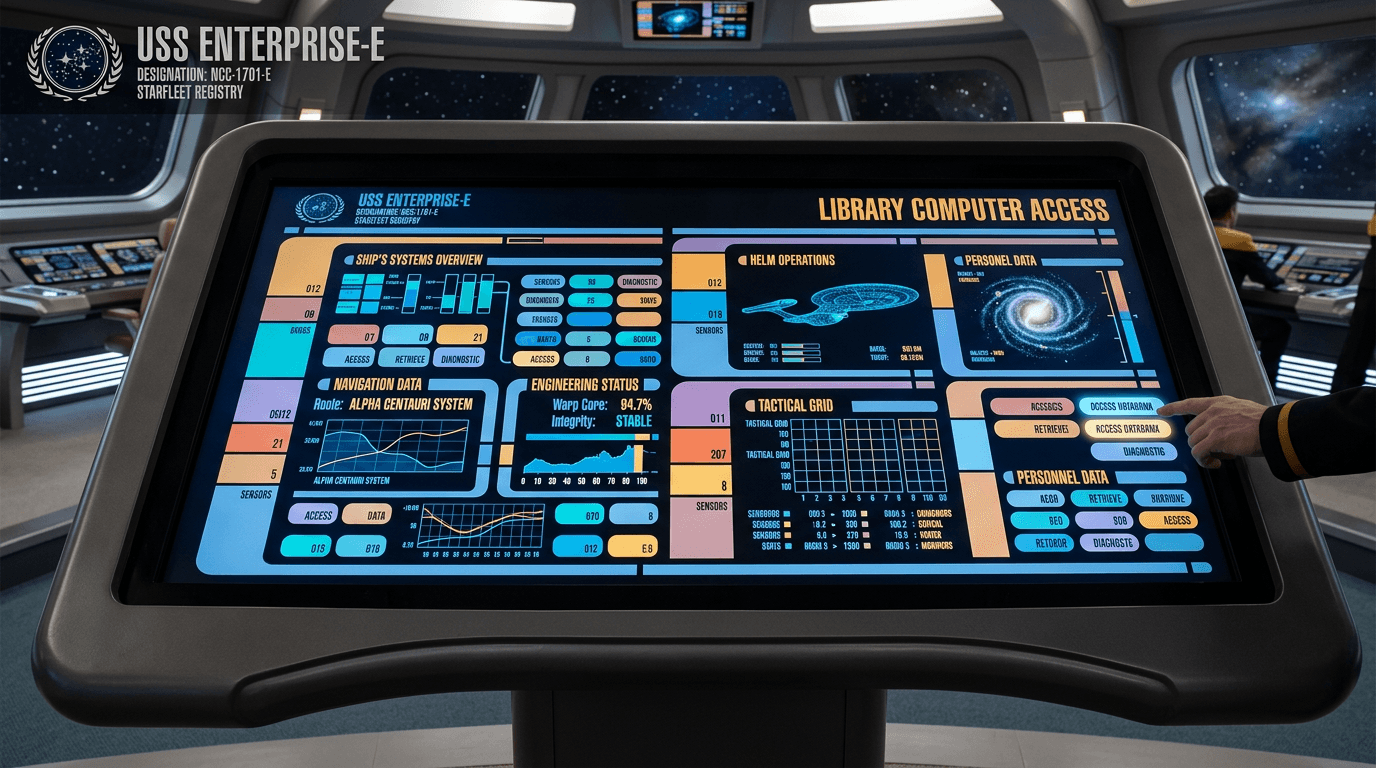

LCARS, the Library Computer Access and Retrieval System, is the fictional computer interface of Star Trek's 24th-century starships and a conceptual approach to human-computer interaction. It is imagined as a unified interface architecture that consolidates access to all spacecraft systems, from environmental controls and navigation to tactical operations and scientific databases, through a distinctive visual language of color-coded panels, curved geometric elements, and hierarchical information displays. Unlike contemporary operating systems, which separate functions into discrete applications, LCARS is conceived as an integrated framework in which every ship system speaks a common interface language, so crew members can move seamlessly between controlling life support, analyzing sensor data, and accessing library archives. The fictional system incorporates multimodal interaction through touch panels, voice commands, and gesture recognition, with an underlying artificial intelligence that learns individual user preferences and adapts interface layouts accordingly. This design philosophy reflects a speculative endpoint in which computing becomes ambient and intuitive rather than application-centric.
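The idea of every system "speaking a common interface language" can be illustrated with a small sketch. Everything here is an assumption made for illustration: the `ShipSystem` contract, the `LifeSupport` and `Sensors` classes, and the `Console` front end are hypothetical names, not anything defined by the fiction itself.

```python
from typing import Protocol


class ShipSystem(Protocol):
    """Hypothetical contract that every subsystem would implement."""

    name: str

    def status(self) -> dict[str, str]: ...

    def execute(self, command: str) -> str: ...


class LifeSupport:
    name = "life_support"

    def __init__(self) -> None:
        self.mode = "nominal"

    def status(self) -> dict[str, str]:
        return {"mode": self.mode}

    def execute(self, command: str) -> str:
        # For this sketch, a command simply sets the operating mode.
        self.mode = command
        return f"{self.name}: mode set to {command}"


class Sensors:
    name = "sensors"

    def __init__(self) -> None:
        self.last_scan = "none"

    def status(self) -> dict[str, str]:
        return {"last_scan": self.last_scan}

    def execute(self, command: str) -> str:
        # For this sketch, a command names the scan to record.
        self.last_scan = command
        return f"{self.name}: scan '{command}' complete"


class Console:
    """A single front end that routes commands to any registered system."""

    def __init__(self, systems: list[ShipSystem]) -> None:
        self._systems = {s.name: s for s in systems}

    def send(self, system: str, command: str) -> str:
        return self._systems[system].execute(command)

    def overview(self) -> dict[str, dict[str, str]]:
        # One uniform status view across otherwise unrelated systems.
        return {name: s.status() for name, s in self._systems.items()}
```

Because the console depends only on the shared contract, adding a new subsystem would not require changing the front end, which is the property the unified-interface concept relies on.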

Within science fiction narratives, LCARS serves as more than set dressing: it embodies assumptions about how future organizations might manage information complexity and operational control. The system's ubiquity across Starfleet vessels suggests a standardized approach to interface design that prioritizes consistency and transferability of skills, allowing personnel to operate any station with minimal retraining. This concept resonates with contemporary discussions in military and aerospace contexts about reducing cognitive load through standardized human-machine interfaces, particularly as systems grow more complex. The visual design choices (high-contrast colors, clear hierarchies, and persistent status indicators) reflect principles from real-world control room design and aviation cockpits, where rapid comprehension under stress is paramount. LCARS also represents an optimistic vision of artificial intelligence as an assistant rather than a replacement, in which computers anticipate needs without overriding human judgment, a balance that remains elusive in current automation debates.

The plausibility of LCARS-like systems depends on convergence across several technological domains. Contemporary research in adaptive user interfaces, natural language processing, and context-aware computing addresses fragments of the LCARS vision, though true integration remains distant. Current voice assistants and gesture controls demonstrate the feasibility of multimodal interaction, while advances in machine learning enable some degree of personalization and predictive assistance. However, the fictional system assumes solutions to problems that remain formidable: seamless integration across disparate systems, reliable voice recognition in noisy operational environments, and AI that genuinely understands context and intent rather than merely matching patterns. The security implications of such unified access, where any terminal can control critical systems, would require authentication and authorization frameworks far more sophisticated than current implementations. As computing interfaces continue to evolve toward more natural interaction paradigms, LCARS remains valuable as a design fiction that challenges developers to imagine computing that fades into the background of human activity rather than demanding constant attention and technical expertise.
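To make the security concern concrete, the following is a minimal sketch of a role-based authorization check that a unified terminal would need before passing a command to any system. It is deliberately far simpler than what the paragraph above calls for, and the role names, system names, `Session` type, and `is_authorized` function are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical mapping from a role to the systems that role may command.
ROLE_PERMISSIONS: dict[str, frozenset[str]] = {
    "engineer": frozenset({"life_support", "warp_core"}),
    "science_officer": frozenset({"sensors", "library"}),
    "captain": frozenset(
        {"life_support", "warp_core", "sensors", "library", "tactical"}
    ),
}


@dataclass(frozen=True)
class Session:
    user: str
    role: str
    authenticated: bool


def is_authorized(session: Session, system: str) -> bool:
    """Allow a command only for an authenticated session whose role covers the system."""
    if not session.authenticated:
        return False
    # Unknown roles get an empty permission set, so access is denied by default.
    return system in ROLE_PERMISSIONS.get(session.role, frozenset())
```

Denying by default matters more when every terminal can reach every system: an unrecognized role or an unauthenticated session should never fall through to access.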