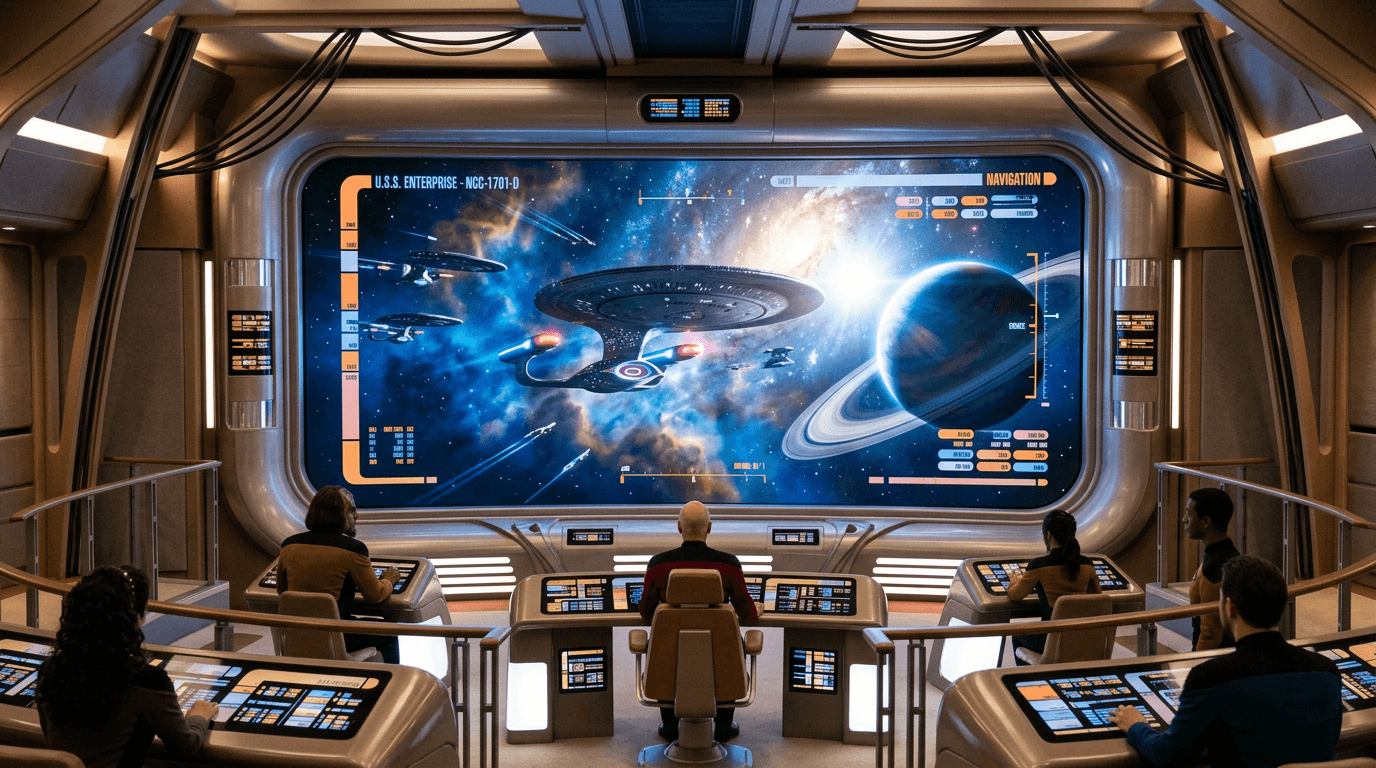

Main Viewscreen

The main viewscreen is a conceptual integration of display technology, sensor fusion, and information architecture, designed to turn raw data streams into actionable visual intelligence. In science fiction narratives, particularly space-faring ones, it functions as a unified interface that consolidates inputs from multiple sensor arrays (electromagnetic spectrum scanners, gravimetric detectors, subspace communications receivers, and optical imaging systems) into a single coherent picture. The premise assumes computational systems capable of synthesizing disparate data sources in real time, translating everything from radio-frequency signals to hypothetical faster-than-light sensor readings into human-interpretable imagery. Contemporary military vessels and spacecraft already use sophisticated displays that aggregate radar, sonar, satellite feeds, and camera arrays; the fictional main viewscreen extends this idea to sensor modalities that remain theoretical or purely speculative, such as subspace scanning or instantaneous long-range visual acquisition across interstellar distances.
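The real-world half of this idea, combining several sensors' estimates of the same quantity, can be illustrated with a minimal sketch. It assumes independent readings with known variances and uses inverse-variance weighting, one simple fusion technique among many; the `Reading` type, the sensor names, and the numbers are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    source: str      # hypothetical sensor name, for illustration only
    value: float     # estimated range to a target, in km
    variance: float  # measurement uncertainty, in km^2


def fuse(readings):
    """Combine independent estimates by inverse-variance weighting.

    Returns (fused_value, fused_variance). More precise sensors
    (smaller variance) receive proportionally more weight.
    """
    if not readings:
        raise ValueError("need at least one reading")
    weights = [1.0 / r.variance for r in readings]
    total = sum(weights)
    value = sum(w * r.value for w, r in zip(weights, readings)) / total
    return value, 1.0 / total


# Illustrative numbers: a coarse radar range and a finer optical range.
readings = [
    Reading("radar", 1000.0, 100.0),
    Reading("optical", 1010.0, 25.0),
]
fused_value, fused_variance = fuse(readings)
print(f"fused range: {fused_value:.1f} km, variance: {fused_variance:.1f} km^2")
```

The fused estimate lands closer to the more precise sensor, and its variance is smaller than that of either input, which is the basic payoff of fusing redundant sensors into one display.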

Within narrative frameworks, the main viewscreen serves as both a practical command interface and a storytelling device that externalizes decision-making. Through a single focal point, characters can observe the external environment, review the tactical situation, and communicate with other vessels or planetary installations. This consolidation addresses a fundamental challenge in complex operational environments: information overload and the need for rapid situational awareness. Advanced fictional implementations add holographic depth rendering, producing three-dimensional tactical displays that show spatial relationships between objects, trajectory predictions, and threat assessments in ways that two-dimensional screens cannot. The concept parallels real-world research into volumetric displays, augmented reality command centers, and multi-layered information visualization for military command-and-control and air traffic management.

The plausibility of main viewscreen technology depends heavily on which components are emphasized. Current display technology already achieves large-scale, high-resolution visualization, and sensor fusion algorithms already integrate multiple data streams in aerospace and naval contexts. The speculative leap lies in the assumed sensor capabilities, particularly those that would violate known physics: faster-than-light detection conflicts with the light-speed limit on signal propagation, and perfect resolution at astronomical distances conflicts with the diffraction limit of any finite aperture. Seamless real-time processing of vast data volumes is a further, though less fundamental, assumption. Holographic and volumetric display research continues to advance, though practical implementations face constraints in brightness, viewing angle, and power consumption. Realizing the concept would require breakthroughs in computational efficiency, sensor miniaturization, and display technology, along with answers to fundamental physics questions about what information can actually be gathered across vast distances in space. As command centers increasingly adopt immersive visualization and AI-assisted data interpretation, elements of the main viewscreen concept are gradually moving from speculation toward engineered reality, while the more exotic sensor capabilities remain firmly in the realm of narrative imagination.
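The limits on imaging across vast distances can be made concrete with two standard relations: the Rayleigh criterion for angular resolution, θ ≈ 1.22 λ / D, and the light-travel delay, t = d / c. The sketch below is a rough back-of-the-envelope calculation, not a sensor model; the function names and the example target (a 1 km feature seen from one light-year in 550 nm visible light) are assumptions chosen for illustration.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0   # exact, by definition of the metre
LIGHT_YEAR_M = 9.4607304725808e15    # one Julian light-year in metres


def min_aperture_m(feature_m, distance_m, wavelength_m):
    """Smallest aperture diameter that can resolve a feature.

    Uses the Rayleigh criterion, theta ~= 1.22 * wavelength / D, with
    the small-angle approximation theta ~= feature / distance.
    """
    return 1.22 * wavelength_m * distance_m / feature_m


def light_delay_s(distance_m):
    """Time for light emitted at the target to reach the observer."""
    return distance_m / SPEED_OF_LIGHT_M_S


# Hypothetical scenario: resolve a 1 km feature from one light-year away.
aperture = min_aperture_m(1_000.0, LIGHT_YEAR_M, 550e-9)
delay = light_delay_s(LIGHT_YEAR_M)

print(f"required aperture: {aperture / 1000:.0f} km")
print(f"image age on arrival: {delay / 86_400:.2f} days")
```

Under these assumptions the required aperture is roughly 6,300 km, comparable to Earth's radius, and the image is a year old when it arrives. A viewscreen showing a sharp, live picture of a distant ship therefore depends on exactly the speculative sensor physics the narrative supplies.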