Affective Data Governance

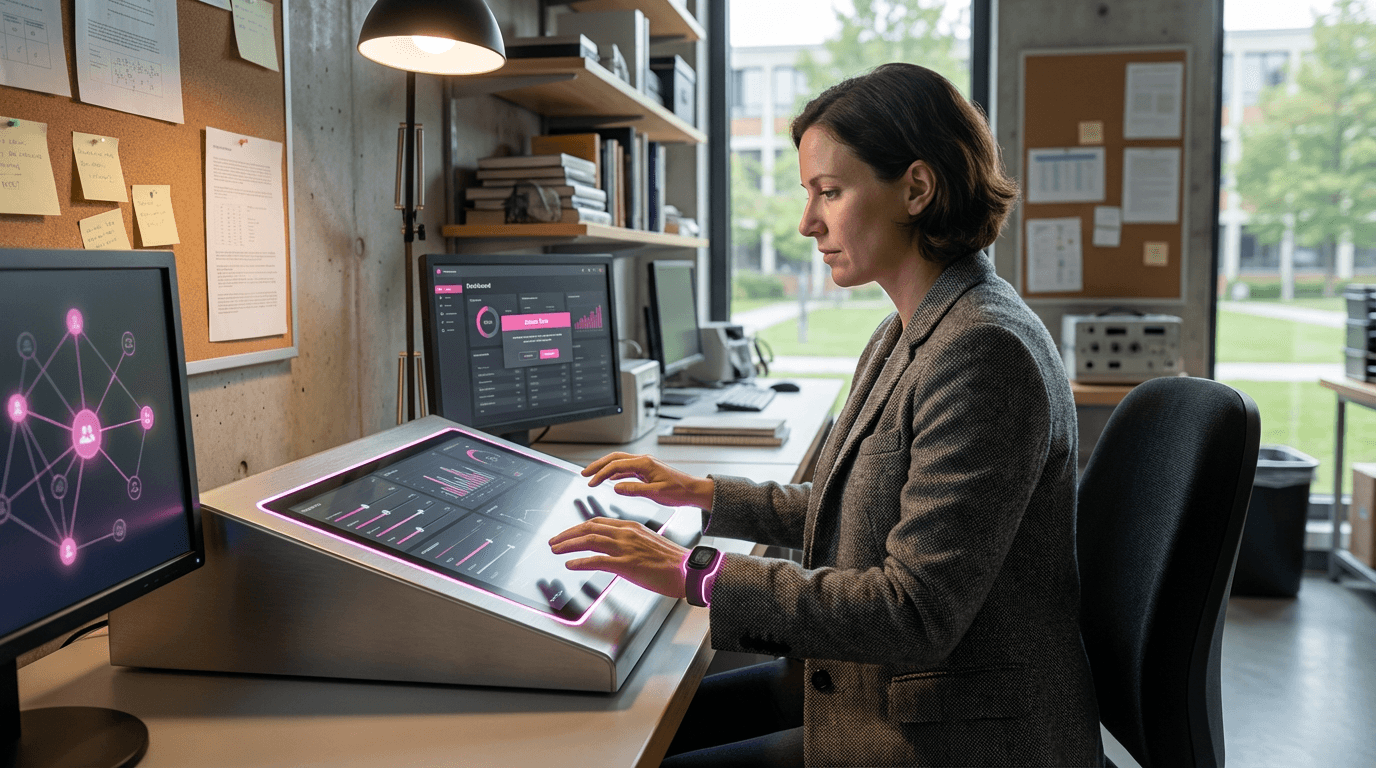

Affective data governance addresses a critical gap in contemporary data protection frameworks: the collection, analysis, and commercialization of emotional and behavioral signals. As sensors, cameras, and AI systems become increasingly adept at detecting facial expressions, vocal tone, physiological responses, and behavioral patterns, they generate vast streams of intimate data that reveal users' emotional states, psychological vulnerabilities, and unconscious reactions. Unlike traditional personal data such as names or addresses, affective data captures the involuntary and deeply personal dimensions of human experience: information that individuals may not even be aware they are revealing. Affective data governance encompasses both the policy frameworks that define acceptable use of such data and the technical infrastructure that enforces those boundaries, including consent management systems, data minimization protocols, automated deletion mechanisms, and audit trails that track how emotional signals are captured and processed across different contexts.
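As a rough illustration of two of the enforcement mechanisms named above, automated deletion and audit trails, the following Python sketch purges affective records older than a retention window and logs each deletion. The names `AffectiveRecord` and `purge_expired`, the record fields, and the 7-day window used in the usage lines are hypothetical choices made for this example; they do not come from any particular standard or product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AffectiveRecord:
    """Hypothetical stored emotional signal (illustrative fields only)."""
    subject_id: str
    signal: str            # e.g. "vocal_tone", "facial_expression"
    captured_at: datetime


def purge_expired(records, retention, now, audit_log):
    """Return the records still inside the retention window.

    Every record older than `retention` is dropped, and one audit
    entry describing the deletion is appended to `audit_log`.
    """
    kept = []
    for record in records:
        if now - record.captured_at > retention:
            audit_log.append({
                "event": "deleted",
                "subject_id": record.subject_id,
                "signal": record.signal,
                "at": now.isoformat(),
            })
        else:
            kept.append(record)
    return kept


# Usage: an assumed 7-day retention limit, chosen only for illustration.
now = datetime(2024, 1, 31, tzinfo=timezone.utc)
records = [
    AffectiveRecord("user-1", "vocal_tone", datetime(2024, 1, 1, tzinfo=timezone.utc)),
    AffectiveRecord("user-2", "facial_expression", datetime(2024, 1, 30, tzinfo=timezone.utc)),
]
audit_log = []
remaining = purge_expired(records, timedelta(days=7), now, audit_log)
```

In a real deployment the audit log would need to be tamper-evident and the deletion would have to reach backups and derived data as well; the sketch only shows the basic shape of the policy check.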

The commercial incentives to harvest affective data are substantial, because emotional insights enable unprecedented levels of behavioral prediction, persuasion, and personalization. Advertisers seek to identify moments of vulnerability or receptiveness, employers may attempt to monitor worker engagement or stress levels, and platforms can optimize content to maximize emotional engagement regardless of user wellbeing. Without robust governance mechanisms, these incentives create profound power asymmetries in which organizations possess intimate knowledge of individuals' emotional lives while users lack meaningful control over, or even awareness of, how their affective responses are being captured and exploited. Traditional data protection regulations often prove inadequate for affective data for two reasons: they were designed for explicit information rather than inferred emotional states, and the contextual nature of emotional expression makes blanket consent mechanisms insufficient. What feels appropriate to share in a healthcare setting differs fundamentally from what feels appropriate in a retail environment.

Early implementations of affective data governance are emerging across multiple sectors, particularly in healthcare applications, where emotional monitoring may support mental health treatment, and in educational technology, where student engagement tracking raises significant ethical concerns. Some jurisdictions are beginning to classify certain categories of affective data as sensitive information requiring enhanced protections, while technology providers are developing granular consent interfaces that allow users to specify which emotional signals can be collected, in which contexts, and for what purposes. Research institutions and advocacy organizations are working to establish norms around affective data retention limits, the right to emotional privacy, and prohibitions on certain high-risk applications such as emotion-based hiring decisions. As affective computing capabilities continue to advance, robust governance frameworks will become essential infrastructure for preserving human dignity and autonomy in an era where our emotional lives are increasingly legible to machines and the organizations that deploy them.
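The granular consent model described above, in which a user permits a specific signal in a specific context for a specific purpose, might be represented roughly as follows. This is a minimal Python sketch under stated assumptions: `ConsentGrant`, `ConsentRegistry`, and the example signal, context, and purpose labels are all hypothetical and do not reflect any vendor's actual interface.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ConsentGrant:
    """One (signal, context, purpose) combination a user has approved."""
    signal: str     # e.g. "vocal_tone"
    context: str    # e.g. "healthcare"
    purpose: str    # e.g. "treatment_support"


@dataclass
class ConsentRegistry:
    """Per-user consent store; anything not explicitly granted is denied."""
    grants: set = field(default_factory=set)

    def allow(self, signal, context, purpose):
        self.grants.add(ConsentGrant(signal, context, purpose))

    def revoke(self, signal, context, purpose):
        # discard() is a no-op if the grant was never present
        self.grants.discard(ConsentGrant(signal, context, purpose))

    def is_permitted(self, signal, context, purpose):
        return ConsentGrant(signal, context, purpose) in self.grants


# Usage: consent given in a healthcare setting does not carry over to retail.
registry = ConsentRegistry()
registry.allow("vocal_tone", "healthcare", "treatment_support")

in_clinic = registry.is_permitted("vocal_tone", "healthcare", "treatment_support")
in_store = registry.is_permitted("vocal_tone", "retail", "advertising")

registry.revoke("vocal_tone", "healthcare", "treatment_support")
after_revoke = registry.is_permitted("vocal_tone", "healthcare", "treatment_support")
```

The default-deny design is the key choice here: collection is allowed only for exact matches, so consent given in one context never silently extends to another.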

Related Organizations

Advocacy group led by Rafael Yuste promoting the five ethical neurorights in international law.

The legislative body that passed the world's first constitutional amendment protecting neurorights.

The UK's independent regulator for data rights, providing specific guidance on AI and data protection.

Produces EEG headsets and the BCI-OS platform, allowing developers to build applications that respond to cognitive stress and facial expressions.

Produces 'Ethically Aligned Design' standards, addressing the legal and ethical implications of autonomous systems.

Think tank and advocacy group focused on data privacy issues.

Uses webcams to measure attention and emotion in response to video advertising.

Developers of Anura, an AI platform that measures blood pressure, heart rate, and stress levels via 30-second video selfies using Transdermal Optical Imaging.

An enterprise AI company specializing in conversational service automation, using tonal analysis to detect customer sentiment and emotion.