Neuromorphic Vision Sensors

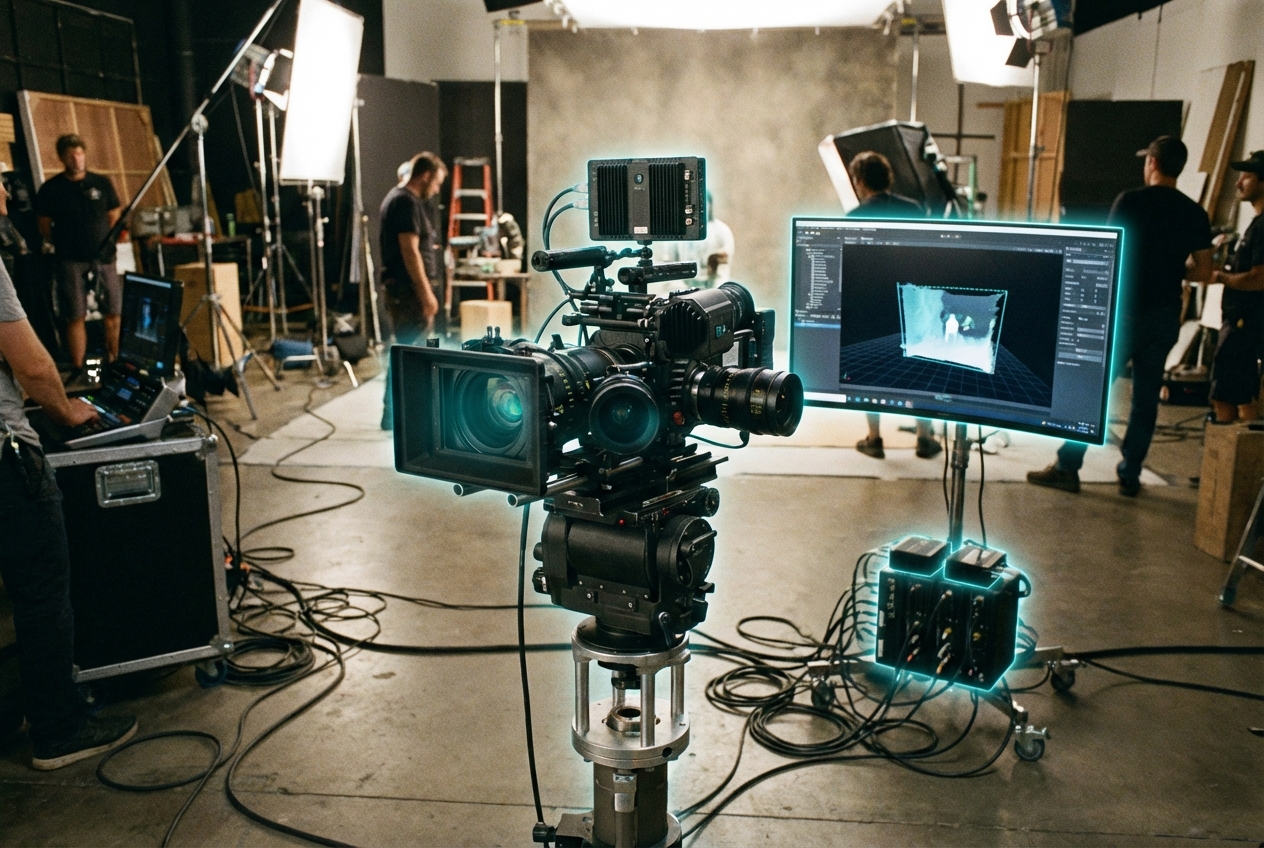

Neuromorphic vision sensors go beyond event cameras by embedding spiking neural networks next to the photodiodes, so that edge detections, optic flow, or gesture classifications are produced directly on the sensor. Instead of streaming raw frames to a GPU, the chip emits sparse spike trains or metadata describing motion trajectories and salience, which sharply reduces the bandwidth and computation required by downstream systems. Some platforms, such as Intel's Loihi research processors paired with event cameras or Prophesee's Metavision stack, let developers deploy custom neuromorphic models on or next to the sensor.
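To make the "sparse spike trains" idea concrete, here is a minimal Python sketch of the two stages involved. It assumes a simplified event-camera pixel model (a pixel fires when its log intensity changes by more than a contrast threshold, sampled here from frames rather than asynchronously) feeding a generic leaky integrate-and-fire (LIF) neuron. The function names, thresholds, and time constants are illustrative assumptions, not the on-chip design of any vendor mentioned above.

```python
import math
from dataclasses import dataclass


@dataclass
class Event:
    t: float       # timestamp in seconds
    x: int         # pixel column
    y: int         # pixel row
    polarity: int  # +1 = brighter (ON), -1 = darker (OFF)


def frames_to_events(frames, timestamps, threshold=0.2):
    """Simplified event-pixel model (hypothetical helper): a pixel emits an
    event when its log intensity has changed by at least `threshold` since its
    last event. Real sensors are asynchronous and may emit several events for
    a large change; this sketch emits at most one per pixel per sample."""
    height, width = len(frames[0]), len(frames[0][0])
    eps = 1e-6  # avoids log(0) for black pixels
    ref = [[math.log(frames[0][y][x] + eps) for x in range(width)]
           for y in range(height)]
    events = []
    for frame, t in zip(frames[1:], timestamps[1:]):
        for y in range(height):
            for x in range(width):
                log_i = math.log(frame[y][x] + eps)
                delta = log_i - ref[y][x]
                if abs(delta) >= threshold:
                    events.append(Event(t, x, y, 1 if delta > 0 else -1))
                    ref[y][x] = log_i  # reset this pixel's reference level
    return events


def lif_spikes(events, tau=0.01, weight=0.125, v_thresh=1.0):
    """Leaky integrate-and-fire neuron pooling a patch of pixels (hypothetical
    helper): each input event of either polarity adds `weight` to the membrane
    potential, which decays exponentially with time constant `tau` seconds.
    The neuron records an output spike and resets when it reaches `v_thresh`."""
    v, last_t, spikes = 0.0, None, []
    for ev in sorted(events, key=lambda e: e.t):
        if last_t is not None:
            v *= math.exp(-(ev.t - last_t) / tau)  # leak since previous event
        v += weight
        last_t = ev.t
        if v >= v_thresh:
            spikes.append(ev.t)
            v = 0.0
    return spikes


# A bright vertical bar sweeping across an 8x8 patch, one column per millisecond.
def bar_frame(col, size=8):
    return [[100.0 if x == col else 10.0 for x in range(size)] for _ in range(size)]


frames = [bar_frame(c) for c in range(4)]
timestamps = [0.0, 0.001, 0.002, 0.003]
events = frames_to_events(frames, timestamps)
spikes = lif_spikes(events)
print(len(events), len(spikes))  # prints: 48 6
```

Only the pixels the bar enters or leaves produce events (48 out of 192 pixel samples after the first frame), and the pooling neuron condenses those into 6 spikes. A single isolated noise event leaks away without triggering a spike, which is one simple way such a layer can suppress clutter before anything leaves the chip.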

Media robotics teams use these sensors in autonomous camera rigs, drones, and stabilizers because they capture fast motion without blur and can detect movement and issue corrections faster than a mechanical gimbal can respond. Sports broadcasters mount them above hoops or goalposts to detect impact points, while interactive art installations exploit their low latency for gesture-controlled projections. Because the sensors can output higher-level semantic cues, they also serve as inputs for adaptive live graphics that respond to performer motion in real time.

Tooling is still nascent: creative coders must learn neuromorphic programming paradigms, and no dominant standard exists for spike-based data interchange. IEEE and Khronos working groups are reportedly evaluating ways to encapsulate neuromorphic outputs alongside traditional video streams, and startups are building Unreal and TouchDesigner plugins that translate spikes into MIDI-like events. With prototypes at technology readiness levels (TRL) 4–5 already running in labs and select broadcast experiments, neuromorphic vision will gradually complement conventional cameras wherever ultra-low-latency perception enables new forms of responsive media.
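The spike-to-MIDI-like translation these plugins perform is not documented here, so the following is only a hypothetical Python sketch of one plausible mapping. It assumes events arrive as `(t, x, y, polarity)` tuples, splits the sensor into vertical zones, counts events per zone in fixed time windows, and turns each sufficiently active zone into a note whose velocity scales with activity. `NoteEvent`, `spikes_to_notes`, and all parameter values are illustrative inventions; only the 0–127 range for MIDI note numbers and velocities reflects the actual MIDI convention.

```python
from collections import Counter
from typing import NamedTuple


class NoteEvent(NamedTuple):
    time: float    # start of the time window, in seconds
    note: int      # MIDI-style note number (0-127)
    velocity: int  # MIDI-style velocity (1-127)


def spikes_to_notes(events, sensor_width, window=0.01, zones=4,
                    base_note=60, min_events=3, velocity_per_event=8):
    """Hypothetical spike-to-note mapping. `events` are (t, x, y, polarity)
    tuples. The sensor is split into `zones` vertical strips; events are
    counted per strip in fixed time windows, and each strip with at least
    `min_events` events yields one note whose velocity grows with the count."""
    counts = Counter()
    for t, x, _y, _polarity in events:
        window_index = int(t // window)
        zone = min(x * zones // sensor_width, zones - 1)
        counts[(window_index, zone)] += 1

    notes = []
    for (window_index, zone), n in sorted(counts.items()):
        if n < min_events:
            continue  # too few events: treat as sensor noise
        velocity = max(1, min(127, n * velocity_per_event))
        notes.append(NoteEvent(window_index * window, base_note + zone, velocity))
    return notes


# Example: a burst on the left, stray noise on the right, then a later burst in the middle.
events = (
    [(0.001 * i, 1, 3, 1) for i in range(1, 6)]  # 5 events, zone 0, first window
    + [(0.002, 7, 0, -1), (0.004, 7, 5, 1)]      # 2 events, zone 3: below min_events
    + [(0.015, 4, y, 1) for y in range(4)]       # 4 events, zone 2, second window
)
for note in spikes_to_notes(events, sensor_width=8):
    print(note)
# NoteEvent(time=0.0, note=60, velocity=40)
# NoteEvent(time=0.01, note=62, velocity=32)
```

Windowed counting trades a few milliseconds of latency for stable, musically usable events; a real integration would likely send these to a MIDI or OSC output and tune the zone layout and thresholds per installation.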

Related Organizations

Pioneer in event-based vision sensors and associated neuromorphic processing algorithms.

Swiss company specializing in Dynamic Vision Sensors (DVS) and neuromorphic software for robotics.

Develops stacked event-based vision sensors with integrated logic layers.

Switzerland · University

Lab led by Davide Scaramuzza.

Develops ultra-low-power mixed-signal neuromorphic processors and sensors for edge AI applications.

CelePixel

China · Startup

Sensor technology company.

Developer of the Akida neuromorphic processor IP and chips.

Australia · University

Hosts the International Centre for Neuromorphic Systems (ICNS).