Brain-Computer Media Interfaces (BCMI)

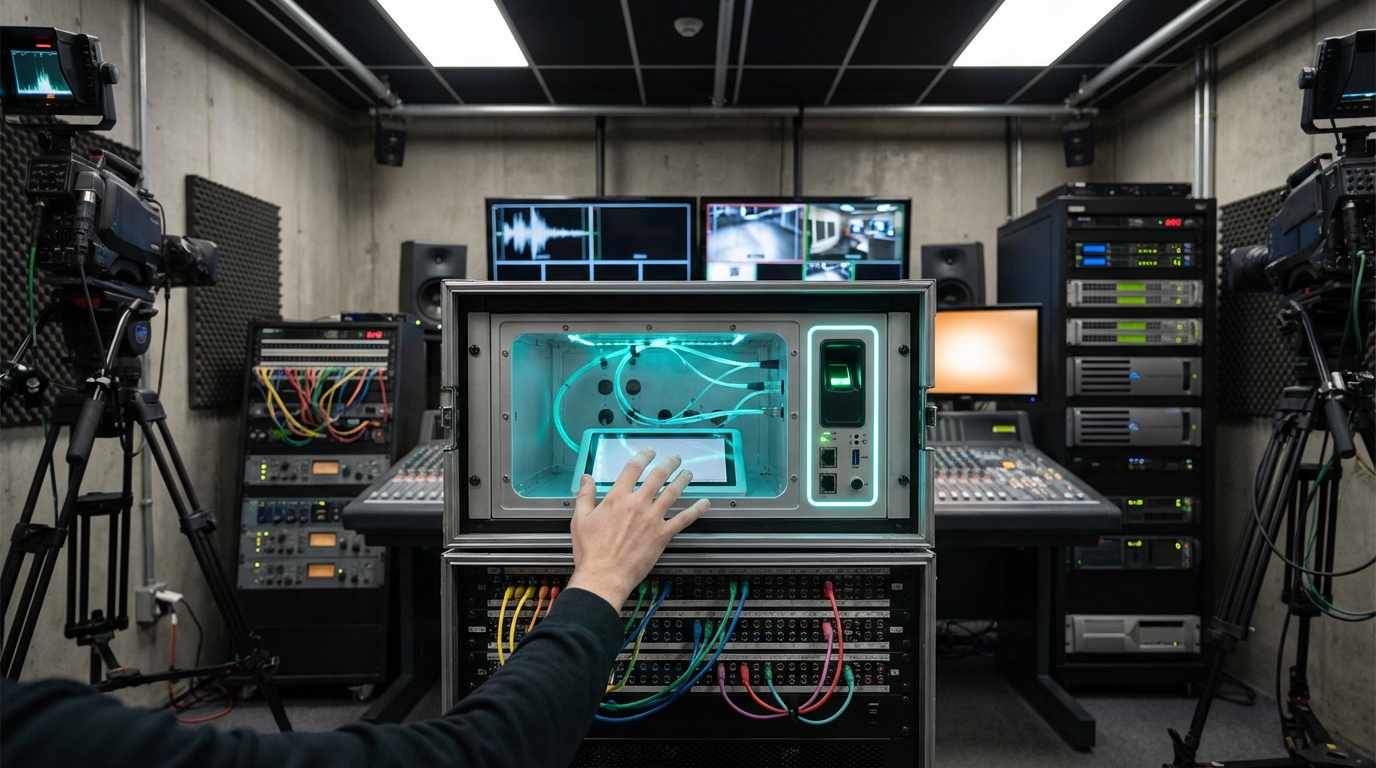

Brain-Computer Media Interfaces (BCMI) capture neural intent through non-invasive EEG caps, ear-EEG, or fNIRS headbands, then use deep learning decoders to map brain rhythms to interface primitives such as selection, navigation, or continuous control. Some labs combine neural signals with residual muscular input from facial EMG to stabilize commands, while others pair invasive BCIs with mixed reality headsets for film production experiments. The ambition is to let users steer media, edit footage, or puppeteer digital characters using cognitive focus rather than physical controllers.
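The mapping from brain rhythms to interface primitives can be illustrated with a minimal sketch. It deliberately swaps the deep learning decoder for a simple band-power threshold rule, so it shows the shape of the pipeline rather than any lab's actual method. The function names, the alpha/beta band edges, the `ratio_threshold` value, and the rule that ties each band to a primitive are all assumptions made for illustration, and the input is a synthetic sine wave rather than real EEG.

```python
import math

def band_power(samples, fs, lo_hz, hi_hz):
    """Total power of the DFT bins whose frequency lies in [lo_hz, hi_hz]."""
    n = len(samples)
    total = 0.0
    for k in range(1, n // 2):
        freq = k * fs / n
        if lo_hz <= freq <= hi_hz:
            re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(samples))
            im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(samples))
            total += (re * re + im * im) / (n * n)
    return total

def decode_primitive(samples, fs, ratio_threshold=2.0):
    """Hypothetical rule: beta-dominant -> select, alpha-dominant -> idle, else navigate."""
    alpha = band_power(samples, fs, 8.0, 12.0)   # assumed alpha band
    beta = band_power(samples, fs, 13.0, 30.0)   # assumed beta band
    if beta > ratio_threshold * alpha:
        return "select"
    if alpha > ratio_threshold * beta:
        return "idle"
    return "navigate"

def synth(freq_hz, fs=128, seconds=1.0):
    """Synthetic single-channel signal: a pure sine, standing in for EEG."""
    n = int(fs * seconds)
    return [math.sin(2 * math.pi * freq_hz * i / fs) for i in range(n)]

if __name__ == "__main__":
    print(decode_primitive(synth(10.0), 128))  # alpha-dominant window
    print(decode_primitive(synth(20.0), 128))  # beta-dominant window
```

A real decoder would replace `decode_primitive` with a trained, per-user model and would run on filtered, artifact-cleaned multi-channel data; the threshold rule here only marks where that model would sit.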

Early adopters are accessibility artists and research studios (think Meta’s CTRL-Labs lineage, OpenBCI’s Galea, or the Neural Impulse Actuator revival) who demonstrate silent captioning, audience participation in VR theatre, or “brain DJ” sets where neural arousal mixes samples. BCMI also intrigues animation houses that imagine directors blocking scenes merely by visualizing motion, and esports broadcasters exploring thought-driven spectator overlays. The modality blurs the line between intimate self-tracking and public performance, raising questions about mental privacy and fatigue.

For mass media use, the technology sits at TRL 3–4 (technology readiness level: proof of concept to lab validation), and regulatory scrutiny is mounting. IEEE, UNESCO, and Chile’s neuro-rights legislation advocate cognitive liberty, which pushes platform designers to store neural embeddings locally and give users hard kill switches. Hardware miniaturization, dry electrodes, and ML personalization pipelines are improving quickly, suggesting that within five years BCMI could surface as an accessible, optional control layer in creative software, while invasive systems remain limited to clinical or high-end installation contexts.
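The two design constraints named above, local storage of neural embeddings and a hard kill switch, can be sketched as a small on-device store. This is an illustrative assumption about how such a policy might look in code, not a description of any platform's implementation; `LocalNeuralStore` and all of its methods are hypothetical names.

```python
class LocalNeuralStore:
    """Hypothetical on-device store for neural embeddings with a hard kill switch."""

    def __init__(self):
        self._embeddings = []   # kept in local memory only
        self._killed = False

    def record(self, embedding):
        """Store one embedding; refuse silently once the kill switch has fired."""
        if self._killed:
            return False
        self._embeddings.append(list(embedding))
        return True

    def export(self):
        """No upload path exists in this sketch: embeddings never leave the device."""
        raise PermissionError("neural embeddings stay on device in this sketch")

    def kill(self):
        """Hard kill switch: wipe stored embeddings and block further recording."""
        self._embeddings.clear()
        self._killed = True

    def count(self):
        return len(self._embeddings)


if __name__ == "__main__":
    store = LocalNeuralStore()
    store.record([0.12, -0.40, 0.88])
    store.kill()
    print(store.count(), store.record([0.5]))
```

The point of the design is that the kill switch is irreversible for the session and that there is no code path for export at all, rather than an export path guarded by a setting.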

Related Organizations

Develops BCI-enabled headphones that detect focus and intent to control digital experiences.

Produces EEG headsets and the BCI-OS platform, allowing developers to build applications that respond to cognitive stress and facial expressions.

Home of the Affective Computing research group led by Rosalind Picard.

Creates open-source brain-computer interface tools and the Galea headset (integrating with VR) for researching physiological responses.

Social media and camera company developing AR spectacles.

Builds AI-powered BCI headsets with AR displays for accessibility and communication.

Develops high-performance BCI hardware, including the 'Unicorn Hybrid Black' interface for developers.

Develops the Muse EEG headband and software platform that adapts audio soundscapes in real time based on the user's brain state (meditation/focus).

Neuroscience company developing non-invasive brain recording technology (Flow and Flux).

Creator of SteamVR and its Motion Smoothing technology.