Neuromorphic Event Cameras

Neuromorphic event cameras mimic retinal ganglion cells: each pixel fires independently, and only when the brightness it sees changes by more than a set threshold, so the sensor emits a stream of time-stamped “events” rather than fixed-rate frames. That yields microsecond-scale latency, 120+ dB dynamic range, and much lower data rates when most of the scene is static. The sparse stream can be fed directly into spiking neural networks or binned into voxel grids over x, y, and time. Event sensors are available from Prophesee, iniVation, and Sony, and hybrid designs that also capture conventional frames let creatives blend event data with RGB footage.
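The firing rule described above can be sketched for a single pixel. This is an idealized model, not any vendor's circuit: the `Event` tuple, the `pixel_events` function, and the 0.2 log-intensity threshold are all illustrative assumptions, and real sensors add noise, refractory periods, and separate on/off thresholds.

```python
import math
from typing import List, NamedTuple, Sequence, Tuple


class Event(NamedTuple):
    t_us: int      # timestamp in microseconds
    x: int         # pixel column
    y: int         # pixel row
    polarity: int  # +1 = brighter, -1 = darker


def pixel_events(
    samples: Sequence[Tuple[int, float]],
    x: int,
    y: int,
    threshold: float = 0.2,  # assumed contrast threshold in log-intensity units
) -> List[Event]:
    """Emit events for one pixel from (t_us, intensity) samples.

    Intensities must be positive. An event fires each time the log
    intensity moves a full threshold away from the stored reference level.
    """
    events: List[Event] = []
    if not samples:
        return events
    ref = math.log(samples[0][1])
    for t_us, intensity in samples[1:]:
        level = math.log(intensity)
        # A large jump crosses the threshold several times, so it
        # produces several events with the same timestamp.
        while abs(level - ref) >= threshold:
            polarity = 1 if level > ref else -1
            ref += polarity * threshold
            events.append(Event(t_us, x, y, polarity))
    return events


# A pixel that stays constant, doubles in brightness, then drops to a quarter.
samples = [(0, 100.0), (10, 100.0), (20, 200.0), (30, 200.0), (40, 50.0)]
events = pixel_events(samples, x=3, y=7)
print(len(events), "events")  # the unchanged samples at t=10 and t=30 emit nothing
```

Because the comparison is made on log intensity, the same relative change triggers an event whether the pixel is in shadow or in direct light, which is where the wide dynamic range comes from.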

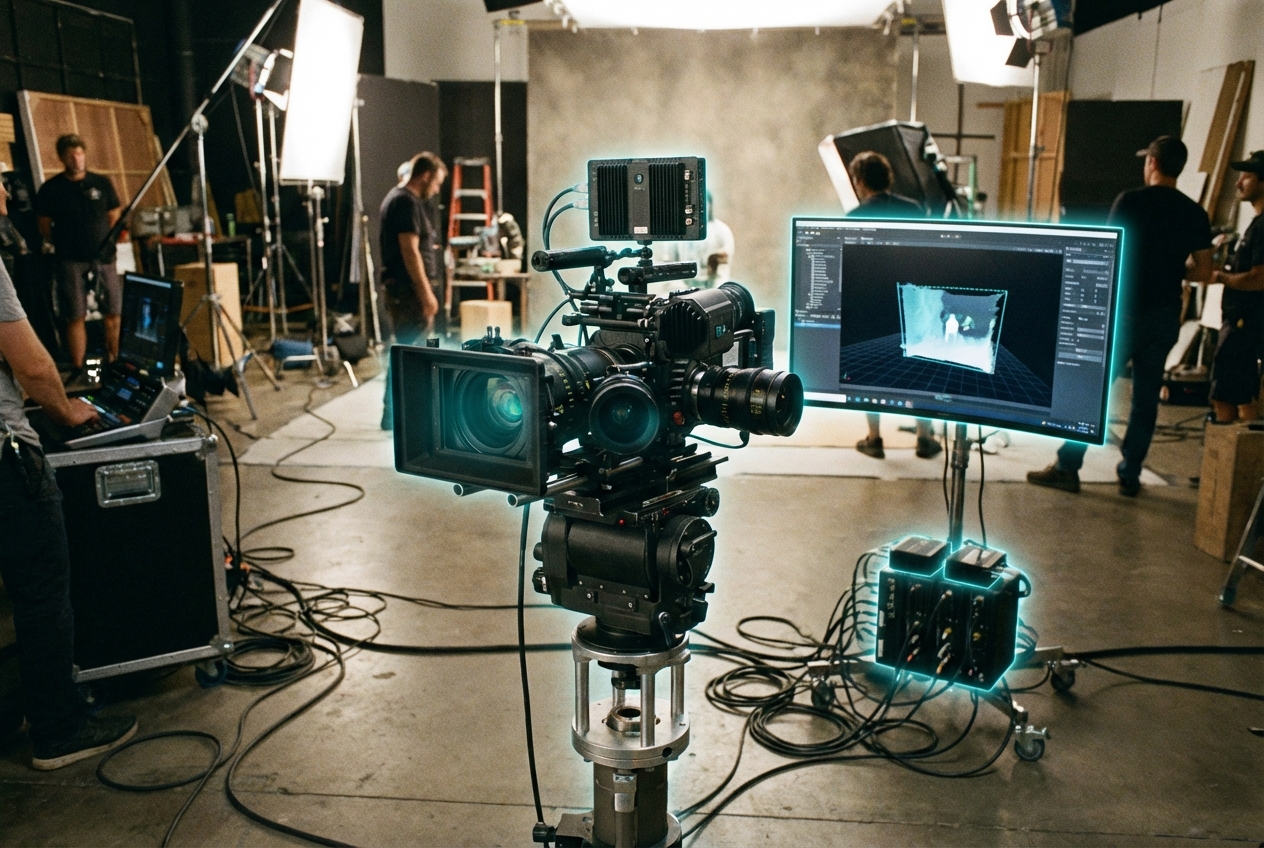

Cinematographers use event cameras to capture muzzle flashes, fireworks, or fast ball spin without rolling-shutter artifacts, while sports analytics teams derive trajectory metadata in real time for augmented broadcast graphics. VFX houses blend event streams with volumetric captures to produce stylized motion streaks, and robotics-heavy productions depend on them for stable tracking under strobe lighting. Because the data inherently encodes motion, editors gain new descriptors (motion vectors, dwell time, per-pixel motion energy) that enable adaptive storytelling.
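As a rough illustration of one such descriptor, the sketch below treats "per-pixel motion energy" as the number of events a pixel emits inside a time window. That definition is an assumption made for this example, as are the `motion_energy` name and the `(t_us, x, y, polarity)` tuple layout; production tools may weight events by polarity or recency instead.

```python
from collections import Counter
from typing import Iterable, Tuple

# Assumed event layout: (timestamp in microseconds, x, y, polarity)
EventTuple = Tuple[int, int, int, int]


def motion_energy(
    events: Iterable[EventTuple], t_start_us: int, t_end_us: int
) -> Counter:
    """Count events per pixel within the half-open window [t_start_us, t_end_us)."""
    energy: Counter = Counter()
    for t_us, x, y, _polarity in events:
        if t_start_us <= t_us < t_end_us:
            energy[(x, y)] += 1
    return energy


events = [(5, 1, 1, 1), (10, 1, 1, -1), (15, 2, 3, 1), (100, 1, 1, 1)]
energy = motion_energy(events, 0, 50)
print(energy.most_common(1))  # busiest pixel in the window
```

A map like this could drive an edit decision such as cutting when activity in a region drops below a chosen level, though the threshold would need tuning per sensor and scene.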

Tooling remains immature: pipelines must translate asynchronous events into formats creative suites understand, and calibrating event-RGB rigs is nontrivial. Research groups are building NeRF-like reconstructions driven by event data, and Khronos is evaluating extensions to glTF for sparse temporal data. As silicon costs drop and standard SDKs ship with Unreal plugins, neuromorphic event capture is likely to graduate from research labs to on-set specialty cameras, giving directors a new sensor modality for kinetic narratives.
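One common way to bridge asynchronous events and frame-based tools is to accumulate events into fixed-interval frames. The following is a minimal sketch of that idea, assuming events arrive as `(t_us, x, y, polarity)` tuples; the `events_to_frames` function and its parameters are hypothetical and do not correspond to any particular SDK.

```python
from typing import Iterable, List, Tuple

# Assumed event layout: (timestamp in microseconds, x, y, polarity)
EventTuple = Tuple[int, int, int, int]
Frame = List[List[int]]  # rows of signed per-pixel event sums


def events_to_frames(
    events: Iterable[EventTuple],
    width: int,
    height: int,
    frame_interval_us: int,
) -> List[Frame]:
    """Sum event polarities per pixel into consecutive fixed-length frames."""
    events = list(events)
    if not events:
        return []
    t0 = min(e[0] for e in events)
    t_last = max(e[0] for e in events)
    n_frames = (t_last - t0) // frame_interval_us + 1
    frames = [
        [[0] * width for _ in range(height)] for _ in range(n_frames)
    ]
    for t_us, x, y, polarity in events:
        # Skip events that fall outside the declared sensor size.
        if 0 <= x < width and 0 <= y < height:
            frames[(t_us - t0) // frame_interval_us][y][x] += polarity
    return frames


# Roughly 24 fps: one frame every 41,667 microseconds.
events = [(0, 0, 0, 1), (10, 0, 0, 1), (41_700, 1, 0, -1)]
frames = events_to_frames(events, width=2, height=1, frame_interval_us=41_667)
print(len(frames), "frames")
```

Binning discards the sub-frame timing that makes event data attractive, so this is best seen as a preview or interchange step rather than a replacement for the raw stream.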

Related Organizations

Pioneer in event-based vision sensors and associated neuromorphic processing algorithms.

Swiss company specializing in Dynamic Vision Sensors (DVS) and neuromorphic software for robotics.

Home to the Robotics and Perception Group (RPG).

Develops stacked event-based vision sensors with integrated logic layers.

Develops ultra-low-power mixed-signal neuromorphic processors and sensors for edge AI applications.

Australia · University

Hosts the International Centre for Neuromorphic Systems (ICNS).

United States · Company

Leading developer of advanced digital imaging solutions.

Develops silicon spin qubits using advanced 300mm wafer manufacturing processes.

Developers of EELS (Exobiology Extant Life Surveyor), a snake-like modular robot designed for diverse terrains.