Dream-to-Video Decoders

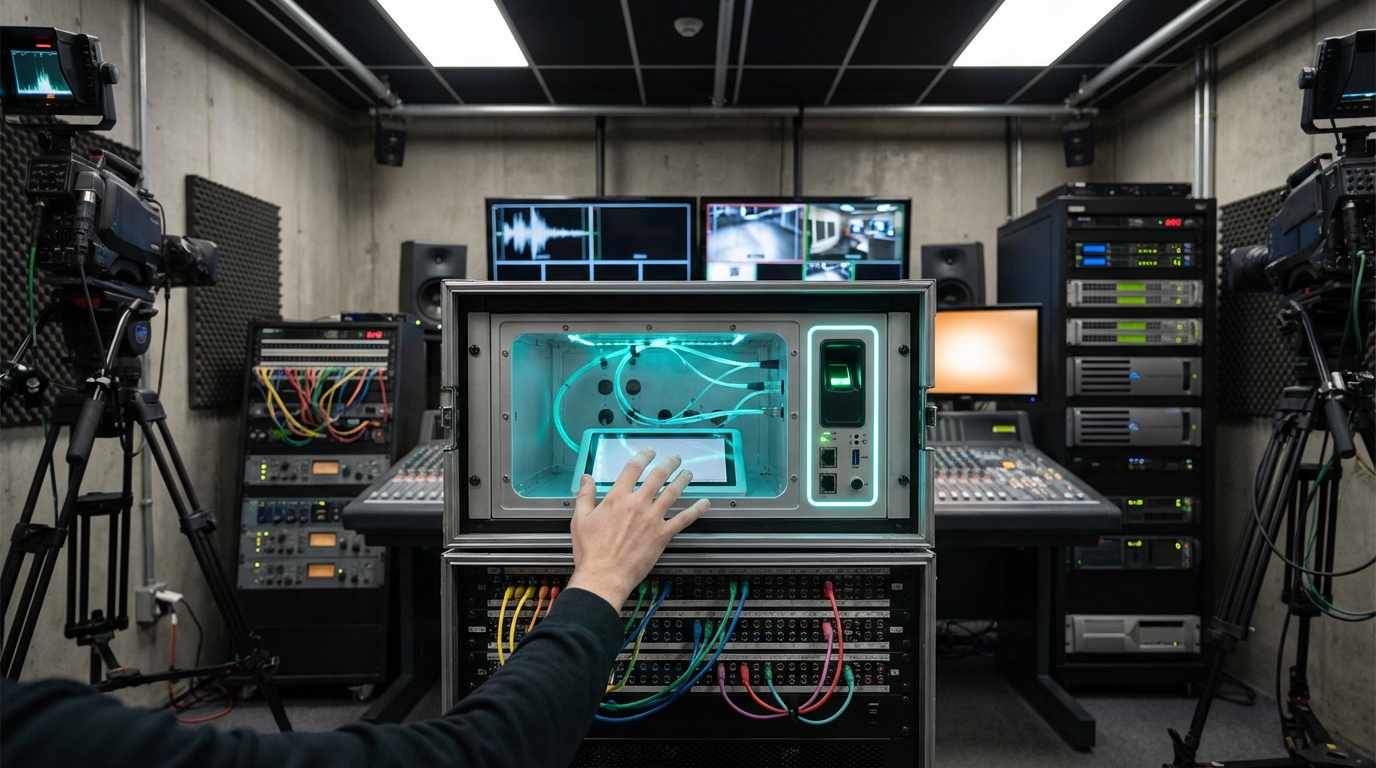

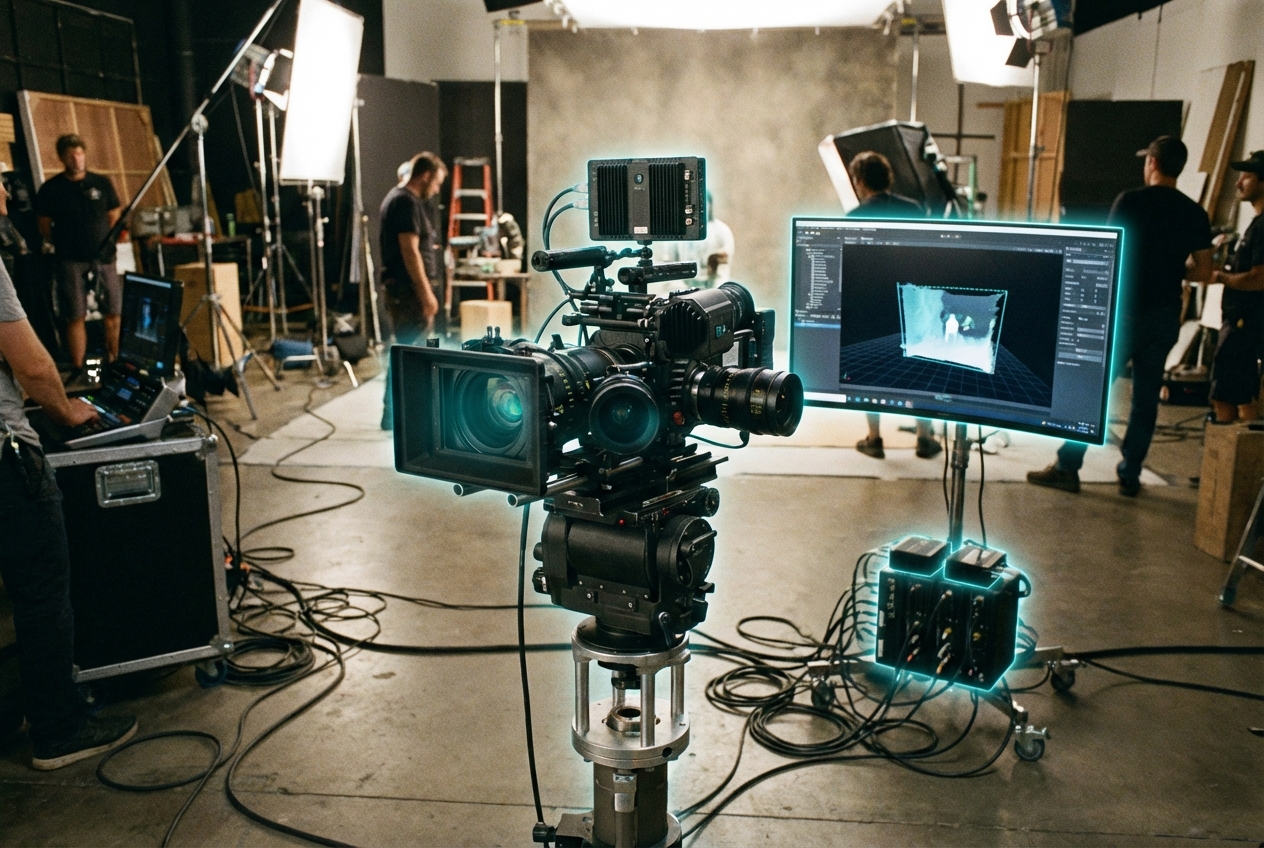

Dream-to-video decoders align neural recordings (fMRI voxels, MEG, or dense EEG) with visual latent spaces so they can reconstruct coarse video of what a subject sees or imagines. Training requires hours of paired data per participant: subjects watch clips while networks learn mappings from neural activity patterns to latent image tokens. During recall, the system samples from the learned distribution to generate impressionistic clips that reflect color, motion, and gist rather than fine detail.
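The mapping step described above can be illustrated with a deliberately simple stand-in. The sketch below assumes a linear ridge regression from neural feature vectors to latent vectors, fitted on synthetic paired data. The names `fit_ridge_decoder`, `decode_latents`, and `make_synthetic_pairs`, the array shapes, and the penalty `alpha` are all hypothetical choices for illustration. Real decoders typically use larger networks and a generative model to turn the predicted latents into video, neither of which is shown here.

```python
import numpy as np


def make_synthetic_pairs(n_samples=200, n_voxels=50, n_latent=8, noise=0.1, seed=0):
    """Create toy (neural, latent) pairs; a stand-in for real paired recordings."""
    rng = np.random.default_rng(seed)
    Z = rng.standard_normal((n_samples, n_latent))   # latent vectors of the viewed clips
    M = rng.standard_normal((n_latent, n_voxels))    # hypothetical latent-to-voxel mixing
    X = Z @ M + noise * rng.standard_normal((n_samples, n_voxels))  # noisy "recordings"
    return X, Z


def fit_ridge_decoder(X, Z, alpha=1.0):
    """Fit W minimizing ||Xc W - Zc||^2 + alpha * ||W||^2 on mean-centered data."""
    X_mean = X.mean(axis=0)
    Z_mean = Z.mean(axis=0)
    Xc = X - X_mean
    Zc = Z - Z_mean
    # Closed-form ridge solution: (Xc^T Xc + alpha I) W = Xc^T Zc
    A = Xc.T @ Xc + alpha * np.eye(X.shape[1])
    W = np.linalg.solve(A, Xc.T @ Zc)
    return W, X_mean, Z_mean


def decode_latents(X, W, X_mean, Z_mean):
    """Predict latent vectors for new neural recordings."""
    return (X - X_mean) @ W + Z_mean


def mean_latent_correlation(Z_true, Z_pred):
    """Average Pearson correlation across latent dimensions."""
    corrs = [np.corrcoef(Z_true[:, j], Z_pred[:, j])[0, 1] for j in range(Z_true.shape[1])]
    return float(np.mean(corrs))
```

A typical use would fit on one portion of the paired data and evaluate on held-out samples, for example `W, xm, zm = fit_ridge_decoder(X[:150], Z[:150])` followed by `decode_latents(X[150:], W, xm, zm)`. In a full pipeline the predicted latents would then condition a generative model; on real recordings the held-out correlation would be expected to fall far below what this clean synthetic setup produces.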

Although fidelity is low, the technology hints at new storytelling forms: scientists visualizing dreams, therapists externalizing traumatic memories, or artists collaborating with their subconscious. Media labs explore legal, ethical, and consent frameworks, imagining future “neuro-cinemas” where audiences share cognitive content directly.

The field sits firmly at technology readiness level (TRL) 2 and is largely confined to labs with access to MRI scanners. Ethical concerns such as privacy and coercion dominate regulatory discussions, with neuro-rights advocates pushing for explicit protections before commercialization. Advances in non-invasive sensors and shared decoders may eventually bring the technology into creative toolkits, but for now it remains a provocative glimpse of direct imagination capture.

Related Organizations

Singapore's flagship university.

United States · University

Neuroscience lab at UC Berkeley led by Jack Gallant.

Netherlands · University

Leading research centre for cognitive neuroscience.

United States · University

Major public research university.

Neuroscience company developing non-invasive brain recording technology (Flow and Flux).

Neurotechnology company developing implantable brain-machine interfaces.

Social media and camera company developing AR spectacles.