Consumer LiDAR Sensors

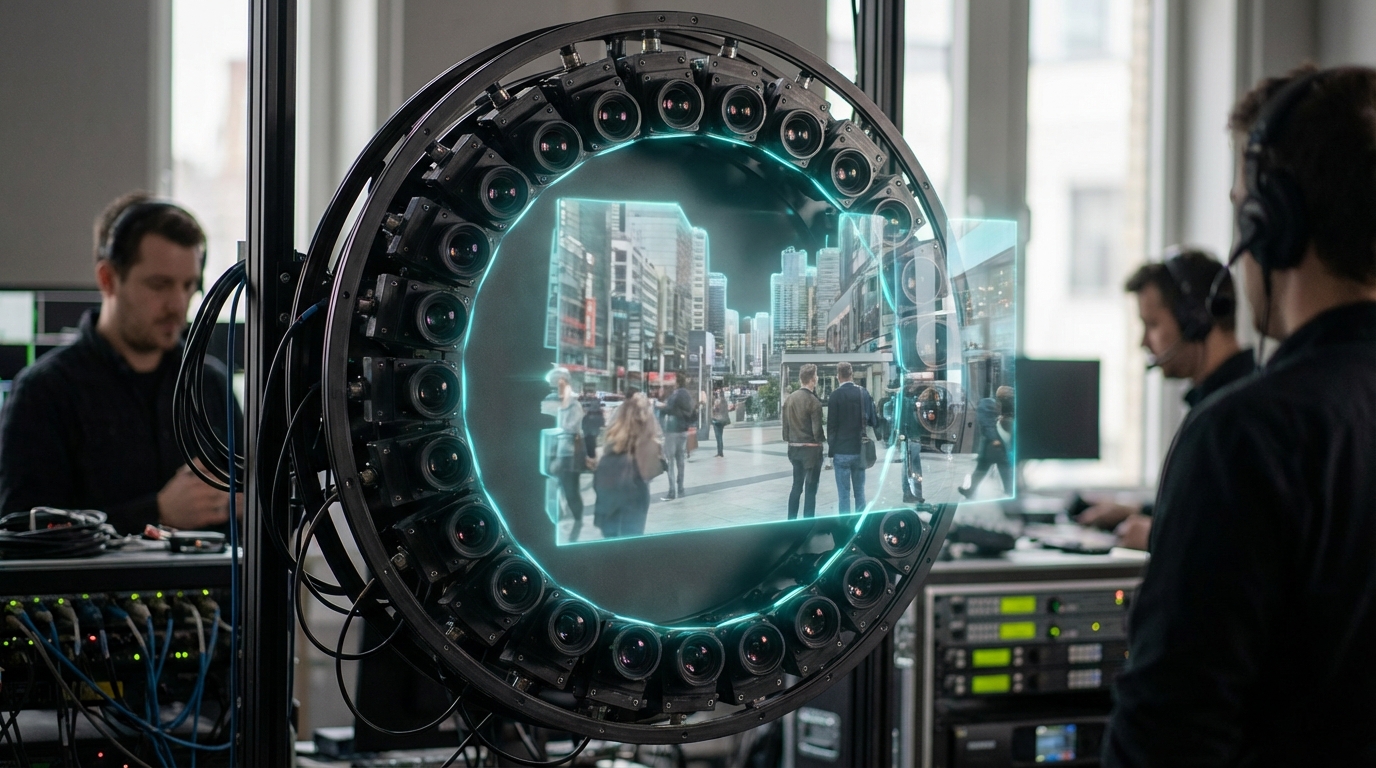

Consumer LiDAR sensors, now embedded in flagship phones, tablets, and creator cameras, combine VCSEL emitters, SPAD detectors, and beam-shaping optics to deliver centimeter-accurate point clouds on demand. On-device software fuses the asynchronous depth samples with inertial data and RGB frames, producing meshes that can be exported as USDZ or glTF assets without a round trip through a desktop. Because the sensors are eye-safe and low-power, location scouts, streamers, and hobbyists can scan spaces repeatedly throughout a shoot.
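Centimeter accuracy is demanding: 1 cm of range corresponds to a round-trip difference of only about 67 ps (2 × 0.01 m ÷ c). SPAD-based direct time-of-flight sensors get there statistically, by timestamping many single-photon detections and histogramming them. The sketch below is a simplified model of that idea, assuming one target per pixel, a hypothetical 50 ps histogram bin (about 7.5 mm of range per bin), and no pile-up or crosstalk correction. The function names and parameters are illustrative, not any vendor's firmware API.

```python
import numpy as np

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_round_trip(t_seconds: float) -> float:
    """One-way distance for a round-trip time of flight: d = c * t / 2."""
    return SPEED_OF_LIGHT_M_PER_S * t_seconds / 2.0


def estimate_distance(timestamps_ps: np.ndarray,
                      bin_width_ps: float = 50.0,
                      max_range_m: float = 5.0) -> float:
    """Estimate target distance from SPAD photon arrival times (picoseconds).

    Ambient photons land roughly uniformly across the histogram, while laser
    returns pile up near the true round-trip time, so the tallest bin marks
    the target. Assumes one target per pixel and ignores pile-up correction.
    """
    max_t_ps = 2.0 * max_range_m / SPEED_OF_LIGHT_M_PER_S * 1e12
    n_bins = int(np.ceil(max_t_ps / bin_width_ps))
    counts, edges = np.histogram(
        timestamps_ps, bins=n_bins, range=(0.0, n_bins * bin_width_ps)
    )
    peak = int(np.argmax(counts))
    centre_ps = 0.5 * (edges[peak] + edges[peak + 1])
    return distance_from_round_trip(centre_ps * 1e-12)


if __name__ == "__main__":
    # Synthetic frame: a wall at 2.0 m plus uniformly distributed ambient light.
    rng = np.random.default_rng(0)
    true_t_ps = 2.0 * 2.0 / SPEED_OF_LIGHT_M_PER_S * 1e12   # about 13,343 ps
    ambient = rng.uniform(0.0, 33_000.0, size=2_000)
    returns = rng.normal(true_t_ps, 30.0, size=500)         # 30 ps timing jitter
    print(f"{estimate_distance(np.concatenate([ambient, returns])):.3f} m")
```

Real sensors refine the peak position (for example by interpolating between neighbouring bins) rather than taking the bin centre, which is why the bin width alone does not cap accuracy.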

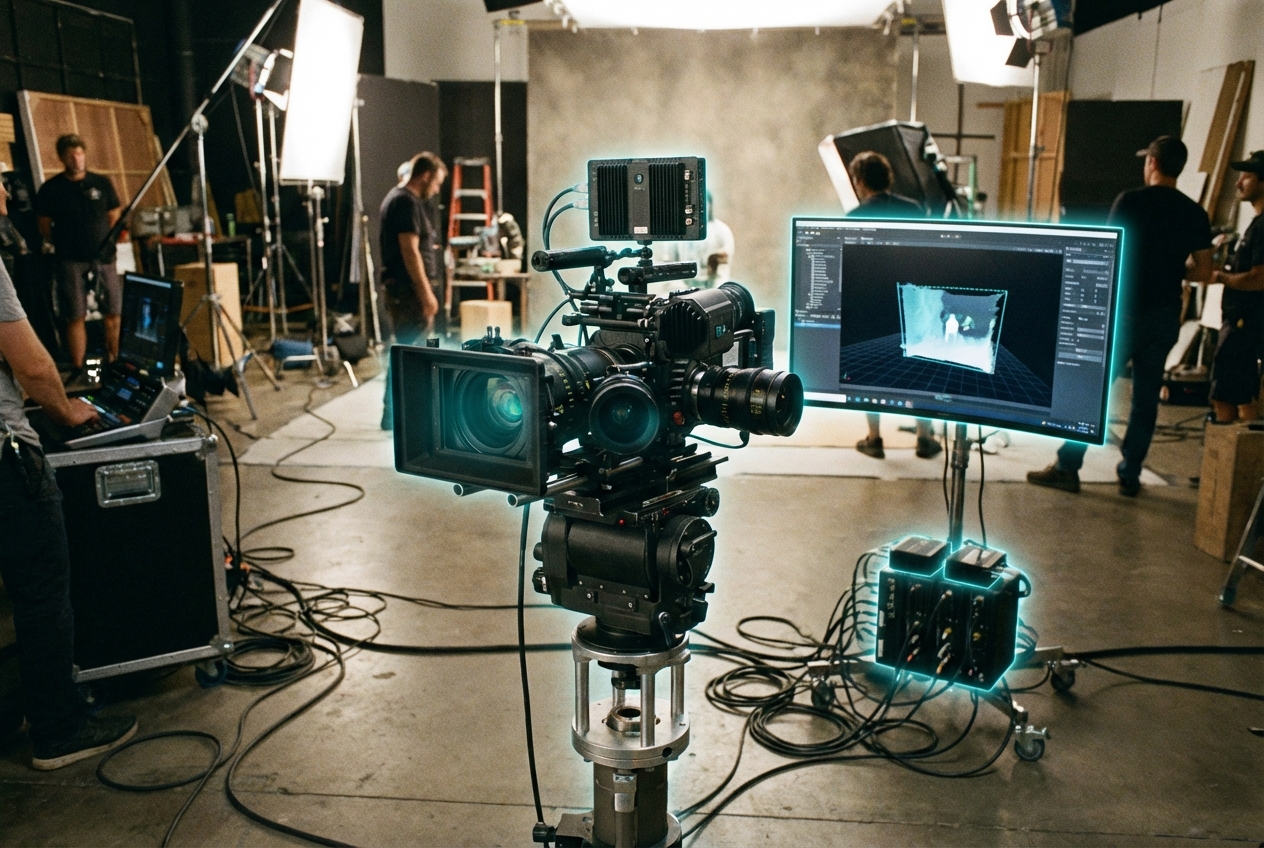

For media workflows, that means instant previz, set extension, and AR occlusion maps without hiring a surveying crew. TikTok creators capture their bedrooms to anchor volumetric stories; virtual production crews scan LED stages each morning to confirm geometry alignment; live-shopping apps use depth to size furniture in a shopper’s apartment with confidence. Spatial advertisers and theme parks use consumer-grade LiDAR to personalize experiences to the viewer’s actual surroundings while staying within latency budgets.
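Furniture sizing and AR occlusion both start from the same step: turning a depth pixel into a 3D point with the pinhole camera model, then comparing depths or distances. The sketch below assumes a metric depth map (metres, indexed as row, column) and intrinsics already scaled to the depth map's resolution; `Intrinsics`, `measure`, and `occlusion_mask` are hypothetical helpers, not ARKit or ARCore APIs.

```python
from dataclasses import dataclass

import numpy as np


@dataclass(frozen=True)
class Intrinsics:
    """Pinhole intrinsics in pixels, assumed scaled to the depth map's resolution."""
    fx: float
    fy: float
    cx: float
    cy: float


def unproject(u: int, v: int, depth_m: float, k: Intrinsics) -> np.ndarray:
    """Back-project pixel (u = column, v = row) at metric depth into camera space."""
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return np.array([x, y, depth_m])


def measure(p1: tuple[int, int], p2: tuple[int, int],
            depth_map: np.ndarray, k: Intrinsics) -> float:
    """Straight-line distance in metres between two tapped pixels (u, v)."""
    a = unproject(*p1, float(depth_map[p1[1], p1[0]]), k)
    b = unproject(*p2, float(depth_map[p2[1], p2[0]]), k)
    return float(np.linalg.norm(a - b))


def occlusion_mask(real_depth: np.ndarray, virtual_depth: np.ndarray) -> np.ndarray:
    """True where the real surface is closer than the virtual content, so hide it."""
    return real_depth < virtual_depth


if __name__ == "__main__":
    # Hypothetical 256 x 192 depth map of a flat wall 2 m away.
    k = Intrinsics(fx=200.0, fy=200.0, cx=128.0, cy=96.0)
    wall = np.full((192, 256), 2.0)
    print(measure((28, 96), (228, 96), wall, k))  # two points 2 m apart
```

In a real app the two pixels would come from user taps, and the intrinsics from the capture framework's camera metadata rather than fixed numbers.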

Challenges include limited range outdoors, multipath noise on reflective surfaces, and privacy debates about scanning crowds. Even so, the technology sits at TRL 8 and adoption is accelerating: ARKit and RealityKit expose LiDAR depth natively, ARCore’s Depth API can draw on a time-of-flight sensor where a device has one, and USDZ carries the resulting scans, while MPEG’s Immersive Video group works on depth codecs optimized for mobile capture. Expect next-gen devices to pair LiDAR with neural reconstruction so anyone with a phone can contribute production-quality assets to volumetric story pipelines.
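A common first defense against multipath and edge noise is to discard depth samples the sensor flags as unreliable and then reject local outliers. The sketch below is a generic heuristic, not ARKit's or ARCore's internal filtering. It assumes a three-level integer confidence map in the spirit of the low/medium/high confidence levels ARKit reports alongside LiDAR scene depth; `filter_depth` and its thresholds are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def filter_depth(depth_m: np.ndarray, confidence: np.ndarray,
                 min_confidence: int = 2, rel_tol: float = 0.05) -> np.ndarray:
    """Return a copy of depth_m with unreliable pixels replaced by NaN.

    confidence is assumed to use 0 = low, 1 = medium, 2 = high.
    A pixel is dropped if its confidence is below min_confidence, or if it
    differs from the median of its 3x3 neighbourhood by more than rel_tol
    times that median (a crude test for multipath spikes and flying pixels).
    """
    padded = np.pad(depth_m, 1, mode="edge")
    windows = sliding_window_view(padded, (3, 3))       # shape (H, W, 3, 3)
    local_median = np.median(windows, axis=(-2, -1))
    outlier = np.abs(depth_m - local_median) > rel_tol * local_median
    filtered = depth_m.astype(float)                     # astype returns a copy
    filtered[(confidence < min_confidence) | outlier] = np.nan
    return filtered


if __name__ == "__main__":
    depth = np.full((5, 5), 2.0)
    depth[2, 2] = 3.0          # isolated spike, e.g. a multipath return off glass
    conf = np.full((5, 5), 2)
    conf[0, 0] = 1             # one medium-confidence pixel
    print(np.isnan(filter_depth(depth, conf)).sum())  # 2 pixels dropped
```

A 3x3 median only catches isolated spikes; a mirror or window that corrupts a whole region typically needs masking from other cues, such as segmentation of the RGB frame.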

Related Organizations

Developing 'Apple Intelligence', a personal intelligence system integrated into iOS/macOS that uses on-device context to mediate tasks and information.

United States · Company

A primary supplier of VCSEL (Vertical-Cavity Surface-Emitting Laser) arrays used for 3D sensing and LiDAR.

Develops stacked event-based vision sensors with integrated logic layers.

Creator of FlightSense time-of-flight (ToF) sensors widely used in Android smartphones for depth sensing.

A major manufacturer of engineered materials and optoelectronic components, including VCSELs for 3D sensing; formerly known as II-VI.

AR platform company that develops the Lightship ARDK and owns Scaniverse, a 3D scanning app leveraging LiDAR.

Spatial data company that integrated mobile LiDAR support into its capture app, democratizing real estate digital twins.

Pioneers in mobile computer vision and depth sensing (Structure Sensor), now focused on SDKs for depth.

An app developer focusing on volumetric video recording using iOS LiDAR sensors.