Gaussian Splatting Rendering

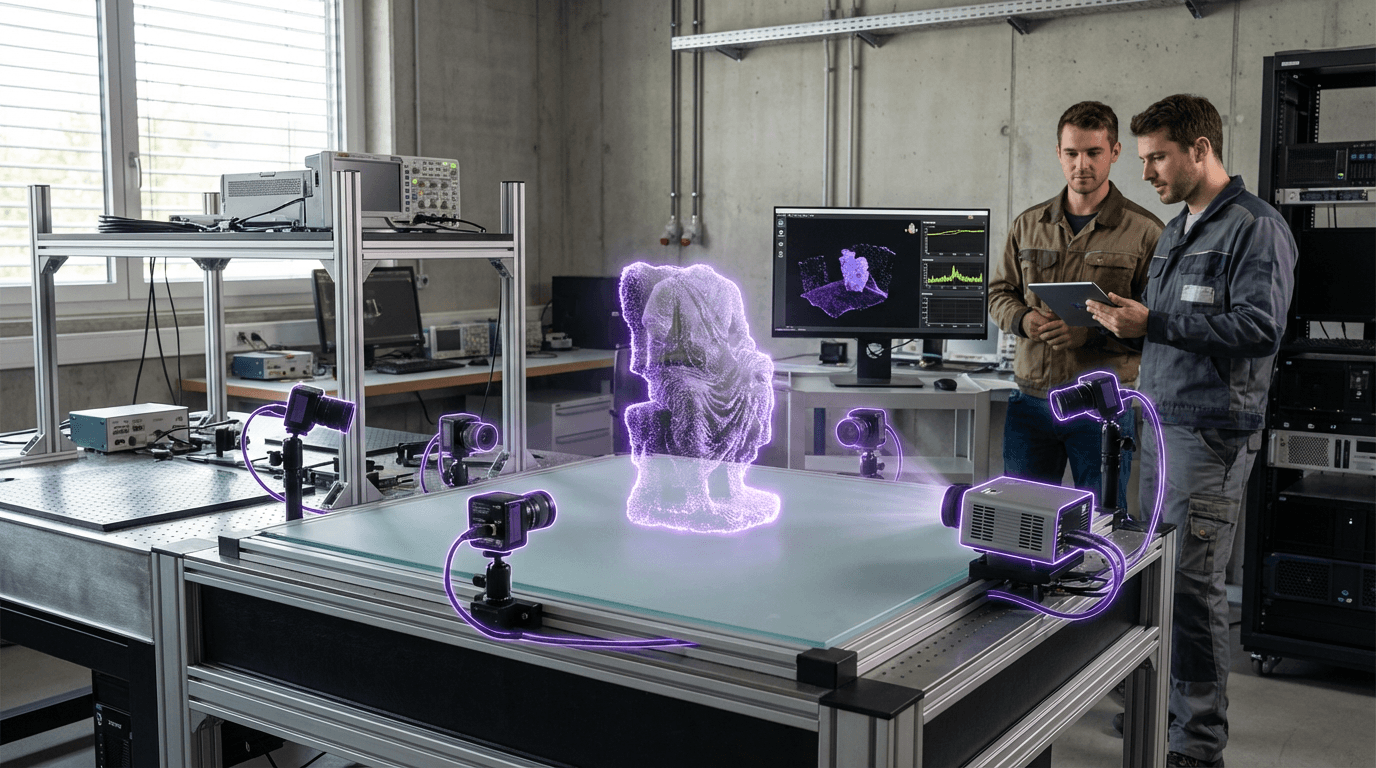

Gaussian Splatting Rendering is a technique for reconstructing and rendering photorealistic 3D environments from photographs. Unlike traditional polygon-based rendering, and unlike earlier neural radiance field (NeRF) approaches that required extensive computation time, it models a scene as a collection of up to millions of 3D Gaussian primitives. Each primitive is defined by a position, a scale and orientation (which together form its covariance), an opacity, and a color that can vary with viewing direction. Each Gaussian acts as a soft, blurred ellipsoid that is projected ("splatted") onto the 2D screen and alpha-blended with the other Gaussians in depth order, a process handled by a rasterizer. The system learns these parameters by gradient-based optimization against a set of photographs with known camera poses, typically starting from the sparse point cloud that structure-from-motion produces for those photographs, and adjusts them until rendered views match the originals. No neural network has to be evaluated at render time: the approach pairs the efficiency of rasterization-based graphics pipelines with the optimization machinery of neural methods, and reaches real-time frame rates, often dozens of frames per second or more, on consumer-grade GPUs.
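The blending step can be illustrated with a minimal Python sketch. It assumes the Gaussians have already been projected to screen space, and it shades a single pixel by compositing them front to back. The names `Splat2D`, `splat_alpha`, and `shade_pixel` are hypothetical and chosen for illustration only. Production rasterizers add tiling, GPU parallelism, alpha clamping, and view-dependent color, all of which are omitted here.

```python
import math
from dataclasses import dataclass
from typing import Iterable, Tuple

Color = Tuple[float, float, float]


@dataclass
class Splat2D:
    """A Gaussian after projection to screen space (hypothetical layout)."""
    mean: Tuple[float, float]        # pixel-space center (x, y)
    cov: Tuple[float, float, float]  # (a, b, c) for the 2x2 covariance [[a, b], [b, c]]
    opacity: float                   # peak opacity in [0, 1]
    color: Color                     # RGB in [0, 1]; view dependence omitted
    depth: float                     # camera-space depth, used only for sorting


def splat_alpha(s: Splat2D, px: float, py: float) -> float:
    """Opacity contributed by splat `s` at pixel (px, py)."""
    a, b, c = s.cov
    det = a * c - b * b              # assumed positive (valid covariance)
    dx, dy = px - s.mean[0], py - s.mean[1]
    # Squared Mahalanobis distance d^T * inverse(cov) * d, written out for 2x2.
    m = (c * dx * dx - 2.0 * b * dx * dy + a * dy * dy) / det
    return s.opacity * math.exp(-0.5 * m)


def shade_pixel(splats: Iterable[Splat2D], px: float, py: float,
                background: Color = (0.0, 0.0, 0.0)) -> Color:
    """Front-to-back alpha compositing of all splats at one pixel."""
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0              # fraction of light still passing through
    for s in sorted(splats, key=lambda sp: sp.depth):  # nearest first
        alpha = splat_alpha(s, px, py)
        for i in range(3):
            color[i] += transmittance * alpha * s.color[i]
        transmittance *= 1.0 - alpha
        if transmittance < 1e-4:     # early exit once the pixel is nearly opaque
            break
    # Whatever light is left comes from the background.
    return tuple(c + transmittance * bg for c, bg in zip(color, background))


# Usage: a half-transparent red splat in front of an opaque blue one.
near = Splat2D((5.0, 5.0), (4.0, 0.0, 4.0), 0.5, (1.0, 0.0, 0.0), depth=1.0)
far = Splat2D((5.0, 5.0), (4.0, 0.0, 4.0), 1.0, (0.0, 0.0, 1.0), depth=2.0)
pixel = shade_pixel([far, near], 5.0, 5.0)
```

Because every operation in this loop is differentiable with respect to the splat parameters, the same compositing rule can in principle be used during optimization to compare rendered pixels against the input photographs.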

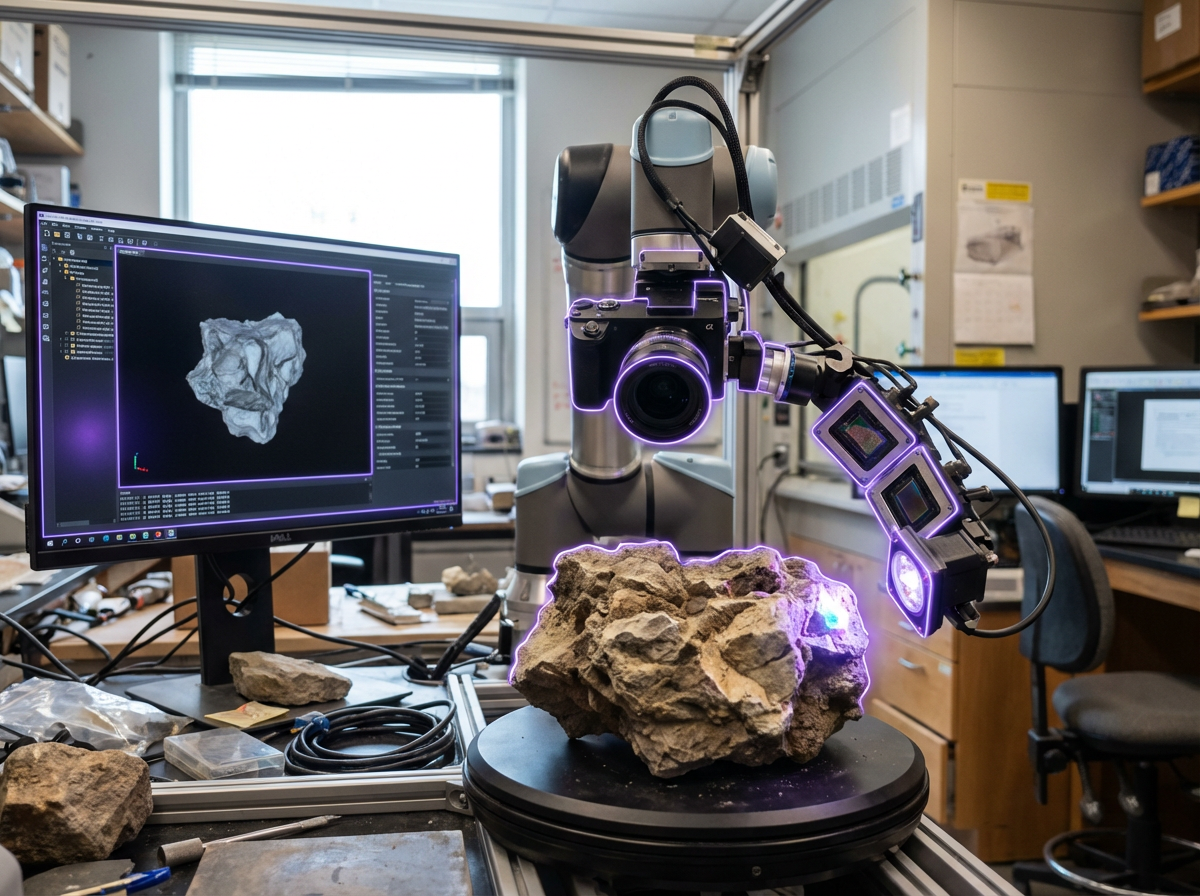

The technology addresses critical limitations in industries that require real-time 3D visualization and telepresence. Traditional photogrammetry produces static models that often lack the visual quality needed for interactive applications. Earlier neural rendering techniques such as NeRF, though capable of striking photorealism, typically took seconds or longer to render a single frame and hours or days to train, which made them impractical for real-time use. Gaussian Splatting bridges this gap by combining comparatively fast scene reconstruction with real-time playback, changing workflows in fields from architectural visualization to virtual production. The method can train on relatively modest input data, sometimes just a few dozen photographs, which reduces the extensive capture requirements that previously made high-quality 3D reconstruction prohibitively expensive or time-consuming. This efficiency opens up applications where traditional 3D modeling would be too labor-intensive, such as preserving cultural heritage sites, creating digital twins of industrial facilities, or enabling remote collaboration in complex spatial environments.

Early implementations have demonstrated the technology's viability across multiple domains, with research groups and commercial developers exploring applications in gaming, film production, and immersive conferencing systems. The technique shows particular promise for mixed reality applications where virtual content must blend with physical spaces, because its photorealistic quality and real-time performance enable convincing spatial overlays. Industry observers note growing interest in combining Gaussian Splatting with volumetric capture systems for telepresence, where participants could be represented as lifelike 3D presences rather than flat video feeds. The technology also fits a broader trend toward more accessible 3D content creation, potentially opening up capabilities that previously required specialized equipment and expertise. As hardware advances and the technique matures, Gaussian Splatting Rendering is positioned to become a foundational technology for spatial computing, enabling more immersive and responsive interactions between digital content and physical reality.

Related Organizations

The French National Institute for Research in Digital Science and Technology, heavily involved in AI research and Scikit-learn.

Creators of Dream Machine, a high-quality video generation model, and of 3D capture technology.

Developers of 'Postshot', a specialized software for training and rendering Radiance Fields and Gaussian Splats.

Pioneers in Neural Radiance Fields (NeRF) and light-field reconstruction algorithms.

AR platform company that develops the Lightship ARDK and owns Scaniverse, a 3D scanning app leveraging LiDAR.

Developers of KIRI Engine, a cloud-based photogrammetry and Neural Surface Reconstruction tool.

A web-based 3D design tool enabling real-time collaboration.

Unreal Engine (Epic Games)

United States · Company

Game engine developer whose engine supports Gaussian Splatting through plugins, with native support emerging.