Emotion-driven conversational AI represents a significant evolution beyond traditional chatbots by combining natural language processing with affective computing, the science of recognizing and responding to human emotions. These systems employ multimodal analysis to interpret emotional states. They examine textual cues such as word choice, sentence structure, and punctuation patterns, along with behavioral signals such as typing speed, and they process vocal characteristics like pitch, tone, and speech rate when voice channels are available. Advanced implementations incorporate computer vision to analyze facial expressions and body language during video interactions. The underlying architecture typically combines sentiment analysis models, emotion classification algorithms, and intent recognition systems that work in concert to build a working estimate of the user's emotional state. Machine learning models are trained on large datasets of human conversations annotated with emotional labels, enabling the system to detect subtle indicators of frustration, confusion, satisfaction, or urgency that rule-based systems tend to miss.
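
To make the text-analysis step concrete, here is a minimal sketch of how word-choice and punctuation cues might be folded into per-emotion scores. It is a toy stand-in, not a description of any production system: the keyword lexicon, the 0.5 cue weights, and the names `EMOTION_LEXICON`, `EmotionEstimate`, and `estimate_emotion` are all assumptions made for illustration, and a real deployment would replace the lexicon lookup with a classifier trained on annotated conversations.

```python
import re
from dataclasses import dataclass

# Hypothetical keyword lexicon (an assumption for illustration only);
# a trained emotion classifier would take its place in practice.
EMOTION_LEXICON = {
    "frustration": {"annoyed", "ridiculous", "useless", "again", "still"},
    "confusion": {"confused", "unclear", "how", "why", "understand"},
    "satisfaction": {"thanks", "great", "perfect", "helpful"},
    "urgency": {"urgent", "asap", "immediately", "now"},
}


@dataclass
class EmotionEstimate:
    label: str    # highest-scoring emotion, or "neutral" if nothing matched
    scores: dict  # raw score per emotion


def estimate_emotion(message: str) -> EmotionEstimate:
    """Score a message from word choice and simple punctuation cues."""
    tokens = re.findall(r"[a-z']+", message.lower())

    # Word-choice cue: count tokens that appear in each emotion's lexicon.
    scores = {
        label: float(sum(tok in words for tok in tokens))
        for label, words in EMOTION_LEXICON.items()
    }

    # Punctuation cue: question marks nudge the confusion score upward.
    scores["confusion"] += 0.5 * message.count("?")

    # Capitalisation cue: fully upper-case words ("STILL") nudge frustration.
    shouted = [w for w in re.findall(r"[A-Za-z]+", message)
               if len(w) > 2 and w.isupper()]
    scores["frustration"] += 0.5 * len(shouted)

    best = max(scores, key=scores.get)
    label = best if scores[best] > 0 else "neutral"
    return EmotionEstimate(label=label, scores=scores)
```

Under these assumptions, `estimate_emotion("This is STILL broken, useless!").label` evaluates to `"frustration"`, while a message with no matching cues falls back to `"neutral"`. Voice and video signals would enter the same way, as additional scores merged before the final label is chosen.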

The primary challenge this technology addresses is the longstanding gap between automated customer service efficiency and human emotional intelligence. Traditional chatbots often frustrate users by providing technically correct but emotionally tone-deaf responses, particularly when customers are already stressed or upset. Research suggests that emotional disconnect is a leading cause of customer service abandonment and brand dissatisfaction. Emotion-driven AI addresses this by dynamically adjusting its communication style: adopting calmer, more reassuring language with anxious customers, showing patience with confused users, or expediting processes for those displaying urgency. The system can also recognize when a situation requires human intervention, automatically escalating high-emotion interactions to live agents before frustration peaks. This capability lets organizations optimize their support operations, routing straightforward queries to automation while ensuring that complex or emotionally charged situations receive appropriate human attention. Early deployments indicate that these systems can reduce average handling time while simultaneously improving customer satisfaction scores, a combination previously difficult to achieve.
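
The style adjustment and escalation logic described above can be sketched as a small routing function. This is an illustrative sketch under stated assumptions rather than a reference design: the emotion labels, the style names, the 0.8 escalation threshold, and the identifiers `STYLE_BY_EMOTION`, `Decision`, and `route` are all hypothetical, and the emotion label and intensity are assumed to come from an upstream detector.

```python
from dataclasses import dataclass

# Assumed cut-off: at or above this intensity (0.0 to 1.0) the conversation
# is handed to a live agent. A real system would tune this on its own data.
ESCALATION_THRESHOLD = 0.8

# Hypothetical mapping from detected emotion to response style.
STYLE_BY_EMOTION = {
    "confusion": "patient",      # slow down, explain step by step
    "urgency": "expedited",      # skip pleasantries, shorten the flow
    "frustration": "empathetic", # acknowledge the problem first
}


@dataclass
class Decision:
    handler: str  # "bot" or "human"
    style: str    # communication style the handler should adopt


def route(emotion: str, intensity: float, is_complex: bool = False) -> Decision:
    """Pick a handler and a communication style for one interaction."""
    if not 0.0 <= intensity <= 1.0:
        raise ValueError("intensity must be between 0.0 and 1.0")

    style = STYLE_BY_EMOTION.get(emotion, "neutral")

    # Escalate complex or emotionally charged interactions to a live agent;
    # everything else stays with automation.
    if is_complex or intensity >= ESCALATION_THRESHOLD:
        return Decision(handler="human", style=style)
    return Decision(handler="bot", style=style)
```

With these values, `route("confusion", 0.3)` keeps the conversation with the bot in a patient style, whereas `route("frustration", 0.9)` hands it to a human agent. Escalating on a threshold below the maximum intensity is what allows the handoff to happen before frustration peaks.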

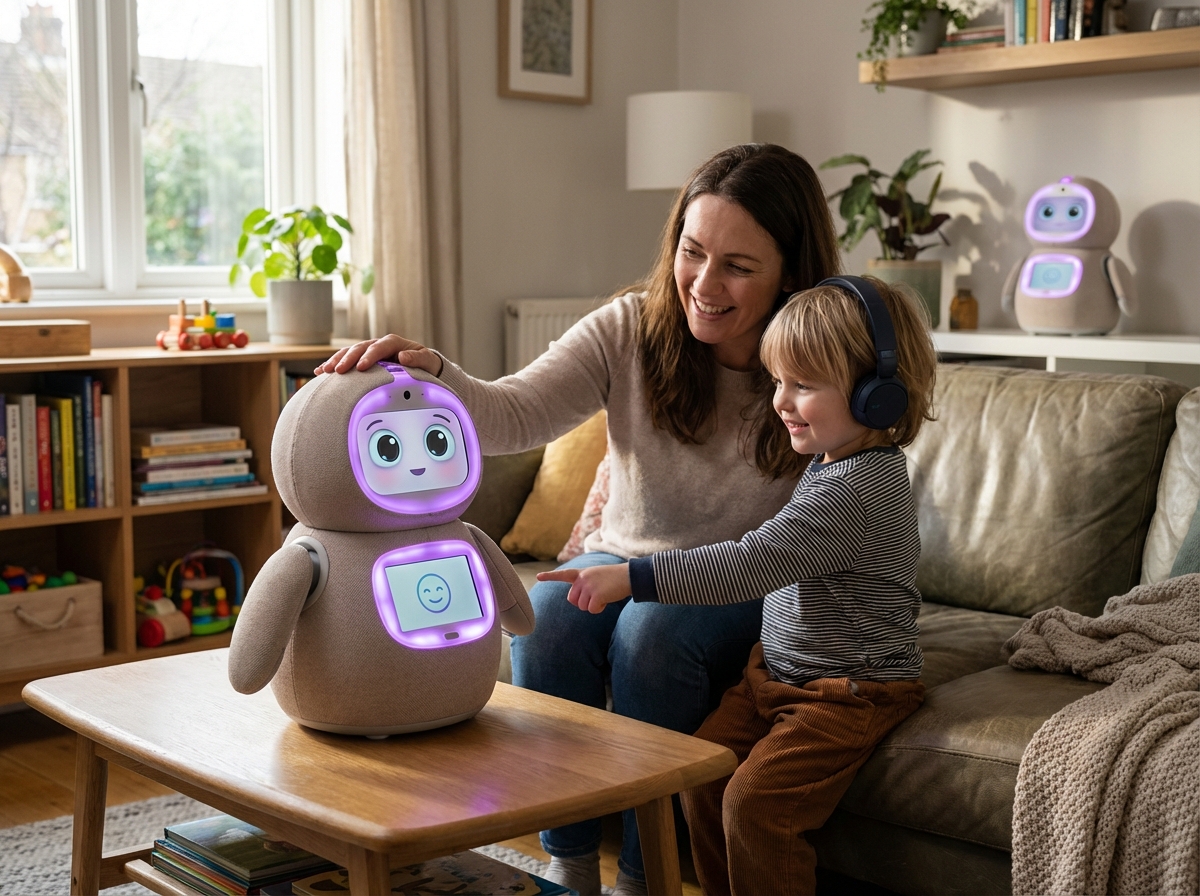

Current applications extend well beyond customer service automation. Mental health platforms are incorporating emotion-aware AI to provide more nuanced support, detecting signs of distress that might warrant professional intervention. Educational technology companies are developing systems that recognize student frustration or disengagement, adapting lesson pacing and difficulty accordingly. In healthcare settings, emotion-driven interfaces help elderly patients interact more comfortably with telemedicine platforms, while companion robots use emotional awareness to provide more meaningful social interaction for isolated individuals. The technology is also finding applications in human resources, where AI-powered interview systems can provide feedback on candidate communication patterns, and in accessibility tools that help individuals with social communication challenges practice emotional recognition. As voice assistants and smart home devices become more prevalent, industry analysts note a growing expectation that these interfaces will demonstrate emotional intelligence rather than mechanical responsiveness. The trajectory points toward ambient computing environments where emotional awareness is a standard feature rather than a specialized capability. That evolution raises important questions about consent, data privacy, and the ethical boundaries of machine empathy, which the industry continues to navigate.