Edge AI

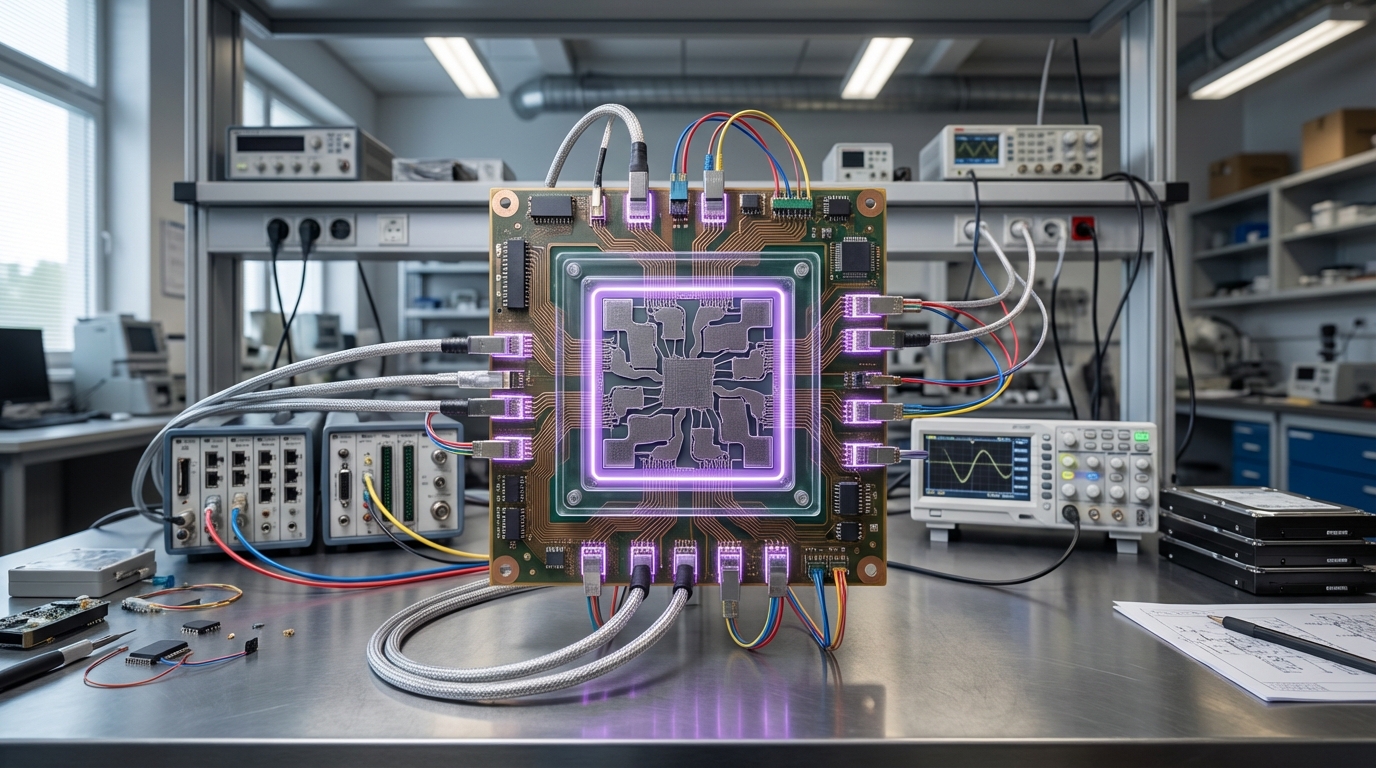

Edge AI runs artificial intelligence algorithms directly on local devices (such as smartphones, IoT sensors, embedded systems, or edge servers) rather than sending data to cloud servers for processing. This approach brings computation closer to where data is generated and where decisions need to be made, which enables real-time responses, reduces bandwidth requirements, and keeps sensitive data local. Edge AI systems combine optimized models (for example, models compressed through quantization or pruning), specialized hardware such as neural processing units (NPUs), and efficient algorithms to run AI workloads on resource-constrained devices.
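One common way to produce an "optimized model" is post-training quantization, which stores weights as 8-bit integers instead of 32-bit floats. The sketch below is illustrative only: it assumes symmetric per-tensor quantization, and the function names and weight values are hypothetical rather than taken from any particular framework.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One scale factor for the whole tensor; fall back to 1.0 for all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the int8 representation."""
    return [v * scale for v in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.82, -1.27, 0.05, 0.0, 0.64]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding error per weight is bounded by half the scale factor.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight now needs one byte instead of four, at the cost of a rounding error of at most half the scale factor per weight. Production toolchains usually add per-channel scales and calibration data, which this sketch omits.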

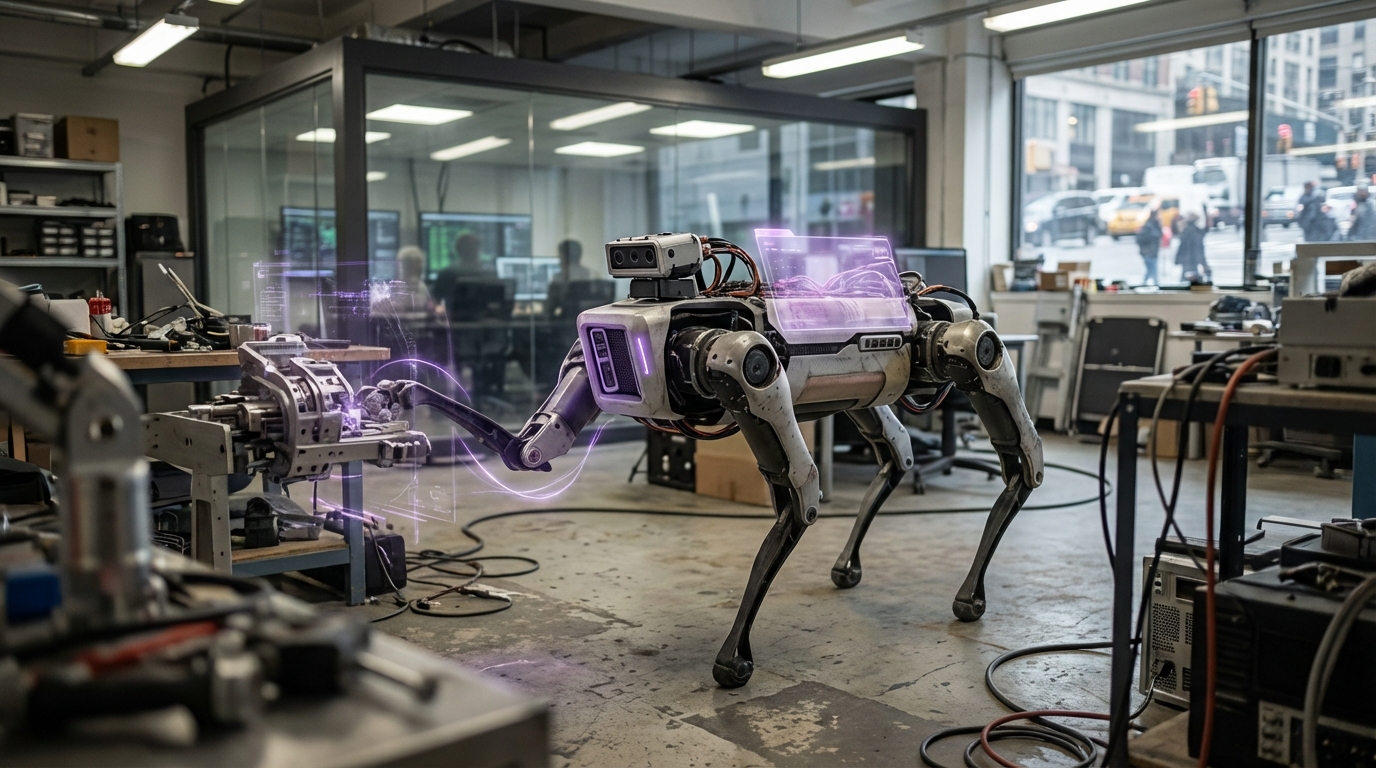

The technology addresses four limitations of cloud-based AI: latency in time-sensitive applications, bandwidth costs and limits, the privacy and security risks of sending data to the cloud, and dependence on network connectivity. Processing data locally removes the network round trip, which matters for applications such as autonomous vehicles, real-time image recognition, voice assistants, and industrial control systems. Current deployments include smartphones with on-device AI features, autonomous vehicles that process sensor data locally, industrial IoT systems that make decisions at the edge, and privacy-sensitive applications where data cannot leave the device. Companies such as Apple and Qualcomm, along with other chip manufacturers, are developing edge AI hardware and software.

At Technology Readiness Level (TRL) 6, edge AI is commercially deployed in a range of devices and applications, while model optimization and hardware efficiency continue to improve. Open challenges include running complex models on resource-constrained devices, balancing model accuracy against computational requirements, managing model updates across distributed devices, and ensuring consistent performance across different hardware. As edge hardware becomes more powerful and optimization techniques mature, edge AI is becoming increasingly capable. The technology could enable new classes of applications that require real-time AI, improve privacy by keeping data local, reduce cloud computing costs, and bring AI to environments with limited connectivity. Together, these effects could make AI more responsive, private, and accessible while reducing dependence on cloud infrastructure.
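The trade-off between accuracy and computational requirements can be framed as a constrained choice: among the model variants that fit a device's memory and latency budget, pick the most accurate one. The following is a minimal sketch of that idea. The `ModelVariant` type, the `pick_variant` helper, and all figures are hypothetical placeholders, not measurements.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class ModelVariant:
    name: str
    accuracy: float    # fraction correct on a validation set
    size_mb: float     # memory footprint on the device
    latency_ms: float  # inference time measured on the target hardware


def pick_variant(
    variants: Sequence[ModelVariant],
    max_size_mb: float,
    max_latency_ms: float,
) -> Optional[ModelVariant]:
    """Return the most accurate variant that fits both budgets, or None."""
    feasible = [
        v for v in variants
        if v.size_mb <= max_size_mb and v.latency_ms <= max_latency_ms
    ]
    return max(feasible, key=lambda v: v.accuracy) if feasible else None


# Hypothetical variants of one model; every number here is made up.
variants = [
    ModelVariant("large-fp32", accuracy=0.95, size_mb=400.0, latency_ms=900.0),
    ModelVariant("medium-fp16", accuracy=0.93, size_mb=120.0, latency_ms=180.0),
    ModelVariant("small-int8", accuracy=0.90, size_mb=25.0, latency_ms=40.0),
]

choice = pick_variant(variants, max_size_mb=150.0, max_latency_ms=200.0)
print(choice.name if choice else "no variant fits this device")
```

Because latency depends on the hardware, the same selection would need to be repeated with measurements from each target device, which is one reason consistent performance across a mixed fleet is difficult.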

Related Organizations

The leading development platform for machine learning on edge devices, enabling developers to deploy models to microcontrollers.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

United States · Nonprofit

A non-profit organization dedicated to growing the community and ecosystem for ultra-low power machine learning.

Develops Neural Decision Processors with near-memory compute architectures for ultra-low power edge AI.

Developer of the Akida neuromorphic processor IP and chips.

Machine learning system-on-chip company for the embedded edge.

GreenWaves Technologies

France · Startup

A fabless semiconductor company developing GAP application processors for IoT and hearables using RISC-V.

Provides a Graph Streaming Processor (GSP) architecture designed for low-latency AI processing at the edge.

Creator of FlightSense time-of-flight (ToF) sensors widely used in Android smartphones for depth sensing.