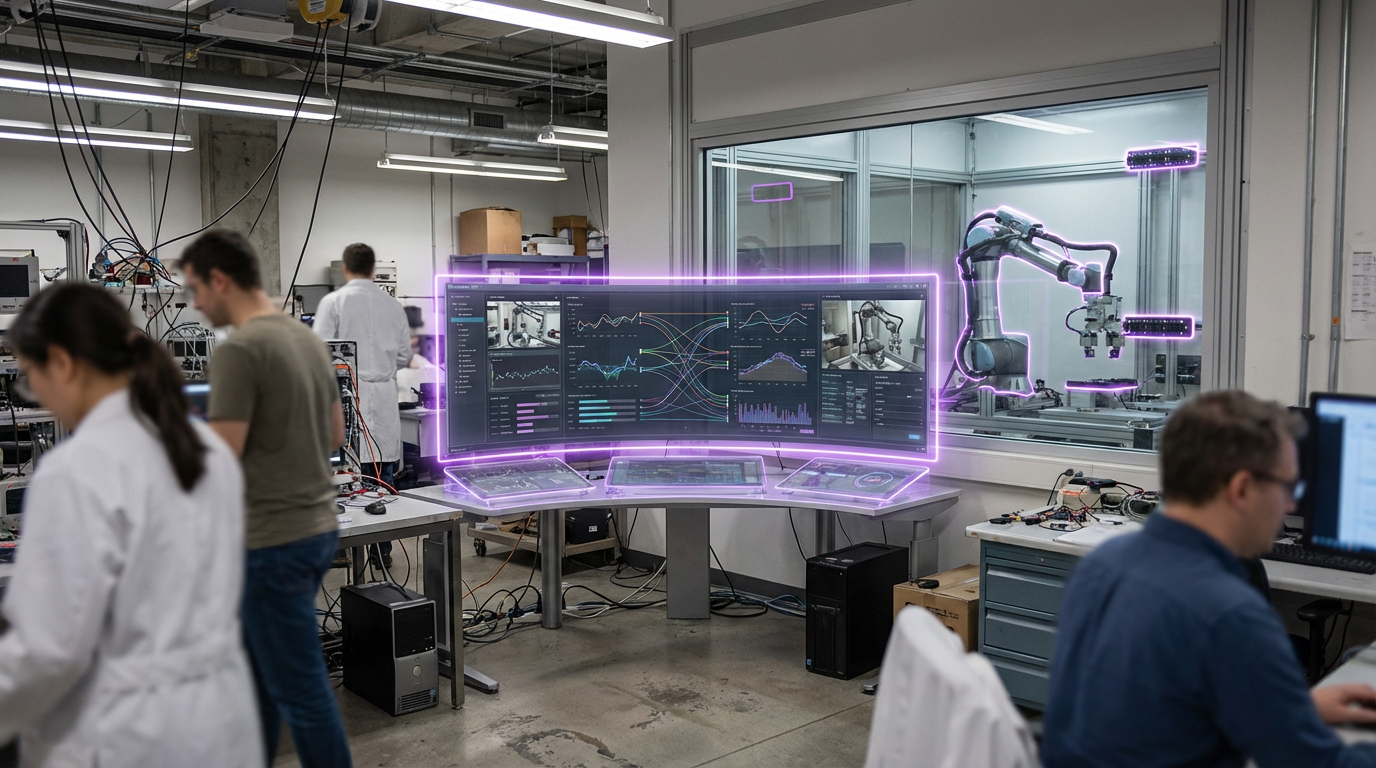

Physical AI

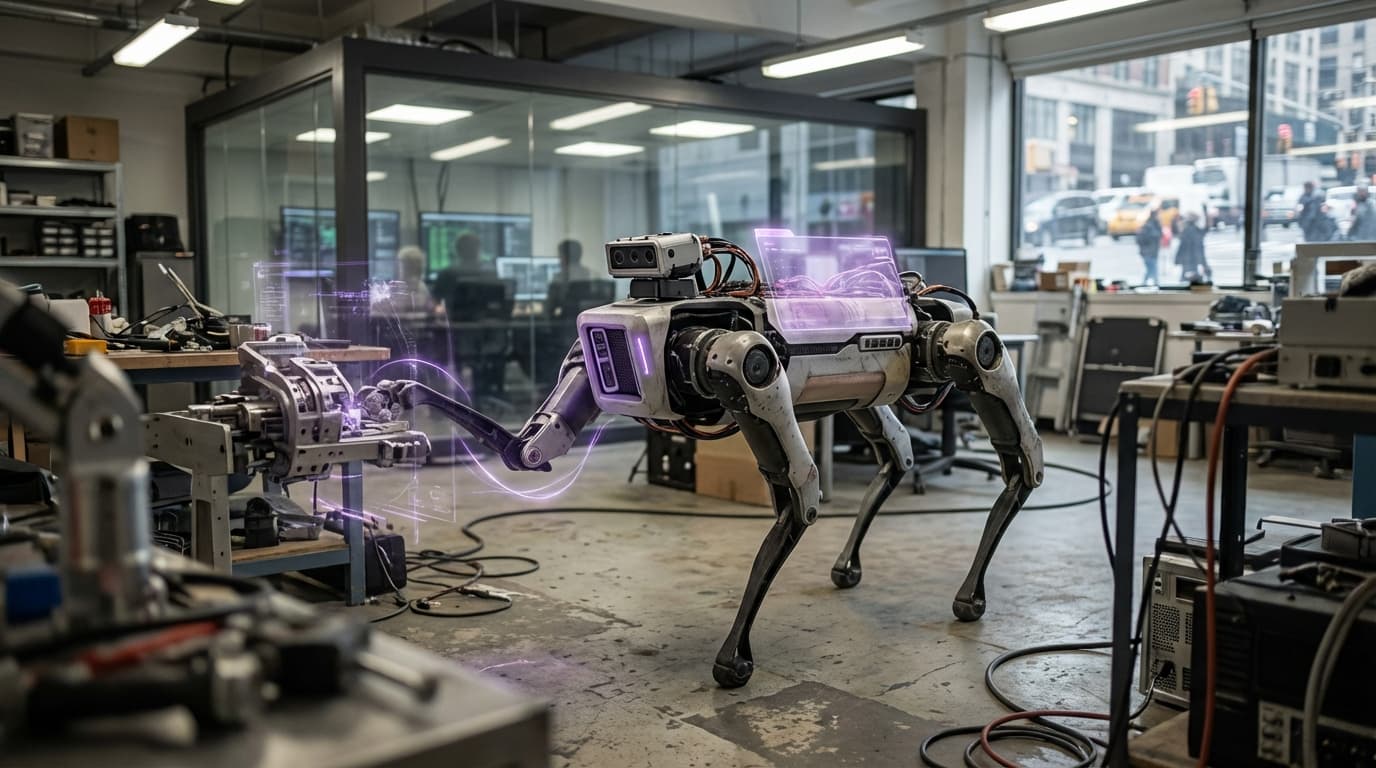

Physical AI integrates artificial intelligence with robotics and materials science to create intelligent systems that can perceive, reason about, and interact with the physical world. Unlike AI systems that operate purely in digital domains, physical AI must account for real-world constraints, including physics, uncertainty, wear, and the complexities of physical manipulation. This requires AI systems that can learn from physical interactions, adapt to changing conditions, and operate safely and reliably in unstructured environments.

The technology enables robots and autonomous systems to operate effectively in the real world, in settings ranging from manufacturing and logistics to healthcare and service applications. Physical AI combines advances in machine learning, computer vision, sensor fusion, and control systems with an understanding of materials, mechanics, and physical processes. Applications include autonomous vehicles that navigate real roads, robots that manipulate objects with human-like dexterity, and systems that adapt their behavior based on physical feedback. Companies such as Boston Dynamics and OpenAI (through its robotics research), along with various other robotics firms, are advancing physical AI capabilities.

At Technology Readiness Level (TRL) 6, physical AI systems are being deployed in various applications, though their capabilities remain limited compared to human performance in many tasks. The technology faces several challenges: the complexity of real-world environments, the need for extensive training data from physical interactions, safety and reliability requirements, and the difficulty of transferring learned behaviors to new situations. However, as AI techniques improve and robots become more capable, physical AI systems are becoming increasingly practical. The technology could enable a new generation of robots and autonomous systems that operate effectively in human environments, potentially transforming industries from manufacturing to healthcare by working alongside or replacing humans in physical tasks.

Related Organizations

A startup building a general-purpose brain for robots, backed by OpenAI and Thrive Capital.

Famous for Spot and Atlas, now integrating reinforcement learning for dynamic movement.

AI robotics company building a universal AI brain for robots.

Developing general-purpose humanoid robots designed for commercial workforce deployment.

Developers of the Gemini family of models, which are trained from the start to be multimodal across text, images, video, and audio.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

A Norwegian robotics company (backed by OpenAI) developing androids like EVE and NEO.

Creators of Digit, a bipedal robot designed for logistics work.

Developing general-purpose humanoid robots (Phoenix) powered by Carbon, their AI control system.

Automotive and energy company developing custom AI silicon for autonomous driving.

A spin-out from the Human Centered Robotics Lab at UT Austin, developing Apollo, a general-purpose humanoid.

The global hub for open-source AI models and datasets. Founded by French entrepreneurs with a major office in Paris.

A robotics company known for quadrupeds that recently launched the H1 general-purpose humanoid robot.