Digital-Biological Convergence Risks

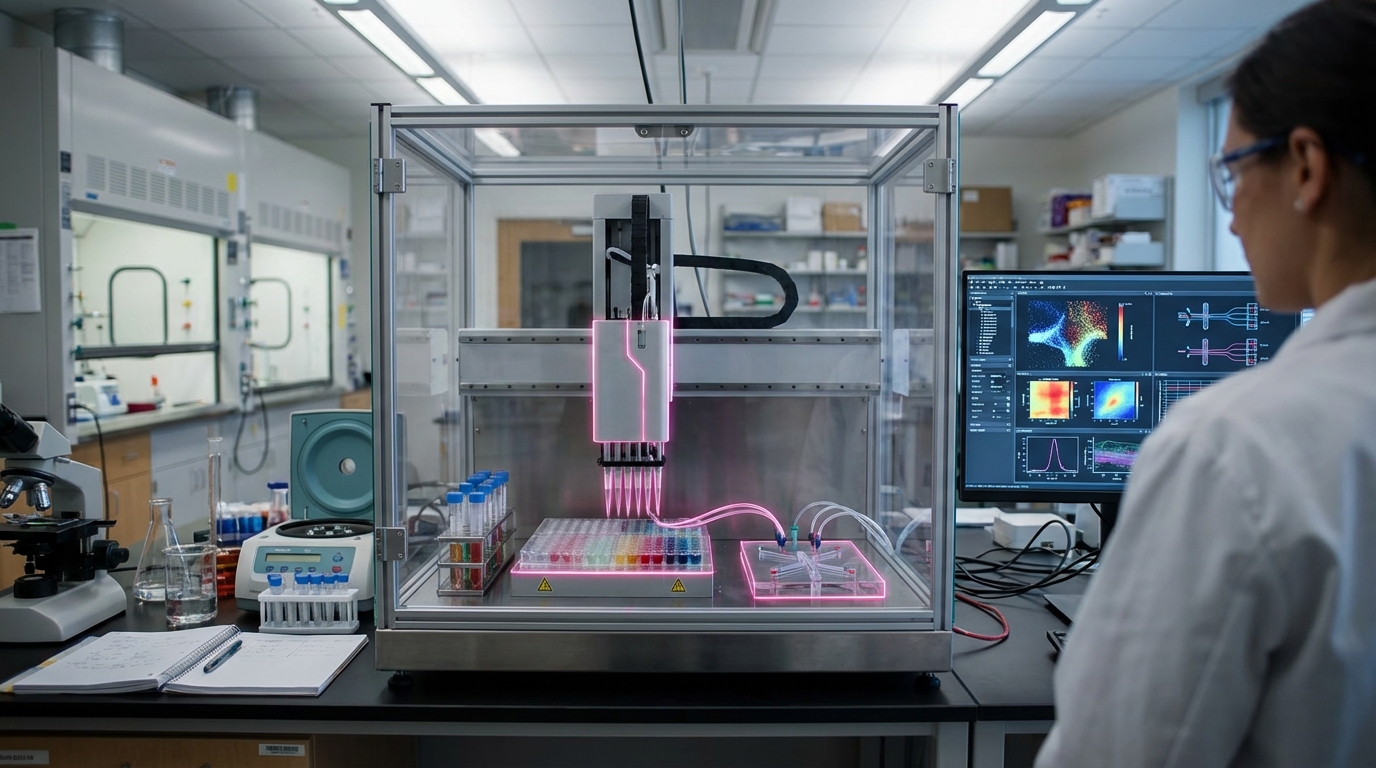

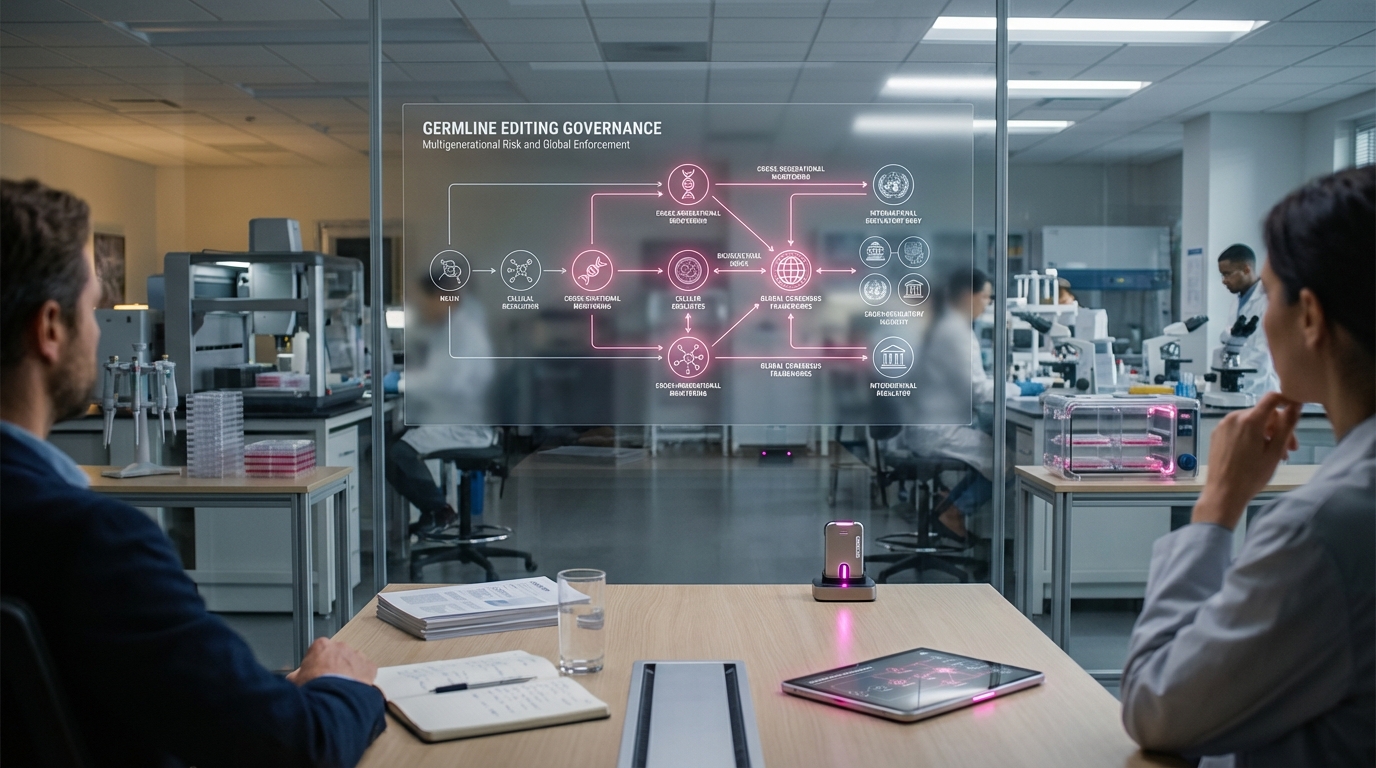

Digital-biological convergence risks are the safety and security challenges that arise at the intersection of artificial intelligence and biotechnology: AI systems can design biological agents, automated laboratories can synthesize and test biological constructs, and the boundary between computer simulation and deployable biological systems is increasingly blurred. Managing these risks requires oversight frameworks for AI-designed biological agents, automated laboratory systems, and the processes that translate digital designs into biological reality. Such frameworks must address both the accidental creation of dangerous organisms and the malicious misuse of increasingly accessible biotechnology tools. Government agencies, research institutions, and companies are developing oversight mechanisms.

The underlying concern is the dual-use nature of biotechnology combined with AI: the same tools that enable beneficial research and therapeutics could also be misused to create biological weapons or dangerous pathogens. As AI makes biological design easier and automated labs make synthesis more accessible, the risk of misuse increases and oversight becomes essential. The field must balance enabling beneficial innovation against preventing misuse.

Oversight is essential for maintaining biosecurity as AI and biotechnology capabilities expand, because gaps could lead to dangerous outcomes. Three challenges remain: developing comprehensive oversight, staying ahead of rapidly advancing capabilities, and balancing security with innovation. Success could enable the safe advancement of AI and biotechnology while preventing misuse, but the field must continuously adapt to new capabilities and threats.

Related Organizations

A free, privacy-preserving, open-source screening platform for DNA synthesis providers to detect hazards.

A horizontal platform for cell programming that enables other companies to develop precision fermentation strains.

United States · Consortium

An industry-led organization of gene synthesis companies that promotes biosecurity by screening customers and sequences.

An AI safety and research company developing Constitutional AI to align models with human values.

A nonprofit analyzing systemic risks to security, with a strong focus on biological threats.

United States · Consortium

A public-private partnership dedicated to the safe and secure advancement of engineering biology.

Home of the Affective Computing research group led by Rosalind Picard.

OpenAI

United States · Company

Creator of GPT-4o, a natively multimodal model capable of reasoning across audio, vision, and text in real time.

A synthetic biology company that manufactures synthetic DNA on a silicon-based platform.

Research center at the University of Cambridge studying global catastrophic risks, including bio-risk.

United States · Company

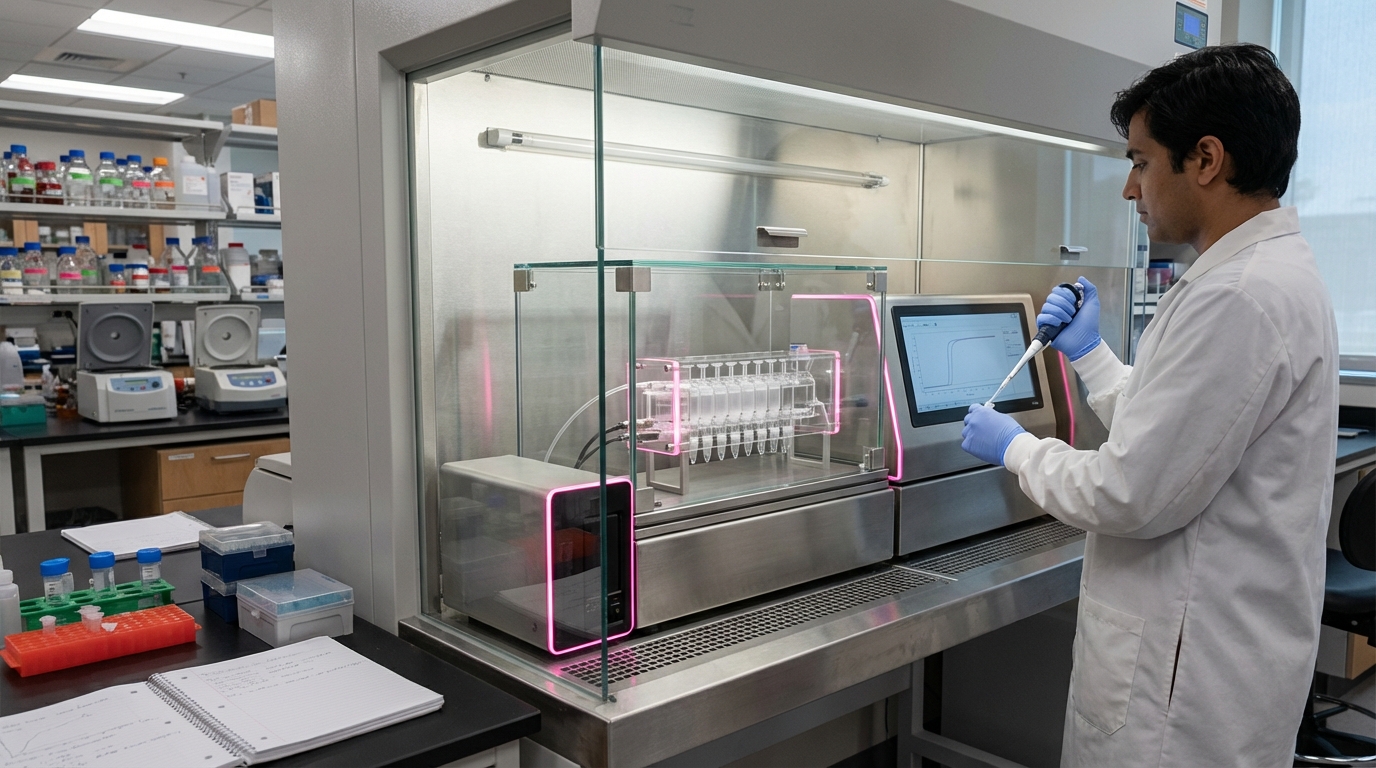

Provides a highly automated cloud laboratory where scientists can design and run experiments remotely via code.

United States · Company

Strategic investor for the US intelligence community with a dedicated biology practice (B.Next).