Explainable AI (XAI) in Logistics

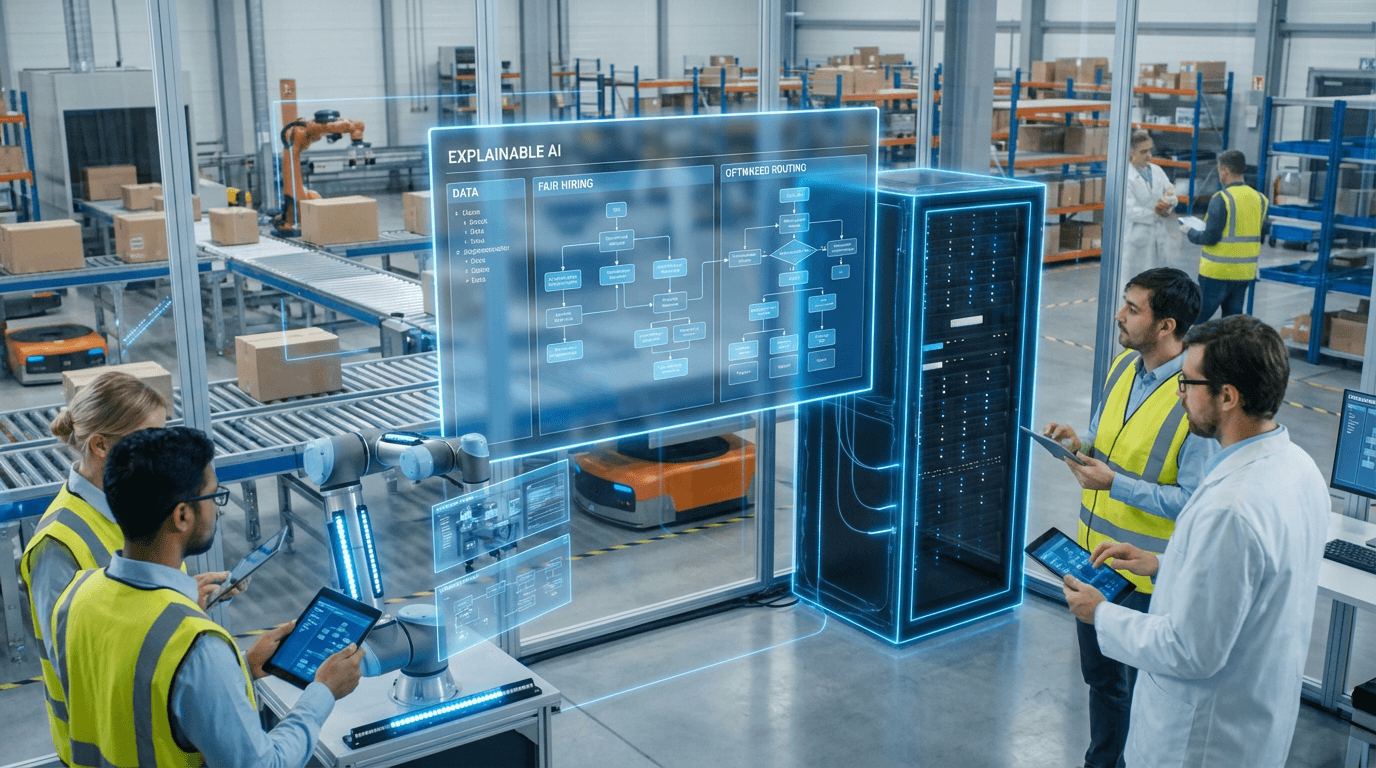

The rapid adoption of artificial intelligence in supply chain and logistics operations has introduced powerful optimization capabilities, but it has also raised critical concerns about accountability and fairness. Explainable AI (XAI) in logistics is a set of methodologies and frameworks designed to make the decision-making of AI systems transparent and interpretable to human stakeholders. Unlike "black box" machine learning models, which produce outputs without revealing their reasoning, XAI techniques use approaches such as feature importance analysis, decision trees, rule extraction, and visualization to show how an algorithm arrives at a specific conclusion. In logistics, this might mean revealing which factors (delivery time windows, traffic patterns, driver availability, fuel costs, or customer priority levels) most heavily influence route assignments or scheduling decisions. These frameworks often combine model-agnostic interpretation tools, which work across different AI architectures, with inherently interpretable models designed for transparency from the ground up.
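As a rough illustration of model-agnostic feature importance, the sketch below applies permutation importance to a stand-in route-scoring function. Everything in it is an assumption made for the example: the feature names, the weights inside `black_box_score`, and the synthetic data are hypothetical and do not describe any real logistics system. The idea is to shuffle one feature at a time and measure how much the model's error grows; features whose shuffling hurts most are the ones the model relies on most.

```python
import random

# Hypothetical feature names, chosen to mirror the factors discussed above.
FEATURES = ["time_window_slack", "traffic_delay", "driver_availability", "fuel_cost"]


def black_box_score(row):
    """Stand-in for an opaque route-scoring model (weights are invented)."""
    return (3.0 * row["traffic_delay"]
            + 1.0 * row["fuel_cost"]
            - 0.5 * row["time_window_slack"])  # driver_availability is ignored


def mean_abs_error(model, rows, targets):
    return sum(abs(model(r) - t) for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(model, rows, targets, features, n_repeats=20, seed=0):
    """Average increase in error when one feature's column is shuffled."""
    rng = random.Random(seed)
    baseline = mean_abs_error(model, rows, targets)
    importances = {}
    for name in features:
        increases = []
        for _ in range(n_repeats):
            shuffled = [r[name] for r in rows]
            rng.shuffle(shuffled)
            # Copy each row, replacing only the feature under test.
            permuted = [dict(r, **{name: v}) for r, v in zip(rows, shuffled)]
            increases.append(mean_abs_error(model, permuted, targets) - baseline)
        importances[name] = sum(increases) / n_repeats
    return importances


def make_synthetic_rows(n=200, seed=42):
    rng = random.Random(seed)
    return [{name: rng.random() for name in FEATURES} for _ in range(n)]


rows = make_synthetic_rows()
# Targets come from the model itself, so the baseline error is zero.
targets = [black_box_score(r) for r in rows]
importances = permutation_importance(black_box_score, rows, targets, FEATURES)
ranking = sorted(importances, key=importances.get, reverse=True)

for name in ranking:
    print(f"{name:22s} {importances[name]:.3f}")
```

Because the procedure only calls the model and never inspects its internals, the same function could in principle be pointed at any scoring model that accepts a row of features. Permutation importance can mislead when features are strongly correlated, so a ranking like this is a starting point for review rather than a full explanation.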

The logistics industry faces mounting pressure to ensure that algorithmic decision-making does not perpetuate or amplify existing biases, particularly as AI systems increasingly control critical functions like driver dispatch, route optimization, warehouse task allocation, and even hiring decisions. Without transparency, these systems risk creating unfair outcomes such as systematically avoiding deliveries to certain neighborhoods based on historical data patterns, disproportionately assigning less profitable routes to specific driver demographics, or making opaque performance evaluations that affect worker compensation and job security. XAI addresses these challenges by enabling logistics managers and compliance teams to audit AI decisions, identify potential sources of bias, and verify that optimization algorithms balance efficiency with equity. This transparency is becoming essential not only for ethical operations but also for regulatory compliance, as governments worldwide are introducing requirements for algorithmic accountability in employment and service delivery. Furthermore, XAI helps build trust among drivers, warehouse workers, and customers who are directly affected by AI-driven decisions, fostering acceptance of automation while maintaining human oversight.
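One simple form such an audit could take is a comparison of average route value across driver groups. The sketch below is a minimal example under stated assumptions: the field names `driver_group` and `route_profit`, the sample records, and the 0.8 review threshold are all hypothetical, and a low ratio only flags a pattern for human review; it does not by itself establish that the algorithm is biased.

```python
from collections import defaultdict


def mean_by_group(assignments, group_key, value_key):
    """Average of value_key for each distinct value of group_key."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for record in assignments:
        totals[record[group_key]] += record[value_key]
        counts[record[group_key]] += 1
    return {group: totals[group] / counts[group] for group in totals}


def disparity_ratio(group_means):
    """Lowest group mean divided by the highest; 1.0 means perfectly even."""
    return min(group_means.values()) / max(group_means.values())


# Hypothetical assignment log; a real audit would read this from dispatch records.
assignments = [
    {"driver_group": "A", "route_profit": 120.0},
    {"driver_group": "A", "route_profit": 100.0},
    {"driver_group": "B", "route_profit": 80.0},
    {"driver_group": "B", "route_profit": 60.0},
]

REVIEW_THRESHOLD = 0.8  # assumed cut-off for illustration, not a legal standard

group_means = mean_by_group(assignments, "driver_group", "route_profit")
ratio = disparity_ratio(group_means)
needs_review = ratio < REVIEW_THRESHOLD

print(group_means, round(ratio, 3), needs_review)
```

A flagged disparity would normally be followed by a closer look at legitimate explanatory factors, such as shift times or vehicle type, before drawing conclusions about fairness.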

Early implementations of XAI in logistics are already demonstrating practical value. Major logistics providers are deploying interpretable AI systems that show dispatchers why a specific route was assigned, so they can override the decision when local knowledge or exceptional circumstances call for human judgment. In warehouse operations, XAI tools help managers understand task allocation patterns and check that workload distribution remains fair across shifts and worker groups. Research in this domain suggests that transparent AI systems can improve overall performance by enabling human operators to identify and correct algorithmic errors or outdated assumptions embedded in training data. As regulatory frameworks for AI governance evolve and stakeholders demand greater accountability, XAI is positioned to become a standard requirement rather than an optional feature of logistics technology. This trajectory aligns with broader industry movements toward ethical AI and responsible automation, which aim to ensure that the efficiency gains from artificial intelligence do not come at the cost of fairness, transparency, or human dignity in the supply chain workforce.
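The dispatcher workflow described above can be sketched with an inherently interpretable model: a linear score whose per-factor contributions are shown alongside the recommendation, plus a record of any human override. This is only an illustrative sketch. The weights, factor names, route IDs, and the `Decision` structure are hypothetical and are not drawn from any deployed system.

```python
from dataclasses import dataclass

# Hypothetical weights for a transparent linear route score.
WEIGHTS = {
    "distance_km": -1.0,
    "on_time_probability": 50.0,
    "driver_hours_remaining": 2.0,
}


def explain_score(route):
    """Return the total score and each factor's additive contribution."""
    contributions = {name: WEIGHTS[name] * route[name] for name in WEIGHTS}
    return sum(contributions.values()), contributions


@dataclass
class Decision:
    recommended: str       # route chosen by the model
    final: str             # route actually used after human review
    contributions: dict    # why the model preferred its recommendation
    override_reason: str = ""


def recommend(routes):
    scored = {route_id: explain_score(r) for route_id, r in routes.items()}
    best = max(scored, key=lambda route_id: scored[route_id][0])
    return Decision(recommended=best, final=best, contributions=scored[best][1])


def override(decision, new_route, reason):
    """Keep the model's recommendation on record, but change the final route."""
    return Decision(decision.recommended, new_route, decision.contributions, reason)


routes = {
    "R1": {"distance_km": 30.0, "on_time_probability": 0.75, "driver_hours_remaining": 4.0},
    "R2": {"distance_km": 20.0, "on_time_probability": 0.5, "driver_hours_remaining": 5.0},
}

decision = recommend(routes)
print(decision.recommended, decision.contributions)

# A dispatcher with local knowledge overrides the recommendation.
final_decision = override(decision, "R2", "Road closure on R1 not yet in traffic feed")
print(final_decision.final, "-", final_decision.override_reason)
```

Keeping both the recommendation and the override reason in the same record is a design choice made here so that later reviews can see where human judgment and the model diverged.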

Related Organizations

German research institute working on the 'Silicon Economy' and open-source hardware and software for the Physical Internet.

Provides watsonx.governance for managing AI risk and compliance.

A world leader in supply chain management education and research.

A data science solutions provider with a strong focus on retail and CPG supply chain analytics and inventory management.

A model monitoring and observability platform that includes specific tools for evaluating LLM accuracy and hallucination.

Owned by Panasonic, their Luminate platform offers a digital twin of the supply chain for real-time visibility and prediction.

Data analytics company known for credit scoring, now developing Explainable AI (XAI) tools to ensure score fairness.

Enterprise AI software provider with a dedicated suite for predictive maintenance across energy, defense, and manufacturing.

Provides Driverless AI, an AutoML platform that includes architecture search and hyperparameter tuning.