Autonomy & Lethal Decision Boundaries

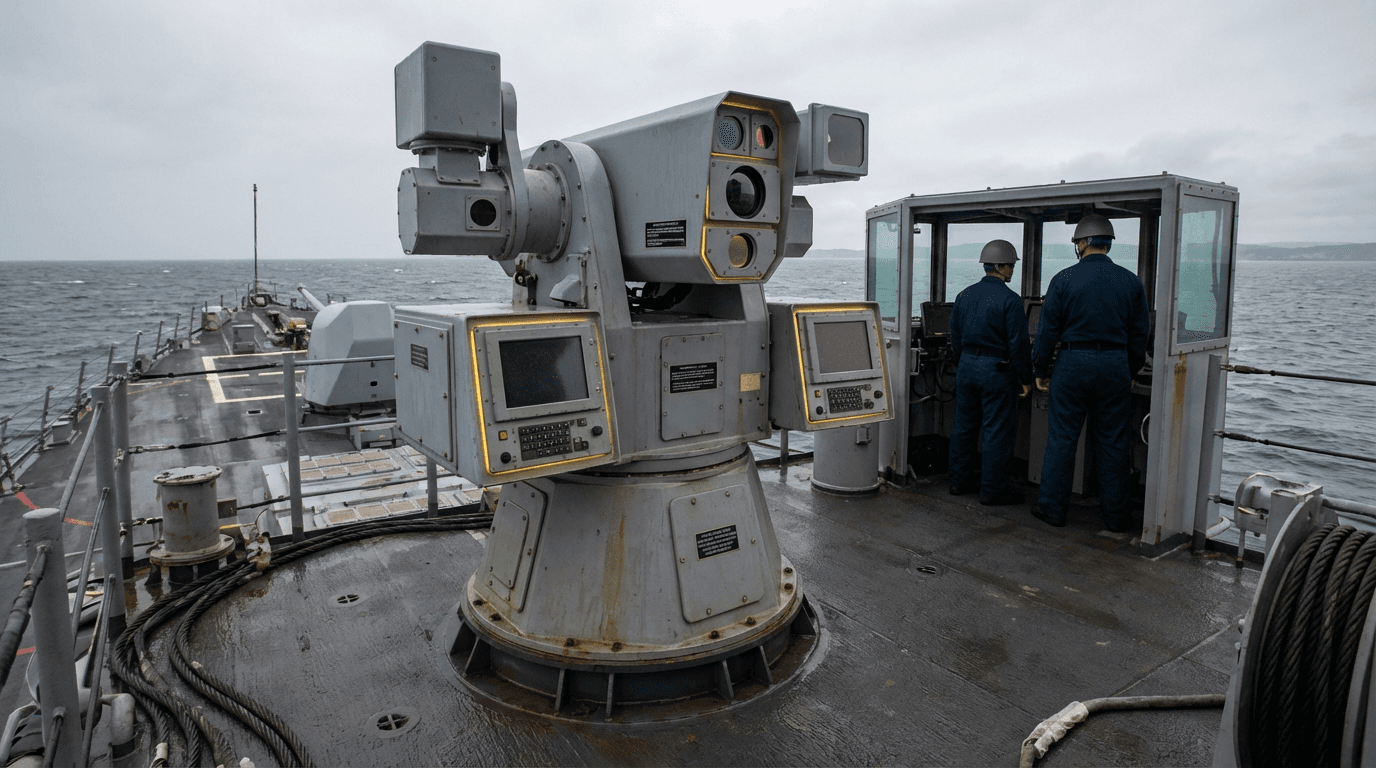

The deployment of autonomous weapon systems presents one of the most pressing ethical and operational challenges in modern defense: determining the appropriate level of human involvement in lethal decision-making. Military doctrine has long placed human judgment at the center of decisions to use deadly force, but advances in artificial intelligence and autonomy now enable machines to identify, track, and potentially engage targets with minimal or no human intervention. This creates a fundamental tension between the speed and precision that autonomous systems offer and the moral imperative to maintain meaningful human control over life-and-death decisions. Frameworks for autonomy and lethal decision boundaries address this tension by defining when and how humans must remain involved in the targeting cycle. They typically distinguish three levels of control: human-in-the-loop, where an operator must approve each engagement; human-on-the-loop, where humans monitor the system and can override its decisions; and human-out-of-the-loop, where the system operates fully autonomously within predefined parameters.
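The practical difference between the three levels comes down to what the system does when it hears nothing from a human. The Python sketch below encodes that difference as a single decision function. `ControlMode`, `engagement_authorized`, and its boolean inputs are hypothetical names chosen for illustration; they are not drawn from any doctrine, standard, or fielded system.

```python
from enum import Enum


class ControlMode(Enum):
    """Levels of human control described in the text (names are illustrative)."""
    HUMAN_IN_THE_LOOP = "in"       # operator must approve each engagement
    HUMAN_ON_THE_LOOP = "on"       # system proceeds unless a human overrides
    HUMAN_OUT_OF_THE_LOOP = "out"  # system acts autonomously within parameters


def engagement_authorized(
    mode: ControlMode,
    human_approved: bool,
    human_vetoed: bool,
    within_parameters: bool,
) -> bool:
    """Return True if a proposed engagement may proceed under the given mode.

    Hypothetical decision rule for illustration only; real rules of
    engagement are far richer and are set by policy, not by code like this.
    """
    if not within_parameters:
        # No mode permits action outside the predefined parameters.
        return False
    if mode is ControlMode.HUMAN_IN_THE_LOOP:
        # Silence is not consent: an explicit approval is required.
        return human_approved and not human_vetoed
    if mode is ControlMode.HUMAN_ON_THE_LOOP:
        # The system may proceed by default, but a human override wins.
        return not human_vetoed
    # Out of the loop: no human input is consulted once deployed.
    return True
```

The design point worth noticing is the default. With no human input at all, the in-the-loop mode refuses, while the on-the-loop and out-of-the-loop modes proceed; the on-the-loop mode differs from full autonomy only in that a human veto is honored.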

These frameworks serve several critical functions within defense organizations and the wider international security architecture. They give operational commanders clear rules of engagement that specify when autonomous systems may be deployed and the constraints under which they must operate. By establishing accountability chains, they also help address legal questions about who is responsible when an autonomous system causes unintended casualties or engages the wrong target. The frameworks also respond to growing international concern about the proliferation of lethal autonomous weapon systems, offering a structured way to uphold ethical and legal standards while still exploiting new technological capabilities. Defense establishments implementing these boundaries must grapple with hard questions: how reliable target recognition algorithms are, how predictable autonomous behavior is in contested environments, and whether effective human oversight is technically feasible when systems operate at machine speed.
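An accountability chain is only useful if the record cannot be quietly altered after an incident. One common way to make a log tamper-evident is hash chaining, in which each entry stores the hash of the entry before it, so editing any past record breaks every later link. The sketch below assumes that approach; the `AccountabilityLog` class and its field names are hypothetical, and a real record would also need trusted timestamps, digital signatures, and protected storage.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys) of an entry."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AccountabilityLog:
    """Append-only, hash-chained record of engagement decisions (illustrative)."""
    entries: list = field(default_factory=list)

    def record(self, system_id: str, decision: str, authorized_by: str, mode: str) -> str:
        """Append a decision linked to the accountable human; return its hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "system_id": system_id,
            "decision": decision,
            "authorized_by": authorized_by,  # the accountable person or authority
            "mode": mode,
            "prev_hash": prev_hash,
        }
        digest = _digest(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every link; any edited or reordered entry returns False."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

Hash chaining makes tampering detectable rather than impossible: anyone holding the log can rerun `verify()`, but preventing an insider from rewriting the whole chain still depends on signatures or independent copies.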

Several nations and international bodies have begun developing policies and testing protocols around these frameworks; prominent examples include the U.S. Department of Defense's Directive 3000.09 on autonomy in weapon systems and the discussions of the Group of Governmental Experts on lethal autonomous weapons convened under the UN Convention on Certain Conventional Weapons. Consensus nonetheless remains elusive on exactly where the boundaries should be drawn. Current military applications tend to favor human-on-the-loop configurations for defensive systems such as counter-drone platforms, where reaction time is critical but human oversight remains technically feasible. Research programs are exploring technical mechanisms such as "ethical governors" that constrain autonomous behavior, time-delay requirements that guarantee an opportunity for human review, and transparency measures that make autonomous decisions auditable. As autonomous capabilities continue to advance and proliferate, these frameworks may evolve from voluntary guidelines toward more formal international agreements, similar to existing treaties that ban chemical weapons and anti-personnel landmines. This trajectory points to a future in which the boundary between human and machine decision-making in warfare is set not only by technical capability but by deliberate policy choices that balance military effectiveness with fundamental principles of human dignity and accountability.
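An ethical governor and a time-delay requirement can be combined in a single gate. The sketch below is loosely modeled on the governor idea: a set of constraint checks that can only suppress a proposed action, never authorize one, followed by a mandatory review window during which a human may veto. `ProposedAction`, `governor_decision`, both example constraints, and their attribute names are hypothetical; real constraints would be derived from the law of armed conflict and the applicable rules of engagement.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ProposedAction:
    """A hypothetical engagement proposal produced by an autonomous system."""
    action_id: str
    proposed_at: float  # seconds on a shared mission clock
    attributes: dict


# A constraint returns None if satisfied, or a reason string if violated.
Constraint = Callable[[ProposedAction], Optional[str]]


def in_authorized_area(action: ProposedAction) -> Optional[str]:
    # Fails closed: a missing attribute counts as outside the area.
    return None if action.attributes.get("in_area", False) else "outside authorized area"


def clear_of_protected_sites(action: ProposedAction) -> Optional[str]:
    # Fails closed: a missing attribute counts as a protected site nearby.
    if action.attributes.get("protected_site_nearby", True):
        return "protected site nearby"
    return None


EXAMPLE_CONSTRAINTS: List[Constraint] = [in_authorized_area, clear_of_protected_sites]


def governor_decision(
    action: ProposedAction,
    constraints: List[Constraint],
    review_window_s: float,
    now: float,
    vetoed: bool,
) -> str:
    """Return "suppressed: <reason>", "vetoed", "pending", or "release"."""
    # 1. Governor: any violated constraint suppresses the action outright.
    for check in constraints:
        reason = check(action)
        if reason is not None:
            return f"suppressed: {reason}"
    # 2. A human veto during review always wins.
    if vetoed:
        return "vetoed"
    # 3. Time delay: hold the action until the review window has fully elapsed.
    if now - action.proposed_at < review_window_s:
        return "pending"
    return "release"
```

The ordering is deliberate: constraint checks run first, so no amount of waiting or human inaction can release an action the governor has suppressed, and the review window only ever delays an action that has already passed every check.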

Related Organizations

Humanitarian institution based in Geneva.

UN body promoting nuclear disarmament and non-proliferation.

Bipartisan national security think tank.

DoD office responsible for accelerating the adoption of data, analytics, and AI.

Specialist non-profit organization focused on reducing harm from weapons.

International non-governmental organization that conducts research and advocacy on human rights.

International institute dedicated to research into conflict, armaments, arms control and disarmament.

The world's oldest defence and security think tank.

IEEE

United States · Nonprofit

The world's largest technical professional organization, publisher of 'Ethically Aligned Design' and the related IEEE 7000 series of standards.