Algorithmic Scouting Fairness

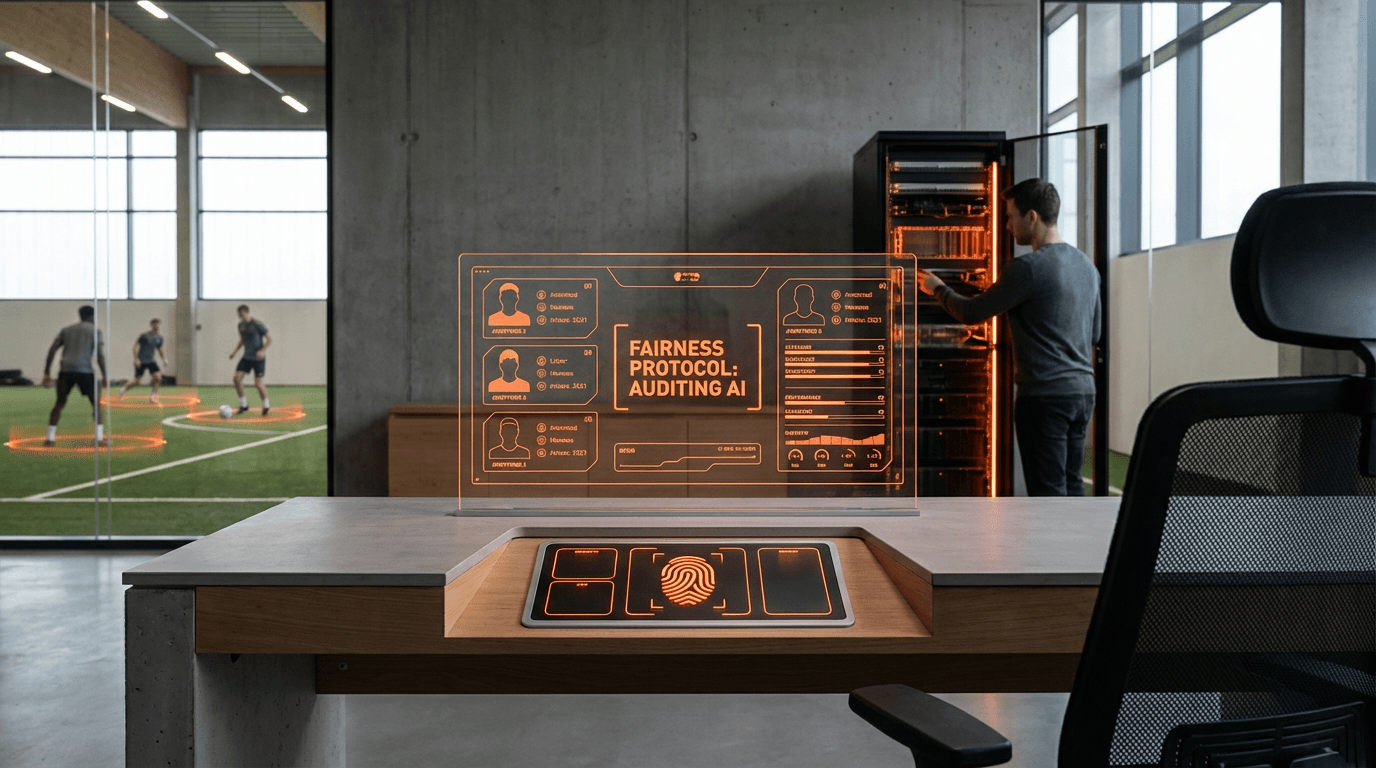

The sports industry has increasingly turned to artificial intelligence and machine learning algorithms to identify and evaluate athletic talent, yet these systems risk perpetuating historical biases embedded in training data. Algorithmic scouting fairness addresses a critical challenge: ensuring that AI-driven recruitment tools do not systematically disadvantage athletes from underrepresented communities, specific geographic regions, or lower socioeconomic backgrounds. At its core, this approach involves systematic auditing of the datasets, decision-making processes, and outputs of automated scouting systems. The technical mechanisms include bias detection frameworks that analyse how algorithms weight different performance metrics, demographic analysis of scouted versus overlooked athletes, and transparency tools that make algorithmic decision criteria visible to human scouts and coaches. These auditing systems examine whether training data adequately represents diverse athletic populations and whether evaluation criteria inadvertently favour athletes with access to elite training facilities, expensive equipment, or high-profile competitions.
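As one illustration of the demographic analysis described above, the sketch below compares how often athletes from different groups are flagged by a scouting system. It is a minimal example under stated assumptions, not a description of any particular vendor's audit: the field names (`region`, `scouted`), the sample data, and the 0.8 cutoff (borrowed from the "four-fifths" convention used in employment-selection audits) are all hypothetical choices made for illustration.

```python
from collections import defaultdict


def selection_rates(athletes, group_key="region"):
    """Return {group: fraction of that group's athletes flagged as scouted}."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for athlete in athletes:
        group = athlete[group_key]
        totals[group] += 1
        if athlete["scouted"]:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact(rates):
    """Ratio of each group's selection rate to the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was selected, so no disparity can be measured.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


# Hypothetical audit sample: 100 urban and 50 rural athletes.
athletes = (
    [{"region": "urban", "scouted": True}] * 30
    + [{"region": "urban", "scouted": False}] * 70
    + [{"region": "rural", "scouted": True}] * 6
    + [{"region": "rural", "scouted": False}] * 44
)

rates = selection_rates(athletes)
ratios = disparate_impact(rates)

# Assumed threshold: flag groups selected at under 80% of the top group's rate.
THRESHOLD = 0.8
flagged = [group for group, ratio in ratios.items() if ratio < THRESHOLD]
print(rates, ratios, flagged)
```

A low ratio does not prove the system is unfair on its own, since groups can differ in other ways, but it tells human scouts where to look more closely.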

The traditional scouting model in professional sports has long been criticised for relying on networks and visibility that favour athletes from well-resourced backgrounds or established sports programs. When AI systems are trained on historical data reflecting these patterns, they risk automating and amplifying existing inequities rather than correcting them. Algorithmic scouting fairness initiatives address this by establishing standards for what constitutes fair and representative talent evaluation. This includes developing metrics that account for contextual factors, such as the quality of opposition faced or the resources available during an athlete's development, rather than relying solely on raw performance statistics. By implementing these fairness audits, sports organisations can identify when their AI tools are systematically overlooking talent pools in rural areas, underfunded school districts, or regions with limited sports infrastructure. This capability is particularly valuable as leagues and teams seek to expand their global reach without missing exceptional athletes simply because they lack traditional markers of visibility.
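One simple way to account for context is to compare each athlete with peers who developed under similar conditions instead of with the whole population. The sketch below standardises a raw statistic within each context group (a z-score per group). It is only an illustration of the idea: the field names (`goals_per_game`, `resource_tier`), the grouping, and the sample figures are hypothetical, and a real system could equally group by opposition quality or use a more elaborate adjustment.

```python
from collections import defaultdict
from statistics import mean, pstdev


def context_adjusted_scores(athletes, metric="goals_per_game", context="resource_tier"):
    """Z-score each athlete's metric against peers in the same context group."""
    groups = defaultdict(list)
    for athlete in athletes:
        groups[athlete[context]].append(athlete[metric])

    # Mean and population standard deviation of the metric per context group.
    stats = {group: (mean(values), pstdev(values)) for group, values in groups.items()}

    adjusted = {}
    for athlete in athletes:
        mu, sigma = stats[athlete[context]]
        # If everyone in a group has the same value, nobody stands out.
        adjusted[athlete["name"]] = 0.0 if sigma == 0 else (athlete[metric] - mu) / sigma
    return adjusted


# Hypothetical sample: three athletes from well-resourced programmes, three not.
athletes = [
    {"name": "A", "resource_tier": "high", "goals_per_game": 0.9},
    {"name": "B", "resource_tier": "high", "goals_per_game": 0.7},
    {"name": "C", "resource_tier": "high", "goals_per_game": 0.5},
    {"name": "D", "resource_tier": "low", "goals_per_game": 0.6},
    {"name": "E", "resource_tier": "low", "goals_per_game": 0.4},
    {"name": "F", "resource_tier": "low", "goals_per_game": 0.2},
]

adjusted = context_adjusted_scores(athletes)
for name, score in sorted(adjusted.items(), key=lambda item: -item[1]):
    print(f"{name}: {score:+.2f}")
```

In this made-up sample, athlete D has a lower raw figure than B but stands out more within their own group, so a ranking on adjusted scores places D above B. A ranking on raw statistics alone would not.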

Early implementations of algorithmic fairness audits have emerged in professional football, basketball, and baseball organisations, where teams are beginning to recognise that biased scouting systems represent both an ethical concern and a competitive disadvantage. Some leagues have established working groups to develop shared standards for evaluating AI recruitment tools, while technology providers are incorporating fairness metrics into their scouting platforms. These systems are being deployed alongside traditional scouting methods, with human evaluators using fairness reports to question and refine algorithmic recommendations. The broader trend toward algorithmic accountability in sports reflects growing awareness that AI systems require ongoing monitoring and adjustment to serve their intended purpose of identifying talent wherever it exists. As youth sports participation becomes increasingly stratified by socioeconomic status, ensuring fair algorithmic scouting becomes not just a matter of equity but a practical necessity for maintaining diverse and competitive professional leagues. The future trajectory points toward standardised fairness certifications for sports AI tools and greater transparency in how algorithms shape career opportunities for aspiring athletes.

Related Organizations

A leading provider of football data intelligence that uses machine learning to calculate player potential and transfer value, actively addressing bias in data collection across global leagues.

A sports data company providing advanced contextual event data and analytics tools that help teams evaluate players objectively beyond basic metrics.

Provides video review and performance analysis tools (including Wyscout and Sportscode) that integrate data to reveal team tactics.

A football performance app for youth players to track stats and showcase talent, creating a data-driven pathway that bypasses traditional, potentially biased scouting networks.

A sports intelligence platform founded by former team analysts that builds custom tactical models for pro teams.

An independent organization working to align the world of sport with fundamental human rights principles.

A professional football club renowned for its data-driven R&D department and proprietary scouting applications.

A higher education institute dedicated to the sports industry, conducting research into sports management, analytics, and the ethics of player recruitment.

Provides watsonx.governance for managing AI risk and compliance.