Gaze-Contingent Displays

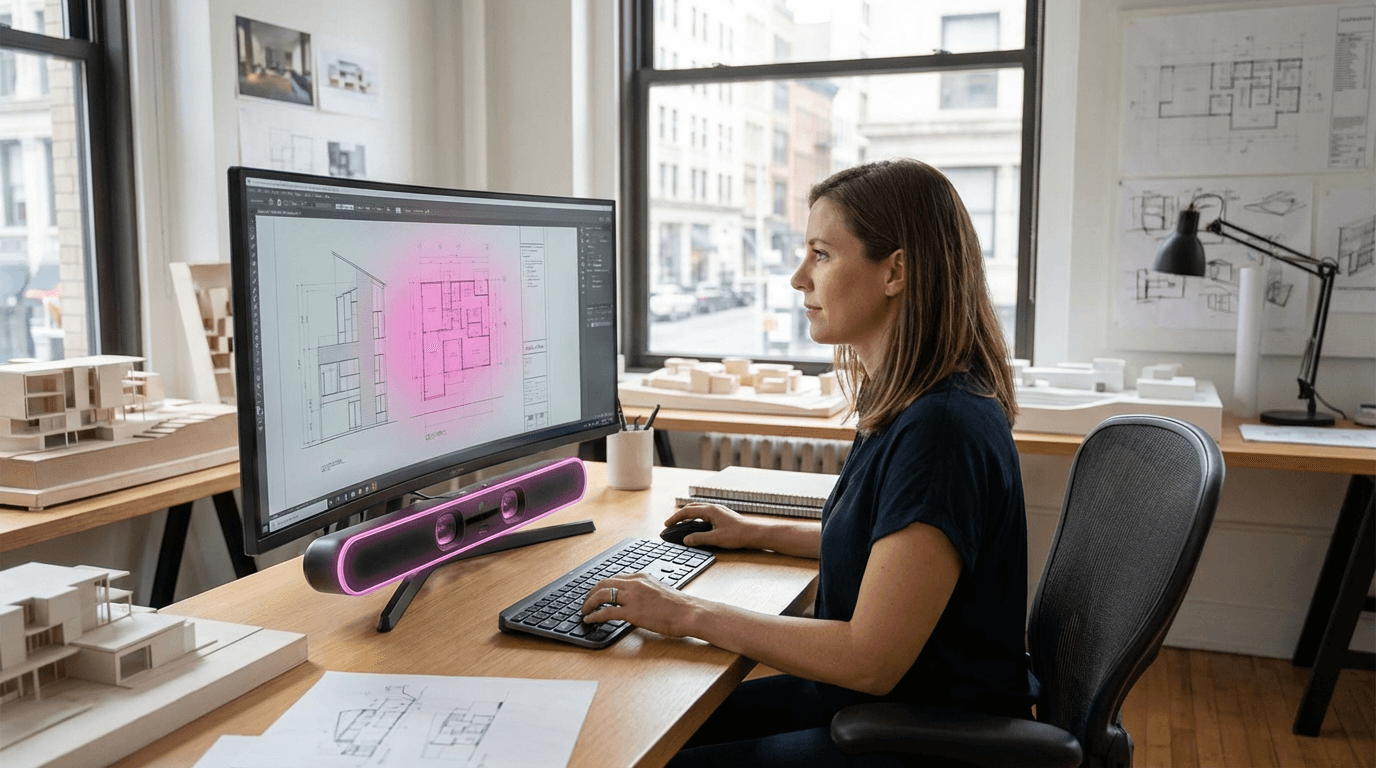

Gaze-contingent displays are an interface technology that adjusts visual content in real time based on where a user is looking and how their eyes respond to what they see. These systems pair eye-tracking sensors (typically infrared cameras sampling at 60 to 120 Hz or higher) with a display to create a feedback loop between human attention and digital content. The core mechanism tracks both the point of gaze (where the fovea, the eye's high-resolution center, is directed) and physiological indicators such as pupil dilation, blink rate, and saccadic movement patterns. By continuously monitoring these signals, the system can estimate not just what the user is viewing, but also aspects of their cognitive state, level of engagement, and possibly emotional response. This creates opportunities for interfaces that respond to implicit signals rather than requiring explicit input through traditional controls.
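As a rough illustration of how saccadic movement can be separated from fixations in a stream of gaze samples, the sketch below applies a simple velocity threshold to consecutive samples. It is a minimal example under stated assumptions, not the method of any particular tracker: the function name `classify_samples`, the 120 Hz sampling rate, and the 30 degrees-per-second threshold are all illustrative choices, and gaze positions are assumed to be already expressed in degrees of visual angle.

```python
import math


def classify_samples(gaze_deg, sample_rate_hz=120.0, saccade_threshold_deg_s=30.0):
    """Label each gaze sample as 'fixation' or 'saccade' by angular velocity.

    gaze_deg: list of (x, y) gaze angles in degrees, sampled at a fixed rate.
    The threshold and sampling rate are illustrative assumptions.
    """
    if not gaze_deg:
        return []

    # The first sample has no predecessor, so it is assumed to be a fixation.
    labels = ["fixation"]
    dt = 1.0 / sample_rate_hz

    for (x0, y0), (x1, y1) in zip(gaze_deg, gaze_deg[1:]):
        # Euclidean distance in angle space approximates the angular
        # displacement well for the small steps between adjacent samples.
        velocity_deg_s = math.hypot(x1 - x0, y1 - y0) / dt
        if velocity_deg_s > saccade_threshold_deg_s:
            labels.append("saccade")
        else:
            labels.append("fixation")
    return labels


# Hypothetical samples: a steady gaze, a rapid jump to the right, then steady again.
samples = [(0.0, 0.0), (0.05, 0.0), (0.1, 0.0), (5.0, 0.0), (10.0, 0.0), (10.05, 0.0)]
labels = classify_samples(samples)
```

A real system would usually smooth the signal and handle blinks and dropped samples before classification; the threshold approach is shown only because it is the simplest way to make the idea concrete.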

The technology addresses several challenges in human-computer interaction, particularly in contexts where traditional input methods are impractical or where understanding user attention is valuable. In virtual and augmented reality environments, gaze-contingent rendering can save computational resources by rendering only the area of direct visual focus in full detail while reducing quality in peripheral vision. This technique, called foveated rendering, can substantially reduce rendering cost with little or no perceptible loss of quality, because visual acuity falls off sharply away from the fovea. For accessibility applications, these displays enable individuals with motor impairments to navigate interfaces and communicate using only eye movements. In educational and training contexts, gaze-contingent systems can flag when learners may be confused or disengaged based on attention patterns and pupil responses, allowing adaptive learning platforms to adjust difficulty or provide additional support. Marketing and user experience research also benefit from understanding what captures and holds visual attention, which informs interface design and content placement.
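The foveated rendering idea can be sketched as a mapping from a screen location's angular distance to the gaze point (its eccentricity) onto a rendering quality level. The example below is a simplified illustration, not the scheme used by any specific headset or graphics API: the function names, the 5 and 15 degree region boundaries, the quality scales, and the constant `pixels_per_degree` conversion are all assumptions made for clarity.

```python
import math


def eccentricity_deg(pixel, gaze, pixels_per_degree):
    """Approximate angular distance (degrees) between a pixel and the gaze point.

    Assumes a constant pixels-per-degree factor across the display, which is a
    simplification; real optics vary with position.
    """
    dx = pixel[0] - gaze[0]
    dy = pixel[1] - gaze[1]
    return math.hypot(dx, dy) / pixels_per_degree


def resolution_scale(ecc_deg, foveal_radius_deg=5.0, mid_radius_deg=15.0):
    """Return a relative resolution scale for a given eccentricity.

    The three regions and their scales are illustrative assumptions.
    """
    if ecc_deg <= foveal_radius_deg:
        return 1.0   # full detail where the user is looking
    if ecc_deg <= mid_radius_deg:
        return 0.5   # reduced detail in the near periphery
    return 0.25      # lowest detail in the far periphery


# Hypothetical setup: a 1920x1080 view with the gaze at its center.
gaze_px = (960, 540)
ppd = 20.0  # assumed pixels per degree

center_scale = resolution_scale(eccentricity_deg((960, 540), gaze_px, ppd))
near_scale = resolution_scale(eccentricity_deg((1160, 540), gaze_px, ppd))
corner_scale = resolution_scale(eccentricity_deg((0, 0), gaze_px, ppd))
```

In practice the regions are re-centered every frame as new gaze samples arrive, and the boundaries are typically blended rather than stepped so that transitions between quality levels are less noticeable.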

Current implementations of gaze-contingent displays are most advanced in VR headsets from major manufacturers, where eye-tracking has become increasingly standard for both interaction and performance optimization. Research institutions and technology companies are exploring applications ranging from driver monitoring systems that detect fatigue and distraction to medical diagnostic tools that assess cognitive function through eye movement analysis. Early commercial deployments in retail environments use gaze tracking to understand customer attention patterns, while accessibility-focused products enable eye-controlled communication for individuals with conditions like ALS. As eye-tracking sensors become more affordable and accurate, and as machine learning models improve at interpreting gaze data, these displays are positioned to become a standard component of next-generation interfaces. The convergence of gaze-contingent technology with affective computing and adaptive systems suggests a future where digital interfaces seamlessly respond to human attention and emotional state, creating more intuitive and personalized experiences across entertainment, productivity, healthcare, and education domains.

Related Organizations

The global leader in eye-tracking technology, providing the sensor stack required for dynamic foveated rendering.

Manufacturer of 'bionic display' headsets that use a high-density focus display inside a peripheral context display.

A medical device company using eye-tracking for vision assessment and therapy.

Develops camera-free eye tracking using MEMS scanners for faster, lower-power tracking.

AR headset manufacturer utilizing dynamic dimming and eye-tracking for optimized rendering.

Creates open-source and research-grade eye tracking hardware and software.

Developers of the 'Beam' eye tracker app which turns standard webcams into gaming eye-trackers using AI.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

Develops FOVIO driver monitoring technology to detect fatigue and distraction using advanced computer vision.