Explainable Consent Interfaces

In an era where digital services routinely collect vast amounts of personal data and deploy increasingly sophisticated AI systems, traditional consent mechanisms have become fundamentally inadequate. Dense privacy policies written in legal language, combined with binary "accept or decline" choices, fail to communicate the actual implications of data sharing to everyday users. This disconnect creates a crisis of informed consent, where people unknowingly surrender control over information that may affect their mental health, social relationships, and personal autonomy. Explainable Consent Interfaces address this challenge by transforming how data practices and algorithmic behaviors are communicated, replacing impenetrable legal documents with interactive, human-centered experiences that genuinely illuminate what happens to personal information after it is shared.

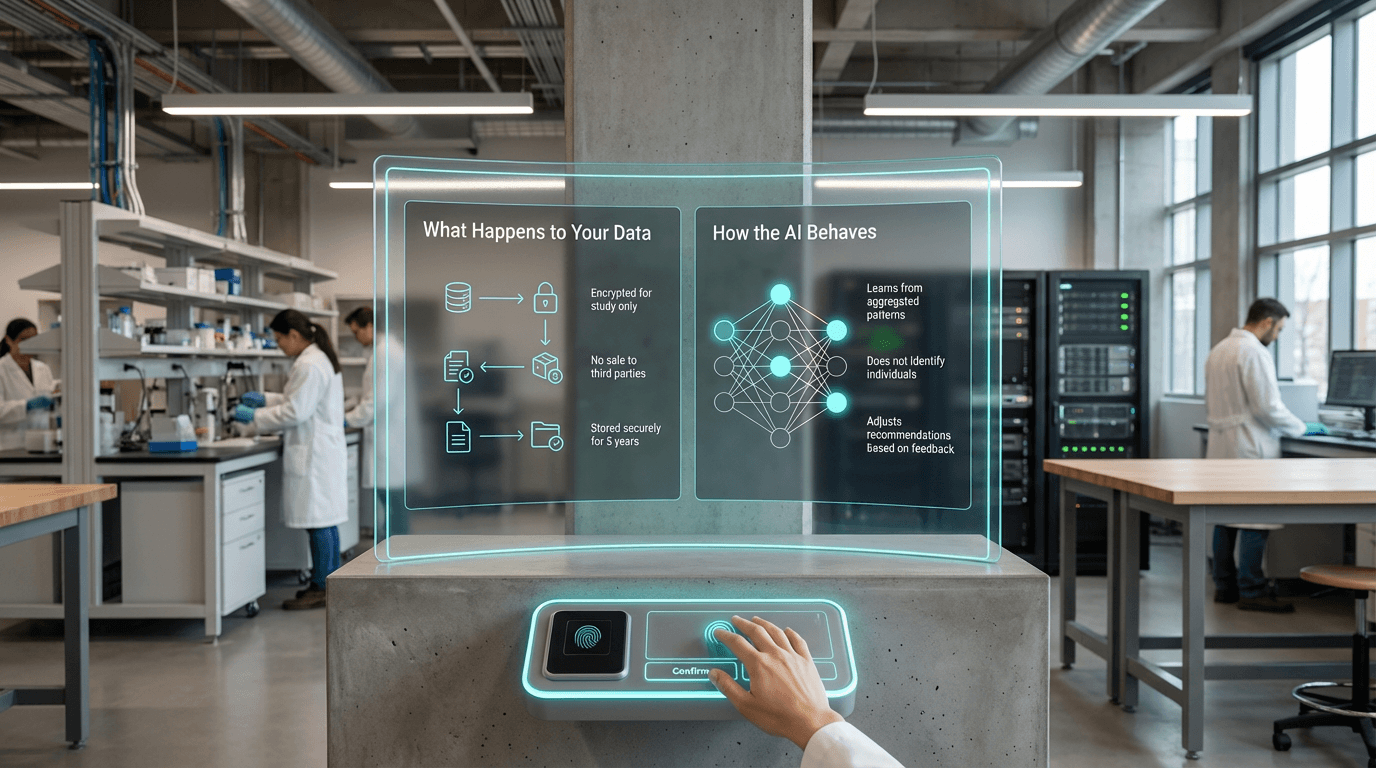

These interfaces employ a combination of narrative storytelling, data visualization, and interactive simulation to make abstract data flows concrete and comprehensible. Rather than presenting static text, they might show animated timelines depicting how a photograph shared today could be analyzed, combined with other data sources, and used to infer sensitive attributes years into the future. Visual metaphors help users understand complex concepts like algorithmic profiling or data aggregation, while scenario-based walkthroughs demonstrate potential emotional or social consequences of different consent choices. Crucially, these systems support granular control, allowing users to grant permission for specific uses while withholding consent for others, and to revoke permissions as circumstances change. The technical architecture often includes consent management layers that translate user preferences into enforceable policies, ensuring that interface choices have meaningful downstream effects on data processing.
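The consent management layer described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference to any particular platform: the names `ConsentRecord`, `ConsentError`, and `process`, and the purpose strings `photo_storage` and `face_analysis`, are all hypothetical. It shows per-purpose grants, revocation, a default-deny check, and an enforcement point that refuses processing when consent is absent.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


class ConsentError(PermissionError):
    """Raised when processing is attempted without consent for a purpose."""


@dataclass
class ConsentRecord:
    """Per-user consent state, keyed by purpose (hypothetical structure)."""
    user_id: str
    # purpose -> True (granted) or False (revoked); absent means never granted
    decisions: dict[str, bool] = field(default_factory=dict)
    # append-only log of (timestamp, purpose, granted) for later audit
    history: list[tuple[str, str, bool]] = field(default_factory=list)

    def _record(self, purpose: str, granted: bool) -> None:
        self.decisions[purpose] = granted
        timestamp = datetime.now(timezone.utc).isoformat()
        self.history.append((timestamp, purpose, granted))

    def grant(self, purpose: str) -> None:
        self._record(purpose, True)

    def revoke(self, purpose: str) -> None:
        self._record(purpose, False)

    def allows(self, purpose: str) -> bool:
        # Default deny: a purpose the user never decided on is not permitted.
        return self.decisions.get(purpose, False)


def process(record: ConsentRecord, purpose: str, data: list[str]) -> str:
    """Enforcement point: check consent before touching the data."""
    if not record.allows(purpose):
        raise ConsentError(f"user {record.user_id} has not consented to {purpose}")
    return f"processed {len(data)} item(s) for {purpose}"


if __name__ == "__main__":
    record = ConsentRecord(user_id="user-1")
    record.grant("photo_storage")  # granular: one purpose only
    print(process(record, "photo_storage", ["beach.jpg"]))
    try:
        process(record, "face_analysis", ["beach.jpg"])
    except ConsentError as err:
        print("blocked:", err)
    record.revoke("photo_storage")  # the user changes their mind later
    print(record.allows("photo_storage"))
```

The default-deny check in `allows` is the key design choice in this sketch: a use the interface never asked about is treated as refused, so the choices a user makes in the interface are the only source of permission downstream.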

Early implementations of explainable consent frameworks are emerging in healthcare applications, mental health platforms, and educational technology, where the sensitivity of personal information demands higher standards of transparency. Research in human-computer interaction suggests that when people can visualize data journeys and understand algorithmic decision-making through concrete examples rather than abstract descriptions, they make more deliberate choices aligned with their values and wellbeing priorities. As regulatory frameworks increasingly emphasize meaningful consent and as public awareness of data harms grows, these interfaces represent a critical evolution in digital ethics. They embody a shift from compliance-focused privacy notices toward genuinely humane technology design, where transparency serves not merely legal requirements but the deeper goal of preserving human dignity and autonomy in algorithmic systems.

Related Organizations

An interdisciplinary team working at the intersection of law, design, and technology to make legal information, including consent forms, usable and accessible.

A community project that analyzes and grades the terms of service and privacy policies of major websites.

Conducts advanced research in social cybersecurity and the detection of online influence campaigns (e.g., the ORA tool).

A global consortium that developed the 'Consent Receipt' specification to provide users with a record of what they agreed to.

The UK's independent regulator for data rights, providing specific guidance on AI and data protection.

Non-profit promoting open science and patient engagement.

Formerly 'Simply Secure'. Provides design resources and research to open-source projects to improve usability, particularly around trust and consent.

Provides a Consent Management Platform (CMP) and Preference Center to manage user consent and preferences.

A data privacy platform that provides a 'Privacy Score' for websites and simplifies consent management for companies.

A leading Consent Management Platform helping companies collect, manage, and document user consent.