Synthetic Relationship Disclosure

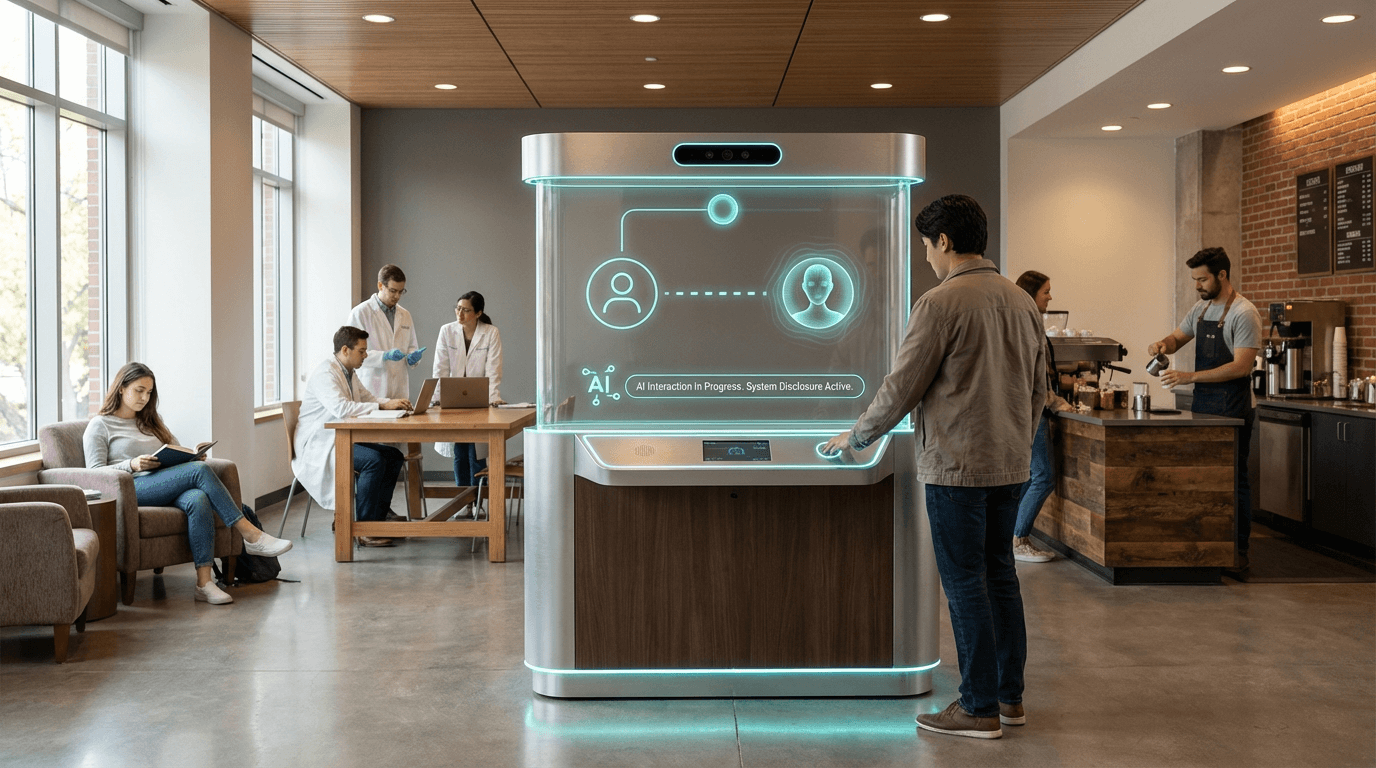

The rapid proliferation of conversational AI systems has introduced a fundamental challenge to digital interactions: the difficulty of distinguishing between human and artificial interlocutors. Synthetic Relationship Disclosure addresses this challenge through technical standards, interface design patterns, and regulatory frameworks that mandate transparent identification of AI agents in digital communications. At its core, this approach relies on persistent visual and textual indicators embedded within user interfaces—such as distinctive avatars, color-coded message backgrounds, or explicit labeling systems—that remain visible throughout the duration of any AI-mediated interaction. These disclosure mechanisms operate across multiple touchpoints, from initial contact through ongoing conversations, ensuring that users maintain continuous awareness of an agent's synthetic nature. The technical implementation typically involves both client-side interface elements and server-side metadata protocols that prevent the suppression or circumvention of disclosure markers, creating a robust system that prioritizes transparency over engagement optimization.
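As a rough illustration of how a server-side metadata protocol and a client-side indicator might fit together, the sketch below attaches an `is_synthetic` flag to each message, signs it on the server, and has the rendering layer refuse any message whose flag no longer matches its signature. Everything here is an assumption made for illustration rather than an established standard: the `Message` fields, the function names, the `[AI agent]` label, and the use of a shared HMAC key. A real deployment would more plausibly use asymmetric signatures so that clients never hold the signing key.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass

# Hypothetical demo key; real systems would manage keys outside the code.
SECRET_KEY = b"demo-key-not-for-production"


@dataclass(frozen=True)
class Message:
    sender_id: str
    text: str
    is_synthetic: bool  # True when the sender is an AI agent
    signature: str = ""


def _payload(sender_id: str, text: str, is_synthetic: bool) -> bytes:
    # Canonical byte form of the fields covered by the signature.
    body = {"sender_id": sender_id, "text": text, "is_synthetic": is_synthetic}
    return json.dumps(body, sort_keys=True).encode("utf-8")


def sign_message(sender_id: str, text: str, is_synthetic: bool) -> Message:
    # Server side: bind the disclosure flag to the message content.
    sig = hmac.new(
        SECRET_KEY, _payload(sender_id, text, is_synthetic), hashlib.sha256
    ).hexdigest()
    return Message(sender_id, text, is_synthetic, sig)


def verify_message(msg: Message) -> bool:
    # Recompute the signature; a stripped or flipped flag changes the result.
    expected = hmac.new(
        SECRET_KEY,
        _payload(msg.sender_id, msg.text, msg.is_synthetic),
        hashlib.sha256,
    ).hexdigest()
    return hmac.compare_digest(expected, msg.signature)


def render(msg: Message) -> str:
    # Rendering layer: never display a message with unverifiable disclosure data.
    if not verify_message(msg):
        raise ValueError("disclosure metadata failed verification")
    label = "[AI agent] " if msg.is_synthetic else ""
    return f"{label}{msg.sender_id}: {msg.text}"
```

In this sketch the label is derived from signed metadata at render time, not stored as part of the message text, so an intermediary that flips `is_synthetic` to hide the agent's nature produces a message that fails verification and is never displayed.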

The absence of clear disclosure standards has created significant ethical and psychological risks in digital environments where AI agents increasingly serve customer service, mental health support, companionship, and educational roles. Without transparent identification, users may unknowingly invest emotional energy, trust, and vulnerability in relationships they believe to be with a human, only to feel betrayed or manipulated when they discover that their interlocutor is synthetic. This phenomenon, sometimes termed "bot deception," undermines user autonomy and informed consent, and it can exploit human tendencies toward anthropomorphization and social bonding. Industry research suggests that clear disclosure frameworks help establish appropriate expectations for AI interactions. They reduce the risk of emotional harm and, somewhat counterintuitively, often improve user satisfaction by removing the dissonance that arises when synthetic agents attempt to pass as human. These protocols also address broader concerns about digital manipulation, helping organizations demonstrate ethical AI deployment practices and build trust with increasingly skeptical user populations.

Early implementations of synthetic relationship disclosure have emerged across various sectors, with some jurisdictions beginning to mandate transparency requirements for AI-powered communications. Mental health platforms deploying AI chatbots have pioneered disclosure practices, recognizing the particular vulnerability of users seeking emotional support and the ethical imperative to ensure informed consent in therapeutic contexts. Customer service applications increasingly adopt visual distinction systems that clearly differentiate AI agents from human representatives, allowing users to request human escalation when desired. As regulatory frameworks evolve—with proposed legislation in several regions requiring explicit AI identification in consumer-facing applications—industry standards are beginning to coalesce around best practices for disclosure timing, persistence, and clarity. The trajectory of this technology points toward a future where synthetic relationship disclosure becomes a fundamental component of digital literacy and user protection, embedded not as an afterthought but as a core design principle in any system involving AI-human interaction. This evolution reflects a broader shift toward humane technology practices that prioritize psychological safety, informed consent, and the preservation of human dignity in increasingly AI-mediated social landscapes.

Related Organizations

The Coalition for Content Provenance and Authenticity develops technical standards for certifying the source and history of digital content.

Software giant and founder of the Content Authenticity Initiative (CAI).

The executive branch of the EU, responsible for the AI Act.

Developers of the Gemini family of models, which are trained from the start to be multimodal across text, images, video, and audio.

OpenAI

United States · Company

Creator of GPT-4o, a natively multimodal model capable of reasoning across audio, vision, and text in real-time.

A coalition of tech companies and nonprofits developing best practices for AI, including guidelines on human-AI interaction.

Focuses on image provenance and authentication, helping verify that media has not been altered (the inverse of detection).

An AI safety and research company developing Constitutional AI to align models with human values.

Provider of digital watermarking and identification technologies.