Trauma-Informed AI Conversation Frameworks

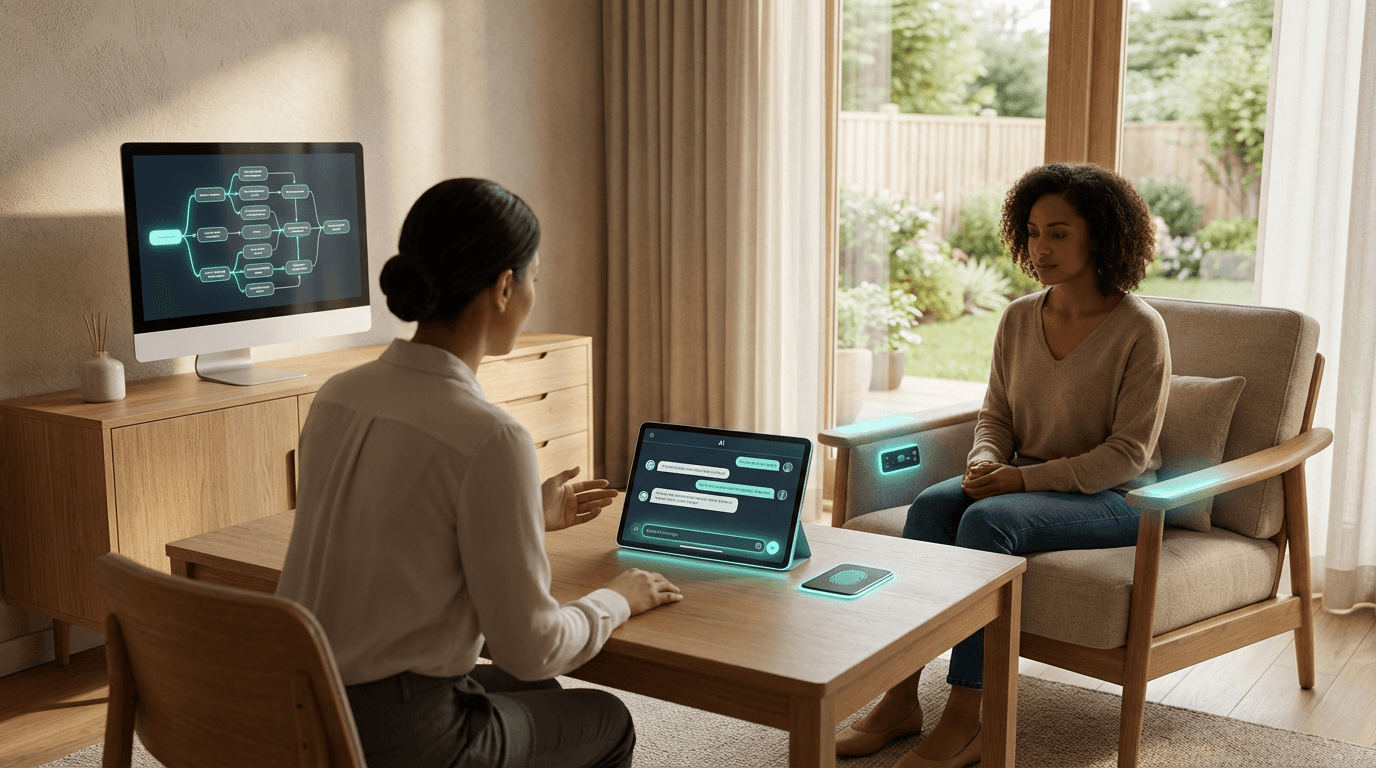

Trauma-informed AI conversation frameworks are a specialized approach to designing conversational systems that prioritize psychological safety for users who may be in distress, in crisis, or otherwise vulnerable. They combine technical guardrails with ethical design principles so that AI-powered chatbots, virtual assistants, and mental health support tools do not inadvertently harm people who are processing trauma, experiencing mental health challenges, or navigating sensitive life circumstances. At their core, these systems employ multi-layered detection mechanisms that monitor conversation patterns, language sentiment, and contextual cues to identify when a user may be in distress. The frameworks typically include carefully calibrated response protocols that avoid common pitfalls such as minimizing user experiences, offering unsolicited advice, or using language that could be re-traumatizing. Technical components often include content filtering systems, conversation pacing controls that keep users from being overwhelmed with information, and clearly defined topic boundaries that keep the AI out of areas requiring professional clinical expertise.
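The layered checks described above can be illustrated with a deliberately simplified sketch. Everything named here is an assumption made for illustration: the cue lists, the `Assessment` type, and the `assess_message` and `pace_reply` functions are hypothetical and do not come from any particular framework. A production system would rely on clinically reviewed classifiers rather than keyword matching.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Hypothetical cue lists, for illustration only. Real deployments would use
# clinically validated models and review, not substring matching.
CRISIS_CUES = ("want to die", "kill myself", "end my life")
DISTRESS_CUES = ("hopeless", "panic", "can't cope", "overwhelmed")
OUT_OF_SCOPE_TOPICS = ("diagnose", "dosage", "prescription")


@dataclass
class Assessment:
    level: str                 # "none", "distress", or "crisis"
    out_of_scope: bool         # True if the message touches a clinical-only topic
    matched: List[str] = field(default_factory=list)


def assess_message(text: str) -> Assessment:
    """Layer 1: crisis cues. Layer 2: distress cues. Layer 3: topic boundaries."""
    lowered = text.lower()
    crisis = [cue for cue in CRISIS_CUES if cue in lowered]
    distress = [cue for cue in DISTRESS_CUES if cue in lowered]
    out_of_scope = any(topic in lowered for topic in OUT_OF_SCOPE_TOPICS)
    if crisis:
        level = "crisis"
    elif distress:
        level = "distress"
    else:
        level = "none"
    return Assessment(level=level, out_of_scope=out_of_scope, matched=crisis + distress)


def pace_reply(reply: str, max_sentences: int = 2) -> str:
    """Pacing control: keep only the first few sentences of a drafted reply."""
    sentences = re.split(r"(?<=[.!?])\s+", reply.strip())
    return " ".join(sentences[:max_sentences])
```

In this sketch the crisis layer takes precedence over the distress layer, and the topic-boundary flag is reported independently so that a caller can decline clinical questions regardless of the detected distress level.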

The mental health and wellness technology sector faces a critical challenge: how to leverage AI's scalability and accessibility while maintaining the safety standards traditionally upheld by trained human practitioners. Many individuals seeking mental health support encounter barriers such as cost, stigma, or limited availability of qualified professionals, making AI-powered tools an attractive first point of contact. However, without proper safeguards, these systems risk causing harm through inappropriate responses, privacy breaches, or failure to recognize crisis situations. Trauma-informed frameworks address these concerns by establishing clear protocols for when and how conversational AI should escalate to human support, whether that means connecting users with crisis hotlines, licensed therapists, or trusted contacts. They also tackle the complex issue of data sensitivity by implementing transparent consent mechanisms that explain exactly how conversations will be stored, analyzed, and potentially shared, giving users meaningful control over their personal mental health information. This approach enables organizations to deploy supportive AI tools while maintaining ethical accountability and reducing liability risks.
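As a rough sketch of the escalation and consent ideas above, the following assumes a three-level risk label ("none", "distress", "crisis") and three separately granted consent permissions. The `Escalation` enum, the `Consent` record, and the mapping in `choose_escalation` are hypothetical choices for illustration; an actual protocol would be defined with clinical and legal input.

```python
from dataclasses import asdict, dataclass
from enum import Enum
from typing import List


class Escalation(Enum):
    NONE = "continue_conversation"
    TRUSTED_CONTACT = "suggest_trusted_contact"
    THERAPIST = "offer_licensed_therapist"
    CRISIS_LINE = "show_crisis_hotline"


@dataclass(frozen=True)
class Consent:
    # Every permission defaults to False, so nothing is stored, analyzed,
    # or shared unless the user explicitly opts in.
    store_transcript: bool = False
    analyze_for_quality: bool = False
    share_with_clinician: bool = False


def choose_escalation(risk_level: str, user_asked_for_human: bool) -> Escalation:
    """Hypothetical routing rule: crisis always goes to a crisis line first."""
    if risk_level == "crisis":
        return Escalation.CRISIS_LINE
    if user_asked_for_human:
        return Escalation.THERAPIST
    if risk_level == "distress":
        return Escalation.TRUSTED_CONTACT
    return Escalation.NONE


def allowed_uses(consent: Consent) -> List[str]:
    """List the data uses the user has opted into, in declaration order."""
    return [name for name, granted in asdict(consent).items() if granted]
```

Keeping consent as an explicit, default-deny record makes it straightforward to show users exactly which uses they have agreed to, and to check that record before any storage or sharing step runs.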

Research institutions and mental health technology companies are increasingly adopting these frameworks as awareness grows of the potential harms of poorly designed conversational AI. Early implementations have appeared in crisis text lines, employee assistance programs, and digital mental health platforms, where organizations recognize the need for specialized safety protocols beyond general AI ethics guidelines. Industry observers note a growing emphasis on interdisciplinary collaboration that brings together AI engineers, clinical psychologists, trauma specialists, and user experience designers to create systems that balance technological capability with human-centered care principles. The frameworks typically undergo iterative testing with diverse user groups, including trauma survivors and mental health advocates, to identify potential failure modes before deployment. As conversational AI becomes more prevalent in healthcare and wellness contexts, trauma-informed design principles are likely to become standard practice rather than optional enhancements. This reflects a broader shift toward recognizing AI systems as active participants in sensitive human experiences that require thoughtful, compassionate design.

Related Organizations

A mental health company offering an AI-powered chatbot based on Cognitive Behavioral Therapy (CBT).

An AI-enabled mental health support platform that provides early intervention and self-help tools through a conversational interface.

AI referral and triage tool used by the UK NHS to assess mental health patients.

Suicide prevention organization for LGBTQ youth that uses AI (Riley) to train counselors.

Provides safety services and AI interventions for social platforms to detect and assist users in distress.

Uses AI to analyze therapy conversations and provide feedback on quality and empathy to clinicians.

Developing an Empathic Voice Interface (EVI) that detects and responds to human emotion.

A non-profit dedicated to establishing independent AI assessments and certifications.