Affective Computing Middleware

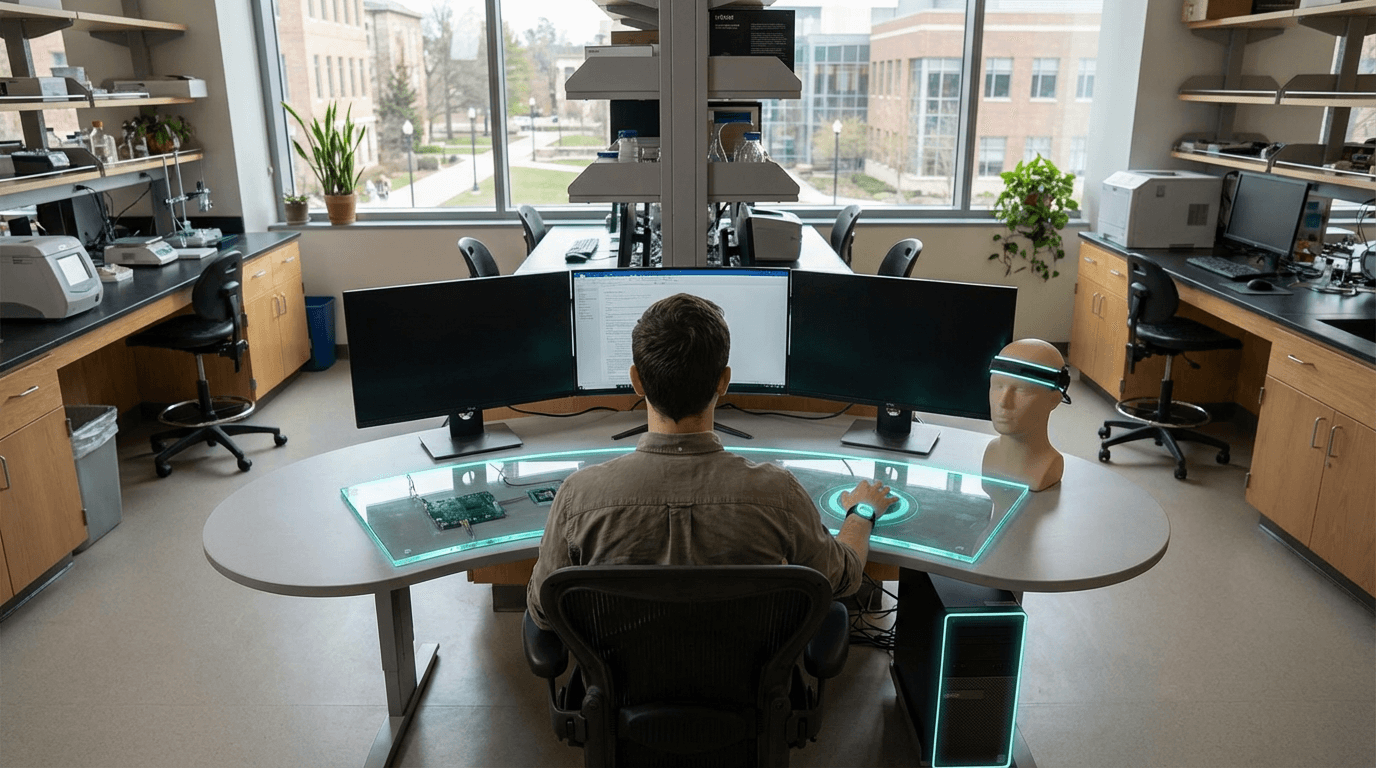

Affective computing middleware is a software layer that sits between the operating system and applications and continuously interprets and responds to users' emotional states through non-invasive behavioral signals. Unlike traditional user interfaces, which remain static regardless of a person's mental state, this middleware analyzes subtle patterns in how people interact with their devices: variations in typing rhythm and pressure, mouse movement velocity and trajectory, voice pitch and cadence during calls, and even facial micro-expressions captured through standard webcams. These signals are processed locally on the user's device using machine learning models trained to recognize patterns associated with stress, frustration, fatigue, or cognitive overload. The system operates as a transparent layer that requires no conscious input from users, and it protects privacy by keeping all emotional inference on-device rather than transmitting sensitive behavioral data to external servers.
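To make the idea of a behavioral signal concrete, the following is a minimal sketch of how typing rhythm might be summarized on-device before being passed to a model. The function name `typing_rhythm_features`, the feature names, and the `PAUSE_THRESHOLD` value are illustrative assumptions, not part of any real middleware API, and a real system would combine many more signals.

```python
from statistics import mean, pstdev

# Assumed cutoff (seconds) above which a gap between key presses counts as a
# "long pause". The value is illustrative, not taken from any real product.
PAUSE_THRESHOLD = 1.0


def typing_rhythm_features(key_down_times):
    """Summarize typing rhythm from a list of key-press timestamps (seconds).

    Returns simple statistics over the gaps between consecutive key presses.
    These are hypothetical input features for a stress or fatigue model; the
    function itself does not infer any emotional state.
    """
    if len(key_down_times) < 2:
        raise ValueError("need at least two key presses")

    # Gaps between consecutive key presses.
    intervals = [b - a for a, b in zip(key_down_times, key_down_times[1:])]

    return {
        "mean_interval": mean(intervals),
        "interval_stdev": pstdev(intervals),
        "long_pause_ratio": sum(i > PAUSE_THRESHOLD for i in intervals) / len(intervals),
    }


if __name__ == "__main__":
    # Timestamps stay on the device; only the derived features are used.
    print(typing_rhythm_features([0.0, 0.5, 1.0, 3.0]))
```

Reducing raw timestamps to a few aggregate features is one way the on-device design described above could limit how much sensitive behavioral data is ever retained.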

The fundamental challenge this technology addresses is digital burnout and technology-induced stress in modern work and personal environments. Traditional software assumes that users maintain consistent cognitive capacity and emotional resilience throughout their interactions. The result is poorly timed notifications during high-stress moments, overwhelming interface complexity when users are fatigued, and relentless information streams that ignore human psychological limits. Research suggests that poorly timed digital demands contribute to workplace stress and reduced productivity. By providing a standardized emotional awareness layer, this middleware enables any compatible application to become contextually sensitive to user wellbeing. For instance, email clients could delay non-urgent notifications when the system detects elevated stress levels, or complex software interfaces could automatically simplify when signs of cognitive fatigue emerge, presenting only essential functions until the user's state improves.
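The email example can be sketched as a small gate that an application might place in front of its notifications. Everything here is hypothetical: `UserState`, `Notification`, and `NotificationGate` are names invented for illustration, and the sketch assumes the middleware reports a coarse state label through a callback such as `on_state_change`.

```python
from dataclasses import dataclass, field
from enum import Enum


class UserState(Enum):
    """Coarse state labels the middleware is assumed to report."""
    CALM = "calm"
    STRESSED = "stressed"
    FATIGUED = "fatigued"


@dataclass
class Notification:
    message: str
    urgent: bool = False


@dataclass
class NotificationGate:
    """Holds back non-urgent notifications while the user is not calm."""
    state: UserState = UserState.CALM
    deferred: list = field(default_factory=list)
    delivered: list = field(default_factory=list)

    def submit(self, note: Notification) -> None:
        # Urgent notifications always go through; others wait for a calm state.
        if note.urgent or self.state is UserState.CALM:
            self.delivered.append(note)
        else:
            self.deferred.append(note)

    def on_state_change(self, new_state: UserState) -> None:
        # Hypothetical callback invoked when the middleware updates its estimate.
        self.state = new_state
        if new_state is UserState.CALM:
            # Release held notifications in their original order.
            self.delivered.extend(self.deferred)
            self.deferred.clear()


if __name__ == "__main__":
    gate = NotificationGate()
    gate.on_state_change(UserState.STRESSED)
    gate.submit(Notification("Weekly newsletter"))
    gate.submit(Notification("Server outage", urgent=True))
    gate.on_state_change(UserState.CALM)
    print([n.message for n in gate.delivered])
```

Letting urgent items bypass the gate is a deliberate choice in this sketch, since deferral is only appropriate for notifications that can safely wait.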

Early deployments of affective computing middleware are emerging primarily in enterprise wellness programs and therapeutic applications, where organizations seek to reduce employee burnout and mental health professionals aim to provide more responsive digital interventions. Some productivity software developers are beginning to integrate emotional awareness capabilities that adjust task recommendations and break reminders based on detected stress patterns. The technology aligns with broader industry movements toward humane technology design and digital wellbeing, representing a shift from engagement-maximizing interfaces toward systems that prioritize psychological safety and sustainable interaction patterns. As workplace mental health concerns intensify and regulatory attention to digital wellbeing increases, affective computing middleware may become a standard component of operating systems, much as accessibility features evolved from specialized tools into universal design principles. The trajectory points toward computing environments that recognize users as whole people with fluctuating emotional needs rather than as productivity units with unlimited capacity for digital demands.

Related Organizations

The pioneer in Emotion AI, spun out of MIT Media Lab, now part of Smart Eye.

A spin-off from TU Munich specializing in audio analysis and speech emotion recognition.

Home of the Affective Computing research group led by Rosalind Picard.

Develops voice biomarker technology for mental health.

Develops FaceReader, the standard software tool for automated analysis of facial expressions in scientific research.

Spin-out from the University of Nottingham focusing on face and voice analysis for health.

Provides a client-side JavaScript SDK for Emotion AI in the browser.

Creates autonomously animated 'Digital People' with simulated nervous systems.

Uses webcams to measure attention and emotion in response to video advertising.