Differential Privacy

Differential Privacy is a mathematical framework that enables organizations to extract valuable insights from sensitive datasets while providing provable guarantees against individual re-identification. At its technical core, the approach injects calibrated statistical noise into query results or data releases so that the probability distribution of the output remains nearly identical whether any single individual's data is included in or excluded from the dataset. The strength of this guarantee is governed by a parameter, epsilon (ε), often called the privacy-loss budget. Smaller values force the outputs on neighboring datasets closer together and therefore require more noise, which lets data custodians tune the trade-off between analytical utility and privacy protection. Common implementation techniques include the Laplace mechanism for numerical queries, whose noise scale is the query's sensitivity divided by ε, and the exponential mechanism for selecting non-numeric outputs. The framework also supports different trust models: in local differential privacy, noise is added on each user's device at the point of data collection, so the collector never sees raw values, whereas the central model adds noise during analysis by a trusted curator.
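The two mechanisms named above can be sketched in a few lines of Python. Formally, a mechanism M satisfies ε-differential privacy if, for every pair of datasets D and D′ that differ in one individual's record and every set of outputs S, Pr[M(D) ∈ S] ≤ e^ε · Pr[M(D′) ∈ S]. The sketch below is a minimal illustration under stated assumptions: the function names and the login-method counts are hypothetical, noise comes from Python's standard `random` module, and each Laplace sample is built as the difference of two exponential draws. It is not hardened for production use, where naive floating-point noise sampling is known to leak information and audited libraries are the usual choice.

```python
import math
import random


def laplace_noise(scale: float) -> float:
    """Draw one sample from Laplace(0, scale).

    The difference of two independent exponential variables with mean
    `scale` is Laplace-distributed with that scale.
    """
    return random.expovariate(1.0 / scale) - random.expovariate(1.0 / scale)


def laplace_mechanism(true_value: float, sensitivity: float, epsilon: float) -> float:
    """Release true_value plus Laplace(sensitivity / epsilon) noise."""
    if epsilon <= 0 or sensitivity <= 0:
        raise ValueError("epsilon and sensitivity must be positive")
    return true_value + laplace_noise(sensitivity / epsilon)


def exponential_mechanism(candidates, utility, sensitivity: float, epsilon: float):
    """Pick one candidate with probability proportional to
    exp(epsilon * utility(c) / (2 * sensitivity))."""
    if epsilon <= 0 or sensitivity <= 0:
        raise ValueError("epsilon and sensitivity must be positive")
    scores = [epsilon * utility(c) / (2.0 * sensitivity) for c in candidates]
    top = max(scores)  # subtract the max before exp() for numerical stability
    weights = [math.exp(s - top) for s in scores]
    return random.choices(candidates, weights=weights, k=1)[0]


# Hypothetical example: a count query has sensitivity 1, because adding or
# removing one person changes the count by at most 1.
noisy_count = laplace_mechanism(true_value=1042, sensitivity=1.0, epsilon=0.5)

# Hypothetical example: report the most common login method without revealing
# exact counts; utility is the count, so its sensitivity is also 1.
login_counts = {"password": 510, "passkey": 320, "sms_otp": 212}
most_common = exponential_mechanism(list(login_counts), login_counts.get, 1.0, 0.5)
```

The `sensitivity` argument is the largest change one person's record can cause in the query result (or in the utility score). Because the noise scale grows as ε shrinks, lowering ε buys more privacy at the cost of less accurate answers, which is the trade-off described above.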

In the context of identity verification and authentication systems, Differential Privacy addresses a critical challenge: organizations need to detect fraud patterns, improve security models, and understand user behavior without creating datasets that could expose individual identities or sensitive attributes. Traditional anonymization techniques such as data masking and pseudonymization have repeatedly proven vulnerable to re-identification attacks, particularly when multiple datasets are cross-referenced or when adversaries possess auxiliary information. Differential Privacy overcomes these limitations because its guarantee is a property of the release mechanism rather than of the data itself, so it holds regardless of what background knowledge an attacker brings. This capability is particularly valuable for financial institutions conducting anti-money laundering analytics, healthcare providers analyzing patient authentication patterns, and technology platforms training machine learning models to detect account takeovers. In each of these scenarios, aggregate insights are essential but individual privacy must be rigorously protected.
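A differencing attack shows why exact aggregates fail where noisy ones hold up: an adversary who knows that two query populations differ only by one target person simply subtracts the two answers. The sketch below assumes hypothetical in-memory records of the form (user_id, flagged_for_fraud) and hypothetical helper names (`exact_count`, `dp_count`); it reuses standard-library Laplace sampling as a minimal illustration, not a production implementation.

```python
import random


def laplace_noise(scale: float) -> float:
    # Difference of two i.i.d. exponentials with mean `scale` ~ Laplace(0, scale).
    return random.expovariate(1.0 / scale) - random.expovariate(1.0 / scale)


def exact_count(records, predicate) -> int:
    return sum(1 for r in records if predicate(r))


def dp_count(records, predicate, epsilon: float) -> float:
    # A counting query has sensitivity 1, so the Laplace scale is 1 / epsilon.
    return exact_count(records, predicate) + laplace_noise(1.0 / epsilon)


def is_flagged(record) -> bool:
    return record[1]


# Hypothetical records: (user_id, flagged_for_fraud).
records = [("u1", False), ("u2", True), ("u3", False), ("target", True)]
without_target = [r for r in records if r[0] != "target"]

# Attack on exact counts: the target is the only difference between the two
# query populations, so the difference reveals the target's flag.
leaked = exact_count(records, is_flagged) - exact_count(without_target, is_flagged)
print("exact difference:", leaked)  # 1, so the target is flagged

# The same attack on noisy counts: each answer carries independent noise of
# scale 1 / epsilon, so the difference no longer pins down the target's flag.
noisy = dp_count(records, is_flagged, 0.5) - dp_count(without_target, is_flagged, 0.5)
print("noisy difference:", round(noisy, 2))
```

Averaging many repeated noisy answers would eventually recover the difference, which is why each query draws down the ε budget: under sequential composition the two queries above together cost 2ε, and a deployed system typically caps or refuses further queries once the budget is spent.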

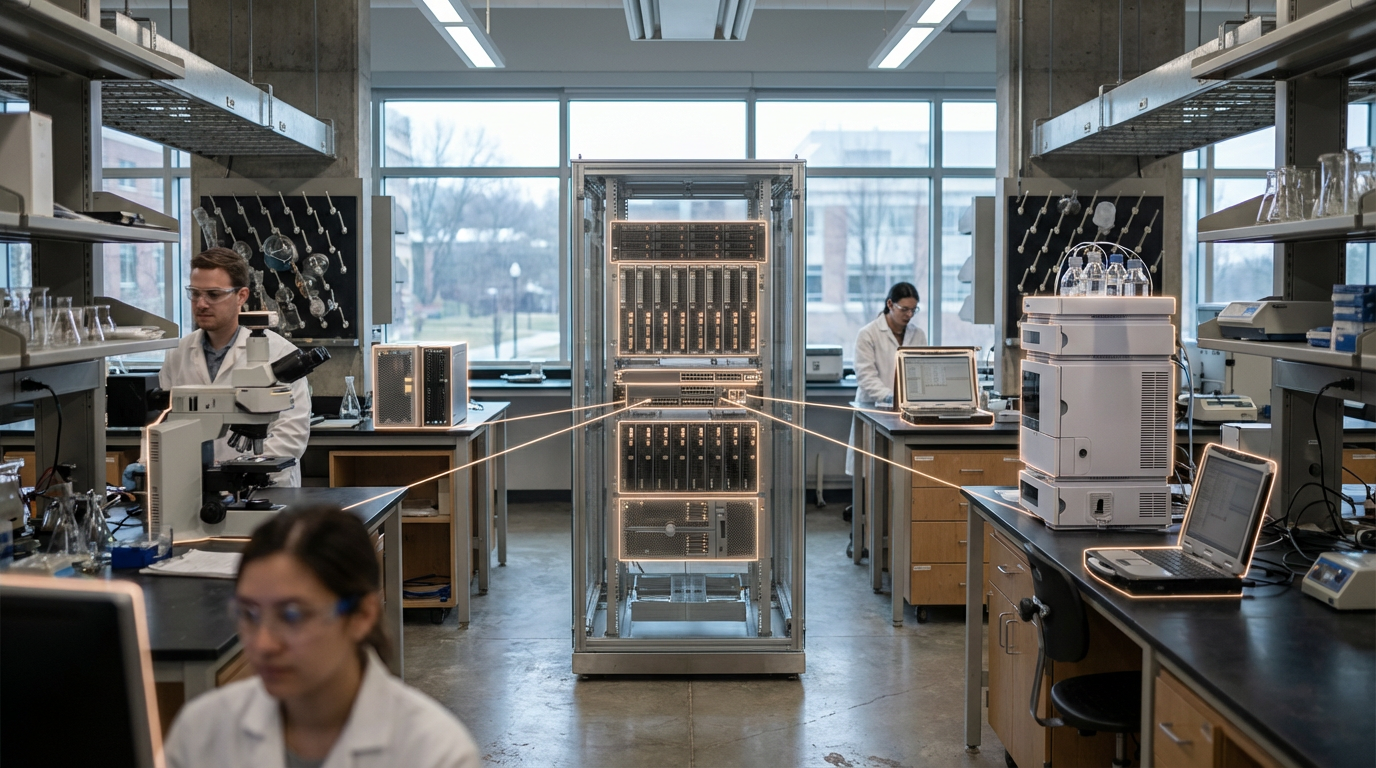

Research institutions, government statistical agencies, and technology companies have deployed Differential Privacy in production systems, with notable implementations in census data releases (including the 2020 US Census), mobile operating system telemetry, and cloud-based analytics platforms. In identity systems, the framework can support privacy-preserving verification by letting organizations compare credentials or behaviors against population-level statistics without exposing individual records. It can also support behavioral biometrics systems that detect anomalous authentication attempts while limiting the risk that stored behavioral patterns could be reverse-engineered to identify specific users. Regulatory frameworks increasingly demand both robust security measures and strong privacy protections, which often creates apparent tension between fraud prevention and data minimization; Differential Privacy offers a mathematically grounded path through that tension. The technology aligns with broader industry movements toward privacy-enhancing technologies and zero-knowledge architectures, positioning it as a foundational component in next-generation identity systems that must simultaneously verify trust, prevent abuse, and respect individual privacy rights as re-identification techniques grow more sophisticated.
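Mobile telemetry deployments like those mentioned above have commonly used the local model, and a classic local mechanism is randomized response. The sketch below assumes one yes/no report per device; the function names and the simulated 30% rate are hypothetical. Each device reports its true bit with probability e^ε / (1 + e^ε) and the flipped bit otherwise, so the ratio of report probabilities for any two inputs is at most e^ε. The collector then inverts the known flipping probability to estimate the population rate without trusting any single report.

```python
import math
import random


def randomized_response(true_bit: bool, epsilon: float) -> bool:
    """Report the true bit with probability e^eps / (1 + e^eps), else flip it."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    p_truth = math.exp(epsilon) / (1.0 + math.exp(epsilon))
    return true_bit if random.random() < p_truth else not true_bit


def estimate_rate(reports, epsilon: float) -> float:
    """Unbiased estimate of the true fraction of True bits from noisy reports."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    p = math.exp(epsilon) / (1.0 + math.exp(epsilon))
    observed = sum(reports) / len(reports)
    # E[observed] = p * rate + (1 - p) * (1 - rate); solve for rate.
    return (observed - (1.0 - p)) / (2.0 * p - 1.0)


# Hypothetical simulation: 100,000 devices, about 30% of which have the attribute.
random.seed(7)
true_bits = [random.random() < 0.3 for _ in range(100_000)]
reports = [randomized_response(b, epsilon=1.0) for b in true_bits]
print(round(estimate_rate(reports, epsilon=1.0), 3))  # close to 0.3
```

The estimate is unbiased but noisier than the raw rate, and its variance grows as ε shrinks, so local deployments need large populations to produce useful statistics.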

Related Organizations

A community effort to build a suite of open-source tools for enabling differential privacy analysis.

The principal agency of the US Federal Statistical System.

Tumult Labs

United States · Startup

Founded by differential privacy researchers, providing a platform for safely publishing statistics and insights derived from sensitive data.

Through Copilot and the 'Recall' feature in Windows, Microsoft is integrating persistent memory and agentic capabilities directly into the operating system.

Data privacy software company enabling organizations to use sensitive data safely for analytics.

Released Arctic, an enterprise-grade Mixture-of-Experts language model designed for complex enterprise workloads.

Invests heavily in privacy-preserving computation, including differential privacy for financial data analysis.

Provider of enclave computing and privacy-enhancing technologies.