Foveated Display Systems

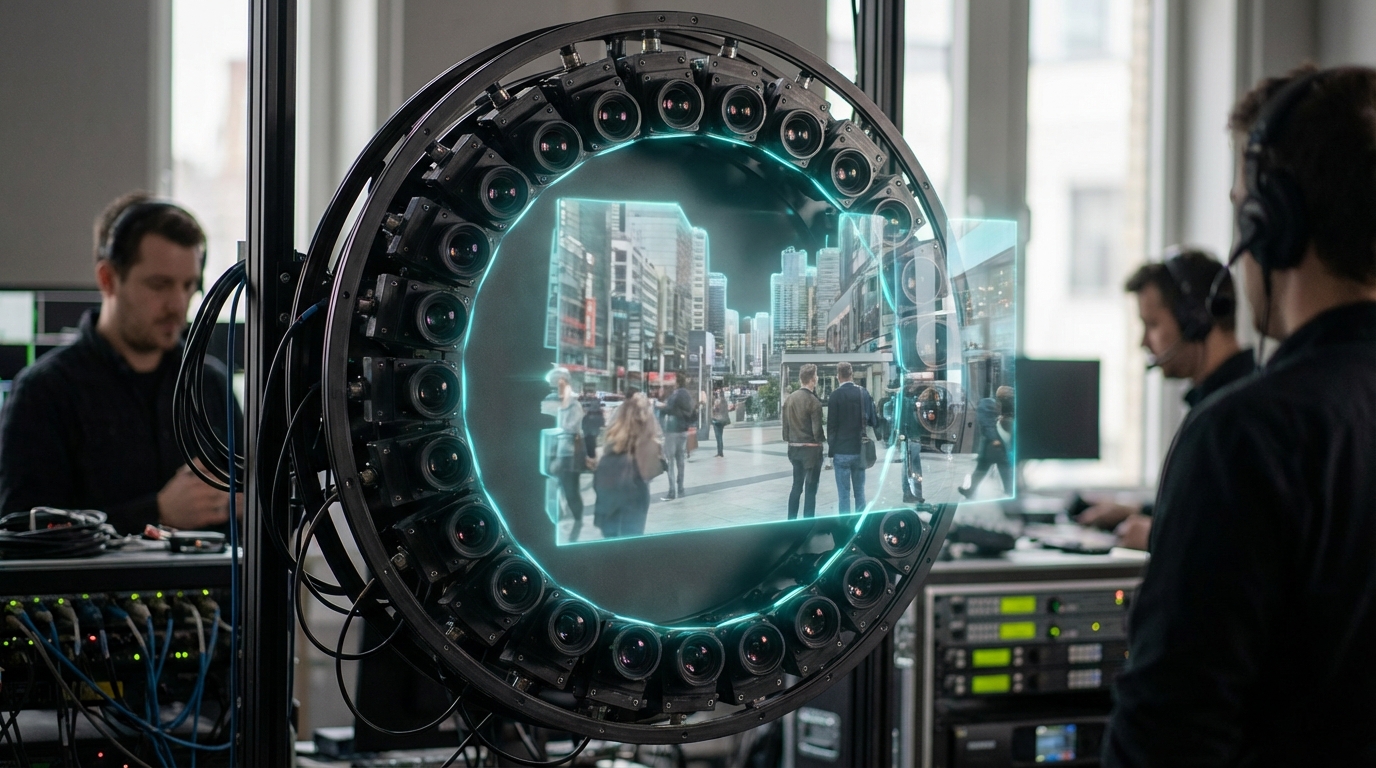

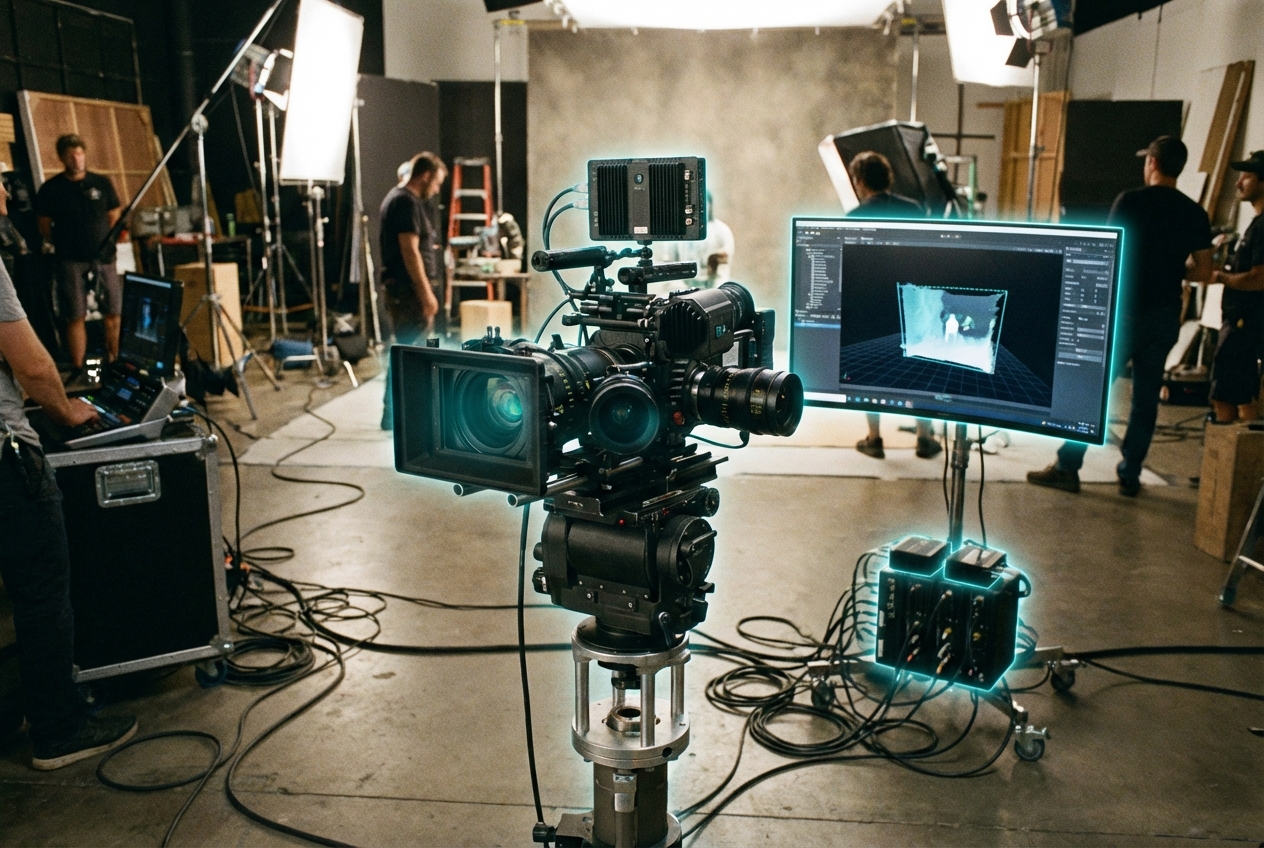

Foveated display stacks combine sub-millisecond eye tracking with multi-zone micro-OLED or micro-LED panels so that only the foveal region renders at maximum resolution. Beam splitters or varifocal optics steer the high-density “sweet spot,” while GPU pipelines output concentric layers (full fidelity within the gaze cone, aggressively decimated textures elsewhere) to cut shading cost and bandwidth. Purpose-built image signal processors (ISPs) feed gaze vectors to the rendering engine in under 10 ms, so the high-resolution region keeps up with the gaze even across saccades.
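The concentric-layer idea can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's pipeline: the zone thresholds in `ZONES`, the function names, and the flat `pixels_per_degree` value are all assumptions made for the example (real headset lenses do not have a constant pixels-per-degree figure across the field of view).

```python
import math

# Hypothetical zone table: (max eccentricity in degrees, resolution scale).
# These thresholds are illustrative assumptions, not values from any headset.
ZONES = [(5.0, 1.0), (15.0, 0.5), (math.inf, 0.25)]


def eccentricity_deg(px, py, gaze_x, gaze_y, pixels_per_degree):
    """Angular distance from the gaze point, assuming a flat pixels-per-degree."""
    return math.hypot(px - gaze_x, py - gaze_y) / pixels_per_degree


def resolution_scale(ecc_deg):
    """Pick the resolution scale of the first zone that contains ecc_deg."""
    for max_ecc, scale in ZONES:
        if ecc_deg <= max_ecc:
            return scale
    return ZONES[-1][1]


def build_tile_map(width, height, tile, gaze, pixels_per_degree):
    """Return one resolution scale per tile, evaluated at each tile centre."""
    gaze_x, gaze_y = gaze
    tile_map = []
    for top in range(0, height, tile):
        row = []
        for left in range(0, width, tile):
            cx, cy = left + tile / 2, top + tile / 2
            ecc = eccentricity_deg(cx, cy, gaze_x, gaze_y, pixels_per_degree)
            row.append(resolution_scale(ecc))
        tile_map.append(row)
    return tile_map


def shading_cost_fraction(tile_map):
    """Shading work relative to full resolution, assuming cost ~ scale squared."""
    scales = [s for row in tile_map for s in row]
    return sum(s * s for s in scales) / len(scales)


if __name__ == "__main__":
    # Assumed 2000x2000 eye buffer, 100-pixel tiles, gaze at the centre.
    tiles = build_tile_map(2000, 2000, 100, (1000, 1000), pixels_per_degree=20)
    print(f"relative shading cost: {shading_cost_fraction(tiles):.2f}")
```

Each time a new gaze sample arrives, the tile map is rebuilt around the new gaze point. A real renderer would hand an equivalent map to a variable-rate shading or multi-resolution pass instead of a Python list.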

Media platforms lean on foveation to deliver cinema-grade detail inside lightweight headsets. VR filmmakers can present legible subtitles, nuanced facial acting, and intricate UI without blowing thermal budgets, while cloud-streamed experiences reduce network load by transmitting full detail only for the pixels the viewer actively inspects. Sports broadcasters and productivity suites already expose foveated overlays to keep stat panels crisp while leaving peripheral ambiance impressionistic.
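A rough sense of the streaming savings comes from counting pixels in a two-layer stream: a full-resolution inset around the gaze point plus the whole frame downscaled for the periphery. The sketch below is back-of-the-envelope arithmetic under assumed numbers. The function names, the square inset, and the example frame size are hypothetical, and raw pixel count is only a proxy, since encoded bitrate does not scale linearly with it.

```python
def two_layer_pixel_count(width, height, inset_size, periphery_scale):
    """Pixels sent per frame: a full-resolution square inset plus a downscaled full frame."""
    inset_pixels = min(inset_size, width) * min(inset_size, height)
    periphery_pixels = round(width * periphery_scale) * round(height * periphery_scale)
    return inset_pixels + periphery_pixels


def pixel_savings(width, height, inset_size, periphery_scale):
    """Fraction of pixels saved relative to sending the full-resolution frame."""
    full = width * height
    return 1 - two_layer_pixel_count(width, height, inset_size, periphery_scale) / full


if __name__ == "__main__":
    # Assumed example: 2000x2000 frame, 500x500 inset, periphery at quarter scale.
    sent = two_layer_pixel_count(2000, 2000, 500, 0.25)
    saved = pixel_savings(2000, 2000, 500, 0.25)
    print(f"{sent} pixels sent, {saved:.1%} fewer than full resolution")
```

With these assumed numbers, the stream carries 500,000 pixels per frame instead of 4,000,000, a reduction of 87.5 percent before any codec effects.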

Remaining hurdles include calibration drift, eye-tracking bias across different eye shapes, and content authoring pipelines that must export multi-resolution assets. The Khronos Group (through OpenXR) and the MPEG Immersive Video group are defining metadata so foveation patterns travel with content, and headset OEMs collaborate with Unity and Unreal to expose adaptive shading APIs. With PS VR2, Varjo, and Meta Quest Pro demonstrating viability at technology readiness level (TRL) 6, expect foveated display pipelines to become mandatory for next-gen spatial streaming and mixed reality productivity.

Related Organizations

Develops the Quest Pro and research prototypes (Butterscotch, Starburst) focusing on foveated systems.

The global leader in eye-tracking technology, providing the sensor stack required for dynamic foveated rendering.

Manufacturer of 'bionic display' headsets that use a high-density focus display inside a peripheral context display.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

Creators of the PlayStation VR2, which includes eye-tracked foveated rendering as a standard feature.

Develops camera-free eye tracking using MEMS scanners for faster, lower-power tracking.

Japan-based company that created the world's first eye-tracking VR headset specifically for foveated rendering.

AR headset manufacturer utilizing dynamic dimming and eye-tracking for optimized rendering.

Offers the AI Stack which includes tools for hardware-aware model efficiency and architecture search.

Creates open-source and research-grade eye tracking hardware and software.